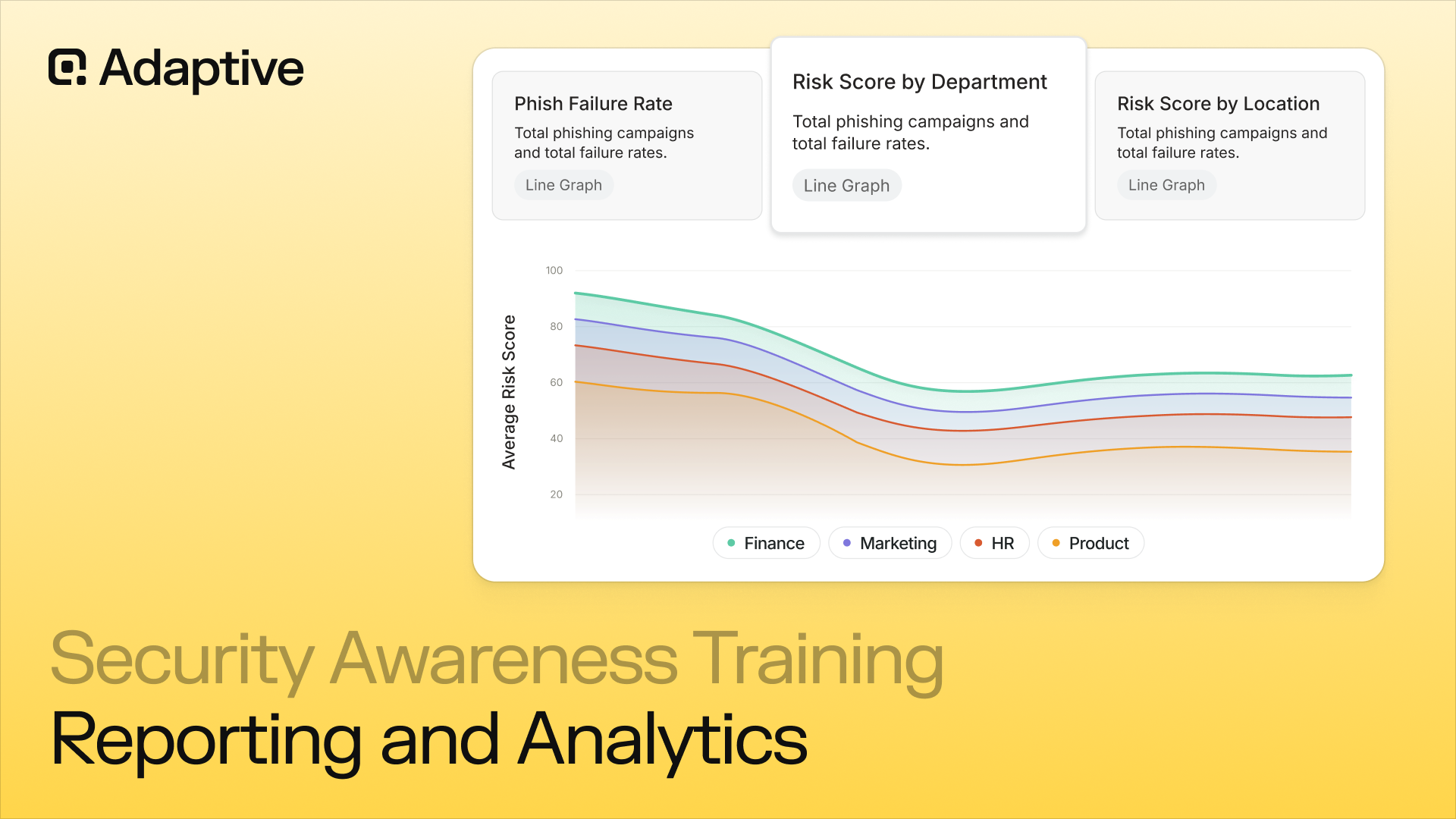

A security awareness training platform's reporting and analytics determine whether a program produces documented compliance, measurable risk reduction, and robust data protection.

This article examines the reporting capabilities a platform must deliver, including:

- Behavioral metrics that distinguish genuine risk visibility from vanity data

- Compliance audit exports that support audit readiness for HIPAA, PCI-DSS, and SOC 2 requirements

- Executive dashboards that make human risk legible to a board

According to the Verizon Data Breach Investigations Report 2025, the human element was involved in approximately 60% of breaches, underscoring the need for training programs that address this vulnerability directly through targeted phishing and social engineering simulations.

Organizations seeking a platform built around intelligent reporting and analytics that also supports data protection and audit readiness are encouraged to explore the Adaptive Security demo to understand how metrics can be effectively applied at every level of training.

Why Do Security Awareness Training Platform Reporting and Analytics Result in More Effective Programs?

Many organizations define security awareness training platform reporting and analytics solely through training completion rates. That approach measures only part of the equation, because behavioral metrics matter considerably more than completion metrics. Analytics focused on behavioral change help organizations reduce security risk rather than merely track participation.

A platform's reporting and analytics capabilities determine whether a security awareness program produces compliance documentation or actual risk reduction. Effective reporting provides visibility into potential threats and security risks, enabling organizations to proactively address vulnerabilities before they lead to incidents. Reporting that surfaces only completion data creates the illusion of a functioning program.

Reporting that tracks behavioral signals, including click rates, reporting rates, and risk score trajectories, gives security leaders the evidence needed to justify investment, direct remediation, and demonstrate measurable progress to a board that evaluates security performance in financial terms rather than operational activity counts.

That distinction defines every metric worth tracking, and the most defensible programs treat those metrics as a continuous feedback loop rather than a quarterly snapshot.

What Is the Difference Between a Vanity Metric and an Actionable One?

Completion rates and quiz pass rates are coverage metrics that confirm an employee watched a video and answered multiple-choice questions correctly. They do not mean that the employee would recognize a spear-phishing email disguised as a vendor invoice or resist a vishing call from a synthetic voice cloned from their CEO. Conversely, behavioral metrics tell a different story:

- Phishing simulation click rate trends over time indicate whether employees are making better decisions under realistic pressure

- Phish reporting rates reveal whether employees actively defend the organization rather than passively avoid obvious traps.

- Human risk scores at the individual and department levels identify precisely where behavioral gaps remain and which employees require targeted intervention before a real attack exploits them.

Analytics can identify knowledge gaps, allowing organizations to allocate resources where they are needed and to refine content based on dashboard reviews of measured behavioral and risk metrics, rather than relying on a one-size-fits-all approach.

Security Awareness Training Platform Reporting and Analytics Core Metrics

The security awareness training platform reporting and analytics layer converts simulation events, training activity, and behavioral signals into quantifiable risk intelligence, encompassing phishing click rates, training completion rates, and human risk scores across the entire employee population.

These metrics can also support compliance documentation, including for AI governance frameworks such as ISO/IEC 42001 and the NIST AI Risk Management Framework.

The reporting and analytics layer serves a dual purpose, supporting both compliance documentation and real-time risk management. As a result, it must capture not only which actions employees completed but also whether their behavior demonstrably changed.

Security Awareness Training Platform Reporting and Analytics: Phishing Simulation Metrics

Automated phishing simulations with realistic emails provide a safe environment for employees to practice identifying and responding to threats, enhancing their instinctive responses to real-world scenarios.

The following represent some of the highest-value phishing simulation metrics for security awareness training platform reporting and analytics:

- Phish-prone percentage: The headline metric, tracking the share of employees who clicked a simulated phishing lure over time; it should trend downward as the program matures. These automated simulations are designed to closely mimic real-world phishing attacks

- Click rate per campaign: Adds granularity, isolating which attack types still break through, including credential harvesting, invoice fraud, and vishing follow-up

- Miss rate: The share of targeted employees who never interacted with a simulation, neither clicking nor reporting it. These employees pose an invisible risk, because their silence may reflect disengagement from the program rather than genuine resistance to phishing

- Phishing reporting rate: A stronger behavioral signal than non-clicking, as an employee who actively flags a suspicious simulation has internalized a response behavior, not just avoided a mistake

- Repeat clicker tracking: Identifies persistent high-risk individuals who require targeted intervention rather than a second pass through the same generic module

- Phishing template performance: Extends analysis beyond click rate to capture the full behavioral arc of a simulation, including whether employees reported it, how quickly they acted, and what follow-up microlearning was triggered. A campaign with a 12% click rate and a 45% report rate presents a fundamentally different risk profile than one with a 12% click rate and zero reports
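The simulation metrics above reduce to simple ratios over per-employee outcomes. The Python sketch below assumes a hypothetical `SimEvent` record (one row per employee per campaign) and defines a "miss" as a targeted employee who neither clicked nor reported; real platforms use their own schemas and definitions, so treat the field names and the repeat-clicker threshold as illustrative.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class SimEvent:
    """One employee's outcome for one simulation (hypothetical schema)."""
    employee_id: str
    campaign: str
    clicked: bool
    reported: bool


def campaign_metrics(events: list[SimEvent]) -> dict[str, float]:
    """Click, report, and miss rates as fractions of all targeted events."""
    total = len(events)
    if total == 0:
        return {"click_rate": 0.0, "report_rate": 0.0, "miss_rate": 0.0}
    clicks = sum(e.clicked for e in events)
    reports = sum(e.reported for e in events)
    # A miss: the employee was targeted but neither clicked nor reported.
    misses = sum(not e.clicked and not e.reported for e in events)
    return {
        "click_rate": clicks / total,
        "report_rate": reports / total,
        "miss_rate": misses / total,
    }


def phish_prone_pct(events: list[SimEvent]) -> float:
    """Share of distinct targeted employees who clicked at least once."""
    targeted = {e.employee_id for e in events}
    clickers = {e.employee_id for e in events if e.clicked}
    return len(clickers) / len(targeted) if targeted else 0.0


def repeat_clickers(events: list[SimEvent], min_campaigns: int = 2) -> set[str]:
    """Employees who clicked in at least `min_campaigns` distinct campaigns."""
    clicked_pairs = {(e.employee_id, e.campaign) for e in events if e.clicked}
    per_employee = Counter(emp for emp, _ in clicked_pairs)
    return {emp for emp, n in per_employee.items() if n >= min_campaigns}


events = [
    SimEvent("a", "invoice-fraud", clicked=True, reported=False),
    SimEvent("b", "invoice-fraud", clicked=False, reported=True),
    SimEvent("c", "invoice-fraud", clicked=False, reported=False),
    SimEvent("a", "credential-harvest", clicked=True, reported=False),
]
print(campaign_metrics(events))  # {'click_rate': 0.5, 'report_rate': 0.25, 'miss_rate': 0.25}
print(repeat_clickers(events))   # {'a'}
```

Computing click and report rates per campaign, rather than only in aggregate, is what separates a 12% click rate with a 45% report rate from a 12% click rate with no reports.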

Realistic simulations in a safe environment improve employees' ability to recognize and respond to cyber threats by letting them practice against simulated phishing emails and social engineering scenarios.

According to the Verizon Data Breach Investigations Report 2025, phishing ranked as the third-highest initial access vector, appearing in 16% of confirmed breaches. That makes identifying employees who never responded to a simulation just as consequential as identifying those who clicked on it.

Security Awareness Training Platform Reporting and Analytics: Training Metrics

Training metrics capture long-term participation. They are insufficient on their own to show the complete picture, but they still contribute considerable useful information to a platform's reporting and analytics.

Interactive training and scenario-based learning engage employees through hands-on, realistic scenarios that improve knowledge retention and security behavior. The following training metrics show whether that engagement is translating into learning:

- Completion rate and course-level progress: Confirm that employees have encountered the material and that their progress is sufficient to satisfy audit requirements for frameworks such as HIPAA, PCI-DSS, and SOC 2

- Time-to-completion: Adds a behavioral dimension, as employees who rush through a 10-minute module in 90 seconds are completing it rather than absorbing it

- Pre- and post-training knowledge assessment scores: Measure gap closure, specifically the difference between what employees knew entering a module and what they retained immediately after

- Long-term retention scores: Measured weeks after training rather than immediately following a quiz, separate genuine behavioral uptake from short-term recall. An employee who scores 95% on a post-quiz and 60% three weeks later has not retained the behavior. Platforms that capture only immediate post-quiz scores provide security leaders with a misleadingly incomplete picture of program effectiveness
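The training signals above can be derived from a handful of per-assignment fields. This is a minimal sketch assuming a hypothetical `TrainingRecord` schema; the 25% rushed-completion threshold and the pre-module score of 70 in the example are invented for illustration, while the 10-minute, 90-second, 95%, and 60% figures come from the scenarios above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrainingRecord:
    """One employee's results for one module (hypothetical schema)."""
    employee_id: str
    expected_minutes: float
    actual_minutes: float
    pre_score: float        # 0-100, assessment before the module
    post_score: float       # 0-100, quiz immediately after the module
    retention_score: float  # 0-100, re-test several weeks later


def is_rushed(rec: TrainingRecord, min_fraction: float = 0.25) -> bool:
    """Flag completions faster than a fraction of the expected duration.

    The 25% default is an illustrative threshold, not an industry standard.
    """
    return rec.actual_minutes < rec.expected_minutes * min_fraction


def knowledge_gain(rec: TrainingRecord) -> float:
    """Gap closure: post-module score minus pre-module score."""
    return rec.post_score - rec.pre_score


def retention_drop(rec: TrainingRecord) -> float:
    """Points lost between the immediate post-quiz and the delayed re-test."""
    return rec.post_score - rec.retention_score


# A 10-minute module finished in 90 seconds, 95% on the post-quiz,
# 60% three weeks later; the pre-score of 70 is an invented value.
rec = TrainingRecord("e1", expected_minutes=10, actual_minutes=1.5,
                     pre_score=70, post_score=95, retention_score=60)
print(is_rushed(rec), knowledge_gain(rec), retention_drop(rec))  # True 25 35
```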

Interactive, scenario-based training with realistic simulations engages employees in active learning rather than passive instruction, which tends to improve how well they identify and mitigate cyber threats.

Security Awareness Training Platform Reporting and Analytics: Behavioral and Risk Metrics

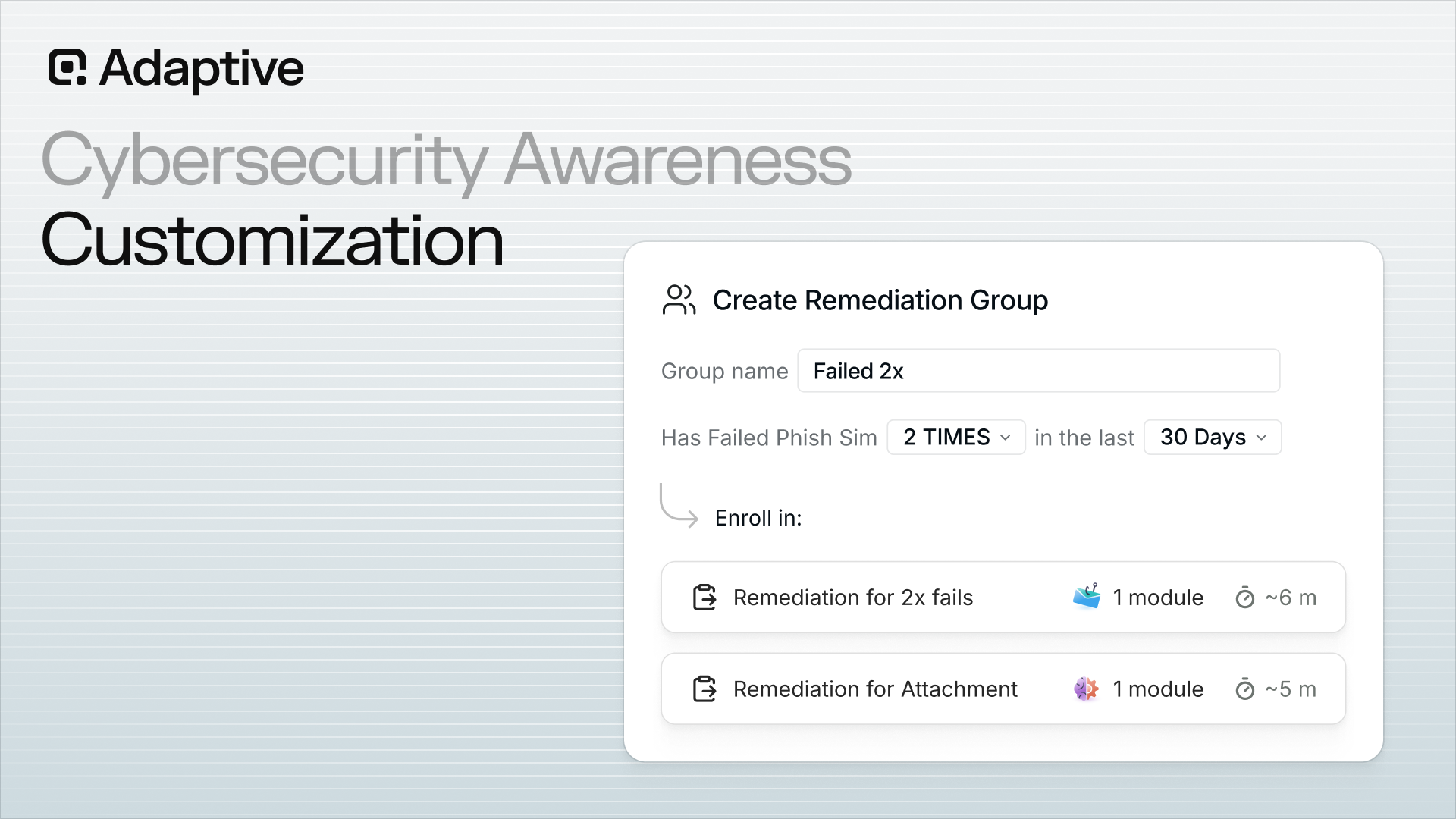

Behavioral and risk metrics represent the highest-value category within security awareness training platform reporting and analytics. They track whether employees have meaningfully changed their approach to cybersecurity threats, and they underpin a human risk management strategy in which the platform continuously tracks and reduces risk:

- Human risk scores per employee: Aggregates simulation behavior, training completion, open-source intelligence (OSINT) exposure, credential breach history, and AI and shadow IT behavior signals into a single, trackable number

- Adaptive content and behavioral analytics: Delivers personalized training that adapts in real time based on individual user behavior and risk profiles, using behavioral analytics to tailor content and ensure relevant, timely education for each employee

- MFA adoption rate and password manager usage rate: Moves the analytics layer from passive measurement into active verification that employees are practicing the security hygiene the training prescribes

- AI and shadow IT behavior signals: Captures risky practices, such as employees pasting sensitive data into unauthorized tools. These signals feed directly into the risk score and flag training gaps that phishing simulations alone cannot surface
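A composite human risk score is commonly built as a weighted combination of normalized signals. The sketch below assumes each signal has already been scaled to the range 0 to 1 (1 meaning highest observed risk); the signal names and the `HYPOTHETICAL_WEIGHTS` table are placeholders, not Adaptive Security's or any other vendor's actual model.

```python
# Illustrative weights (points out of 100); NOT any vendor's actual model.
HYPOTHETICAL_WEIGHTS = {
    "simulation_behavior": 35,
    "training_completion_gap": 15,
    "osint_exposure": 15,
    "credential_breach_history": 20,
    "ai_shadow_it": 15,
}


def human_risk_score(signals: dict[str, float],
                     weights: dict[str, int] = HYPOTHETICAL_WEIGHTS) -> float:
    """Weighted average of normalized risk signals, scaled to 0-100.

    Each signal is expected in [0, 1], where 1 means maximum observed risk.
    Missing signals are treated as 0 (no evidence of risk).
    """
    total_weight = sum(weights.values())
    weighted = 0.0
    for name, weight in weights.items():
        value = signals.get(name, 0.0)
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be in [0, 1], got {value}")
        weighted += weight * value
    return round(100 * weighted / total_weight, 1)


print(human_risk_score({"simulation_behavior": 1.0,
                        "credential_breach_history": 0.5}))  # 45.0
```

Treating a missing signal as zero risk is a design choice; a stricter model might flag missing data instead, since an employee with no simulation history is untested rather than safe.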

Personalized training programs use behavioral analytics to track user interactions and adjust content accordingly, improving learning outcomes and engagement. Over time, human risk management platforms track user behavior and risk reduction, providing analytics that validate training effectiveness and inform future adaptations based on user performance.

The difference between engagement metrics and behavioral metrics is the difference between a security program and a compliance checkbox: engagement metrics indicate only which employees completed training, while behavioral metrics indicate which employees now act differently.

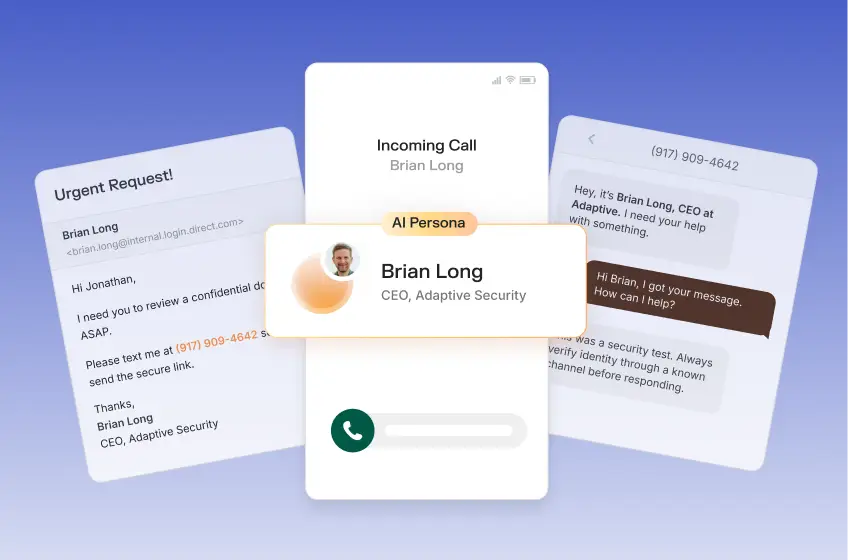

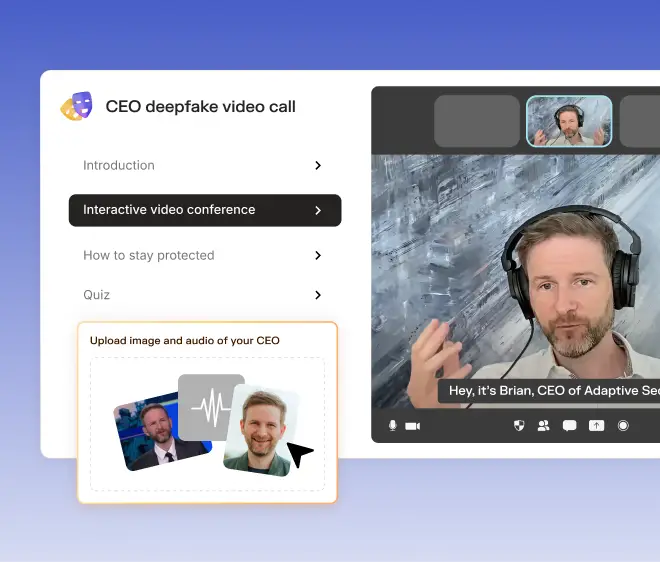

Adaptive Security's Risk Monitoring tracks which employees are measurably less likely to cause a breach. The reporting infrastructure that surfaces those insights is only as useful as the program design feeding it. Adaptive Security's demo illustrates how risk monitoring reduces the attack surface.

Security Awareness Training Platform Reporting and Analytics: Reporting Cadence and Role-Based Access

Reporting frequency is more than just a setting in a security awareness training platform's reporting and analytics; it is a program design decision that directly supports audit readiness and helps organizations meet regulatory requirements.

A newly launched program generating volatile week-over-week data benefits from monthly reporting, which smooths out noise and surfaces directional trends.

A mature program with 12 months of baseline data can shift to quarterly executive narratives demonstrating cumulative risk reduction, paired with real-time alerts for anomalous spikes. Optimal cadence and access processes include:

- Role-based access: Controls which stakeholders can see which data within those cadences, and supports role-based training paths so each job function receives relevant reporting and training content

- Department managers: Receive a filtered view of their direct reports' simulation performance and training progress

- IT administrators: Require individual-level data to investigate persistent high-risk employees or triage phishing reports

- Automated scheduled distribution: Delivers pre-built report packages to defined stakeholder groups on a configured cadence, eliminating the manual pull cycle that causes reporting to lapse during demanding periods
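Role-based access can be thought of as a function from a role and viewer to a permitted slice of the data. In the hypothetical sketch below, executives receive only department averages, managers receive rows for their direct reports, and IT administrators receive full individual-level records; the role names, fields, and scoping rules are assumptions, not a specific platform's permission model.

```python
from dataclasses import dataclass
from statistics import fmean
from typing import Optional


@dataclass(frozen=True)
class EmployeeRisk:
    employee_id: str
    department: str
    manager_id: str
    risk_score: float


def report_view(records: list[EmployeeRisk], role: str,
                viewer_id: Optional[str] = None) -> dict:
    """Return the slice of risk data a given role is allowed to see.

    Role names and scoping rules are hypothetical examples.
    """
    if role == "executive":
        # Aggregates only: no individual rows leave this branch.
        by_dept: dict[str, list[float]] = {}
        for r in records:
            by_dept.setdefault(r.department, []).append(r.risk_score)
        return {"avg_risk_by_department":
                {d: round(fmean(s), 1) for d, s in by_dept.items()}}
    if role == "manager":
        # Direct reports only.
        return {"rows": [r for r in records if r.manager_id == viewer_id]}
    if role == "it_admin":
        # Full individual-level drill-down for investigation and triage.
        return {"rows": list(records)}
    raise ValueError(f"unknown role: {role!r}")
```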

Organizations are increasingly required to document and demonstrate their training efforts to auditors and regulators, making robust reporting and analytics capabilities essential for regulatory adherence and audit readiness.

Determining which metrics should populate each reporting layer is where most programs either generate insight or generate noise.

The Optimal Security Awareness Training Platform Reporting and Analytics Dashboard

A security awareness training platform reporting and analytics dashboard is only valuable if it surfaces decisions, not just data. Modern platforms built for CISOs deliver a fundamentally different reporting experience than the static exports most legacy tools still produce, helping organizations track real-world threats as they evolve.

Whereas a modern dashboard translates simulation behavior and training activity into a human risk narrative, legacy platforms reduce the entire program to a single metric: completion rate. Monitoring emerging risks, such as new scams and AI-generated attacks, through dashboard analytics is essential to ensure employees stay vigilant against evolving cyber threats.

Effective dashboards provide clear, easy-to-read reports that display participation rates, phishing-click rates, and risk scores, helping management identify vulnerabilities and measure improvement over time.

Modern dashboards trend human risk scores over time, break down vulnerability by department and role, and benchmark the organization against industry peers. Legacy systems generate static PDF snapshots that require manual extraction and provide security leaders with no insight into behavioral change.

Role-based access control makes this distinction operational. Managers require department-level visibility, IT administrators require per-employee drill-down, and executives require aggregated risk narratives rather than raw simulation logs.

Automated report delivery at defined cadences eliminates the manual effort that makes legacy reporting a burden rather than a signal.

Reporting frequency should match program maturity. Early-stage programs require monthly trend data to support course correction, while mature programs at large enterprises typically shift to quarterly board narratives anchored in risk score movement rather than activity counts.

What Does a CISO-Ready Security Awareness Training Platform Reporting and Analytics Dashboard Include?

A high-quality security awareness training platform reporting and analytics dashboard addresses the central question security leaders face before a board meeting: Is human risk trending downward? Answering that question requires eight interconnected data surfaces working in concert:

- Organization-wide human risk score trend: A time-series view showing whether aggregate behavioral risk is improving, plateauing, or worsening across the program lifecycle

- Department- and role-level breakdowns: Risk scores segmented by team, business unit, and job function, revealing whether finance is more vulnerable than engineering or whether a specific manager's direct reports consistently fail simulations

- Simulation click rate and reporting rate trends: Both metrics carry equal weight. A falling click rate paired with a flat reporting rate indicates that employees are avoiding the bait but have not yet adopted a defender mindset

- Top vulnerable populations and persistent high-risk individuals: Identifies the specific employees and clusters who account for disproportionate risk, enabling targeted intervention before a real attack occurs

- Compliance training completion mapped to required frameworks: Completion status aligned to SOC 2, HIPAA, GDPR, PCI-DSS, and ISO 27001 requirements, with audit-ready export built into the platform rather than added as an afterthought

- Industry peer benchmarks: Contextualizes internal metrics against organizations of similar size and vertical, providing boards with a reference point beyond internal trend lines

- Executive dashboards: Track key performance indicators that demonstrate return on investment and program effectiveness to stakeholders

- Real-time campaign tracking: Enables live monitoring of phishing simulations and training assignments for immediate insight and response
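The click-rate and reporting-rate item above implies reading the two trends together rather than separately. The following sketch encodes that reading; the 2-percentage-point `tolerance` for treating a change as flat and the wording of each verdict are illustrative assumptions.

```python
def interpret_trend(click_start: float, click_end: float,
                    report_start: float, report_end: float,
                    tolerance: float = 0.02) -> str:
    """Read click-rate and report-rate movement together.

    Rates are fractions (0.12 == 12%). Changes smaller than `tolerance`
    (an illustrative 2 percentage points) are treated as flat.
    """
    def direction(start: float, end: float) -> str:
        delta = end - start
        if delta > tolerance:
            return "up"
        if delta < -tolerance:
            return "down"
        return "flat"

    clicks = direction(click_start, click_end)
    reports = direction(report_start, report_end)
    if clicks == "up":
        return "worsening: susceptibility is rising"
    if clicks == "down" and reports == "up":
        return "improving: fewer clicks and more reporting"
    if clicks == "down":
        return "avoiding the bait, but reporting has not caught up"
    if reports == "up":
        return "reporting is improving while click rate is flat"
    return "plateau: neither metric is improving"


print(interpret_trend(0.20, 0.12, 0.10, 0.11))
# avoiding the bait, but reporting has not caught up
```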

Automated reporting provides a clear audit trail of training completion and test scores, supporting audit readiness under regulations such as GDPR and HIPAA and alignment with frameworks such as ISO 27001, ISO/IEC 42001, and the NIST AI Risk Management Framework. This helps organizations demonstrate adherence to both regulatory requirements and governance frameworks.

Legacy vs. Modern Security Awareness Training Platform Reporting and Analytics

Most legacy platforms were designed when reporting meant proving that training happened, not proving that risk changed, and that assumption is still reflected in what they produce. Modern security awareness training platforms, by contrast, enable measurable risk reduction by tracking real behavioral outcomes and providing actionable analytics.

In a legacy platform, the default output is a completion-rate dashboard indicating how many employees finished the annual module, broken down by department. Simulation results, when available, are provided as a separate PDF export and are not linked to individual risk profiles, trend data, or behavioral context.

Per-employee risk profiles do not exist in legacy platforms because their architectures were not designed to accumulate behavioral signals over time, treating each simulation as a standalone event rather than a data point in a continuous risk model.

The result is a reporting stack that satisfies a compliance auditor's requirements but provides a CISO with no actionable intelligence about where the organization is genuinely exposed.

Data-driven programs, by contrast, let organizations measure incident reduction against their own prior baselines, because human risk management platforms accumulate behavioral data over time and provide analytics that validate training effectiveness and inform future training adaptations.

Adaptive Security's human risk dashboards and reporting are built on a unified risk score that aggregates simulation behavior, training completion, open-source intelligence (OSINT) exposure, and credential breach history into a single, continuously updated view. Legacy architectures were never designed to produce that kind of view.

Security Awareness Training Platform Reporting and Analytics: Turn Aggregate Data Into Targeted Action

Reading a platform's reporting and analytics only in broad, organization-wide terms conceals the pockets of concentrated risk that cause real breaches. Segmenting the data is what allows analytics to drive proactive defense against common and evolving threats.

To act on that risk, security teams should filter and segment data by department, role, business unit, location, and employee tenure, then use the resulting signals to trigger automated manager escalations and inspect the e-learning engagement funnel for precise dropout points.

The final consideration before acting is to verify that segmented data is driving training enrollment decisions, not simply populating dashboards.

Effective security awareness training programs teach people to recognize and respond appropriately to cyberthreats, going beyond theory to drive real behavior change.

Apply Role and Department Filters to Surface Concentrated Risk

A 12% organization-wide phishing click rate may appear manageable. However, when broken out by department, for example, it can reveal that finance employees click at 28% while IT staff click at 6%.

Role-based filtering reveals whether finance team members are disproportionately susceptible to business email compromise (BEC) simulations and whether engineers are underperforming in credential-harvesting scenarios specific to their workflows.

Department-level segmentation converts that aggregate finding into a targeted training directive, enabling the implementation of role-based training paths so employees receive relevant, practical instruction tailored to their job functions and risk profiles.
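Segmentation is a group-by over simulation results. The sketch below computes click rates per value of any field (department here, but role, location, or tenure band work the same way) and flags groups at or above 1.5 times the organization-wide rate; the record layout and the 1.5 factor are hypothetical, and the synthetic data simply reproduces the 12%, 28%, and 6% example above.

```python
from collections import Counter


def rates_by(records: list[dict], field: str) -> dict[str, float]:
    """Click rate per value of `field` (e.g. department, role, location)."""
    totals: Counter = Counter()
    clicks: Counter = Counter()
    for r in records:
        totals[r[field]] += 1
        clicks[r[field]] += int(r["clicked"])
    return {group: clicks[group] / totals[group] for group in totals}


def hotspots(records: list[dict], field: str, factor: float = 1.5) -> list[str]:
    """Groups whose click rate is at least `factor` times the org-wide rate."""
    if not records:
        return []
    overall = sum(int(r["clicked"]) for r in records) / len(records)
    return sorted(g for g, rate in rates_by(records, field).items()
                  if rate >= factor * overall)


def cohort(department: str, size: int, clickers: int) -> list[dict]:
    """Synthetic records in which `clickers` of `size` employees clicked."""
    return [{"department": department, "clicked": i < clickers}
            for i in range(size)]


# Synthetic data: 12% overall, finance 28%, IT 6%, sales 8%.
records = cohort("finance", 25, 7) + cohort("it", 50, 3) + cohort("sales", 25, 2)
print(rates_by(records, "department"))  # {'finance': 0.28, 'it': 0.06, 'sales': 0.08}
print(hotspots(records, "department"))  # ['finance']
```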

Use Tenure and Location Filters to Close Onboarding and Regional Gaps

Filtering by hire date answers a direct question: Do employees in their first 90 days complete onboarding training before encountering live threats? Continuous updates to training materials are necessary to address emerging risks, such as new scams and AI-generated attacks, ensuring employees are prepared for the latest cyberthreats.

New hires who have not yet submitted a phishing report constitute an untested population, and tenure-based segmentation identifies them before a real attack exposes the gap.
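A tenure filter can be expressed as a date-window check combined with the onboarding and reporting conditions described above. This sketch uses the 90-day window from the text; the field names (`hire_date`, `onboarding_complete`, `reports_submitted`) are hypothetical and would need mapping to whatever the platform actually exports.

```python
from datetime import date, timedelta


def untested_new_hires(employees: list[dict], today: date,
                       window_days: int = 90) -> list[str]:
    """New hires inside the window who lack onboarding or any phish report.

    Field names are hypothetical; map them to the platform's export.
    """
    cutoff = today - timedelta(days=window_days)
    return sorted(
        e["id"]
        for e in employees
        if e["hire_date"] >= cutoff
        and (not e["onboarding_complete"] or e["reports_submitted"] == 0)
    )


staff = [
    {"id": "n1", "hire_date": date(2025, 5, 15), "onboarding_complete": False, "reports_submitted": 0},
    {"id": "n2", "hire_date": date(2025, 4, 1), "onboarding_complete": True, "reports_submitted": 0},
    {"id": "n3", "hire_date": date(2025, 4, 1), "onboarding_complete": True, "reports_submitted": 2},
    {"id": "v1", "hire_date": date(2021, 1, 10), "onboarding_complete": True, "reports_submitted": 0},
]
print(untested_new_hires(staff, today=date(2025, 6, 1)))  # ['n1', 'n2']
```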

Location and regional filters reveal whether teams in specific offices or locations are outliers, a pattern that frequently reflects inconsistent program rollout rather than individual employee behavior.

Both filters feed directly into human risk scoring and automated remediation workflows, enrolling high-risk cohorts without requiring manual intervention from administrators.

Activate Manager Escalation Emails to Compress the Risk Response Window

Identifying a persistent clicker through segmented data reduces risk only if the signal is acted upon promptly.

By tracking employees' performance and user behavior, the platform enables timely manager interventions when risky patterns or vulnerabilities are detected.

Automated manager-escalation emails surface the highest-risk and non-participating employees to their direct managers earlier in the program cycle, thereby compressing the lag between detection and intervention.

A manager who receives a weekly digest listing the three employees on their team who have clicked every simulation and completed zero training modules is positioned to act on that information faster than a security team working from a centralized dashboard alone.
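The escalation rule in the example above (clicked every simulation, completed zero modules) can be applied per employee and grouped by manager. This minimal sketch assumes hypothetical field names and only builds the digest text; actually sending the email depends on the platform's or mail system's own API and is not shown.

```python
from collections import defaultdict


def manager_digests(employees: list[dict]) -> dict[str, list[str]]:
    """Group escalation-worthy employees under their direct manager.

    Illustrative rule: clicked every simulation sent AND completed zero modules.
    """
    digests: dict[str, list[str]] = defaultdict(list)
    for e in employees:
        sent, clicked = e["simulations_sent"], e["simulations_clicked"]
        if sent > 0 and clicked == sent and e["modules_completed"] == 0:
            digests[e["manager"]].append(e["id"])
    return {mgr: sorted(ids) for mgr, ids in digests.items()}


def render_digest(manager: str, employee_ids: list[str]) -> str:
    """Plain-text body for a weekly manager email (sending is not shown)."""
    lines = [f"Weekly security digest for {manager}",
             f"Direct reports needing follow-up: {len(employee_ids)}"]
    lines += [f"  - {emp}" for emp in employee_ids]
    return "\n".join(lines)


team = [
    {"id": "e1", "manager": "m1", "simulations_sent": 4, "simulations_clicked": 4, "modules_completed": 0},
    {"id": "e2", "manager": "m1", "simulations_sent": 4, "simulations_clicked": 1, "modules_completed": 0},
    {"id": "e3", "manager": "m2", "simulations_sent": 3, "simulations_clicked": 3, "modules_completed": 0},
    {"id": "e4", "manager": "m2", "simulations_sent": 3, "simulations_clicked": 3, "modules_completed": 2},
]
for mgr, ids in manager_digests(team).items():
    print(render_digest(mgr, ids))
```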

This workflow distributes accountability without requiring security leaders to manually pursue individual cases.

Read the E-Learning Engagement Funnel to Find Exact Dropout Points

The e-learning engagement funnel breaks completion rate into three distinct populations, each demanding a different response:

- Employees who never started an assigned module: Require a re-enrollment nudge or a manager escalation

- Employees who started and abandoned mid-course: Require a shorter or more effectively sequenced module

- Employees who completed the content but failed the post-assessment: Require targeted remediation on the specific concept missed
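Classifying each assignment into one of the three populations above, and counting them, identifies the funnel step with the most attrition. The sketch below assumes progress is recorded as a fraction of the module viewed and uses an illustrative 80-point pass mark; both are assumptions rather than any platform's defined fields.

```python
from collections import Counter
from enum import Enum
from typing import Optional


class Stage(Enum):
    NOT_STARTED = "never started"
    ABANDONED = "started, then abandoned"
    FAILED_ASSESSMENT = "completed, failed assessment"
    PASSED = "completed and passed"


def funnel_stage(progress: float, score: float = 0.0,
                 passing: float = 80.0) -> Stage:
    """Place one assignment in the funnel.

    `progress` is the fraction of the module viewed (0.0-1.0); `score` is the
    post-assessment result (0-100). The 80-point pass mark is illustrative.
    """
    if progress <= 0.0:
        return Stage.NOT_STARTED
    if progress < 1.0:
        return Stage.ABANDONED
    return Stage.PASSED if score >= passing else Stage.FAILED_ASSESSMENT


def biggest_dropout(assignments: list[tuple[float, float]]) -> Optional[tuple[Stage, int]]:
    """The non-passing stage holding the most assignments, with its count."""
    counts = Counter(funnel_stage(p, s) for p, s in assignments)
    counts.pop(Stage.PASSED, None)
    if not counts:
        return None  # everyone passed: no dropout point to fix
    return counts.most_common(1)[0]


assignments = [(0.0, 0), (0.5, 0), (0.3, 0), (1.0, 60), (1.0, 90)]
print(biggest_dropout(assignments))  # (<Stage.ABANDONED: 'started, then abandoned'>, 2)
```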

Incorporating interactive content and scenario-based learning into modules can significantly reduce dropout rates and improve engagement by making training more relevant to employees.

Tracking which funnel step generates the most attrition identifies precisely where content design or delivery is breaking down, replacing guesswork with a measurable corrective action. That diagnostic precision is what separates programs that move risk scores from programs that only move completion percentages.

How Security Awareness Training Platform Reporting and Analytics Supports Compliance Audit Requirements

A platform's reporting and analytics exist primarily because regulators demand documented proof, not program completion alone. Those requirements drive the need for audit readiness, ensuring organizations can demonstrate that their training programs align with industry mandates and governance policies.

Every major compliance framework governing data security requires organizations to train employees and produce time-stamped, auditable records confirming that training occurred, that the content was appropriate, and that employees demonstrated comprehension.

NIST SP 800-50 formalized this expectation by calling on organizations to define program metrics, conduct knowledge assessments, and evaluate program effectiveness on a documented schedule.

Without a reporting layer capable of producing this evidence on demand, a training program fails the compliance test the moment an auditor arrives.

Documentation expectations are also expanding to newer training areas, such as AI risk management, so training records must keep pace as programs are continuously updated.

What Do Major Compliance Frameworks Require From Security Awareness Training Platforms?

Documentation requirements vary by framework, but the pattern is consistent: every major standard demands completion records, assessment evidence, and the ability to prove training was delivered to the right people at the right time.

Audit readiness and regulatory compliance are best supported by role-based training, ensuring that security awareness programs are tailored to specific job functions and risk levels.

- HIPAA requires covered entities to implement a security awareness and training program for all workforce members and to maintain written documentation. To support this, platforms should produce timestamped completion records linked to individual employee IDs rather than aggregate counts

- PCI-DSS mandates a formal, documented security awareness program with evidence of annual training delivery and acknowledgment from all personnel

- SOC 2 requires evidence of security awareness training. Auditors request training logs, assessment scores, and content version records during Type II reviews

- ISO 27001 requires documented evidence of competence and awareness training, including records showing what content was delivered, to whom, and when

- NIST CSF calls for all users to be informed and trained, with the 2024 CSF 2.0 update reinforcing measurable outcomes rather than mere program existence

- NIST SP 800-53 requires organizations to maintain training records and provide evidence of literacy training and role-based instruction aligned with personnel categories

Training content mapped to HIPAA, PCI-DSS, GDPR, and ISO 27001 satisfies the program substance requirement. The reporting layer satisfies the audit evidence requirement. Platforms must use "maps to" language rather than claiming certification status for any of these frameworks.

Security Awareness Training Platform Reporting and Analytics vs. Individual Privacy

The tension between individual-level risk data and employee privacy obligations is substantive, particularly under GDPR.

Aggregate dashboards protect privacy but obscure the behavioral signals security teams require to intervene before a breach occurs. Privacy controls in reporting address this tension, ensuring sensitive information is handled in accordance with data protection requirements and organizational policies.

Many enterprise platforms address this by allowing administrators to configure anonymization thresholds: individual data becomes visible to authorized security personnel when an employee's risk score exceeds a defined threshold, while aggregate reporting is used for board-level and regulatory submissions. Audit-ready export formats must include:

- Completion records with timestamps and employee IDs

- Assessment scores with passing thresholds

- Training content version histories

- Role-based assignment logs

These records address the four questions every auditor raises: who was trained, on what content, when, and whether comprehension was demonstrated.
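Those four questions map directly onto export columns. The sketch below shows one hypothetical CSV layout (not a format required by any specific framework or auditor) plus a helper illustrating the anonymization-threshold idea for dashboard views; the column names, the 80-point pass mark, the 70-point threshold, and the salt are placeholders.

```python
import csv
import hashlib
import io
from dataclasses import asdict, dataclass, fields
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditRow:
    """One training event in an audit export (hypothetical column set)."""
    employee_id: str
    module: str
    content_version: str
    assigned_role: str
    completed_at: str   # ISO 8601 timestamp in UTC
    score: int
    passing_score: int
    passed: bool


def make_row(employee_id: str, module: str, content_version: str,
             assigned_role: str, completed_at: datetime, score: int,
             passing_score: int = 80) -> AuditRow:
    """Normalize the timestamp to UTC and record pass/fail explicitly."""
    if completed_at.tzinfo is None:
        raise ValueError("completed_at must be timezone-aware")
    return AuditRow(employee_id, module, content_version, assigned_role,
                    completed_at.astimezone(timezone.utc).isoformat(),
                    score, passing_score, score >= passing_score)


def export_audit_csv(rows: list[AuditRow]) -> str:
    """Serialize rows to CSV: who, which content version, when, and the result."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=[f.name for f in fields(AuditRow)])
    writer.writeheader()
    for row in rows:
        writer.writerow(asdict(row))
    return buf.getvalue()


def dashboard_label(employee_id: str, risk_score: float,
                    threshold: float = 70.0, salt: str = "example-salt") -> str:
    """Show the real ID only at or above the risk threshold.

    Below it, return a salted-hash pseudonym. Under GDPR, pseudonymized data
    is still personal data, so this limits exposure rather than anonymizing.
    """
    if risk_score >= threshold:
        return employee_id
    digest = hashlib.sha256((salt + employee_id).encode("utf-8")).hexdigest()
    return f"anon-{digest[:10]}"
```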

Identifying which records each framework requires is the prerequisite to constructing a reporting architecture that withstands scrutiny. That foundation directly shapes which behavioral metrics the platform must continuously track.

The Adaptive Security reporting dashboard surfaces each of these data points across 35+ prebuilt reports in exportable formats aligned with the documentation requirements organizations face for SOC 2, HIPAA, and PCI DSS audits.

Using Benchmarking Data to Contextualize Security Awareness Program Performance

Security awareness training platform reporting and analytics deliver strategic value only when raw metrics are measured against a relevant external baseline. Benchmarking contextualizes performance and shows whether a program is keeping pace with the threats its sector actually faces.

Global averages flatten meaningful differences between industries; a 14% phish-prone percentage in financial services signals a different risk profile than the same figure in education, where that rate often represents the sector baseline.

NIST SP 800-50 Rev. 1, published in September 2024, explicitly calls for evaluation methods that allow organizations to regularly improve and update their programs, a standard that requires comparison data rather than isolated metrics.

A metric without a benchmark provides no actionable signal; measured against an industry baseline, it becomes a defensible indicator of risk posture.

Why Do Industry Benchmarks Vary So Dramatically Across Verticals?

Threat profiles differ by sector, and benchmark expectations must reflect that variation. Small businesses and development teams may have different benchmark expectations and risk profiles compared to large enterprises, requiring tailored approaches to security awareness training and analytics.

For instance, financial services employees face concentrated spear phishing and business email compromise (BEC) attempts, with attackers prioritizing high-value wire transfer access.

Conversely, healthcare organizations contend with vendor impersonation and credential harvesting targeting electronic health record systems, while educational institutions present a larger, less controlled user base, including students and part-time staff who receive less frequent training.

Considering risk profile and the consequences of an attack, a 10% phish-prone rate represents a material improvement for a university. However, for a bank managing $25 billion in assets, the same figure is unacceptable.
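Evaluating a rate against sector context rather than a single global figure can be sketched as a two-threshold check. Apart from the 14% education baseline cited above, the numbers below are placeholders, not published benchmarks; substitute real vertical-specific data before drawing conclusions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SectorContext:
    """Placeholder figures for illustration; substitute real benchmark data."""
    baseline: float  # typical phish-prone rate in the sector
    target: float    # maximum rate this organization considers acceptable


def assess(rate: float, ctx: SectorContext) -> str:
    """Judge a phish-prone rate against sector-specific context."""
    if rate <= ctx.target:
        return "within target"
    if rate < ctx.baseline:
        return "better than sector baseline, still above target"
    return "at or above sector baseline"


# Hypothetical numbers, NOT published benchmarks.
university = SectorContext(baseline=0.14, target=0.10)
bank = SectorContext(baseline=0.06, target=0.03)
print(assess(0.10, university))  # within target
print(assess(0.10, bank))        # at or above sector baseline
```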

Setting program targets against global averages rather than vertical-specific baselines produces a false sense of progress and leaves the organization exposed to the specific threats its sector attracts.

How Does Simulation-to-Incident Correlation Prove Training ROI?

The most compelling argument a security leader can present to a board is a direct link between simulation performance and fewer incidents, demonstrating clear outcomes and measurable risk reduction.

Forward-thinking security teams track phishing simulation click rates over 12-month periods and map them against confirmed phishing incidents reported to IT. When click rates drop by 20%, the relevant question is whether confirmed incidents fall by a proportional amount.
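The click-rate-versus-incident comparison can be quantified with a relative change and a Pearson correlation coefficient. The sketch below uses a short, invented monthly series; even with a full 12 months of real data the sample is small, and correlation alone does not establish that training caused the decline in incidents.

```python
from math import sqrt


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient (statistics.correlation in 3.10+ is equivalent)."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length series with at least 2 points")
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    if sx == 0 or sy == 0:
        raise ValueError("a constant series has no defined correlation")
    return cov / (sx * sy)


def relative_change(first: float, last: float) -> float:
    """Fractional change from first to last value (-0.2 means a 20% drop)."""
    return (last - first) / first


# Invented monthly data for illustration only.
click_rate = [0.20, 0.18, 0.17, 0.15, 0.14, 0.12]
incidents = [10, 9, 9, 7, 7, 6]
print(round(relative_change(click_rate[0], click_rate[-1]), 2))  # -0.4
print(round(relative_change(incidents[0], incidents[-1]), 2))    # -0.4
print(round(pearson(click_rate, incidents), 2))                  # 0.98
```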

This correlation converts training data into business-value evidence, because phishing remains one of the leading initial access vectors in confirmed breaches, according to the Verizon Data Breach Investigations Report 2025. A measurable reduction in employee susceptibility, corroborated by falling incident counts, is therefore credible evidence of reduced breach risk.

How Do Phish Reporting Rates Connect to Attacker Dwell Time?

Phish reporting rates are an indirect but powerful proxy for incident detection speed. Organizations with higher reporting rates surface suspicious activity faster, compressing the window between initial compromise and discovery, a metric commonly referred to as attacker dwell time.

Shorter dwell time limits the damage an attacker can inflict before containment begins, particularly for cyberattacks with extended progression timelines, such as ransomware.

A workforce trained to report suspicious emails, deepfake video requests, or unusual vishing calls via a one-click mechanism serves as a distributed detection layer that no technical control can replicate.

Tracking reporting rates over time by department, role, and simulation type turns employee behavior into an early-warning system that feeds directly into incident-response workflows. Real-time campaign tracking and user behavior analytics further enhance these early-warning systems, enabling faster detection and more effective incident response. The stronger the signal, the clearer the case for continuous, role-specific simulation rather than annual training cycles.
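Segmenting reporting rates by period, department, role, and simulation type is a simple group-and-divide operation. The sketch below is a minimal Python version, assuming a hypothetical `SimulationResult` record with one row per delivered simulation; real platforms expose their own schemas.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class SimulationResult:
    user_id: str
    month: str        # e.g. "2025-03"
    department: str
    role: str
    sim_type: str     # e.g. "email", "vishing", "deepfake_video"
    reported: bool    # True if the employee used the report mechanism


def reporting_rates(results: list[SimulationResult], *keys: str) -> dict[tuple, float]:
    """Share of simulations reported, grouped by any combination of attributes."""
    if not keys:
        raise ValueError("pass at least one grouping attribute")
    sent: dict[tuple, int] = defaultdict(int)
    reported: dict[tuple, int] = defaultdict(int)
    for r in results:
        group = tuple(getattr(r, k) for k in keys)
        sent[group] += 1
        reported[group] += int(r.reported)
    return {g: reported[g] / sent[g] for g in sent}
```

Calling `reporting_rates(results, "month", "department")` yields a per-department time series, which is the trend view an early-warning dashboard would plot.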

Translating Security Awareness Training Platform Reporting and Analytics Into Business Risk Narratives

Most CISOs enter board meetings presenting security awareness training platform reporting and analytics data, including click rates, completion percentages, and simulation pass/fail ratios, only to find that executive engagement diminishes within minutes.

Translating analytics into business narratives is how a security leader demonstrates that the program is reducing the security risks and cyberthreats the organization actually faces.

Converting simulation data into board-level language requires a deliberate translation framework rather than an unstructured presentation. Every metric should be treated as raw material, with the board presentation as the finished product.

Lead With Dollar Risk

A phish-prone percentage carries no actionable meaning for a CFO in isolation. Paired with a breach probability estimate and the IBM Cost of a Data Breach Report 2025 average breach cost of $4.44 million, however, it becomes a quantified financial exposure.

If 22% of employees fail phishing simulations and social engineering drives the majority of initial access events, the board is examining a calculable probability-weighted loss, not a security metric.
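The arithmetic behind a probability-weighted loss is expected loss = estimated breach probability for the period × cost of a breach. The Python sketch below uses the IBM 2025 average of $4.44 million from the text as the default cost. The breach probabilities in the example are hypothetical placeholders that a risk team would have to estimate, since a phish-prone percentage does not convert into a breach probability on its own.

```python
IBM_2025_AVG_BREACH_COST_USD = 4_440_000  # IBM Cost of a Data Breach Report 2025 average


def expected_loss(breach_probability: float,
                  breach_cost: float = IBM_2025_AVG_BREACH_COST_USD) -> float:
    """Probability-weighted loss for one period (e.g. one year)."""
    if not 0.0 <= breach_probability <= 1.0:
        raise ValueError("breach_probability must be between 0 and 1")
    return breach_probability * breach_cost


def exposure_reduction(prob_before: float, prob_after: float,
                       breach_cost: float = IBM_2025_AVG_BREACH_COST_USD) -> float:
    """Dollar reduction in expected loss between two probability estimates."""
    return expected_loss(prob_before, breach_cost) - expected_loss(prob_after, breach_cost)
```

With hypothetical estimates of 10% before training and 6% after, `expected_loss(0.10)` is about $444,000 and `exposure_reduction(0.10, 0.06)` is about $177,600, a figure a board can weigh directly against program cost.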

Focus On Behavioral Trends

A single-period click rate provides boards with no meaningful indication of program effectiveness. Analytics that track user behavior and employees' performance over time are essential for understanding progress.

Conversely, a downward trend from 28% to 9% in phish-prone rate over 12 months demonstrates that the program is producing results, that risk is declining, and that continued investment is justified.

Reporting dashboards should surface trend lines by default rather than point-in-time scores.

Benchmark Against Industry Peers

Absolute numbers carry little persuasive weight with executives unfamiliar with security baselines. Demonstrating that an organization's phish-prone rate sits 14 points below the industry average frames human risk performance as a competitive advantage and makes the program's ROI visible.

Peer benchmarking data transforms raw simulation output into context that executives can act on.

Map Compliance Gaps to Regulatory Exposure

Incomplete training is a direct regulatory liability: frameworks such as HIPAA, PCI-DSS, and SOC 2 expect documented evidence of workforce training, so every employee without a completion record is an audit gap that must be closed.
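Mapping completion gaps to exposure starts with a list of who is missing. The Python sketch below compares a hypothetical workforce roster against completion dates and returns everyone without an on-time record; the data shapes are assumptions, not any platform's export format.

```python
from datetime import date


def compliance_gaps(roster: set[str], completions: dict[str, date], due: date) -> list[str]:
    """Employees without a completion record on or before the due date.

    roster:      IDs of every workforce member who must be trained.
    completions: employee ID -> date the required module was completed.
    """
    return sorted(
        emp for emp in roster
        if emp not in completions or completions[emp] > due
    )


def completion_coverage(roster: set[str], completions: dict[str, date], due: date) -> float:
    """Share of the roster with an on-time completion record."""
    if not roster:
        raise ValueError("roster is empty")
    return 1 - len(compliance_gaps(roster, completions, due)) / len(roster)
```

Late completions are treated as gaps here because an auditor checking an annual deadline would likely see them that way; an organization with a grace period would adjust the `due` date accordingly.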

Data Integration, Export, and API Access for Security Awareness Training Platform Reporting and Analytics

A security awareness training platform's reporting and analytics layer only delivers full value when its data flows into the systems teams already use. Selecting the right platform, ideally a comprehensive human risk management platform, ensures seamless integration and robust analytics that support compliance needs and ongoing risk reduction.

Connecting simulation outcomes, training completions, and risk scores to BI tools, SIEMs, LMS platforms, GRC systems, and HRIS platforms requires a clearly defined integration architecture, from API access to downstream systems that consume the data.

MSP and multi-tenant operators introduce an additional layer of requirements, and security culture surveys provide the qualitative signal that quantitative dashboards alone cannot supply.

Connect to BI Tools for Custom Executive Visualization

Native platform dashboards serve security operators effectively, but executive audiences typically use BI tools that enable finance, HR, and risk teams to layer security training data against headcount, departmental costs, and audit timelines. Executive dashboards support custom executive visualization and reporting, ensuring stakeholders have clear, actionable insights.

A reporting API that exposes per-user simulation outcomes, phishing click rates, and risk score trends in JSON or CSV format feeds directly into these environments. The result is a unified executive view in which declining phishing susceptibility sits alongside incident costs, presenting the ROI case without requiring a separate slide deck.
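A minimal Python sketch of the JSON-to-CSV step that often sits between a reporting API and a BI tool follows. The field names in `BI_FIELDS` are a hypothetical schema chosen for illustration; a real reporting API will define its own.

```python
import csv
import io
import json

# Hypothetical column set; a real reporting API will define its own field names.
BI_FIELDS = ["user_id", "department", "simulation_outcome", "click_rate", "risk_score"]


def records_to_csv(api_json: str) -> str:
    """Flatten a JSON array of per-user records into CSV for a BI tool."""
    records = json.loads(api_json)
    if not isinstance(records, list):
        raise ValueError("expected a JSON array of records")
    buf = io.StringIO()
    # extrasaction="ignore" drops fields the BI model does not use;
    # missing fields are written as empty cells (DictWriter's restval default).
    writer = csv.DictWriter(buf, fieldnames=BI_FIELDS, extrasaction="ignore")
    writer.writeheader()
    writer.writerows(records)
    return buf.getvalue()
```

Fixing the column list up front keeps the BI data model stable even if the API later adds fields.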

Feed SIEM and SOAR Platforms with Human Risk Signals

The most consequential integration connects training data to the Security Information and Event Management (SIEM) platform. When an employee clicks a simulated phishing email, that behavioral signal belongs in the same data stream as an actual endpoint alert, and combining the two with user behavior analytics helps organizations identify and respond to security threats more effectively.

Correlating those two events inside a SIEM identifies which employees represent compounding risk, and a SOAR playbook can automatically trigger targeted retraining when that pattern appears, a capability IBM documents in its overview of SIEM event correlation and analytics. This integration transforms the security awareness training platform from a training tool into a live contributor to the SOC's threat picture.
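In production, this join would normally be written as a SIEM correlation rule. The Python sketch below shows only the underlying logic: it flags users with a real endpoint alert within a time window after a simulation click. The 30-day window and the tuple-based event format are hypothetical choices.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def compounding_risk(simulation_clicks: list[tuple[str, datetime]],
                     endpoint_alerts: list[tuple[str, datetime]],
                     window: timedelta = timedelta(days=30)) -> set[str]:
    """Users with a real endpoint alert within `window` after a simulation click."""
    clicks_by_user: dict[str, list[datetime]] = defaultdict(list)
    for user, clicked_at in simulation_clicks:
        clicks_by_user[user].append(clicked_at)

    flagged: set[str] = set()
    for user, alerted_at in endpoint_alerts:
        for clicked_at in clicks_by_user.get(user, []):
            # Only alerts that follow a click, within the window, count.
            if timedelta(0) <= alerted_at - clicked_at <= window:
                flagged.add(user)
                break
    return flagged
```

The returned set is what a SOAR playbook would consume to trigger targeted retraining.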

Push Training Records to LMS and GRC Platforms via Results API

Organizations running a centralized learning management system require security training completion data to flow in automatically, rather than via manual exports, to ensure alignment with compliance requirements and audit readiness.

A results or reporting API enables this process by pushing per-user training completion records, simulation pass/fail outcomes, and individual risk scores into the LMS, HRIS, or GRC platform on a scheduled or event-triggered basis.

Adaptive Security's HRIS and GRC integrations support this continuous data flow, keeping compliance records up to date without analyst intervention.
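A push integration of this kind amounts to serializing per-user records and POSTing them to the downstream system. The Python sketch below assumes a hypothetical JSON ingestion endpoint with bearer-token authentication. It is not Adaptive Security's API or any specific LMS, HRIS, or GRC product's API.

```python
import json
import urllib.request
from dataclasses import asdict, dataclass


@dataclass
class TrainingRecord:
    user_id: str
    module: str
    completed_at: str       # ISO 8601 timestamp
    simulation_passed: bool
    risk_score: float


def build_payload(records: list[TrainingRecord]) -> bytes:
    """Serialize records as a JSON body for the downstream system."""
    return json.dumps({"records": [asdict(r) for r in records]}).encode("utf-8")


def push_records(records: list[TrainingRecord], endpoint: str, token: str) -> int:
    """POST the batch to a hypothetical ingestion endpoint; returns the HTTP status.

    urllib raises HTTPError for 4xx/5xx responses, so failures are not silent.
    """
    request = urllib.request.Request(
        endpoint,
        data=build_payload(records),
        method="POST",
        headers={"Content-Type": "application/json",
                 "Authorization": f"Bearer {token}"},
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status
```

The same `push_records` call works for either delivery model: a scheduler can invoke it nightly, or a webhook handler can invoke it when a completion or simulation event arrives.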

Support MSP and Multi-Tenant Reporting Requirements

Managed service providers and organizations managing multiple subsidiaries require tenant-level data aggregation, cross-tenant benchmarking, and white-labeled report output.

The right platform should support the distinct needs of both small businesses and multi-tenant operators, so that even smaller enterprises receive tailored security awareness training and reporting.

A platform that displays a subsidiary's phishing click rate relative to the broader portfolio average and exports that comparison as a client-branded PDF provides MSPs with a differentiated deliverable.

Without these capabilities, multi-tenant operators must manually pull and format reports for each entity separately.
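The tenant-versus-portfolio comparison described above reduces to one calculation per tenant. The Python sketch below assumes each tenant is summarized as hypothetical `(clicks, simulations delivered)` counts.

```python
def tenant_vs_portfolio(tenants: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Each tenant's click rate minus the pooled portfolio rate, in percentage points.

    tenants maps tenant name -> (simulation clicks, simulations delivered).
    Positive values mean the tenant clicks more often than the portfolio overall.
    """
    total_clicks = sum(clicks for clicks, _ in tenants.values())
    total_sent = sum(sent for _, sent in tenants.values())
    if total_sent == 0:
        raise ValueError("no simulations delivered")
    portfolio_rate = total_clicks / total_sent * 100
    return {
        name: clicks / sent * 100 - portfolio_rate
        for name, (clicks, sent) in tenants.items()
        if sent > 0
    }
```

The pooled rate weights large tenants more heavily. If each subsidiary should count equally, an unweighted mean of tenant rates is the alternative.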

Add Security Culture Surveys as a Qualitative Signal Layer

Simulation click rates and training completion percentages measure behavior, but they do not capture whether employees understand why certain actions matter. Periodic security culture surveys, combined with interactive content that teaches people to recognize and respond to threats, address:

- Confidence in recognizing phishing

- Comfort level in reporting suspicious activity

- Perception of security as a shared organizational responsibility

This data surfaces sentiment gaps that behavioral data alone cannot identify. Platforms that present survey results alongside risk score trends allow security teams to find departments where scores are improving but confidence remains low, a gap indicating that further reinforcement is required before behavioral change becomes durable.
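The "improving scores, low confidence" check can be expressed as a filter over two per-department series. The Python sketch below assumes a 1 to 5 survey confidence scale and a hypothetical cutoff of 3.5; both are illustrative choices.

```python
def confidence_gaps(risk_change: dict[str, float],
                    survey_confidence: dict[str, float],
                    min_confidence: float = 3.5) -> list[str]:
    """Departments whose risk is falling but whose survey confidence is still low.

    risk_change:       department -> change in average risk score over the period
                       (negative means risk is falling, i.e. behavior is improving).
    survey_confidence: department -> mean answer to a 1-5 confidence question.
    Departments with no survey data are treated as low confidence.
    """
    return sorted(
        dept for dept, change in risk_change.items()
        if change < 0 and survey_confidence.get(dept, 0.0) < min_confidence
    )
```

Departments returned here are candidates for reinforcement even though their simulation numbers look healthy.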

Human Risk Scoring and Continuous Risk Monitoring as the Reporting Foundation

A human risk score is a dynamic, per-employee vulnerability rating that quantifies the probability of a successful attack against a given individual at any point in time, spanning active and passive signal categories.

Human error, user behavior, and employees' performance are key inputs to this scoring, as they directly influence an organization's exposure to cyber threats.

Active signals derive directly from employee behavior under simulated conditions, including:

- Whether an employee clicked a spear-phishing email

- Whether the incident was reported using a Phish Alert Button

- Whether the employee clicked repeated simulations over time despite prior training

- Whether the employee fully completed training within a reasonable time

Passive signals add depth that behavioral data alone cannot capture, including:

- OSINT data reflecting what an attacker could discover about an employee from LinkedIn, professional directories, or public databases

- Credential breach records indicating whether their email address or password appears in known breach dumps

- Shadow IT behavior, such as inputting sensitive data into unauthorized AI tools

Why This Differs From Legacy Completion-Rate Reporting

This approach fundamentally distinguishes modern security awareness training platform reporting and analytics from the completion-rate dashboards that defined the previous decade of Security Awareness Training (SAT) tools.

Legacy SAT platforms report program activity rather than the state of individual vulnerabilities.

To illustrate, a 94% training completion rate communicates nothing about whether those employees can identify a deepfake vishing call or resist a business email compromise (BEC) attempt under deadline pressure.

Risk scores shift the reporting layer from a backward-looking compliance record to a forward-looking threat signal that identifies precisely where exposure is concentrated at any given time.

Measurable risk reduction and actionable analytics are what set modern platforms apart, enabling organizations to demonstrate real progress in reducing human-layer threats rather than just tracking participation.

How Risk Scores Trigger Automated Remediation

The reporting layer becomes operational when risk thresholds connect directly to training enrollment. When an employee's score crosses a defined threshold, triggered by a simulation failure, a newly discovered OSINT exposure, or a credential appearing in a breach database, the platform automatically enrolls that individual in targeted microlearning without requiring analyst intervention.
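The threshold-to-enrollment rule can be sketched in a few lines of Python. The threshold value, the trigger names, and the module names below are hypothetical placeholders, not Adaptive Security's configuration.

```python
from dataclasses import dataclass, field

RISK_THRESHOLD = 70.0  # hypothetical threshold on a 0-100 scale

# Hypothetical mapping from the signal that raised the score to a microlearning module.
REMEDIATION_MODULES = {
    "simulation_failure": "phishing-refresher",
    "osint_exposure": "reducing-public-footprint",
    "credential_breach": "password-and-mfa-hygiene",
}


@dataclass
class Employee:
    user_id: str
    risk_score: float
    enrollments: list[str] = field(default_factory=list)


def apply_risk_update(emp: Employee, new_score: float, trigger: str) -> bool:
    """Update the score; auto-enroll only when the threshold is newly crossed."""
    if trigger not in REMEDIATION_MODULES:
        raise ValueError(f"unknown trigger: {trigger}")
    crossed = emp.risk_score < RISK_THRESHOLD <= new_score
    emp.risk_score = new_score
    if crossed:
        module = REMEDIATION_MODULES[trigger]
        if module not in emp.enrollments:
            emp.enrollments.append(module)
    return crossed
```

The rule fires on the upward crossing rather than on every update above the threshold. This avoids re-enrolling the same person each time a new signal arrives while their score is already high.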

Adaptive content and behavioral analytics work together to ensure that automated remediation is both relevant and effective, dynamically adjusting training based on user actions and risk signals.

Adaptive Security's Risk Monitoring and Mitigation module applies this model across 1,000+ OSINT data points per employee, feeding a unified risk score that drives automated remediation at scale. The same metrics that power that score also show whether risk is declining, and that evidence is what separates a defensible board report from a completion percentage.

What to Look For When Evaluating a Security Awareness Training Platform's Reporting and Analytics Capabilities

Evaluating a security awareness training platform's reporting and analytics layer requires moving through two distinct tiers: must-have capabilities that establish baseline visibility, and advanced capabilities that produce the granular risk intelligence CISOs need to make budget and program decisions.

Each candidate solution should also be assessed against the organization's specific needs and risk profile.

Confirm the Must-Have Security Awareness Training Platform Reporting Baseline

Every platform under evaluation should produce, at a minimum, the following capabilities:

- Phish-prone percentage and click rate trending over time

- Phishing reporting rate tracking

- Per-employee risk profiles

- Department- and role-level segmentation

- Automated scheduled report delivery

- Compliance audit exports in CSV and PDF formats

- Basic SIEM integration

- Reporting on scenario-based learning and interactive training engagement

These capabilities define functional visibility. Without them, a security leader cannot address the board's question of whether employees are improving. Platforms that lack role-level segmentation require administrators to export raw data and manually build reports, undermining the operational purpose of a dedicated analytics layer.

Assess the Advanced Security Awareness Training Platform Analytics Capabilities

Advanced capabilities distinguish platforms that track activity from those that produce actionable intelligence.

Priority should be given to human risk scoring using a multi-input calculation that incorporates simulation behavior, training completion, OSINT exposure, and credential breach signals, evaluated collectively rather than in isolation. Additional advanced capabilities worth evaluating include:

- Adaptive content delivery that personalizes training in real time based on user behavior and performance data

- Behavioral analytics to identify trends and risky patterns across the workforce

- Human risk management platform features that combine automated training, risk assessment, phishing simulations, and comprehensive reporting for ongoing, measurable risk reduction

- Simulation-to-incident correlation

- BI tool integration via API

- Multi-tenant or MSP reporting

- Role-based access control for dashboard views

- Security culture survey integration

- Pre/post training knowledge assessment tracking with long-term retention measurement

Measure Long-Term Knowledge Retention

Employees who score well the day after completing a module often revert to baseline behavior within 60 days, a pattern researchers identify as knowledge decay.

Vendors should be asked specifically how the platform supports re-testing at 30, 60, and 90 days post-training, and whether the system tracks retention curves over time rather than completion timestamps alone. Tracking employees' performance and user behavior over time helps ensure that knowledge is retained and that training leads to lasting behavioral change.

Platforms that map only completion rates to training outcomes provide compliance information rather than behavioral evidence. The 30/60/90-day re-test cadence represents the minimum standard needed to distinguish durable behavior change from short-term assessment performance.
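A retention curve compares each re-test score with the immediate post-training score. The Python sketch below computes that ratio at 30, 60, and 90 days. The 80% "durable" cutoff in `is_durable` is a hypothetical rule for illustration, not an industry standard.

```python
def retention_curve(post_training_score: float, retests: dict[int, float]) -> dict[int, float]:
    """Fraction of the immediate post-training score retained at each re-test.

    retests maps days after training (e.g. 30, 60, 90) -> re-test score.
    """
    if post_training_score <= 0:
        raise ValueError("post-training score must be positive")
    return {day: retests[day] / post_training_score for day in sorted(retests)}


def is_durable(curve: dict[int, float], day: int = 90, min_retained: float = 0.8) -> bool:
    """Hypothetical rule: behavior change counts as durable if at least
    `min_retained` of the post-training score survives to `day`."""
    return curve.get(day, 0.0) >= min_retained
```

An employee who scored 90 right after training and 81, 72, and 63 at the three re-tests retains 90%, 80%, and 70%. That profile looks strong at 30 days but fails the 90-day check, which is the knowledge-decay pattern described above.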

Verify Data Privacy and Anonymization Controls

Platforms vary significantly in how they handle individual-level behavioral data in analytics. Organizations operating under GDPR, or equivalent privacy regulations, require an analytics layer that supports configurable anonymization, enabling aggregated reporting without exposing individually identifiable risk data to unauthorized dashboard viewers.

Privacy controls in leading security awareness training platform reporting and analytics solutions are designed to address both data protection and compliance requirements, ensuring that sensitive information is safeguarded and regulatory standards are met.

Under the GDPR, processing employee behavioral data for monitoring purposes requires a documented lawful basis, thereby making the platform's data-handling architecture a procurement-stage legal consideration rather than an implementation detail.
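Two common building blocks for privacy-aware analytics are keyed pseudonymization of user IDs and suppression of aggregates drawn from very small groups. The Python sketch below shows both. The minimum group size of 5 is a hypothetical choice. Under the GDPR, pseudonymized data remains personal data, so this sketch does not replace a documented lawful basis.

```python
import hashlib
import hmac
from collections import defaultdict
from typing import Optional

MIN_GROUP_SIZE = 5  # hypothetical: hide any group smaller than this


def pseudonymize(user_id: str, secret_key: bytes) -> str:
    """Keyed hash so dashboards can link records without showing identities.
    Pseudonymized data is still personal data under the GDPR."""
    return hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()[:16]


def aggregate_click_rates(rows: list[tuple[str, bool]],
                          min_group: int = MIN_GROUP_SIZE) -> dict[str, Optional[float]]:
    """Department-level click rates from one row per employee per campaign.

    Groups smaller than min_group are suppressed (None) so that individual
    results cannot be inferred from the aggregate.
    """
    totals: dict[str, int] = defaultdict(int)
    clicks: dict[str, int] = defaultdict(int)
    for department, clicked in rows:
        totals[department] += 1
        clicks[department] += int(clicked)
    return {
        dept: clicks[dept] / totals[dept] if totals[dept] >= min_group else None
        for dept in totals
    }
```

Keeping the HMAC key outside the analytics environment means dashboard viewers cannot reverse the pseudonyms by hashing known email addresses.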

Match Reporting Depth to Simulation Coverage

Reporting is only as strong as the data feeding it; platforms that simulate threats exclusively via email generate click-rate data from a single channel, capturing only a fraction of actual human risk exposure.

Reporting depth should encompass phishing, AI-generated cyberattacks, and broader security threats to ensure organizations track and mitigate the most relevant risks.

Multi-channel simulation coverage across email, voice, SMS, and deepfake video generates a broader behavioral signal set, enabling correlation between attack type and employee response, channel-specific risk scoring, and more precise targeting of remedial training.

Simulation coverage and analytics depth must be evaluated together rather than as separate product categories. The specific metrics that surface from multi-channel data ultimately determine whether a program is reducing risk or simply recording it.
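Channel-specific risk scoring starts with failure rates per channel. The Python sketch below computes them from hypothetical `(channel, failed)` events and identifies the weakest channel. The channel names mirror the text, but the event format is an assumption.

```python
from collections import Counter

CHANNELS = {"email", "voice", "sms", "deepfake_video"}


def channel_failure_rates(events: list[tuple[str, bool]]) -> dict[str, float]:
    """Failure rate per simulation channel.

    events: (channel, failed) pairs, where failed means the employee clicked,
    shared data, or complied with the simulated request.
    """
    sent: Counter = Counter()
    failed: Counter = Counter()
    for channel, did_fail in events:
        if channel not in CHANNELS:
            raise ValueError(f"unknown channel: {channel}")
        sent[channel] += 1
        failed[channel] += int(did_fail)
    return {ch: failed[ch] / sent[ch] for ch in sent}


def weakest_channel(events: list[tuple[str, bool]]) -> str:
    """Channel with the highest failure rate (the first one seen wins a tie)."""
    rates = channel_failure_rates(events)
    if not rates:
        raise ValueError("no events")
    return max(rates, key=rates.get)
```

An email-only program would report only the email rate. In data like the test case, that rate could look acceptable while voice simulations fail twice as often.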

Security Awareness Training Reporting and Analytics FAQs

What Is a Phish-Prone Percentage, and How Is It Used to Measure Security Awareness Training Effectiveness Over Time?

Phish-prone percentage (PPP) is the share of employees who click on a simulated phishing lure during a given campaign, often delivered through realistic phishing emails designed to mimic actual phishing threats.

This metric is central to security awareness training platform reporting and analytics: it measures an organization's susceptibility to phishing at a point in time and, when tracked across campaigns, shows the trend rather than a single snapshot.

A single campaign result indicates how many employees interacted with a lure, while a downward PPP trend tracked over 12 months indicates whether training is producing lasting behavioral change.
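For a single campaign, PPP is the number of unique recipients who clicked, divided by all recipients, times 100. The Python sketch below counts repeat clicks by the same person once and ignores click events from IDs that were not recipients. Both behaviors are assumptions about how a given platform defines the metric.

```python
def phish_prone_percentage(recipients: set[str], click_events: list[str]) -> float:
    """PPP for one campaign: unique recipients who clicked / all recipients * 100.

    click_events holds one user ID per click, so repeat clicks by the same
    person are counted once.
    """
    if not recipients:
        raise ValueError("campaign has no recipients")
    unique_clickers = set(click_events) & recipients
    return len(unique_clickers) / len(recipients) * 100
```

Computing this value per campaign and feeding the series into a trend fit produces the 12-month view described above.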

For security leaders, the practical application of PPP is threefold:

- Establishing a pre-training baseline

- Benchmarking against industry peers

- Demonstrating ROI to leadership by connecting percentage-point reductions to reduced breach probability

What Does a Security Awareness Training Platform Need to Satisfy HIPAA, PCI-DSS, and SOC 2 Compliance Audits?

Each framework mandates specific documented evidence, and a security awareness training platform must produce distinct artifacts for each. Robust reporting not only supports audit readiness but also helps organizations demonstrate regulatory compliance with standards such as ISO/IEC 42001 and the NIST AI Risk Management Framework.

- HIPAA: Covered entities must implement a security awareness and training program for all workforce members. Auditors require timestamped training completion records tied to individual employee IDs, content version history, and documentation that training is periodic rather than one-time

- PCI-DSS: Auditors expect records demonstrating that all personnel receive training upon hire and at least annually, including confirmation that employees acknowledge security policies. Completion logs and signed acknowledgment records satisfy this requirement

- SOC 2: Auditors require evidence that personnel understand their security responsibilities. They examine completion records, assessment scores, and evidence of program frequency. Platforms must support export of these records in audit-ready formats, typically timestamped CSV or PDF packages, rather than requiring manual data extraction
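The Python sketch below shows a timestamped CSV export whose columns mirror the evidence listed above: employee ID, content version, completion time, policy acknowledgment, and assessment score. The column names and the input record shape are hypothetical. Whether a given export satisfies a specific auditor depends on that auditor's requests, not on the format alone.

```python
import csv
import io
from datetime import datetime, timezone

EXPORT_FIELDS = ["employee_id", "module", "content_version",
                 "completed_at_utc", "policy_acknowledged", "assessment_score"]


def audit_export(records: list[dict]) -> str:
    """Render training records as CSV with UTC ISO 8601 timestamps."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=EXPORT_FIELDS)
    writer.writeheader()
    for r in sorted(records, key=lambda r: (r["employee_id"], r["completed_at"])):
        completed: datetime = r["completed_at"]
        if completed.tzinfo is None:
            # Naive timestamps are ambiguous in an audit trail; reject them.
            raise ValueError("completed_at must be timezone-aware")
        writer.writerow({
            "employee_id": r["employee_id"],
            "module": r["module"],
            "content_version": r["content_version"],
            "completed_at_utc": completed.astimezone(timezone.utc).isoformat(),
            "policy_acknowledged": "yes" if r["policy_acknowledged"] else "no",
            "assessment_score": r.get("assessment_score", ""),
        })
    return buf.getvalue()
```

Normalizing every timestamp to UTC avoids disputes over when training happened when employees span time zones.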

How to Calculate a Human Risk Score in a Security Awareness Training Platform, and What Inputs Does It Use?

A human risk score is a per-employee composite metric that quantifies an individual's vulnerability state at a given moment, rather than measuring program activity. It replaces static completion rates with a dynamic signal that changes as employee behavior changes.

User behavior, employee performance, and behavioral analytics are key inputs for human risk scoring, enabling platforms to analyze actions and vulnerabilities to deliver more effective, personalized training. The inputs that drive a well-constructed human risk score typically include:

- Simulation behavior: Click rate history across phishing simulations, phishing reporting rate, repeat-click history, and response speed, indicating how quickly an employee acted on a lure

- Training completion and assessment scores: Whether the employee has completed assigned modules, pre- and post-training knowledge assessment delta, and long-term retention scores at 30, 60, and 90 days post-training

- Open-source intelligence (OSINT) exposure: Publicly available information about an employee, including job title, LinkedIn activity, and published email addresses, that an attacker could use to craft a targeted spear phishing or vishing attack

- Credential breach history: Whether the employee's credentials have appeared in known data breach repositories

- Behavioral signals: MFA adoption status, shadow IT tool usage, and AI application behavior, where the platform tracks them

The score aggregates these inputs into a single risk figure per employee, enabling security teams to identify and prioritize the highest-risk individuals for targeted intervention without waiting for the next simulation cycle.
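The Python sketch below shows one way to aggregate the inputs listed above into a 0 to 100 score. It is not Adaptive Security's scoring model or any vendor's. The component formulas and the weights in `WEIGHTS` are hypothetical and would need calibration against an organization's own incident data.

```python
from dataclasses import dataclass

# Hypothetical weights (sum to 1.0); each organization would tune its own.
WEIGHTS = {"simulation": 0.35, "training": 0.20, "osint": 0.20,
           "breach": 0.15, "behavior": 0.10}


@dataclass
class RiskInputs:
    click_rate: float           # share of simulations clicked, 0-1
    report_rate: float          # share of simulations reported, 0-1
    repeat_clicker: bool        # clicked again after prior training
    training_complete: bool     # all assigned modules finished
    retention_90d: float        # share of post-training score kept at 90 days, 0-1
    osint_exposure: float       # normalized public-footprint exposure, 0-1
    credential_breached: bool   # credentials found in a known breach
    mfa_enabled: bool


def human_risk_score(x: RiskInputs) -> float:
    """Weighted composite on a 0-100 scale; higher means more vulnerable."""
    for name in ("click_rate", "report_rate", "retention_90d", "osint_exposure"):
        if not 0.0 <= getattr(x, name) <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")
    components = {
        # Each component is scaled to 0-1, where 1 is the riskiest value.
        "simulation": 0.5 * x.click_rate + 0.3 * (1 - x.report_rate)
                      + 0.2 * x.repeat_clicker,
        "training": 0.5 * (not x.training_complete) + 0.5 * (1 - x.retention_90d),
        "osint": x.osint_exposure,
        "breach": float(x.credential_breached),
        "behavior": 0.0 if x.mfa_enabled else 1.0,
    }
    return 100 * sum(WEIGHTS[k] * v for k, v in components.items())
```

A linear weighted sum keeps the score explainable: each component's contribution can be shown next to the total, so an employee or manager can see which input moved the number.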

Adaptive Security's Risk Monitoring and Mitigation capabilities demonstrate how human risk scoring drives automated remediation.

How Can Security Awareness Training Analytics Be Integrated With SIEM or SOAR Platforms for Incident Correlation?

Security awareness training analytics can be pushed to a security information and event management (SIEM) or security orchestration, automation, and response (SOAR) platform via API or webhook, allowing SOC teams to correlate human behavioral data with real-time threat telemetry.

A human risk management platform enhances this process by providing comprehensive tracking and response capabilities for security threats, ensuring organizations can proactively address human-related vulnerabilities.

The core use case is employee-level incident correlation. When a user clicks a simulated phishing email in week one and then triggers a credential access alert in the SIEM in week three, the SIEM can surface that prior simulation record as context, providing analysts with a behavioral profile before an investigation begins.

In a SOAR context, human risk scores can feed automated playbooks. An employee whose risk score crosses a defined threshold can trigger automatic enrollment in targeted training, a manager notification, or a temporary access restriction, all without manual intervention.

The practical integration pathway for most platforms involves a results API that exposes per-user simulation outcomes, training completion records, and risk scores in a machine-readable format that SIEM tools can ingest. Platforms that support syslog or CEF output provide the broadest compatibility across SIEM environments.
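The Python sketch below formats a simulated-phish click as a Common Event Format (CEF) line suitable for syslog forwarding. It follows the CEF header layout (`CEF:Version|Vendor|Product|Version|Signature ID|Name|Severity|Extension`) and the escaping rules for header and extension fields, and it uses the standard extension keys `rt`, `duser`, `cs1`/`cs1Label`, and `cn1`/`cn1Label`. The vendor and product names, the signature ID, and the choice of `duser` for the targeted employee are assumptions.

```python
VENDOR, PRODUCT, VERSION = "ExampleVendor", "ExampleSAT", "1.0"  # placeholders


def _escape_header(value: str) -> str:
    # CEF header fields escape backslash and pipe.
    return value.replace("\\", "\\\\").replace("|", "\\|")


def _escape_extension(value: str) -> str:
    # CEF extension values escape backslash and equals; line breaks become \r / \n.
    return (value.replace("\\", "\\\\").replace("=", "\\=")
                 .replace("\r", "\\r").replace("\n", "\\n"))


def simulation_click_cef(user: str, campaign: str, risk_score: float,
                         epoch_ms: int, severity: int = 5) -> str:
    """Format one simulated-phish click as a CEF line."""
    if not 0 <= severity <= 10:
        raise ValueError("CEF severity must be 0-10")
    header = "|".join([
        "CEF:0",
        *(_escape_header(v) for v in (VENDOR, PRODUCT, VERSION)),
        "sim-click",                     # signature (event class) ID
        "Phishing simulation clicked",   # human-readable event name
        str(severity),
    ])
    extension = " ".join([
        f"rt={epoch_ms}",                    # event time, ms since epoch
        f"duser={_escape_extension(user)}",  # targeted employee
        "cs1Label=campaign",
        f"cs1={_escape_extension(campaign)}",
        "cn1Label=riskScore",
        f"cn1={round(risk_score)}",          # cn1 is an integer field
    ])
    return f"{header}|{extension}"
```

Once these events reach the SIEM alongside endpoint telemetry, the same user key (`duser` here) can drive the employee-level correlation described above.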

This transforms training data from a program-management artifact into active threat intelligence.

What Is the Difference Between Coverage Metrics and Behavioral Metrics in a Security Awareness Training Program?

Coverage metrics confirm that training activity occurred, and behavioral metrics confirm that employee behavior changed as a result. Both are necessary, but conflating them represents the most common reporting error security teams make.

Coverage metrics include training completion rate, course-level progress, time-to-completion, and quiz pass rate, all of which address whether employees received the training.

A 95% completion rate confirms to an auditor that content was delivered, but it does not indicate to a CISO whether employees can recognize a business email compromise (BEC) attempt in their inbox the following week.

Behavioral metrics include phish-prone percentage trend over time, phishing reporting rate, repeat-clicker rate, and knowledge retention scores measured at 30 and 90 days post-training.

These address whether training changed employee behavior under pressure. A rising phishing reporting rate, in which employees actively flag suspicious messages rather than ignore them, is a behavioral signal with direct operational value. It indicates that the human layer is functioning as an active detection input rather than a compliance checkbox.

A mature security awareness program tracks both categories, with coverage metrics meeting audit requirements and behavioral metrics demonstrating that the program reduces real-world risk.
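To show why the two categories must be reported side by side, the Python sketch below computes one coverage metric and one behavioral metric over the same population. Defining the repeat-clicker rate as the share of assigned employees with two or more simulation clicks in the period is an assumption, since platforms define it differently.

```python
def coverage_and_behavior(assigned: set[str], completed: set[str],
                          clicks_per_user: dict[str, int]) -> dict[str, float]:
    """One coverage metric and one behavioral metric over the same population.

    completion_rate:     share of assigned employees who finished training.
    repeat_clicker_rate: share of assigned employees who clicked two or more
                         simulations in the period (definition is an assumption).
    """
    if not assigned:
        raise ValueError("no employees assigned")
    repeat_clickers = {u for u in assigned if clicks_per_user.get(u, 0) >= 2}
    return {
        "completion_rate": len(completed & assigned) / len(assigned),
        "repeat_clicker_rate": len(repeat_clickers) / len(assigned),
    }
```

With four employees who all completed training but one who clicked three simulations, the result is a 100% completion rate alongside a 25% repeat-clicker rate. That combination is audit-ready but not yet risk-reducing.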

Analytics that connect behavioral change to real outcomes, such as fewer successful incidents or faster reporting of real-world threats, help organizations prove that their security awareness training platform is delivering tangible, measurable improvements against current attack techniques.

A reporting layer that surfaces both categories, segmented by department, role, and tenure, distinguishes a program that produces compliance documentation from one that produces measurable risk reduction, which is the standard against which every security awareness platform should be evaluated.

Experience Security Awareness Training Platform Reporting and Analytics with Adaptive Security

Measuring completion rates alone leaves the most consequential risks invisible. Adaptive Security's reporting and analytics layer translates simulation behavior, training outcomes, and OSINT exposure into per-employee risk scores, giving security teams the visibility to act before an incident occurs.

Selecting the right platform ensures compliance requirements are met and delivers measurable risk reduction across the organization. Book a demo to see the dashboards in action.

As experts in cybersecurity insights and AI threat analysis, the Adaptive Security Team is sharing its expertise with organizations.
