This article examines the growing threat of AI deepfake attacks in corporate cybersecurity, analyzing how cybercriminals use deepfake AI to target organizations and presenting concrete defensive strategies. A recent example is the $25 million deepfake cyberattack against an engineering firm.

The article addresses the following topics:

- What is an AI deepfake, and how does it work

- Why deepfake AI is one of the biggest cybersecurity threats in 2026

- How cybercriminals use AI deepfakes to target companies

- The biggest challenges in deepfake AI detection and protection

- How to protect your organization from AI deepfake attacks

What Is an AI Deepfake?

An AI deepfake is a synthetically generated image, video, or audio file in which artificial intelligence is used to depict an individual saying or doing something without their knowledge or consent.

In the context of cybercrime, deepfakes are primarily deployed to enhance the credibility of phishing campaigns, thereby increasing the success rate of social engineering attacks.

Deepfake AI vs. Other Fake Media

The primary distinction between AI deepfakes and other forms of fabricated media lies in their degree of automation and realism.

Traditional fake media relies on manual editing techniques, such as image manipulation, video splicing, or selective quotation. This approach imposes inherent limitations on the output, as it constrains cybercriminals to working within the bounds of existing material. Furthermore, traditionally fabricated content cannot be modified in real time.

AI deepfakes, by contrast, use existing media to train generative models, enabling the synthetic creation and manipulation of content with a significantly higher degree of freedom and perceived authenticity.

This grants threat actors precise control over the depicted speech and behavior of their targets. Critically, these models are capable of operating in real time, allowing them to adapt dynamically to live interactions and substantially amplifying the threat they represent.

How Does an AI Deepfake Work?

An AI deepfake works by training artificial intelligence models to learn and replicate an individual's communication style. The specific methodology varies with the medium employed, whether audio or video. The central risk stems from the degree of realism that modern deepfake technology can achieve.

Deepfakes commonly rely on Generative Adversarial Networks (GANs), a machine learning framework designed to produce highly realistic synthetic data, including images, audio, and video. A GAN pairs two models in a continuous feedback loop: a generator produces synthetic media, and a discriminator evaluates it against genuine samples to identify inconsistencies. The generator incorporates that feedback and iterates until the fabricated content becomes visually or audibly difficult to distinguish from the source material.
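The adversarial feedback loop can be illustrated with a deliberately tiny sketch. This is not a deepfake model: it assumes one-dimensional "media" (numbers drawn around a real mean of 4.0), a generator with a single learnable parameter, and a logistic-regression discriminator. All names and hyperparameters here are hypothetical choices made for illustration only.

```python
import math
import random


def sigmoid(t: float) -> float:
    """Numerically stable logistic function."""
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)


def train_toy_gan(real_mean=4.0, steps=4000, batch=32, lr=0.05, seed=0):
    """Alternate discriminator and generator updates on 1-D data."""
    rng = random.Random(seed)
    mu = 0.0         # generator parameter: mean of the fake samples
    w, b = 0.0, 0.0  # discriminator parameters: D(x) = sigmoid(w * x + b)

    for _ in range(steps):
        real = [rng.gauss(real_mean, 1.0) for _ in range(batch)]
        fake = [mu + rng.gauss(0.0, 1.0) for _ in range(batch)]

        # Discriminator step: minimise -log D(real) - log(1 - D(fake)).
        gw = gb = 0.0
        for x in real:
            d = sigmoid(w * x + b)
            gw += -(1.0 - d) * x
            gb += -(1.0 - d)
        for x in fake:
            d = sigmoid(w * x + b)
            gw += d * x
            gb += d
        w -= lr * gw / batch
        b -= lr * gb / batch

        # Generator step: minimise -log D(fake), i.e. learn to fool D.
        gmu = 0.0
        for x in fake:
            d = sigmoid(w * x + b)
            gmu += -(1.0 - d) * w
        mu -= lr * gmu / batch

    return mu, w, b
```

Calling `train_toy_gan()` returns the generator's learned mean along with the discriminator's parameters. In this toy setting the generator's mean drifts from 0.0 toward the real mean as the discriminator's feedback becomes less and less able to separate the two sources, which is the same dynamic the paragraph above describes at a much larger scale.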

This process can be summarized in three core steps:

Step 1: AI Deepfake Data Collection

Threat actors collect existing media samples of a target individual and use them to train the deepfake AI model. Sufficient training data is frequently accessible through both public sources and previously compromised materials. When targeting a corporate figure such as a chief executive officer, threat actors may draw from the following sources:

- Publicly available interviews

- Appearances in podcasts, webinars, and professional workshops

- Earnings calls and recorded video conferences

- Social media content

- Internal recordings obtained through prior unauthorized access

The volume and quality of available training material directly determine the fidelity of the resulting deepfake model.

Step 2: AI Deepfake Training

All collected material is used to train the AI deepfake model to replicate the target's communication patterns.

Modern deepfake models extend beyond vocal tone and facial appearance. Depending on the volume and nature of the available training data, they can also replicate the subtle non-verbal cues that distinguish one individual from another.

Step 3: AI Deepfake Content Creation

Once the model has been sufficiently refined, it can be used to generate AI deepfake content. Practical applications include voicemails and pre-recorded video messages impersonating corporate executives.

The most operationally significant application of deepfake AI for cybersecurity purposes, however, is its deployment in live video calls. In this context, deepfakes function as a vector for heightened psychological pressure, preventing targets from seeking external verification while enabling threat actors to adapt the interaction in real time.

How Long It Takes to Create an AI Deepfake

The time required to produce an AI deepfake ranges from a few minutes to several days, depending on the following factors:

- Type of deepfake: Static deepfake images represent the simplest output and can be generated within minutes, whereas more complex formats require considerably more time. Short audio or video clips may take approximately one hour to produce, while detailed impersonation models can require several days. A full deepfake phishing campaign requires additional time proportional to the number of assets being deployed.

- Available source material: The volume and quality of training data directly affect the efficiency of the creation process. Executives and other public-facing corporate figures present a heightened risk, as their broad media exposure provides threat actors with abundant high-quality material from multiple angles.

- Tools employed: Threat actors may develop custom or semi-custom workflows or rely on commercially available deepfake tools. Custom workflows require more time to configure but afford greater control and produce higher-fidelity results, whereas ready-to-use tools offer speed at the cost of output quality.

- Hardware: Processing power has a direct impact on production time. While high-end hardware is not strictly necessary for cybercriminal purposes, some threat actors do deploy substantial computational resources to improve output quality and reduce production time.

- Objective: A deepfake does not need to achieve perfect realism to be effective in a cybercrime context; it needs only to be sufficiently convincing to deceive the intended target. The objective of organizational security teams is therefore to raise the threshold of employee skepticism, making it progressively more difficult for deepfake content to succeed.

What Is Deepfake-as-a-Service?

Deepfake-as-a-service refers to a growing ecosystem of tools, platforms, and commercial services that enable cybercriminals to construct and deploy deepfake phishing campaigns without requiring specialized technical expertise. This development represents a significant shift in the cybercrime landscape, as it extends the capacity to produce highly convincing fraudulent content to a substantially broader population of potential attackers.

The practical barriers that previously constrained the production of deepfake content, including the time, technical skill, effort, and production quality required, have been largely eliminated by this service model.

For cybercriminals, the objective is not to produce a flawless deepfake, but rather one of sufficient quality to deceive the target under realistic conditions. Deepfake-as-a-service platforms lower the threshold required to meet that standard to a degree that makes such attacks accessible at scale.

How Much Does Deepfake-as-a-Service Cost?

According to Group-IB's 2026 Weaponized AI: Inside the Criminal Ecosystem Fuelling the Fifth Wave of Cybercrime, deepfake-as-a-service offerings are available at prices as low as US$5. Reported pricing across the ecosystem includes US$15 for fully synthetic identities, US$10-US$50 for deepfake image generation services, and up to US$10,000 for renting advanced face-swapping software.

These low price points represent a material economic shift in the viability of deepfake-based attacks. Prior to the proliferation of this service model, producing a convincing deepfake required substantial investments of time and technical expertise, rendering such attacks impractical for most cybercriminals. The commoditization of these capabilities has removed that barrier, significantly improving the cost-to-return ratio of deepfake phishing operations.

The consequences of this shift are reflected in documented attack volume. Resemble AI's Q3 2025 Deepfake Incident Report recorded a 317% increase compared to earlier in 2025, and a 1,500% increase since 2023, underscoring the pace at which this attack category has expanded as a direct result of reduced production costs and increased accessibility.
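Percentage increases of this size are easy to misread, so it can help to convert them into multipliers. The helper below is a minimal illustrative sketch; the function name is hypothetical and does not come from either report.

```python
def growth_multiplier(percent_increase: float) -> float:
    """Convert a percentage increase into a 'times the original' multiplier."""
    return 1.0 + percent_increase / 100.0


print(round(growth_multiplier(317), 2))   # 4.17
print(round(growth_multiplier(1500), 2))  # 16.0
```

Read this way, a 317% increase means a little over four times the earlier figure, and a 1,500% increase means sixteen times the 2023 figure.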

Why Are AI Deepfakes Dangerous in 2026?

Modern AI deepfakes have emerged as a significantly more prevalent cyberattack vector due to AI's transformative impact on the quality and volume of synthetic media production. This advancement represents a critical threshold for cybercriminals: AI-generated deepfakes are no longer a technological novelty designed to attract social media attention, but rather an operational tool of sufficient realism to drive targeted individuals to take concrete action.

Deepfakes are effective because they exploit three fundamental psychological triggers in employees:

- Urgency: Deepfake content is used to construct scenarios that appear to demand an immediate response, reducing the target's capacity for critical evaluation.

- Authority: Deepfakes leverage the likeness of authority figures or familiar individuals to lend credibility to fraudulent requests, increasing the likelihood of compliance.

- Sensory input: Employees have developed a degree of skepticism toward written communications, but the instinct to trust visual and auditory information remains a deeply ingrained human tendency. Deepfakes exploit this bias by presenting fabricated content through inherently trusted sensory channels.

Cases of Deepfake Phishing Attacks

The following cases illustrate the financial and operational impact of AI deepfake attacks on corporate targets.

The most widely documented case involves a multinational engineering firm in which an employee was deceived into transferring $25 million to cybercriminals. The attack was carried out via a fraudulent video call, during which the employee believed they were communicating with the company's chief financial officer. The individual on the call was, in fact, a deepfake. This case demonstrates the immediate and severe financial consequences of deepfake phishing attacks.

A comparable incident targeted an advertising firm, in which cybercriminals orchestrated a meeting between the chief executive officer and individuals posing as fellow executives. The objective of the attack was to compel the executive to authorize a financial transfer or disclose sensitive organizational information.

A further notable case involved an employee at a cybersecurity firm who was similarly targeted through chief executive officer impersonation fraud. This incident is particularly significant as it illustrates the increasing boldness of cybercriminals, who are now willing to target individuals with specialized knowledge of cybersecurity threats and defenses.

Deepfake AI Statistics for 2026

The scale and trajectory of AI deepfake threats are reflected in current industry data.

According to Signicat's 2025 The Battle Against AI-Driven Identity Fraud, deepfake fraud attempts increased by 2,137% over the past three years, rising from 0.1% to 6.5% of all recorded fraud attempts. This growth is attributable to the rapid advancement of AI-driven threat capabilities during this period.

As reported in Entrust's 2025 Identity Fraud Report, deepfake attacks occur every five minutes, underscoring that this is an active and widespread threat rather than an emerging one, and placing fraud prevention among the foremost priorities for security teams globally.

The effectiveness of these attacks compounds the concern. According to Cisco Talos' Incident Response Q1 2025 Quarterly Report, vishing was the most common type of phishing attack observed, accounting for over 60% of the phishing engagements handled by its incident response team.

Verizon's 2025 Data Breach Investigations Report indicates that the human element was a factor in 60% of all data breaches, reinforcing that any comprehensive cybersecurity strategy must address human vulnerabilities, including susceptibility to phishing and social engineering attacks.

These trends are reflected in market projections. According to MarketsandMarkets' 2024 Deepfake AI Report, the global deepfake AI market is forecast to reach $5.13 billion by 2030, representing a compound annual growth rate of 44.5%.
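To put the projection in perspective, the compound annual growth rate can be run backwards to estimate the starting market size it implies. The sketch below assumes a 2024 base year (the report's publication year) and therefore six years of growth; that base year is an assumption for illustration, not a figure stated above.

```python
def implied_base_value(final_value: float, cagr: float, years: int) -> float:
    """Back out the starting value implied by a final value and a CAGR."""
    return final_value / (1.0 + cagr) ** years


# Assumption: growth is measured from 2024 to 2030, i.e. over 6 years.
base = implied_base_value(final_value=5.13, cagr=0.445, years=6)
print(f"Implied base-year market size: ${base:.2f} billion")
```

Under that assumption the forecast implies a base-year market of about $0.56 billion, meaning the market would grow roughly ninefold over the period.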

Industries Most Targeted by Deepfake Attacks

Cybercriminals select targets based on a combination of potential financial return and the degree to which operational conditions within a given industry facilitate successful social engineering attacks. The following sectors represent the most frequently targeted industries.

- Financial services: The financial sector is a primary target due to the direct monetization potential of successful attacks. Cybercriminals commonly impersonate executives through deepfake audio and video to authorize fraudulent transfers, as illustrated in the cases documented above.

- Healthcare: The healthcare sector combines high-pressure operational environments with repositories of sensitive patient data. The potential disruption of life-critical services further amplifies the severity of successful attacks in this industry.

- Technology: In addition to holding valuable intellectual assets, technology organizations operate at an accelerated pace that incentivizes rapid decision-making. The sector's heavy reliance on remote communication creates conditions particularly conducive to deepfake impersonation attacks.

- Manufacturing: Manufacturing organizations depend on interconnected operational systems whose disruption can produce significant material and financial damage. Decentralized physical locations further increase vulnerability to communication-based fraud.

- Legal: Legal firms handle substantial volumes of sensitive and privileged information within communication workflows that place a high premium on personal trust. Impersonation of legal counsel or high-value clients may provide cybercriminals with access to confidential case materials or sensitive organizational data.

- Retail: The retail sector presents one of the largest attack surfaces across all industries, due to a high employee headcount, extensive third-party vendor and supplier interactions, and decentralized organizational structures.

- Education: Educational institutions typically operate on open, collaborative models with large and diverse user bases. In addition to impersonating faculty, cybercriminals may target students, whose personal images are frequently and publicly available on social media.

Vulnerability is determined not only by industry but also by organizational role. Employees in financial functions, for example, represent high-value targets regardless of the sector in which they operate.

Key takeaway: The role of the individual is therefore as significant a factor as industry classification in assessing deepfake attack risk.

For security teams seeking further guidance on countering AI-driven phishing campaigns, the complete phishing guide presents ten practical mitigation strategies.

The Main Types of AI Deepfakes

The following represent the primary categories of AI deepfakes currently employed by threat actors in corporate cybercrime.

- Audio deepfakes: Synthetic audio content generated to replicate an individual's voice, enabling cybercriminals to fabricate spoken statements. This format is particularly effective due to its relatively low production cost and ease of replication at scale.

- Video deepfakes: Synthetic video content generated to depict an individual saying or performing actions of the cybercriminal's choosing. As production barriers continue to decrease, video deepfakes are becoming an increasingly prevalent attack format. Their effectiveness derives from the additional layer of perceived authenticity that visual content provides.

- Face-swapping deepfakes: A subcategory of video deepfakes in which one individual's facial features are digitally superimposed onto another person's body, enabling impersonation within existing video footage.

- Lip-sync deepfakes: A format in which the mouth movements of an individual in an existing video are altered to synchronize with fabricated audio, creating the appearance that the individual is delivering statements they never made.

- Deepfake overlays: Technology that enables the real-time manipulation of an individual's appearance during live video calls, allowing cybercriminals to impersonate a target without pre-recorded content.

- Image deepfakes: Synthetically generated static images produced to meet the specific needs of a given cyberattack. Image deepfakes are particularly valuable as supporting documentation in phishing campaigns. They are faster and require less effort to produce than manually edited ones, making them a highly efficient tool for cybercriminal operations.

- Live deepfakes: Real-time deepfake technology that replicates an individual's likeness and adapts dynamically to live interactions. This format requires the greatest investment of time and resources but represents one of the most dangerous tools available to cybercriminals, particularly in the context of high-value spear-phishing operations.

Generative AI Threats Beyond Deepfake

Generative AI threats are being leveraged across the full spectrum of cybercrime, both by enabling new attack vectors and by augmenting established methods used by cybercriminals.

- Phishing Emails: Large language models (LLMs) increase the speed and quality of phishing email generation while eliminating common indicators of fraudulent communication, such as grammatical errors.

- AI-Powered OSINT Profiling: Cybercriminals utilize Open Source Intelligence (OSINT) to gather information and refine the targeting of attacks. AI significantly reduces the time and technical effort required to conduct this research.

- Identity Fraud: AI systems are capable of generating fully synthetic identities and fabricated personas for use in fraudulent activities, including falsified job applications and account creation.

- AI-Generated Malicious Code: The capacity for LLMs to produce functional malicious code in response to plain-language requests substantially lowers the technical barrier to entry for conducting cyberattacks.

- Prompt Injection and LLM-Based Attacks: AI models themselves have emerged as a distinct cyberattack surface, as cybercriminals seek to exploit vulnerabilities in internally deployed AI tools through techniques such as prompt injection.

- AI Agents: Autonomous AI agents represent an advanced evolution in AI-enabled cyberattacks. These systems can independently browse the web, generate code, and execute deployments with minimal human intervention. In late 2025, Anthropic reported disrupting what it described as the first documented large-scale cyberattack executed without substantial human intervention.

How Do Cybercriminals Use an AI Deepfake in Phishing Scams Against Companies?

An AI deepfake presents distinct challenges across industries and organizational roles, as cybercriminals continuously adapt their methods to target specific victims. Underlying these varied attack scenarios is a consistent strategic intent: to construct scams that feel personal, credible, and difficult to challenge, while remaining consistent with the target's professional context.

- Personalization: Generative AI enables cybercriminals to craft highly targeted communications that reference an employee by name, cite their specific role, or incorporate details related to personal or organizational events. This specificity increases the perceived legitimacy of the attack.

- Believability: Advances in deepfake quality substantially increase the credibility of fraudulent communications. This effect is compounded when cybercriminals coordinate attacks across multiple channels simultaneously. An employee who receives a fraudulent email, phone call, and live video interaction, all presenting a consistent narrative, is significantly more likely to accept the scenario as authentic.

- Resistance to Scrutiny: Cybercriminals employ a range of psychological techniques to suppress critical evaluation by the target. These include initiating contact during periods of distraction, imposing artificial urgency to eliminate decision-making time, and timing outreach to coincide with moments of reduced alertness, such as the end of the workday or high-volume work periods. The use of authority figures further discourages employees from questioning instructions or raising concerns about potential errors.

- Role-Aligned Targeting: Cybercriminals design scams that align with the professional responsibilities of the intended target. A financial analyst approached with a request to authorize a third-party payment, or an HR professional engaged in what appears to be a routine recruiting interaction, may not immediately recognize the anomaly. The same scenario presented to a mismatched role would raise immediate suspicion.

The following sections present concrete examples of how deepfake-based attacks are currently being deployed against organizations.

AI Deepfake Videos of Executives

AI-generated deepfake videos of executives represent one of the most consequential phishing threats facing organizations today, with the potential to target virtually any company regardless of size or sector. Given that executives serve as the public faces of their organizations, substantial volumes of audio and video material are readily available to cybercriminals for use in constructing convincing deepfake content.

These videos are designed to manipulate employees into taking unauthorized actions. The most prevalent objective is financial fraud, though attacks may equally target sensitive organizational data, access credentials, or, in certain contexts, market integrity.

Executive deepfake scams demonstrate a high rate of effectiveness because they activate multiple psychological vulnerabilities simultaneously. Employees are conditioned to comply with directives from senior leadership and are frequently reluctant to question instructions issued by an executive or equivalent authority figure. This reluctance is further reinforced by the fear of professional consequences associated with inaction or error.

Key takeaway: A video communication or live call purportedly from an executive can no longer be considered a sufficient or reliable method of identity verification.

AI Deepfake in Voice Authentication

Voice authentication has historically been regarded as a reliable and straightforward method of identity verification. However, the emergence of AI-powered voice cloning has fundamentally undermined this assumption. Cybercriminals can now generate voice clones capable of bypassing security systems and organizational workflows that rely solely on vocal identity as a verification mechanism.

The effectiveness of voice cloning stems from its ability to replicate not only the general characteristics of an individual's voice but also the subtle attributes that distinguish it, including tone, pitch, cadence, accent, pronunciation, speech patterns, and verbal mannerisms. This level of fidelity makes synthetic voice output difficult to distinguish from authentic speech under standard verification conditions.

From an organizational security perspective, many teams do not currently employ formal voice verification protocols. Where such protocols are in place, contemporary voice cloning technology may not achieve complete bypass in every instance; however, it is sufficiently advanced to produce a credible imitation, particularly in the context of targeted attacks where the victim has been specifically researched in advance.

While deepfake AI can circumvent certain biometric solutions, formal voice authentication systems remain a viable layer of defense when combined with other controls, as many vendors have incorporated anti-spoofing and liveness detection into their platforms.

Key takeaway: In both formal verification systems and informal communication contexts, voice authentication can no longer be treated as inherently secure. This limitation is expected to become more pronounced as voice synthesis technology continues to advance.

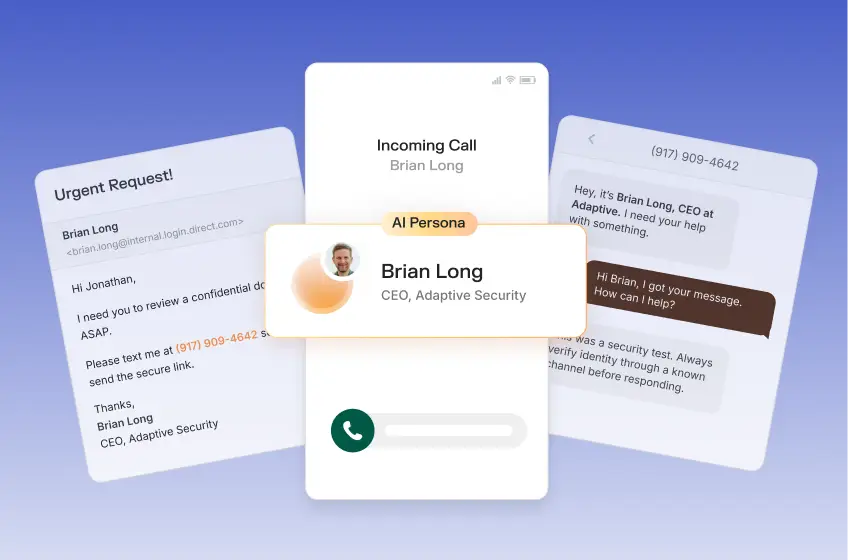

AI Deepfake in Multi-Channel Phishing Attacks

AI deepfakes are especially powerful in multi-channel phishing scams, as cybercriminals no longer rely on a single email or link as their sole phishing lure.

Many phishing scams against companies are coordinated, multi-channel campaigns spanning email, phone calls, SMS, voice messages, video calls, and more. The objective is to construct a consistent fraudulent narrative across multiple communication channels.

Consider a scenario in which an employee receives a fraudulent email, followed by a phone call and a voice message, all consistent with one another. The risk is further compounded by threats such as thread hijacking, which make these messages harder to detect.

The sections that follow examine how AI deepfake attacks are deployed against specific business functions and sectors, including finance, healthcare, HR, and IT, and the vulnerabilities cybercriminals exploit in each.

Key takeaway: Multi-channel deepfake phishing campaigns require a structural response — not just individual recognition skills, but consistently enforced processes for handling sensitive requests regardless of apparent source.

AI Deepfake Payment Approvals and Other Finance Scams

AI deepfake financial scams are a preferred method among cybercriminals, as they directly target the primary objective of most cyberattacks: financial gain. By impersonating a range of organizational stakeholders, including executives, vendors, and legal contacts, cybercriminals can fabricate scenarios to trigger unauthorized financial transfers.

The range of scenarios that cybercriminals may employ to manipulate financial teams into executing fraudulent payments is effectively limitless. Attempting to catalog every possible attack scenario is not a viable security strategy, as cybercriminals continuously develop and refine new methods. Any enumeration of known tactics would be incomplete by definition and would risk creating a false sense of preparedness.

For this reason, the objective of deepfake protection is not to anticipate every possible attack scenario, but to establish layered security controls around high-value individuals and sensitive financial processes. This approach ensures that protective measures remain effective regardless of the specific method employed by cybercriminals.
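One way to picture scenario-independent controls is as a release rule that ignores how convincing the request looked and checks only whether the process was followed. The sketch below is a hypothetical policy, not a recommended standard: the field names, the US$10,000 dual-control threshold, and the callback requirement are all assumptions chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import Set


@dataclass
class PaymentRequest:
    amount_usd: float
    beneficiary_known: bool          # beneficiary already on the approved vendor list
    callback_verified: bool = False  # confirmed via a number from the internal directory
    approvers: Set[str] = field(default_factory=set)


def may_release(req: PaymentRequest, dual_control_threshold: float = 10_000.0) -> bool:
    """Return True only if the process controls are satisfied.

    The channel the request arrived on (email, voice, video) is deliberately
    not an input: a convincing call never substitutes for the checks below.
    """
    if not req.callback_verified:
        return False
    needs_two = req.amount_usd >= dual_control_threshold or not req.beneficiary_known
    required = 2 if needs_two else 1
    return len(req.approvers) >= required
```

Under this rule, a large transfer to a new beneficiary requested on a live video call would be held until an independent callback succeeds and two distinct approvers sign off, regardless of who appeared to be on the call.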

AI Deepfake in Healthcare

In its 2025 Cybersecurity Year in Review, the American Hospital Association reported that more than 33 million Americans were affected by healthcare data breaches over the preceding year, establishing healthcare as one of the most heavily targeted sectors.

Two primary factors account for this concentration of attacks. First, healthcare organizations maintain repositories of highly sensitive and monetizable personal data. Second, successful attacks can disrupt services essential to patient survival, giving cybercriminals significant leverage.

The predominant deepfake-related risk in healthcare involves AI-generated impersonation to obtain confidential patient data and prescription information, either by assuming the identity of clinical staff or, in some cases, of patients themselves. This vulnerability is compounded by the operational culture of the healthcare environment, in which urgency is a routine condition, and employees frequently operate under sustained pressure.

Another serious concern involves the use of AI to manipulate patient diagnostic data. Attackers who gain unauthorized access to medical imaging systems can use deepfake technology to alter diagnostic images, with the potential to mislead practitioners into rendering inaccurate and potentially life-threatening diagnoses.
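A common general-purpose safeguard against this kind of tampering is to attach a keyed integrity tag to each image at acquisition and verify it before the image is read. The following is a minimal sketch using Python's standard `hmac` and `hashlib` modules; the function names are hypothetical, and it assumes the signing key is stored outside the imaging system an attacker might reach.

```python
import hashlib
import hmac


def sign_image(image_bytes: bytes, key: bytes) -> str:
    """Compute an HMAC-SHA256 tag over the raw image bytes."""
    return hmac.new(key, image_bytes, hashlib.sha256).hexdigest()


def is_unaltered(image_bytes: bytes, key: bytes, expected_tag: str) -> bool:
    """Check the image against the tag recorded at acquisition time."""
    return hmac.compare_digest(sign_image(image_bytes, key), expected_tag)
```

Because the tag depends on a secret key, an attacker who alters the stored image cannot simply recompute a matching tag without also compromising the key. This detects alteration after acquisition; it does not help if the image was fabricated before the tag was created.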

Lastly, AI-enabled attacks in healthcare can also involve the fabrication of insurance claims and fraudulent billing submissions. These attacks follow a pattern similar to financial scams in other sectors, exploiting trust and process gaps within administrative workflows.

AI Deepfake in HR

Human resources departments are increasingly exposed to AI deepfake threats, most prominently in the form of fraudulent job applications. Cybercriminals are able to generate AI deepfake video personas specifically constructed for use during interview processes, enabling them to present fabricated identities with a high degree of visual and behavioral authenticity.

The objectives motivating this type of attack vary and may include corporate espionage, intellectual property theft, unauthorized data access, and the exfiltration of sensitive organizational information.

Beyond the direct risks associated with fraudulent applicants, human resources departments represent a high-value target due to their central role in organizational communication and information management. HR teams maintain comprehensive records on every employee across all levels of the organization, making them a particularly valuable point of entry for cybercriminals seeking to gather personnel data.

HR impersonation presents an additional and distinct attack vector. Employees are unlikely to regard an unexpected call or video meeting initiated by an apparent HR representative as suspicious, given the routine nature of such interactions. This baseline level of institutional trust significantly reduces the likelihood of critical scrutiny.

These two vulnerabilities can be exploited in combination. A cybercriminal may conduct multiple fraudulent interviews using fabricated candidates to gather detailed audio and video material of HR personnel. This material can subsequently be used to generate highly convincing deepfakes of those individuals, which are then deployed in targeted attacks against other employees within the organization.

AI Deepfake in IT

IT departments occupy a position as central to the organization as human resources, though their domain encompasses data, systems, and access controls rather than personnel. The sensitivity and breadth of the assets managed by IT teams make them a primary target for cybercriminals and a critical component of any organizational fraud prevention strategy.

Common deepfake phishing scams directed at IT departments include fraudulent helpdesk verification requests, account recovery schemes, and various forms of unauthorized access acquisition. In these scenarios, cybercriminals impersonate distressed or time-pressured employees and engage IT personnel in order to obtain access to systems or credentials that would otherwise be beyond their reach.

IT teams face a particular vulnerability in that the psychological triggers most frequently employed by cybercriminals closely mirror the conditions of their normal working environment. Urgency and pressure from colleagues, including senior executives, are standard features of IT operations rather than anomalies, which reduces the likelihood that such cues will be recognized as indicators of a potential attack.

To illustrate this risk, consider a scenario in which an IT employee receives an AI deepfake call from an individual presenting as the CEO, who claims to be mid-presentation and to have lost access to their multi-factor authentication credentials. This scenario is operationally plausible, consistent with the kinds of requests IT personnel routinely handle, and would, if successful, result in a significant and potentially wide-ranging compromise of organizational access controls.
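A scenario like this is typically countered by tying the reset to something the caller's voice or face cannot supply. The sketch below assumes a hypothetical helpdesk workflow in which a one-time code is sent to a contact channel registered in advance, and the reset completes only if the caller reads that code back. The class and method names are illustrative, not part of any real product.

```python
import hmac
import secrets
from typing import Dict


class MfaResetDesk:
    """Toy model of an out-of-band check for MFA reset requests."""

    def __init__(self, registered_channels: Dict[str, str]):
        self._channels = registered_channels  # employee id -> contact on file
        self._pending: Dict[str, str] = {}    # employee id -> one-time code

    def start(self, employee_id: str) -> str:
        """Create a one-time code; return the pre-registered channel to send it to."""
        channel = self._channels[employee_id]  # KeyError if nothing is on file
        self._pending[employee_id] = secrets.token_hex(4)
        return channel

    def code_for_delivery(self, employee_id: str) -> str:
        """Code to deliver over the registered channel (never read out on the call)."""
        return self._pending[employee_id]

    def complete(self, employee_id: str, supplied_code: str) -> bool:
        """Single-use check: the code is discarded after one attempt."""
        expected = self._pending.pop(employee_id, None)
        if expected is None:
            return False
        return hmac.compare_digest(expected, supplied_code)
```

In this design the apparent identity of the caller plays no role in the decision. A deepfake of the CEO would still need access to the CEO's registered device to complete the reset, and a failed attempt invalidates the code so it cannot be guessed repeatedly.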

Other Types of Deepfake Scams

Several additional categories of AI deepfake, while not primarily associated with enterprise environments, are sufficiently significant to warrant acknowledgment in this context.

The most prevalent is the non-consensual generation of deepfake sexual content, which represents a serious and widespread harm, disproportionately affecting women. In response to this concern, Adaptive Security has developed a no-cost program designed to help educational institutions train students to recognize and protect themselves from deepfake sexual abuse.

Deepfake-generated disinformation is a second major category of concern. Reuters has reported on the potential for AI-generated fake news to influence the 2026 United States midterm election campaigns, highlighting the broader societal implications of this technology. Within a corporate context, the same capability can be directed toward market manipulation, with fabricated content used to artificially influence investor behavior or asset valuations.

Beyond these categories, deepfake scams are also deployed in attacks targeting individuals directly, including romance and relationship-based fraud and family emergency scams, in which fabricated audio or video content is used to deceive victims into believing a loved one is in distress.

Key takeaway: AI deepfakes are not exclusively a corporate security concern; they represent a challenge of broad societal consequence. By investing in employee awareness and training programs, organizations contribute to the protection of their workforce while simultaneously strengthening the resilience of the wider communities in which they operate.

Biggest Challenges Presented by AI Deepfake

AI deepfakes introduce a range of complex challenges, spanning automated detection limitations and inherent human vulnerabilities. As a relatively nascent technology, deepfake-based attacks continue to evolve rapidly, and organizations are still developing the frameworks and capabilities required to respond effectively.

Concurrently, cybercriminals are also refining their methods, producing increasingly sophisticated and targeted scams. The following section outlines the most significant challenges associated with this threat category.

AI Deepfake Detection Tools Are Not Perfect

AI deepfake detection tools constitute a valuable component of an organizational defense strategy and should be incorporated accordingly. However, these tools are not infallible, and an approach that relies exclusively on automated detection introduces meaningful risk. Environmental factors, such as suboptimal video or audio quality during live calls, can interfere with detection accuracy and produce unreliable results under conditions that are common in standard business communications.

A further limitation is that cybercriminals are able to reverse-engineer existing detection tools, using that knowledge to generate training data specifically designed to defeat them. This dynamic reflects a fundamental and enduring pattern in cybersecurity, in which offensive and defensive capabilities develop in continuous opposition to one another, with advances on either side prompting corresponding adaptations on the other.

A defining characteristic of AI-driven cybersecurity threats is that this cycle of attack and defense operates at a substantially accelerated pace compared to previous generations of cyber threats. The speed at which both offensive tools and detection capabilities evolve increases the probability that organizations will find themselves exposed during the intervals between the emergence of a new attack method and the development of an effective countermeasure.

AI Deepfake Scams Are Also Not Perfect

AI deepfakes are not, in their current state, technically flawless. However, this limitation does not substantially reduce their effectiveness as an attack vector, as a high degree of technical perfection is not required to achieve the intended outcome. This asymmetry constitutes a structural advantage for cybercriminals.

For a deepfake attack to succeed, the generated content needs only to be sufficiently convincing to prompt the target into taking a specific action. The threshold for achieving this varies considerably depending on the circumstances of the attack and, critically, the preparedness of the individual targeted. An employee with no exposure to deepfake awareness training represents a low-resistance target.

Conversely, an employee operating within an environment that combines awareness training, established verification processes, and supporting security tools presents a substantially higher barrier, one that would require near-flawless deepfake content to overcome.

This distinction illustrates the strategic value of layered security. By raising the technical threshold that deepfake content must meet in order to be effective, organizations can render a significant proportion of attacks unsuccessful without requiring any single defensive measure to be comprehensive on its own.

Key takeaway: The effectiveness of an AI deepfake attack is not contingent on technical perfection. It is contingent on whether the content is sufficiently convincing given the preparedness of the target and the conditions of the interaction.

AI Deepfake Forces Employees to Challenge the Normal

Phishing in cybersecurity has evolved substantially, advancing from text-based email scams to sophisticated AI deepfake video communications depicting familiar colleagues and executives issuing requests that align precisely with the target's professional responsibilities. This evolution renders deepfake phishing simulation an essential component of contemporary security awareness training programs rather than an optional supplement.

Traditional employee training focused on the identification of conspicuous indicators of fraudulent communication, such as suspicious hyperlinks, unusual sender addresses, or poorly constructed messages. This approach is insufficient in a threat environment where those indicators are frequently absent. When deepfake content is of sufficient quality and contextual relevance, employees cannot be expected to rely on the same visual and textual cues that previously served as reliable warning signs.

This challenge is further compounded by the real-time nature of AI-enabled attacks. Expecting an employee to accurately identify and respond to a sophisticated deepfake attack as it unfolds, without structural support, is operationally unrealistic and places an undue burden on the individual. Effective deepfake protection, therefore, requires organizations to go beyond individual recognition skills, embedding verification frameworks and clear processes that reduce reliance on in-the-moment human judgment.

Deepfake Awareness Training Lies Beyond the Content

A fundamental misconception undermines the effectiveness of most existing deepfake awareness training programs. The predominant focus of these programs is the evaluation of content authenticity, training employees to assess whether a given video, audio recording, or image is genuine or synthetically generated. This framing, while intuitive, addresses only a subset of the actual risk.

The core insight that effective training must convey is that the authenticity of the content itself is secondary to the legitimacy of the process through which a request is made.

Organizations should train employees to evaluate whether a communication adheres to established protocols, particularly when it involves requests related to sensitive information, financial transactions, access credentials, or other high-value assets.

A request that bypasses standard verification procedures or applies pressure to circumvent established workflows should be treated as suspect regardless of the apparent quality or authenticity of the accompanying media.

Key takeaway: Deepfake awareness training should prioritize the identification of process violations over the assessment of content quality. An employee who recognizes that a request deviates from established protocol is better protected than one who attempts to determine whether the video or audio content in front of them is authentic.
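The process-first rule described above can be expressed as a check that never inspects the media itself. The following is a minimal sketch under stated assumptions: the `Request` fields, the category names, and the `is_suspect` function are hypothetical names chosen for illustration and do not describe any specific product or policy.

```python
from dataclasses import dataclass

# Hypothetical labels for the sensitive request types listed in the text.
SENSITIVE_CATEGORIES = {
    "sensitive_information",
    "financial_transaction",
    "access_credentials",
}


@dataclass
class Request:
    category: str                          # what is being asked for
    followed_verification_procedure: bool  # standard verification was completed
    pressure_to_bypass_workflow: bool      # requester urged skipping established steps


def is_suspect(req: Request) -> bool:
    """Flag a request based on process violations only, not media quality."""
    # Pressure to circumvent the workflow is suspect regardless of category.
    if req.pressure_to_bypass_workflow:
        return True
    # Sensitive requests are suspect unless verification was completed.
    if req.category in SENSITIVE_CATEGORIES:
        return not req.followed_verification_procedure
    return False


# Example: an urgent credential reset that skipped verification.
urgent_call = Request(
    category="access_credentials",
    followed_verification_procedure=False,
    pressure_to_bypass_workflow=True,
)
print(is_suspect(urgent_call))  # True
```

Note that the function takes no input describing how convincing the audio or video appeared, which mirrors the point that content quality is secondary to process legitimacy.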

Employees Are Bad at Detecting an AI Deepfake

The 2024 Human Performance in Detecting Deepfakes paper found that individuals successfully identified deepfake content in 55 percent of cases. Detection rates were lowest for video content, where accurate identification dropped to 39 percent. Notably, the research also demonstrated that targeted training produced meaningful improvements, increasing detection accuracy by an average of 10 percentage points across video, audio, and image formats.

These findings have direct implications for organizational security strategy. A baseline detection rate of 55 percent is insufficient to function as a reliable safeguard, as it implies that nearly half of all deepfake content presented to untrained employees will go undetected.

This limitation is further compounded by the operational conditions under which attacks frequently occur. Factors such as stress, time pressure, and contextually plausible scenarios, all of which are deliberately engineered into targeted deepfake attacks, further reduce the likelihood of accurate detection.

These results reinforce the conclusion that unaided human judgment cannot serve as a primary line of defense against deepfake-based attacks. Structured awareness training, combined with process-based controls that reduce reliance on individual detection capability, is essential to achieving a defensible security posture.
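The figures cited above translate into miss rates as follows. This is simple arithmetic on the reported percentages. Applying the average 10-point training gain directly to the overall 55 percent baseline is an assumption made for illustration, since the text reports the gain only as an average across formats.

```python
# Detection rates as cited in the text (percent).
overall_detection = 55
video_detection = 39
training_gain_points = 10  # average improvement after targeted training

# A miss is any deepfake that is not identified.
overall_miss = 100 - overall_detection
video_miss = 100 - video_detection

# Assumption for illustration: the average gain applies to the overall baseline.
trained_detection = overall_detection + training_gain_points
trained_miss = 100 - trained_detection

print(f"Untrained miss rate (overall): {overall_miss}%")        # 45%
print(f"Untrained miss rate (video): {video_miss}%")            # 61%
print(f"Miss rate after training (assumed): {trained_miss}%")   # 35%
```

Even under this assumption, roughly one in three deepfakes would still go unnoticed, which is consistent with the argument that detection alone cannot serve as the primary defense.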

The Phishing Prevention Framework

The proliferation of AI deepfakes fundamentally redefines the parameters of phishing prevention in 2026 and beyond. Established assumptions that underpin conventional security controls, including the premise that visual or auditory confirmation constitutes reliable verification, are no longer operationally valid. Adapting to this evolved threat environment requires a structured and comprehensive response.

Cybersecurity professionals must implement a three-layered defense framework in which each layer is designed to compensate for the inherent limitations of the others. This approach recognizes that no single control, whether technological, procedural, or human, is sufficient in isolation, and that effective protection against AI deepfake attacks requires the deliberate integration of complementary defensive measures.

The Layered Phishing Prevention: People, Processes, and Technology

The defining principle of layered phishing prevention is redundancy. Each layer is designed to compensate for the failures of the others, operating on the premise that any individual control will, under sufficient pressure or in specific circumstances, prove inadequate.

- People: Develops employee competency through structured deepfake awareness programs designed to improve recognition and response.

- Process: Establishes clearly defined and consistently enforced procedures for handling sensitive requests, independent of the communication channel or apparent source.

- Technology: Employs current detection and filtering tools to block the greatest possible volume of deepfake-based attacks through automated means.

No single layer is sufficient in isolation, as each is subject to failure under specific conditions. Technological controls are vulnerable to circumvention as cybercriminals develop more sophisticated attack models, often using data derived from existing defensive systems to engineer tools specifically designed to bypass them. AI accelerates this process, compressing the time between the deployment of a defensive measure and the development of a corresponding countermeasure.

Even extensively trained employees may fail to identify a deepfake attack when operating under conditions of elevated stress or diminished cognitive capacity. Process-based controls fail either through inconsistent enforcement or through active circumvention, as cybercriminals are capable of bypassing verification mechanisms, including multi-factor authentication, under certain conditions. Policies that are not consistently applied provide no meaningful protection.

The sections that follow examine each of these three layers in detail and outline recommended implementation strategies for defending against deepfake fraud.

For a more comprehensive step-by-step framework, Adaptive Security's deepfake-proofing guide provides an extended program of controls designed to protect organizations against this category of threat.

Key takeaway: Cybercriminals do not attempt to overcome every defensive layer. They identify and exploit the weakest point. A layered defense strategy is only as effective as its least robust component.

Organizations seeking comprehensive guidance on workforce protection may request an Adaptive Security phishing simulation demo.

How to Protect Executives from Phishing Deepfake Scams

Executives warrant a distinct and elevated level of protective attention within any deepfake defense strategy. They represent both the primary targets of deepfake phishing attacks and the individuals most frequently impersonated, given the decision-making authority, system access, and organizational influence they hold.

Their prominence as public figures also means that substantial volumes of audio and video material are publicly available, providing cybercriminals with extensive source data for training deepfake models.

The same three-layered defensive framework applies to executive protection; however, each layer must be implemented with a greater degree of rigor and personalization than is standard for the broader workforce. The following measures are recommended.

- Threat Exposure Mapping: Organizations should systematically identify and document the conditions under which executives are most vulnerable to social engineering. An executive attending a public industry conference, for example, represents a predictable and well-documented exposure window, during which contextual information is readily accessible, and the executive may be operating under time pressure or reduced oversight. Shared organizational awareness of an executive's attack surface can preempt a significant proportion of targeted cyberattacks.

- Digital Footprint Reduction: Organizations should audit and, where operationally feasible, limit the public availability of executive audio and video content, including podcast appearances, earnings calls, and personal social media activity. While such measures carry business considerations, the security risk associated with unrestricted public exposure of high-fidelity voice and video material may outweigh the benefits in many cases.

- Leaked Credential Monitoring: Compromised executive credentials may provide cybercriminals with access to an organization's broader digital infrastructure. A dedicated monitoring system capable of detecting credential exposure as close to real time as possible is an essential control at this level of risk.

- Enhanced Technical Controls: Standard technical controls are calibrated for standard risk profiles. Recommended measures for executives include replacing conventional multi-factor authentication with hardware security keys, restricting the authorization of financial decisions to previously verified and designated workstations, and incorporating deepfake detection tools into all relevant communication workflows.

- Individualized Security Awareness Training: While role-based security awareness training is appropriate for the general workforce, executive training should be customized to the individual. Adaptive Security consistently observes that executives are most effectively engaged through live demonstrations of deepfake content replicating themselves or their colleagues, an approach that reliably conveys the practical severity of the threat in a manner that abstract instruction does not.

- Process-Based Verification: Process controls represent the most reliable safeguard at the executive level. Out-of-band verification should be treated as mandatory for all executive interactions involving sensitive or high-value requests. The use of pre-established secret passwords or passphrases between executives provides an additional and highly effective verification mechanism that remains difficult to circumvent through deepfake-based attacks.

- Continuous Monitoring and Iteration: Security awareness training should be treated as an ongoing program rather than a discrete event. Executives should be assigned the highest priority within any update or review cycle, given the elevated and evolving nature of their risk exposure.

Many executives demonstrate an existing awareness of these risks and independently adopt protective measures. Nevertheless, structured support from the organizational cybersecurity team strengthens both enterprise security and the personal digital security of the executives themselves.

What Should a Company Do After a Deepfake Attack

When a cybersecurity team identifies that an employee has been targeted by a deepfake phishing attack, a structured and time-sensitive response must be initiated. The following six steps outline the recommended incident response procedure.

- Financial Containment: In cases involving fraudulent fund transfers, contacting the relevant financial institution is the immediate priority. Prompt action at this stage maximizes the likelihood of preventing transferred funds from becoming unrecoverable.

- Isolation: Access associated with any compromised or impersonated account should be revoked or reestablished without delay. Contact with the impersonated individual must be conducted exclusively through out-of-band channels to ensure the integrity of the communication.

- Evidence Preservation: All evidence related to the attack must be retained in its original state. Employees should be explicitly instructed not to delete any communications, files, or records associated with the incident. This material is essential to the subsequent investigation and may be required for legal or regulatory purposes.

- Stakeholder Communication: Given the speed and potential scale of AI-generated attacks, timely and targeted communication is a critical response component that is frequently underutilized. Internal communication should be prioritized to halt any pending sensitive approvals, and a designated press contact should be identified and prepared in the event that details of the attack become public.

- Recovery and Control Reassessment: Normal operations should not be resumed without a thorough reassessment of existing security controls. Returning to standard workflows without addressing the conditions that enabled the attack increases organizational exposure to subsequent incidents.

- Investigation: The incident should be subject to a comprehensive investigation, with the objective of extracting the maximum possible amount of actionable information. It is important to recognize that deepfake phishing attacks frequently serve as initial access vectors for more disruptive follow-on attacks, including ransomware. The investigation must confirm that the attack pathway has been fully identified and closed before standard operations are restored.

How to Develop a Deepfake Awareness Training Program?

The first layer of a deepfake protection strategy is centered on people, with the objective of ensuring that employees are equipped to identify and respond appropriately to deepfake-based threats. The purpose of deepfake awareness training extends beyond the development of detection skills. It is designed to transform employees into an active defensive layer against phishing attacks, which remain one of the most frequently used initial access vectors for more sophisticated follow-on attacks, including ransomware.

Achieving this objective requires implementing a structured, cyclical training program. According to CrowdStrike's 2025 State of Ransomware Survey, 76% of global organizations struggle to match the speed and sophistication of AI-powered attacks. This finding underscores the inadequacy of static or infrequent training models. Given the pace at which deepfake technology and associated attack methods continue to evolve, awareness training must be continuous and regularly updated to remain operationally relevant.

The Deepfake Awareness Training Program Cycle

An effective deepfake awareness training program is an ongoing and iterative process. Each phase of the program builds upon the preceding one, creating a cumulative improvement in organizational resilience. The following four-step cycle represents a proven framework for structured deepfake awareness training.

- Assessment: The program begins with a comprehensive assessment of the organization's current security maturity. This may take the form of a pre-training quiz, knowledge test, or phishing simulation, and should be conducted on a role-by-role basis to evaluate both individual and departmental strengths and vulnerabilities. Existing training policies should also be reviewed as part of this phase. The objective is to establish a clear and accurate baseline prior to any training intervention.

- Education: The findings of the initial assessment inform the development of targeted training content. Rather than applying a uniform curriculum across the organization, training paths should be personalized to reflect each employee's current level of knowledge and role-specific risk exposure. This approach improves training efficiency and increases the relevance of content for each participant.

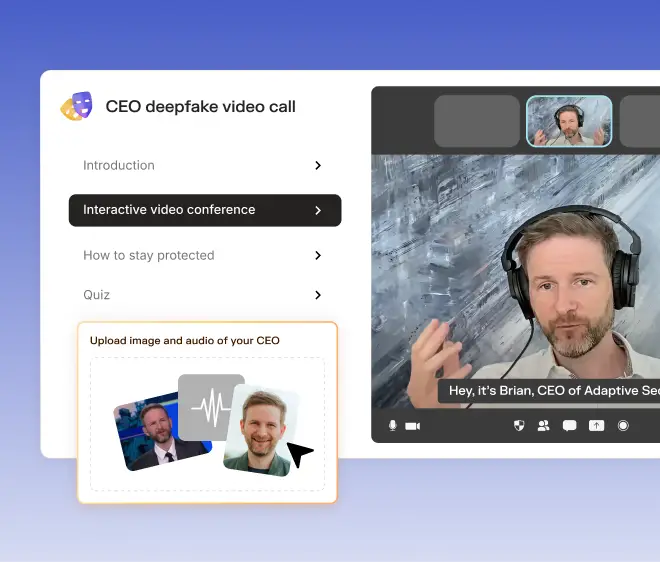

- Exercise: Practical reinforcement is delivered through phishing simulations and scenario-based exercises. Deepfake phishing simulation tools are particularly valuable in this phase, as they replicate the visual and psychological characteristics of real attacks, exposing employees to the specific triggers and manipulation techniques employed by cybercriminals in a controlled environment.

- Measurement: Ongoing measurement is essential to evaluating program effectiveness and identifying areas requiring additional attention. Tracking should prioritize behavioral metrics, such as simulation response rates and protocol adherence, over completion metrics alone. Measuring whether employees have changed their behavior, rather than simply whether they have completed the training, provides a more accurate and actionable indicator of program impact.

Upon completion of the measurement phase, organizations should conduct a reassessment using the updated data to inform the parameters of the subsequent training cycle. This reassessment ensures that each iteration of the program reflects the most current threat landscape as well as the evolving competency profile of the workforce.

The appropriate frequency of training cycles will vary according to the organization's risk profile and should be determined through role-based analysis rather than applied uniformly across the workforce. For individuals in high-risk roles, such as executives and members of financial teams, monthly phishing simulations supplemented by more frequent targeted microsessions represent a sound baseline from which the program can be calibrated over time.

How to Set Up Role-Based Deepfake Awareness Training Paths

The development of role-based deepfake awareness training paths requires a structured understanding of the risk profile associated with each organizational role. The underlying principle is to align training content as closely as possible with the specific threats each employee is likely to encounter in their daily responsibilities.

The first step in this process is to segment the workforce by risk level.

- High-risk: Executives, financial personnel, and IT administrators

- Medium-risk: Managers, HR professionals, legal staff, and customer service representatives

- Low-risk: General staff

A foundational training module should be completed by all employees regardless of role. This module should address the following core topics:

- What AI deepfakes are

- Basic techniques for identifying synthetic voice and video content

- The psychological triggers that cybercriminals commonly exploit

- Prevalent attack types to which all employees are vulnerable, including executive impersonation

- Internal reporting procedures for suspected deepfake incidents

- The organization's policies and verification protocols

Role-specific training content should then be layered onto this foundation, tailored to the threat scenarios most relevant to each team:

- Financial personnel should receive training focused on voice and video-based financial fraud scenarios

- IT staff should be trained on helpdesk impersonation and account compromise attempts

- HR personnel should be trained to address candidate impersonation and employee data theft

- Legal teams should focus on client and colleague impersonation for the purpose of sensitive data extraction

- Customer service staff should be prepared for client impersonation attacks targeting account access or personal data

- Managers across all departments should receive targeted training that reflects their access to organizational data, tools, and, in some cases, purchasing authority, which, while representing a lower-value target than executives, still constitutes a meaningful attack surface.

Deepfake training frequency should also be differentiated by risk level. A basic guideline is monthly training for high-risk roles, quarterly for medium-risk roles, and biannually for lower-risk roles. These intervals should be treated as a starting point rather than a fixed schedule. Where behavioral metrics indicate insufficient improvement following training cycles, frequency should be increased accordingly until measurable progress is observed.
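The segmentation and baseline intervals above can be captured in a small lookup. This is a sketch under stated assumptions: the role keys, the `training_interval_months` function, the choice to treat unknown roles as low-risk, and the rule of halving the interval when metrics do not improve are all hypothetical. "Biannually" is read here as twice a year.

```python
# Risk tiers as described in the text; the role keys are illustrative labels.
ROLE_RISK_TIER = {
    "executive": "high",
    "finance": "high",
    "it_admin": "high",
    "manager": "medium",
    "hr": "medium",
    "legal": "medium",
    "customer_service": "medium",
    "general_staff": "low",
}

# Baseline months between training cycles: monthly, quarterly, twice a year.
BASELINE_INTERVAL_MONTHS = {"high": 1, "medium": 3, "low": 6}


def training_interval_months(role: str, improving: bool = True) -> int:
    """Return the number of months between training cycles for a role.

    If behavioral metrics show insufficient improvement, the interval is
    halved (a hypothetical escalation rule), never dropping below one month.
    """
    tier = ROLE_RISK_TIER.get(role, "low")  # assumption: unknown roles are low-risk
    interval = BASELINE_INTERVAL_MONTHS[tier]
    if not improving:
        interval = max(1, interval // 2)
    return interval


print(training_interval_months("finance"))                         # 1
print(training_interval_months("general_staff", improving=False))  # 3
```

Keeping the tiers and intervals in data rather than in logic makes it straightforward to recalibrate the schedule as measurement results come in.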

What Makes for a Good Deepfake Awareness Training Program: Process Over Instincts

An effective deepfake awareness training program extends beyond instructing employees on how to identify synthetic content, as maintaining pace with the rate of technological evolution in this domain is not a realistic objective for most organizations.

A well-designed program focuses instead on cultivating a fundamental shift in employee behavior, moving away from the assumption that perceived authenticity is sufficient grounds for action, and toward a default posture of verification before response.

The most effective deepfake security awareness training programs are grounded in a straightforward but consequential premise: detection is not a reliable primary defense strategy. Both automated tools and trained individuals will, under certain conditions, fail to identify a convincing deepfake.

Designing a security culture around the expectation of perfect detection is, therefore, insufficient. Instead, training should establish process adherence as the primary safeguard, ensuring that employees default to verified procedures when handling sensitive requests, regardless of how credible the associated communication appears.

Key takeaway: An effective cybersecurity awareness training program aims to replace reliance on instinct with consistent adherence to process.

Deepfake Awareness Training Program Key Metrics

Most deepfake awareness training programs default to measuring basic program metrics, such as completion rates and simulation click rates. While these figures provide a baseline picture of program participation, they do not constitute the most meaningful indicators of training effectiveness.

The metrics that carry the greatest analytical value are those that capture actual changes in employee behavior and provide substantive insight into program impact. The following metrics are recommended as the foundation of a robust measurement framework.

- Completion Rate by Role: Completion rates should be tracked and reported at the role level rather than in aggregate. Gaps in completion among high-risk employees, such as executives or financial personnel, represent a material security exposure and should be treated as a priority issue requiring immediate remediation.

- Time-to-Complete Relative to Estimated Duration: This metric is most informative in the early stages of a training program. Employees who complete training in substantially less time than the estimated duration may be progressing through content without adequate engagement, which reduces the likelihood that learning objectives are being met.

- Simulation Failure Rates: Tracking the rate at which employees fail phishing simulations identifies individuals who present elevated susceptibility to deepfake-based attacks. This data informs targeted follow-up training and enables the organization to prioritize resources toward those who pose the greatest residual risk.

- Correct Protocol Adherence Rate: This metric measures the proportion of employees who, upon encountering a simulated or actual deepfake attack, followed the correct organizational verification and reporting procedure. As the primary behavioral objective of the training program, this figure should be treated as the central performance indicator.

- Report Rate and Time-to-Report: These metrics collectively indicate the degree to which employees have internalized their role as an active component of the organization's defensive posture. A high report rate combined with a low time-to-report suggests that the workforce is approaching the standard of a functional first line of defense.

- Organizational Safety Score: Organizations should administer periodic surveys modeled on the Net Promoter Score methodology to assess how confident employees feel about reporting suspected incidents or acknowledging mistakes without fear of negative consequences. This metric serves as an indicator of security culture health, which is a foundational condition for effective program participation and honest reporting.
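Several of the behavioral metrics above can be derived from the same set of simulation records. The following is a minimal sketch, assuming a hypothetical `SimulationResult` record and `summarize` function. The field names and the use of the median for time-to-report are illustrative choices, not a prescribed methodology.

```python
from dataclasses import dataclass
from statistics import median
from typing import Optional


@dataclass
class SimulationResult:
    employee_id: str
    followed_protocol: bool             # used the correct verification and reporting procedure
    failed_simulation: bool             # took the action the simulated attacker wanted
    minutes_to_report: Optional[float]  # None if the employee never reported


def summarize(results: list[SimulationResult]) -> dict[str, Optional[float]]:
    """Compute behavioral metrics for one simulation campaign."""
    total = len(results)
    if total == 0:
        raise ValueError("no simulation results to summarize")
    report_times = [
        r.minutes_to_report for r in results if r.minutes_to_report is not None
    ]
    return {
        "protocol_adherence_rate": sum(r.followed_protocol for r in results) / total,
        "failure_rate": sum(r.failed_simulation for r in results) / total,
        "report_rate": len(report_times) / total,
        "median_minutes_to_report": median(report_times) if report_times else None,
    }


# Example campaign with four hypothetical employees.
campaign = [
    SimulationResult("e1", True, False, 5.0),
    SimulationResult("e2", False, True, None),
    SimulationResult("e3", True, False, 15.0),
    SimulationResult("e4", False, True, 40.0),
]
print(summarize(campaign))
```

For this sample, the adherence rate and failure rate are both 0.5, the report rate is 0.75, and the median time-to-report is 15 minutes. Grouping the records by role before calling `summarize` would yield the role-level breakdown recommended above.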

What Is Deepfake Phishing Simulation Software

Deepfake phishing simulation software is a purpose-built tool that enables security teams to design and execute controlled AI-powered impersonation attacks within a safe and managed environment. These platforms typically support simulation across multiple content formats, including voice, video, and multi-channel scenarios, and provide accompanying administrative capabilities for the creation, deployment, and measurement of simulation campaigns.

What Does Deepfake Phishing Simulation Software Do

Deepfake phishing simulations integrate knowledge transfer with practical application, equipping employees with the competencies required to recognize and respond to deepfake-based threats in operational conditions. These simulations function as a component of a broader security awareness training program, designed to provide comprehensive coverage of the threat landscape.

Within the layered phishing protection framework described in the preceding sections, deepfake phishing simulation software is classified as a technological tool; however, its primary function is to strengthen the human layer of the defensive strategy. Simulation platforms contribute to this objective through four principal mechanisms.

- Deepfake Security Awareness: A meaningful distinction exists between general awareness of AI deepfakes and security-specific awareness. While many employees understand what deepfakes are, familiarity with the concept does not translate into preparedness for their use as a cyberattack vector. The objective of security awareness training is to ensure that employees understand how deepfakes are actively deployed in cybercrime, with particular emphasis on the attack scenarios most relevant to their professional context.

- Developing Recognition: Recognition skills are developed primarily through practical exercises, with deepfake phishing simulations serving as the most effective training mechanism. The most capable simulation platforms offer customizable and regularly updated content that reflects the evolving nature of generative AI threats, placing employees in scenarios that closely replicate the conditions of a real attack. Training content should be reviewed and refreshed on a continuous basis to ensure relevance.

- Establishing Response Procedures: The recommended approach is for employees to disengage from the suspicious interaction and immediately contact a designated colleague or security team member to initiate an investigation. Training should include instruction on a standardized verification procedure that employees can apply in these situations.

- Enabling Reporting: Effective reporting enables security teams to respond promptly, conduct further investigation, and prevent a localized incident from escalating. Beyond the immediate operational benefit, consistent reporting generates data that allows security teams to assess and monitor human risk across the organization over time, providing a measurable indicator of program effectiveness.

A critical consideration in program design is the avoidance of traditional content-focused exercises that challenge employees to identify whether a given piece of media is authentic. Well-developed deepfake phishing simulation software is designed to build a deeper understanding of the underlying threat mechanics, rather than training employees to detect specific artifacts or anomalies in synthetic content.

Key Features in Deepfake Phishing Simulation Software

Selecting a deepfake phishing simulation platform requires careful evaluation of several key capabilities. The following criteria should be considered when assessing available solutions.

- Video and Voice Quality: Simulation content must achieve a level of realism comparable to that of actual deepfake attacks. Simulations that fall short of this standard are unlikely to develop the intended behaviors and may produce a false sense of preparedness.

- Deepfake Threat Coverage: Deepfake attacks are inherently multi-channel in nature, extending beyond standalone voice or video content to encompass live calls, SMS communications, and collaboration platform interactions. Effective simulation platforms must demonstrate that these channels can be deployed in combination.

- Campaign Management: Platforms should provide comprehensive campaign management functionality spanning the full lifecycle of a simulation, from planning and content creation through scheduling, execution, and results analysis. Automation of administrative tasks is an important consideration in reducing the operational burden on security teams.

- Personalization Capabilities: Simulation effectiveness is significantly enhanced when content is tailored to the role of the intended recipient. Platforms that support personalization at the individual level, adapting scenarios to each employee's specific responsibilities and risk profile, provide the greatest training value.

- Results Measurement: Robust measurement capabilities are essential both for tracking behavioral improvement over time and for communicating program outcomes to organizational leadership. An intuitive and clearly presented reporting dashboard facilitates both functions and increases the practical utility of the data generated.

- Human Risk Profiling: Platforms that aggregate simulation data into a structured human risk profile provide security teams with a comprehensive view of organizational vulnerability. This capability transforms raw simulation results into actionable intelligence that can inform training prioritization and resource allocation.

- Just-in-Time Feedback: The period immediately following a simulation failure represents the most effective moment for targeted learning intervention. Platforms that deliver instant feedback at the point of failure maximize the instructional impact of each simulation and accelerate behavioral change.

- Integration Capabilities: Effective integration with existing organizational systems and security tools extends the utility of the platform and reduces administrative complexity. Compatibility with current infrastructure should be evaluated as a practical prerequisite for sustainable deployment.

- AI-Powered Capabilities: Underlying AI capabilities are what make the preceding functionalities operationally viable at scale. Without a robust AI infrastructure, delivering high-quality, personalized, and continuously updated simulation content at an acceptable cost and administrative burden would not be feasible.
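To make the Results Measurement and Human Risk Profiling criteria more concrete, the following is a minimal sketch of how raw simulation outcomes might be aggregated into a per-employee profile. The outcome labels, the `risk_profile` function, and the two rates it reports are assumptions chosen for illustration; they do not describe any particular vendor's data model.

```python
from collections import Counter, defaultdict

# Hypothetical outcome labels for a single simulation delivered to one employee.
OUTCOMES = {"failed", "ignored", "reported"}


def risk_profile(results):
    """Aggregate (employee_id, outcome) pairs into per-employee rates.

    failure_rate: share of simulations where the employee complied with the lure.
    report_rate:  share of simulations the employee reported to security.
    """
    counts = defaultdict(Counter)
    for employee_id, outcome in results:
        if outcome not in OUTCOMES:
            raise ValueError(f"unknown outcome: {outcome!r}")
        counts[employee_id][outcome] += 1

    profile = {}
    for employee_id, tally in counts.items():
        total = sum(tally.values())
        profile[employee_id] = {
            "simulations": total,
            "failure_rate": tally["failed"] / total,
            "report_rate": tally["reported"] / total,
        }
    return profile


# Illustrative data only.
sample = [
    ("alice", "failed"),
    ("alice", "reported"),
    ("bob", "reported"),
    ("bob", "reported"),
]
for employee, stats in risk_profile(sample).items():
    print(employee, stats)
```

Tracking the report rate alongside the failure rate reflects the point made earlier: consistent reporting is itself a measurable indicator of program effectiveness, not just the absence of failures.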

Deepfake Phishing Simulation Software vs Generic Phishing Simulation Tools

The distinction between deepfake phishing simulation software and conventional phishing simulation tools extends beyond the format of the content they generate. A deepfake simulator exposes employees to synthetic content that is designed to appear authentic across both visual and audio channels, replicating the specific conditions under which real deepfake attacks occur.

Traditional phishing simulation reinforces a defensive orientation grounded in the principle of not trusting unfamiliar sources. This approach has been the standard for years, with training programs focused on teaching employees to treat unrecognized senders with suspicion and to identify anomalous elements within communications, particularly in email-based interactions.

Deepfake phishing simulation software trains a different habit: not skepticism toward the unfamiliar, but verification even when content appears entirely familiar and credible. This is a materially different cognitive posture, and one that conventional simulation tools are not designed to develop.

A further distinction lies in the nature and depth of impersonation. Phishing attacks, in all forms, are predicated on impersonation. In traditional phishing, cybercriminals impersonate institutions or brands, presenting themselves as representatives of a bank or service provider, for example.

AI deepfake attacks operate at a fundamentally different level, impersonating specific individuals whom the target knows personally and trusts professionally, including colleagues, executives, and clients. Conventional phishing simulation platforms do not possess the capability to replicate this level of impersonation or to prepare employees for the associated threat.

How to Measure Deepfake Simulation Software ROI

Measuring the return on investment of deepfake phishing simulation software presents an inherent methodological challenge: the value of attacks that did not occur is difficult to quantify with precision. The challenge is compounded by the nature of cyberattacks, which are infrequent but carry the potential for catastrophic financial and operational consequences when they do occur. The following approach provides a practical framework for this analysis.

A standard return on investment formula provides the foundation for this calculation. Applying the formula of savings minus costs, divided by costs, multiplied by 100, yields a percentage return on investment figure. The cost component is straightforward to determine, encompassing all expenditures associated with the platform. The more complex variable is the savings component, here the avoided cost, which represents the financial exposure that the organization did not incur as a result of the program.
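The formula can be expressed in a few lines of code. This is a minimal sketch; the function name `roi_percent` and the figures used are illustrative assumptions, not data from any real program.

```python
def roi_percent(avoided_cost: float, program_cost: float) -> float:
    """Return ROI as a percentage: (savings - costs) / costs * 100."""
    if program_cost <= 0:
        raise ValueError("program_cost must be positive")
    return (avoided_cost - program_cost) / program_cost * 100


# Illustrative figures only: 300,000 in avoided cost against a 100,000 program.
print(roi_percent(avoided_cost=300_000, program_cost=100_000))  # 200.0
```

A result of 0 means the program paid for itself exactly; a negative result means the estimated avoided cost did not cover the expenditure.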

The FAIR framework, which stands for Factor Analysis of Information Risk, provides a structured and widely recognized methodology for translating cybersecurity risk into quantifiable business terms, and is recommended as a tool for estimating avoided costs in this context.
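At its top level, FAIR expresses risk as the product of loss event frequency and loss magnitude. The sketch below uses that decomposition to estimate avoided cost as the difference in annualized loss before and after the program. It is a deliberately simplified point estimate: FAIR analyses in practice work with ranges and simulation rather than single numbers, and the function names and figures here are assumptions made for illustration.

```python
def annualized_loss(loss_event_frequency: float, loss_magnitude: float) -> float:
    """Expected yearly loss: events per year times cost per event."""
    if loss_event_frequency < 0 or loss_magnitude < 0:
        raise ValueError("frequency and magnitude must be non-negative")
    return loss_event_frequency * loss_magnitude


def avoided_cost(freq_before: float, freq_after: float, loss_magnitude: float) -> float:
    """Reduction in annualized loss attributed to the program."""
    return (annualized_loss(freq_before, loss_magnitude)
            - annualized_loss(freq_after, loss_magnitude))


# Illustrative assumption: one successful attack every 4 years before training,
# one every 8 years after, at 4,000,000 per incident.
print(avoided_cost(freq_before=0.25, freq_after=0.125, loss_magnitude=4_000_000))
```

The resulting figure is what would be supplied as the savings term in the return on investment formula above.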

Cyber insurance premium costs represent an additional financial variable to incorporate into this analysis, as security controls may qualify an organization for reduced insurance premiums.

Questions to Ask Deepfake Phishing Simulation Software Vendors

Evaluating deepfake phishing simulation software vendors requires a structured line of inquiry to determine whether a given platform is appropriately suited to the organization's specific security requirements. The following questions are recommended as part of the vendor assessment process, along with the rationale underlying each.

What Is a Deepfake Detection Tool

A deepfake detection tool is a technological solution designed to identify and, where operationally feasible, block artificial media across its various forms, including fully synthetic content, manipulated recordings, and spoofed audio or video.

Upon identifying indicators of synthetic or manipulated media, these tools generate prompt notifications to the relevant user or security team and, where the technical conditions permit, intervene to prevent further engagement with the content.

Within the three-layered phishing protection framework outlined in preceding sections, deepfake detection tools occupy a defined position within the technological layer, functioning as one component of the broader defensive asset library that organizations can deploy.

How a Deepfake Detection Tool Works

Deepfake detection tools typically apply multiple concurrent analytical methods, including forensic AI, behavioral modeling, and additional proprietary techniques, to assess whether a given piece of media has been synthetically generated or manipulated.

The six detection layers below are presented in order from surface-level to deeper analytical examination. This is a general overview of detection methodology; operational details are intentionally kept confidential for security reasons:

- Pixel-Level Analysis: The first layer examines raw image or video content at the pixel level, identifying anomalies such as noise patterns, compression artifacts, blending boundaries, inconsistencies in lighting and shadow rendering, and irregularities in surface texture.

- Facial and Biometric Behavioral Analysis: The second layer analyzes facial and biometric behavior by tracking facial landmarks to detect irregular movement patterns, anomalies in blinking frequency and duration, inconsistent eye direction, unnatural micro-expressions, and asymmetries in facial geometry.

- Audio Analysis: The third layer is specific to audio content and examines frequency patterns, rhythm, stress, intonation, breathing, and pauses for indicators of synthetic generation. This layer also evaluates background audio and lip synchronization.

- Video Consistency Analysis: The fourth layer focuses on video-specific characteristics, assessing inter-frame consistency, head movement patterns, and subtle physiological indicators such as color variation in facial skin tone associated with blood flow, which synthetic content frequently fails to replicate accurately.

- Metadata Examination: The fifth layer analyzes file metadata, including information pertaining to the origin and processing history of the content. This examination covers camera and device information, editing history, evidence of processing through tools commonly associated with deepfake generation, and the presence or absence of cryptographic signatures that verify content provenance.

- Machine Learning Analysis: The sixth layer employs machine learning models in a manner analogous to the methods used by cybercriminals to generate deepfake content. Neural networks trained on known authentic and synthetic media samples produce a probability score indicating the likelihood that the content has been artificially generated. This layer also integrates the outputs of the preceding five layers to improve overall accuracy and reduce false positive rates, and deploys detectors trained specifically to identify output from known generative techniques and tools, including generative adversarial networks and platforms such as DeepFaceLab.
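The integration step in the sixth layer can be illustrated with a simple score fusion. The sketch below assumes each layer emits a score between 0 and 1 and combines them with a weighted mean; the layer names, the weights, and the `fuse_scores` function are hypothetical, and real products may use learned or otherwise different fusion methods that are not disclosed.

```python
# Hypothetical weights; a real system would tune or learn these.
LAYER_WEIGHTS = {
    "pixel": 0.15,
    "facial": 0.15,
    "audio": 0.15,
    "video": 0.15,
    "metadata": 0.10,
    "ml_model": 0.30,
}


def fuse_scores(layer_scores):
    """Weighted mean of per-layer scores in [0, 1].

    Layers that did not run (for example, audio on a still image) are simply
    omitted, and the remaining weights are renormalized.
    """
    weighted_sum = 0.0
    total_weight = 0.0
    for layer, score in layer_scores.items():
        if layer not in LAYER_WEIGHTS:
            raise KeyError(f"unknown layer: {layer!r}")
        if not 0.0 <= score <= 1.0:
            raise ValueError(f"score for {layer!r} must be in [0, 1]")
        weighted_sum += LAYER_WEIGHTS[layer] * score
        total_weight += LAYER_WEIGHTS[layer]
    if total_weight == 0.0:
        raise ValueError("at least one layer score is required")
    return weighted_sum / total_weight


# Illustrative scores for a video clip with no usable metadata.
clip = {"pixel": 0.7, "facial": 0.8, "audio": 0.4, "video": 0.9, "ml_model": 0.85}
print(round(fuse_scores(clip), 3))
```

Combining several weak signals in this way is one reason layered detection can produce fewer false positives than any single check: one anomalous layer alone does not push the fused score past an alert threshold.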

What Are the Main Types of Deepfake Detection Tools

Deepfake detection tools vary in form and function depending on organizational objectives. The primary categories and their respective applications are as follows:

- Standalone software consists of dedicated deepfake detection tools installed locally. These solutions analyze media directly without transmitting data to external systems. They tend to be sophisticated and require significant user training, as their primary purpose is forensic analysis rather than routine detection. This category is best suited for cybersecurity teams.

- Cloud-based services represent the most widely adopted model. They support integration into existing workflows with minimal operational disruption.

- Browser extensions function similarly to cloud-based services but with a narrower capability scope, as they are limited to browser-accessible applications.

- Mobile detection applications enable users to analyze media directly on smartphones, making them well-suited for individual use cases.

- Real-time video call detection tools are designed to identify synthetic media during live video calls, with integrations available across major communications platforms.

For most organizations, a combination of a cloud-based service and targeted standalone software for high-risk personnel provides sufficient coverage. Some vendors also offer unified solutions that consolidate multiple detection types into a single platform.

How to Pick the Right Deepfake Detection Tool

Selecting an inappropriate deepfake detection tool can result in misallocated resources or, more critically, a false sense of security that diminishes reliance on complementary protection layers.

The selection process follows an inside-out approach, beginning with a clear definition of organizational needs before evaluating available solutions. Organizations should assess the following:

- Which assets require protection

- Who is the most likely attacker

- What forms of synthetic media present the greatest exposure, or more precisely, what synthetic media could be introduced most seamlessly into routine workflows

- What is the projected cost of a successful deepfake attack

- What would a false positive cost, a factor that is frequently overlooked despite carrying measurable operational consequences

Once the organizational threat profile is defined, tools should be evaluated against it. For example, organizations that rely heavily on live video calls involving senior personnel should treat real-time deepfake video detection as a core requirement.
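The cost questions in the assessment above can be weighed against each other with a rough expected-value calculation. The sketch below is one possible way to do so, not an established method: every parameter name and figure is an assumption, and a real evaluation would use ranges rather than single estimates.

```python
def net_annual_benefit(
    expected_attacks_per_year: float,
    attack_cost: float,
    detection_rate: float,
    false_positives_per_year: float,
    cost_per_false_positive: float,
    tool_cost_per_year: float,
) -> float:
    """Expected yearly benefit of a detection tool, net of its own costs.

    Benefit: attack losses the tool is expected to prevent.
    Costs:   time lost to false alarms plus the tool's price.
    """
    if not 0.0 <= detection_rate <= 1.0:
        raise ValueError("detection_rate must be in [0, 1]")
    prevented_loss = expected_attacks_per_year * attack_cost * detection_rate
    false_alarm_cost = false_positives_per_year * cost_per_false_positive
    return prevented_loss - false_alarm_cost - tool_cost_per_year


# Illustrative figures only.
print(net_annual_benefit(
    expected_attacks_per_year=0.25,
    attack_cost=4_000_000,
    detection_rate=0.5,
    false_positives_per_year=100,
    cost_per_false_positive=500,
    tool_cost_per_year=50_000,
))
```

Making the false-positive term explicit shows how a tool with a high detection rate can still be a poor fit if it interrupts routine workflows too often.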