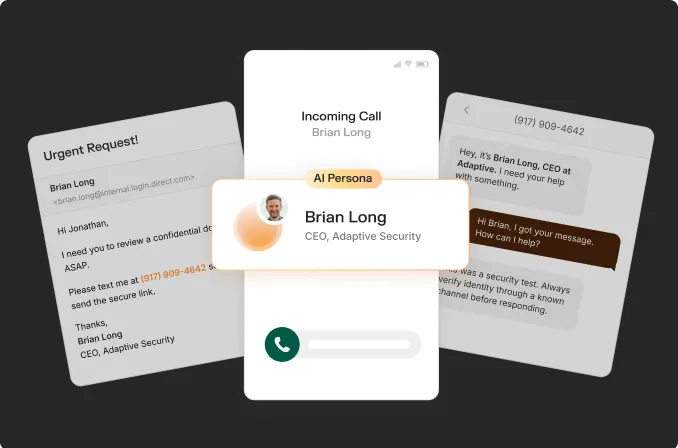

AI voice cloning scams represent an active and rapidly escalating enterprise threat. According to Vectra AI's AI Scams in 2026: How They Work and How to Detect Them report, AI-powered scams surged 1,210% in 2025; AI voice cloning scams were identified as a top enterprise risk. Cyber threat actors are now capable of cloning a trusted individual's voice within seconds, contacting a finance team under false pretenses, and extracting significant funds before the fraud is detected.

This guide covers how organizations can defend against deepfake vishing attacks, including AI voice cloning and phishing threats, with actionable measures across the following areas:

- How AI voice cloning works

- The most common AI voice cloning scam scenarios and how they are executed

- Methods for detecting cloned voices in real time

- Approaches to building security awareness training programs that produce measurable changes in employee behavior

Strengthen organizational resilience against AI voice cloning by implementing structured, behavior-driven defenses with Adaptive Security before incidents occur.

What Is AI Voice Cloning and How Does It Work?

AI voice cloning is the process of using machine learning models to analyze the recordings of a real person's voice and generate a replica that closely resembles the original. Technology that was once confined to film studios and research laboratories is now accessible to any individual with an internet connection.

Modern AI voice cloning systems are trained on audio samples to learn the unique acoustic characteristics of a person's voice, including pitch, cadence, accent, tone, and speech patterns. Once trained, these models can generate new speech in that voice from any text input through text-to-speech synthesis. The result is an audio output that is nearly indistinguishable from the genuine voice.

According to McAfee's Scammers Use AI Voice Cloning Tools to Fuel New Scams 2025 report, AI voice clones trained on real voices are as believable as genuine recordings in listener tests.

What Is the Difference Between AI Voice Cloning and Deepfake Voice Cloning?

AI voice cloning refers to the technical process of replicating a voice using machine learning. Deepfake voice cloning refers to the use of that replica in a deceptive context, most often to impersonate an individual the target trusts. Deepfake voice cloning is frequently paired with synthetic video to produce a fully fabricated audio-visual impersonation, as demonstrated in several high-profile enterprise fraud cases. The underlying technology is the same; the distinction lies in intent and delivery method.

What Are AI Voice Cloning Scams and How Do They Work?

AI voice cloning scams occur when a cloned voice is used to deceive a target into taking a harmful action, such as transferring funds, surrendering credentials, or authorizing system access that benefits the attacker. The cloned voice establishes trust, while urgency, narrative framing, and follow-up communications reinforce the deception.

AI voice cloning scams follow a predictable lifecycle:

- Target selection: The threat actor identifies a high-value target, typically an individual with financial authority or system access.

- Voice harvesting: The threat actor collects audio samples of the individual to be impersonated from publicly available sources.

- Clone generation: The attacker produces a replica voice model using an AI voice cloning tool.

- The call: The attacker contacts the target using the cloned voice, paired with a spoofed caller ID to reinforce the deception.

- Extraction: The target is pressured into transferring funds, sharing credentials, or authorizing access before verification is possible.

The entire process, from audio harvesting to a finished clone, can take under 30 minutes with current tools.

Address the speed and scale of AI-powered attacks with Adaptive Security's proactive simulation and training programs designed for modern threat environments.

How Little Audio Do Scammers Actually Need?

According to McAfee's 2025 report, just three seconds of audio is enough to produce a clone with an 85% voice match to the original. For vishing attackers, three seconds of audio is easily obtained.

Higher-quality clones, however, require more audio input, although basic AI voice cloning models can still produce them with as little as 90 seconds of audio.

How Scammers Harvest Voices for AI Voice Cloning

Cyber threat actors do not require direct access to an individual in order to harvest voice samples. Publicly available audio is sufficient. Common sources include:

- Social media videos posted on LinkedIn, Instagram, Facebook, or TikTok

- YouTube content such as interviews, webinars, or conference presentations

- Podcast appearances and recorded earnings calls

- Voicemail greetings that play automatically when a call goes unanswered

According to the FTC's Consumer Protection Data Spotlight 2025, cyber threat actors also harvest voice samples from online content posted by family members, particularly for use in grandparent and emergency impersonation schemes. Executives and public figures face the highest exposure due to the volume of audio available about them online; however, any employee with a public digital footprint represents a potential source.

Reduce exposure to AI voice cloning risks by preparing employees to recognize and respond to cyber threats using Adaptive Security's training approach.

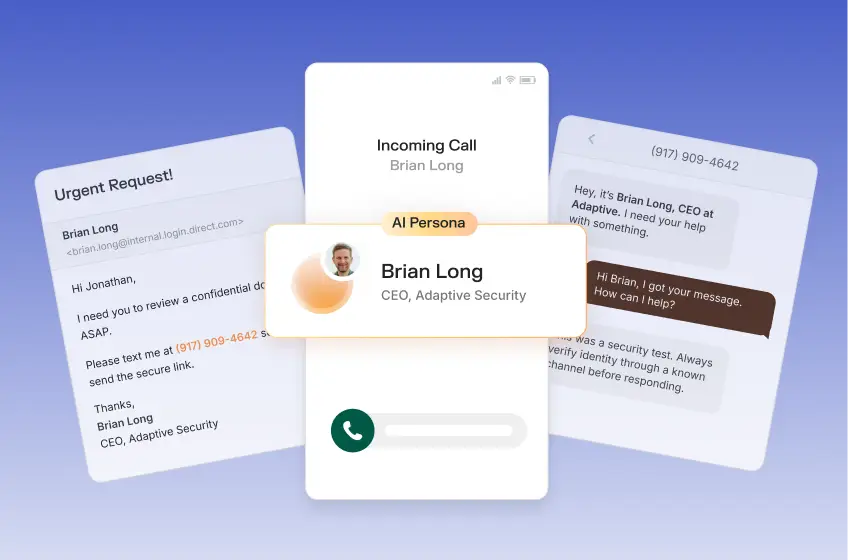

How Scammers Exploit AI Voice Cloning Through Social Engineering

After harvesting recordings and generating a convincing audio lure, attackers still need the victim to act on it. The cloned voice is only part of the attack. What makes AI voice cloning scams so effective is the psychological pressure applied during the call. Scammers exploit three established psychological triggers: urgency, authority, and familiarity.

A cloned CEO voice demanding an immediate wire transfer activates all three triggers simultaneously. The target recognizes the voice, perceives the request as coming from authority, and feels pressure to act immediately.

Spoofed caller IDs amplify the effect. When the displayed number matches the real person's known contact, the target has two trust signals reinforcing the deception simultaneously. Understanding how attackers layer manipulation techniques is the foundation of effective AI voice cloning prevention.

How AI Voice Cloning Fits into Vishing and Phone Call Phishing

Vishing, also known as voice phishing, is a category of social engineering attack carried out over phone calls. It predates AI voice cloning by decades. AI voice cloning eliminates the most significant vulnerability of traditional vishing: an unfamiliar or unconvincing voice.

Traditional vishing attacks relied on a scammer's ability to sound persuasive. With AI voice cloning, that skill is no longer required: the voice on the line now sounds like someone the target knows and trusts. According to CrowdStrike's Global Threat Report 2025, vishing attacks rose by 442% from H1 2024 to H2 2024, a rate of growth that coincides with the wider availability of AI voice cloning tools. AI vishing differs from traditional voice spoofing in significant ways, and the cyber threat has evolved beyond the scope of legacy security awareness programs.

Common AI Voice Cloning Scams Targeting Individuals and Organizations

AI voice cloning scams target both individuals and enterprises, though the attack vectors differ considerably. Individuals are typically targeted through emotional manipulation, while organizations are targeted through financial and operational authority. According to Heimdal Security's Voice Cloning Detection 2025 report, impersonation scams including AI voice cloning spiked 148% between April 2024 and March 2025, meaning impersonation scam volume reached roughly 2.5 times its earlier level in less than a year.

CEO Fraud and Business Email Compromise Using AI Voice Cloning

CEO fraud, also referred to as business email compromise (BEC) when conducted over email, represents the highest-stakes AI voice cloning scam targeting organizations. The attack follows a consistent pattern: a scammer clones the voice of a CEO and contacts the CFO or a member of the finance team with an urgent wire transfer request, frequently outside of business hours to reduce the likelihood of in-person verification.

According to the FBI's Internet Crime Report 2025, business email compromise using AI voice cloning generated $3.046 billion in losses in 2025. According to the same report, AI-enabled BEC with voice cloning is increasingly embedded in attacks causing billions in organizational losses year over year.

The attack is effective because it exploits the same dynamics that enable organizations to function: employees trust their leadership and respond to urgency. To identify and prevent modern impersonation attacks at the organizational level, the pattern of authority exploitation must be examined across all phishing attack types.

Grandparent and Family Emergency AI Voice Cloning Scams

In family emergency AI voice cloning scams, phishing attackers clone the voice of a family member, typically a grandchild or younger relative, and contact an older adult claiming to be in danger. The fabricated emergency may involve a car accident, an arrest, or a medical situation requiring immediate financial assistance.

The effectiveness of these scams does not stem from gullibility, but rather the emotional override that occurs when an individual believes a loved one is in immediate danger. The cloned voice removes the final available line of defense, which is recognizing an unfamiliar voice on the other end of the call.

Bank and Financial Institution AI Voice Cloning Scams

In bank impersonation AI voice cloning scams, phishing attackers clone the voice of a financial institution representative and contact targets to request account verification, one-time passwords, or authorization for a transaction reversal. These calls are frequently paired with SMS spoofing, in which a text message from a seemingly legitimate bank number arrives just before or during the call to further establish credibility.

Voice cloning phishing attackers often initiate the call already in possession of partial account details sourced from prior data breaches or credential theft, which substantially increases the effectiveness of the attack. By the time the cloned voice is deployed, the target has already been primed to trust the interaction.

IT Help Desk Impersonation via AI Voice Cloning

In IT help desk impersonation attacks, the attacker clones the voice of an internal IT staff member and contacts an employee requesting a password reset, multi-factor authentication approval, or remote access tool installation. Help desk impersonation is a common AI voice cloning pattern specifically targeting employee credentials.

Prepare high-risk departments with targeted, role-specific training powered by Adaptive Security that reflects real-world attack patterns.

AI vishing scams are particularly effective in mid-to-large organizations where employees do not personally know every member of the IT team. The combination of a recognized department name, a convincing voice, and a routine-sounding request is sufficient to bypass the suspicion of most employees. The help desk vector represents one of the fastest-growing attack surfaces in enterprise environments.

Examples of AI Voice Cloning Scams and CEO Fraud

Documented AI voice cloning scam cases illustrate precisely how these attacks unfold and what makes them exceptionally difficult to identify in a moment of urgency.

The UK Energy Firm CEO Scam (2019) is one of the earliest major documented cases of AI voice cloning used in corporate fraud. The CEO of a UK-based energy company received a call from an individual who sounded exactly like the German executive of his parent company, instructing him to wire approximately $243,000 to a Hungarian supplier within the hour. The funds were immediately forwarded through a series of accounts and were never recovered. Investigators later confirmed that the voice had been synthesized using AI voice cloning technology, marking the first confirmed enterprise-level AI voice cloning fraud.

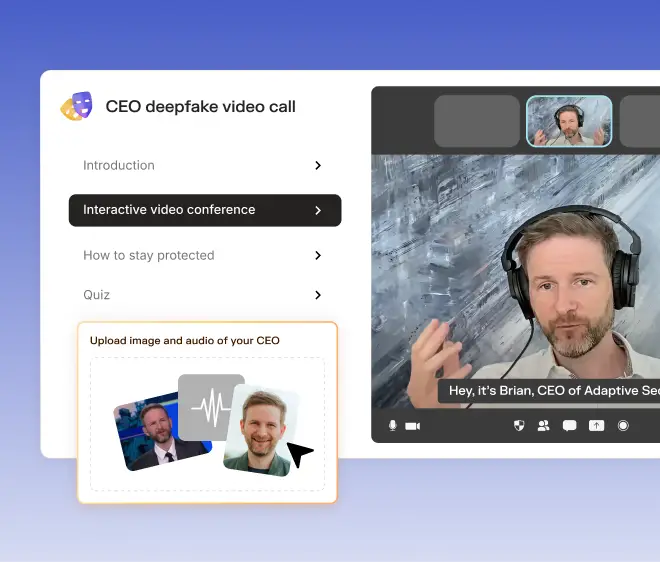

The Hong Kong Deepfake Video Call ($25 million, 2024) represented a significant escalation from audio-only AI voice cloning to full multimodal deepfake fraud. A finance employee at a multinational firm was invited to a video call on which the company's CFO and several senior colleagues appeared to be present. Every participant on the call was a deepfake, with AI-cloned voices synchronized throughout the entirety of the session. The employee authorized 15 transactions totaling $25 million before the fraud was identified. This case demonstrated that deepfake scams have expanded beyond audio impersonation into fully staged interactive environments.

The $35 Million UAE Bank Transfer (2021) involved a bank manager in the United Arab Emirates who received a call from an individual impersonating the director of a company he had previously worked with. The voice was a convincing AI clone. Supported by fraudulent emails that arrived in parallel, the manager authorized a transfer of $35 million. The case involved at least 17 individuals in a coordinated operation and remains one of the largest single AI voice cloning fraud events on record.

These deepfake voice cloning cases are not outliers. According to AllAboutAI's AI Voice Cloning Statistics 2025 report, over 8,400 documented fraud incidents linked to AI voice cloning resulted in $410 million in losses in H1 2025. Documented deepfake examples that have impacted enterprises confirm that the pattern of authority impersonation combined with financial urgency remains consistent across industries and geographies.

Prevent high-impact fraud scenarios by reinforcing employee readiness through continuous simulation with Adaptive Security.

How to Stay Safe from AI Voice Cloning Scams as an Employee

Protecting against AI voice cloning scams requires reducing personal audio exposure and establishing verification practices prior to any attempted impersonation. The most effective defense combines proactive minimization of the digital voice footprint with structured identity verification methods that can be applied in real time. By implementing the following measures, susceptibility to AI voice cloning scams can be reduced significantly.

- Audit each individual's public audio footprint. Employees should search their own names on YouTube, LinkedIn, and social media platforms. Where substantial audio or video content is publicly accessible, its continued availability should be reassessed on a routine basis. Scammers frequently target employees whose public recordings provide sufficient voice samples for model training.

- Never act on urgent financial requests from an unverified caller. Calls requesting immediate financial action should be terminated and verified through a known contact number. This applies regardless of perceived voice authenticity. According to McAfee's Beware the Artificial Impostor Report 2025, 35% of people cannot distinguish an AI-cloned voice from a genuine one, which means auditory recognition alone is not a reliable detection method.

- Report suspected AI voice cloning scams. In the United States, reports should be submitted to the FTC at reportfraud.ftc.gov and to local law enforcement. Where organizational targeting is involved, reports should also be filed with the FBI's Internet Crime Complaint Center (IC3). Early reporting supports identification of active campaigns and reduces exposure for additional potential targets.

Signs That a Call Might Be an AI Voice Cloning Scam

Recognizing an AI voice cloning scam in real time is difficult, though not impossible. Familiarity with the behavioral and contextual indicators provides the critical seconds needed to pause and verify before any action is taken.

The most consistent warning signs of an AI voice cloning scam include:

- Extreme urgency: The caller insists that action must be taken immediately, allowing no time for verification or questions.

- Requests for secrecy: The caller instructs the recipient not to inform others, to bypass standard approval processes, or to handle the request independently.

- Unusual channel or timing: The call arrives outside of business hours, from an unrecognized number, or through a communication method the purported sender would not typically use.

- Pressure to skip verification: The caller resists or discourages the recipient's offer to call back or confirm through a separate channel.

- Requests involving wire transfers, gift cards, or credential sharing: Legitimate authority figures do not request these actions over an unverified phone call.

According to McAfee's Scammers Use AI Voice Cloning Tools to Fuel New Scams 2025 report, 70% of people surveyed said they were not confident they could tell a cloned voice from a real audio clip. This inability to reliably distinguish cloned voices gives attackers a significant advantage. The appropriate assumption, when a call triggers any of these warning signs, is that independent verification must be completed before any action is taken.
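The rule that any single warning sign should trigger verification can be expressed as a simple checklist. The following is a minimal sketch, not a production control; the class and field names are hypothetical and simply mirror the warning signs listed above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CallObservation:
    """What the recipient noticed during the call (hypothetical field names)."""
    extreme_urgency: bool = False
    secrecy_requested: bool = False
    unusual_channel_or_timing: bool = False
    resists_verification: bool = False
    high_risk_request: bool = False  # wire transfer, gift cards, credentials


def triggered_signs(call: CallObservation) -> list:
    """Return the names of all warning signs observed, in declaration order."""
    return [name for name, observed in vars(call).items() if observed]


def requires_verification(call: CallObservation) -> bool:
    """Any single warning sign is enough to require independent verification."""
    return len(triggered_signs(call)) > 0


call = CallObservation(extreme_urgency=True, high_risk_request=True)
print(triggered_signs(call), requires_verification(call))
```

The check deliberately uses no weighting or scoring threshold: one indicator is sufficient, which matches the assumption that verification must precede any action.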

Improve detection and response outcomes by combining employee training with Adaptive Security's automated phishing analysis and remediation workflows.

Audio and Behavioral Cues That Reveal AI Voice Cloning

Beyond contextual indicators, acoustic and behavioral signals may suggest that a voice is AI generated. Current AI voice cloning technology, while highly convincing, still produces detectable artifacts in a number of cases:

- Unnatural pauses: Synthetic voices may hesitate at irregular points within a sentence, particularly before proper nouns or numbers. As AI voice cloning technology has advanced, these inconsistencies have become more difficult to identify without training, though they remain perceptible to a trained listener.

- Flat or uniform cadence: AI-cloned voices may lack the natural variation in pace and emphasis characteristic of human speech.

- Audio artifacts: These may include clipping at the start or end of words, background silence that appears unusually clean, or subtle robotic undertones.

- Absence of ambient sound: Authentic phone calls from an office or home environment contain background noise, even when partially reduced by noise-cancelling technology. Synthetically generated audio is often unnaturally silent by comparison.

Behavioral indicators are equally significant in detecting AI voice cloning scams. The genuine individual would not typically initiate calls requesting urgent wire transfers, instruct recipients to bypass established verification processes, or decline to confirm their identity through a secondary channel. When caller behavior does not align with the known conduct of the individual being impersonated, that discrepancy warrants immediate verification. Voice authenticity alone is not a reliable basis for trust.

How to Verify the Identity of a Caller During an AI Voice Cloning Attempt

When a call raises suspicion, out-of-band verification methods should be applied before any action is taken. The recommended process is as follows:

- End the call politely: Inform the caller that a return call will be placed immediately using a number already on file, not one provided during the call.

- Use a known contact number: Contact the individual directly using a number stored in organizational records or a verified directory.

- Use a pre-established safe phrase: A code word or phrase should be established in advance with family members and close colleagues, known to both parties. A caller unable to produce the agreed phrase cannot be confirmed as the person they claim to be.

- Ask a verifiable personal question: Request information that only the legitimate individual would know and that is not publicly accessible.

- Confirm via a separate channel: Send a message to the individual's known email address or verified messaging account to confirm whether the call was genuinely placed by that person.

No legitimate authority figure will object to a verification step on a high-stakes request. Resistance to verification is itself a significant indicator of an AI voice cloning attempt.
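Two of the steps above, calling back on a known number and checking a pre-established safe phrase, lend themselves to a short illustration. The sketch below assumes a hypothetical in-memory directory and a safe phrase stored only as a salted digest; the names `VERIFIED_DIRECTORY`, `callback_number`, and `safe_phrase_matches` are illustrative, not part of any real product.

```python
import hashlib
import hmac
from typing import Optional

# Hypothetical verified directory; in practice this would be an HR or
# identity system, never a number supplied during the suspicious call.
VERIFIED_DIRECTORY = {"ceo@example.com": "+1-555-0100"}


def callback_number(claimed_identity: str, number_given_on_call: str) -> Optional[str]:
    """Return the directory number for the claimed identity.

    The number offered by the caller is accepted as an argument only to make
    clear that it is deliberately ignored.
    """
    return VERIFIED_DIRECTORY.get(claimed_identity)


def phrase_digest(phrase: str, salt: bytes) -> bytes:
    """Derive a digest from a normalized safe phrase (case and spacing ignored)."""
    normalized = " ".join(phrase.lower().split())
    return hashlib.pbkdf2_hmac("sha256", normalized.encode("utf-8"), salt, 100_000)


def safe_phrase_matches(spoken: str, stored_digest: bytes, salt: bytes) -> bool:
    """Compare digests in constant time so the check leaks no partial matches."""
    return hmac.compare_digest(phrase_digest(spoken, salt), stored_digest)


salt = b"demo-salt"  # assumption: a per-person random salt in real use
stored = phrase_digest("Blue Heron at Noon", salt)
print(safe_phrase_matches("blue heron at noon", stored, salt))
print(callback_number("ceo@example.com", "+1-555-0199"))
```

Storing only a digest means the phrase itself never sits in a system an attacker could read, and the callback helper makes the "number already on file" rule hard to bypass by accident.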

Scale verification practices across the organization by embedding consistent training into workflows with Adaptive Security.

How Organizations Can Prevent AI Voice Cloning Scams With Policies and Controls

Individual awareness is necessary but insufficient in organizational environments. Preventing AI voice cloning scams at the enterprise level requires structural controls that prevent authorization of high-risk actions through a single communication channel. According to Right-Hand AI's The State of Deep Fake Vishing Attacks in 2025 report, deepfake vishing attacks increased by 1,633% in Q1 2025 compared to Q4 2024. Security policies must be calibrated to the scale and sophistication of current AI voice cloning threats.

- Implement cybersecurity awareness training for employees. Organizations should deploy structured, role-based cybersecurity awareness training that specifically addresses AI voice cloning scams, ensuring employees understand verification procedures, recognize social engineering patterns, and respond appropriately under pressure.

- Implement mandatory out-of-band verification for all financial requests. No wire transfer, credential change, or access authorization should be executable based solely on a phone call. A secondary verification step through an independent, trusted channel should be required before processing any such request. This policy significantly reduces exposure to AI voice cloning fraud targeting financial operations.

- Establish a no-exceptions callback policy. Any phone-based request involving money, credentials, or access must be verified by returning the call using a number from the organization's verified contact directory. The callback must not use contact details provided during the original communication.

- Apply zero-trust architecture to voice-based requests. Zero-trust security architecture assumes no communication channel is inherently trustworthy. Voice communications, regardless of perceived authenticity or caller ID presentation, should be treated as unverified until independently validated.

- Regularly conduct phishing simulation tests. Continuous phishing simulations, including voice-based scenarios, should be executed to identify strengths and weaknesses across departments. Phishing simulations help measure behavioral readiness, reveal procedural gaps, and validate whether employees consistently follow verification protocols in simulated vishing attack conditions.

- Deploy call authentication technology. STIR/SHAKEN is a framework designed to authenticate caller ID information on VoIP networks. Organizations should coordinate with telecom providers to implement call authentication and detect spoofed numbers at the network level. While not fully comprehensive, this introduces additional friction for phishing attackers using caller ID spoofing.

- Review voice biometrics carefully. Voice biometric authentication systems are directly impacted by AI voice cloning capabilities, since high-quality synthetic voices may bypass such controls. Where voice biometrics are used, they should function as one component within a multi-factor authentication framework rather than as a standalone mechanism.
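The out-of-band and zero-trust rules above can be sketched as a small authorization gate. This is one possible encoding under stated assumptions: the channel names, the set of high-risk actions, and the `may_execute` function are all hypothetical and would need to be mapped onto an organization's actual payment and identity workflows.

```python
from dataclasses import dataclass, field
from enum import Enum


class Channel(Enum):
    VOICE_INBOUND = "voice_inbound"            # the original, untrusted call
    CALLBACK_DIRECTORY = "callback_directory"  # return call to a number on file
    VERIFIED_EMAIL = "verified_email"
    IN_PERSON = "in_person"


# Assumption: actions the policy treats as high risk.
HIGH_RISK_ACTIONS = {"wire_transfer", "credential_change", "access_authorization"}

# Inbound voice is never counted as an independent confirmation.
INDEPENDENT_CHANNELS = {
    Channel.CALLBACK_DIRECTORY,
    Channel.VERIFIED_EMAIL,
    Channel.IN_PERSON,
}


@dataclass
class Request:
    action: str
    received_via: Channel
    confirmations: set = field(default_factory=set)


def may_execute(request: Request) -> bool:
    """Allow a high-risk action only after confirmation on a channel that is
    both trusted and different from the one the request arrived on."""
    if request.action not in HIGH_RISK_ACTIONS:
        return True
    independent = (request.confirmations & INDEPENDENT_CHANNELS) - {request.received_via}
    return len(independent) >= 1


request = Request("wire_transfer", Channel.VOICE_INBOUND)
print(may_execute(request))  # blocked until independently confirmed
request.confirmations.add(Channel.CALLBACK_DIRECTORY)
print(may_execute(request))
```

Because the gate ignores how convincing the caller sounded, it holds even when a cloned voice and a spoofed caller ID are both flawless.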

Reinforce policy effectiveness by validating employee behavior through Adaptive Security's realistic simulations and performance tracking.

Building a Verification-First Culture Against AI Voice Cloning

Policies are effective only when consistently applied under operational pressure. AI voice cloning scams exploit authority perception and urgency, particularly when phishing attackers impersonate executives. In such scenarios, employees may bypass established procedures despite prior awareness of policy requirements.

A verification-first culture requires that verification steps are consistently applied regardless of the identity or seniority of the requester. Leadership endorsement is a critical factor in establishing this behavior to prevent AI voice cloning scams. Executives should explicitly support verification procedures and reinforce that adherence to verification protocols will not result in negative consequences, even when delays occur.

Clear escalation pathways are also essential. Employees should have predefined procedures for reporting suspicious calls, eliminating the need for ad hoc judgment during high-pressure interactions. This reduces response variability and strengthens organizational consistency in handling potential AI voice cloning incidents.

Incident Response: What to Do If an AI Voice Cloning Scam Is Suspected

When AI voice cloning scams are suspected, structured incident response is required to limit potential damage. The following steps apply during and after suspected AI voice cloning phishing attempts.

During the suspected AI voice cloning scam call:

- Maintain composure and do not comply with any requests.

- Reference established verification policy to delay action, such as indicating that the request requires formal verification before execution.

- Avoid confirming or denying any information referenced by the caller.

- Terminate the call without providing additional information.

After the suspected AI voice cloning scam call ends:

- Report the incident immediately to internal security or IT teams.

- Preserve all available call data, including number, timestamp, and a written summary of the interaction.

- Notify the individual whose voice may have been impersonated to ensure awareness of potential compromise.

- Where financial transfers have occurred, contact the relevant financial institution immediately to request transaction recall procedures.

- File reports with the FBI IC3 and applicable national cybersecurity authorities.
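Preserving call data is easier when the fields are captured in a consistent structure immediately after the call. The following sketch shows one hypothetical record format covering the number, timestamp, and written summary mentioned above; the class and field names are assumptions, and real reporting portals define their own required fields.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass
class SuspectedVishingReport:
    caller_number: str
    received_at_utc: str       # ISO 8601 timestamp
    impersonated_person: str
    summary: str
    funds_transferred: bool = False


def build_report(caller_number, impersonated_person, summary,
                 funds_transferred=False, now=None):
    """Create a report, stamping it with the current UTC time by default."""
    now = now or datetime.now(timezone.utc)
    return SuspectedVishingReport(
        caller_number=caller_number,
        received_at_utc=now.isoformat(),
        impersonated_person=impersonated_person,
        summary=summary,
        funds_transferred=funds_transferred,
    )


def to_json(report: SuspectedVishingReport) -> str:
    """Serialize the report for hand-off to the security team."""
    return json.dumps(asdict(report), indent=2, sort_keys=True)


report = build_report(
    caller_number="+1-555-0199",
    impersonated_person="CEO",
    summary="Caller demanded an urgent wire transfer and refused a callback.",
)
print(to_json(report))
```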

Rapid reporting significantly improves containment outcomes by reducing the time available for phishing attackers to extend the campaign.

Accelerate incident response and reduce analyst workload with Adaptive Security's automated phishing classification and remediation.

How to Detect AI Voice Cloning Scams with Advanced Technology

Human detection of AI voice cloning is inherently limited. Employees are often deceived by deepfake voices even in controlled simulation environments. As a result, technological detection systems provide an additional layer of defense that does not depend on individual judgment.

AI voice detection tools analyze audio signals to identify characteristics associated with synthetic speech generation. Common deepfake voice cloning indicators include:

- Intonational irregularities: Disruptions in rhythm, stress patterns, or speech cadence that deviate from natural human speech

- Residual artifacts: Frequency distortions or anomalies introduced during synthetic voice generation

- Metadata inconsistencies: Misalignment between call metadata and audio signal properties

- Absence of natural background noise: Lack of environmental audio signatures typically present in legitimate communication channels

These systems can be integrated into enterprise communication platforms, call center infrastructure, and real-time monitoring systems to flag suspicious audio before employees respond to a request.

Current AI Voice Detection Tools and Their Limitations

AI voice detection technology continues to improve; however, it remains an incomplete control. In deepfake voice detection benchmarks, leading deepfake voice detection systems exhibit equal error rates above 13%, indicating measurable rates of both false positives and false negatives.
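The equal error rate (EER) is the operating point at which a detector's false positive rate and false negative rate are equal, so a lower value indicates a better detector. The sketch below approximates it by sweeping thresholds over a set of scores. It assumes a hypothetical detector that outputs higher scores for audio it considers more likely to be synthetic; the sample scores are invented for illustration and are not benchmark data.

```python
def equal_error_rate(genuine_scores, synthetic_scores):
    """Approximate the EER by testing every observed score as a threshold.

    Audio is flagged as synthetic when its score is >= the threshold.
    False positive: genuine audio that is flagged.
    False negative: synthetic audio that is not flagged.
    """
    thresholds = sorted(set(genuine_scores) | set(synthetic_scores))
    best_gap, best_eer = None, None
    for t in thresholds:
        fpr = sum(s >= t for s in genuine_scores) / len(genuine_scores)
        fnr = sum(s < t for s in synthetic_scores) / len(synthetic_scores)
        gap = abs(fpr - fnr)
        if best_gap is None or gap < best_gap:
            # Report the midpoint of the two rates where they are closest.
            best_gap, best_eer = gap, (fpr + fnr) / 2
    return best_eer


genuine = [0.1, 0.2, 0.3, 0.6]    # illustrative scores for real voices
synthetic = [0.4, 0.7, 0.8, 0.9]  # illustrative scores for cloned voices
print(equal_error_rate(genuine, synthetic))
```

With these illustrative scores, one genuine sample in four is flagged and one synthetic sample in four is missed at the balancing threshold, which is the kind of two-sided error an EER figure summarizes.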

The primary challenge is that AI voice cloning models and detection systems evolve concurrently. Detection systems trained on earlier synthetic speech patterns may fail to identify outputs generated by newer models with improved realism. In several cases, cloning capabilities currently outpace detection accuracy.

For organizations, AI voice cloning detection tools should be treated as a supplementary control within a broader security framework. They should not replace procedural safeguards or verification policies. A missed AI vishing detection that could have been prevented through mandatory verification procedures represents a process failure rather than a purely technical limitation. Deepfake video call scams involving simultaneous audio and visual manipulation further increase detection complexity across communication channels.

Strengthen human-layer defenses by training employees to recognize AI-generated cyber threats with Adaptive Security before relying solely on detection technologies.

Training Organizations Against AI Voice Cloning Scams

Technical controls and formal policies address procedural vulnerabilities, while training addresses human behavioral risk. Employees who have not encountered realistic AI voice cloning scenarios are less likely to respond appropriately under operational pressure compared to those exposed to structured simulations. According to Korshunov and Marcel's Deepfake detection: Humans vs. machines (2020), human accuracy in identifying high-quality audio deepfakes falls to 24.5%, which suggests that the threat exploits a perceptual limitation that traditional, non-specialized cybersecurity awareness training is unlikely to bridge.

The objective of AI voice cloning awareness training is measurable behavioral change. Knowledge retention alone is insufficient if employees continue to comply with fraudulent requests under pressure. Effective programs prioritize pause, verification, and escalation behaviors.

Build a proactive security posture by implementing continuous, behavior-driven training with Adaptive Security tailored to evolving AI-powered cyber threats.

What an Effective AI Voice Cloning Phishing Simulation Looks Like

Effective AI voice cloning simulations utilize synthetic audio generated through AI systems to replicate real-world attack conditions. Traditional role-play simulations using human actors do not accurately represent the cyber threat environment created by modern AI-generated voices.

An effective AI voice cloning simulation includes:

- Role-specific scenarios: The CFO receives a simulated CEO voice requesting urgent financial transfer approval, while IT personnel receive simulated internal requests for credential resets. Scenario design should reflect role-based exposure risk.

- Realistic urgency and social pressure: Simulations should replicate time-sensitive pressure conditions consistent with real attack patterns rather than simplified test environments.

- Immediate post-simulation feedback: Employees who comply with simulated requests should receive structured feedback outlining the vulnerability demonstrated and correct response procedures.

- Tracked metrics: Compliance rate, reporting rate, and time-to-report should be measured to evaluate training effectiveness over time.

AI voice cloning simulations should be positioned as learning mechanisms rather than regulatory evaluations, as behavioral adaptation improves when employees are able to safely experience realistic scenarios.

Validate employee readiness with hyperrealistic simulations delivered by Adaptive Security that replicate AI voice cloning attacks across channels.

Role-Specific AI Voice Cloning Awareness Training for Employees

AI voice cloning risk exposure varies significantly across organizational roles. Generalized training programs may fail to address specific attack vectors relevant to different teams.

- Finance and accounts payable teams receive training focused on business email compromise (BEC) scenarios involving fraudulent wire transfer requests and executive impersonation using AI voice cloning.

- IT and help desk staff receive training focused on credential reset manipulation, internal authority impersonation, and service desk exploitation techniques.

- Executives and senior leadership receive training on voice exposure risk, since executive recordings are frequently used to generate AI voice cloning models. Their communication behavior also influences organizational urgency culture.

- General employees receive baseline training on recognition, verification protocols, and escalation procedures.

According to Deloitte's AI Training Stats 2026 Report, AI-driven personalized learning increased employee engagement in AI voice cloning awareness training by 30%. Role-based segmentation aligns training content with operational relevance, improving retention and behavioral application. Capabilities vary significantly across deepfake awareness training platforms, so simulation systems should be compared before one is selected.

Equip employees with the skills to identify deepfake and voice-based cyber threats before they result in financial or data loss through Adaptive Security.

How to Measure Whether an Organization-Wide AI Voice Cloning Awareness Training Is Working

AI voice cloning awareness training effectiveness must be assessed through behavioral and operational metrics rather than completion rates alone.

- Simulation compliance rate over time: A declining rate of compliance with simulated fraudulent requests indicates improved behavioral resistance to AI voice cloning attacks.

- Voluntary reporting rate: Increased proactive reporting of suspicious calls indicates stronger security awareness and organizational trust in escalation pathways.

- Time-to-report: Reduced reporting latency decreases attacker dwell time and limits potential impact.

- Post-simulation assessment scores: These measure understanding of response procedures and reinforce correct behavioral patterns.

According to VirtualSpeech's Top 40 AI Training Stats in 2026 report, learners improved their skills by 25.9% using AI roleplay simulations, a result that is relevant to AI voice scam training. Simulation-based approaches tend to produce stronger behavioral outcomes than passive training formats. Organizations should run refresher AI voice cloning simulations quarterly, and immediately after any significant AI voice cloning incident in their industry.
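As a rough illustration, three of the metrics listed above (compliance rate, reporting rate, and time-to-report) could be computed from per-employee simulation records along the following lines. This is a minimal sketch: the `SimulationResult` schema, the field names, and the sample data are assumptions made for the example, not the output format of any particular platform.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class SimulationResult:
    """One employee's outcome in one simulated vishing call (hypothetical schema)."""
    employee_id: str
    call_time: datetime
    complied: bool                    # employee carried out the simulated fraudulent request
    reported_at: Optional[datetime]   # None if the call was never reported


def summarize(results: list) -> dict:
    """Return compliance rate, reporting rate, and median minutes-to-report."""
    if not results:
        raise ValueError("no simulation results to summarize")
    total = len(results)
    complied = sum(r.complied for r in results)
    # Reporting delay in minutes, only for calls that were actually reported.
    delays = [
        (r.reported_at - r.call_time).total_seconds() / 60
        for r in results
        if r.reported_at is not None
    ]
    return {
        "compliance_rate": complied / total,
        "reporting_rate": len(delays) / total,
        "median_minutes_to_report": median(delays) if delays else None,
    }


# Made-up sample data for one simulation round.
start = datetime(2026, 1, 12, 9, 0)
round_one = [
    SimulationResult("e1", start, True, None),
    SimulationResult("e2", start, False, start + timedelta(minutes=4)),
    SimulationResult("e3", start, False, start + timedelta(minutes=10)),
    SimulationResult("e4", start, True, start + timedelta(minutes=30)),
]
summary = summarize(round_one)
print(summary)
```

Running the same summary after each quarterly round and comparing the values gives the trend the metrics describe: compliance rate should fall while reporting rate rises and time-to-report shrinks.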

How Adaptive Security Addresses AI Voice Cloning Scams

"When a new type of attack pops up, Adaptive allows us to deploy relevant content immediately." — Katie Ledoux, CISO

Organizations that deploy Adaptive Security achieve measurable improvements in their ability to detect, resist, and respond to AI voice cloning scams. The platform is designed to produce behavioral change at the employee level while providing security teams with continuous visibility into human risk.

Employees become more effective at identifying AI voice cloning scams through repeated exposure to highly realistic, multi-channel simulations that mirror the pressure of real attacks. These training simulations replicate voice phishing, deepfake video calls, and coordinated social engineering scenarios, enabling individuals to recognize patterns, pause and verify under pressure, and follow assigned protocols. Over time, AI voice cloning simulation compliance rates decrease, and reporting rates and time-to-report improve, indicating stronger behavioral resilience.

At the organizational level, security teams gain access to actionable data that supports targeted intervention. Dynamic risk scoring highlights high-risk individuals and departments, allowing for automated enrollment in role-specific training. Phish triage capabilities reduce manual workload by automating classification and remediation, enabling analysts to focus on complex or high-impact cyber threats, which strengthens the organization's overall defense posture against AI voice cloning scams.
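To make the idea of risk scoring with automated enrollment concrete, here is a deliberately simple sketch. It is not Adaptive Security's scoring method, which the text above does not describe. The `EmployeeHistory` fields, the role exposure weights, the 0.7/0.3 behavior weighting, and the 0.4 enrollment threshold are all hypothetical values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class EmployeeHistory:
    """Aggregated simulation history for one employee (hypothetical schema)."""
    employee_id: str
    role: str
    simulations: int   # simulated calls received
    complied: int      # simulated requests the employee carried out
    reported: int      # simulated calls the employee reported


# Illustrative role exposure weights; these numbers are assumptions.
ROLE_EXPOSURE = {
    "finance": 1.0,
    "it_help_desk": 0.9,
    "executive": 0.8,
    "general": 0.5,
}


def risk_score(h: EmployeeHistory) -> float:
    """Score in [0, 1]; higher means more compliance, less reporting, more exposure."""
    if h.simulations == 0:
        behavior = 0.5  # no data yet, so treat behavior as neutral
    else:
        compliance = h.complied / h.simulations
        non_reporting = 1 - h.reported / h.simulations
        behavior = 0.7 * compliance + 0.3 * non_reporting
    exposure = ROLE_EXPOSURE.get(h.role, 0.5)
    return round(behavior * exposure, 3)


def needs_enrollment(h: EmployeeHistory, threshold: float = 0.4) -> bool:
    """Flag an employee for role-specific training once the score reaches the threshold."""
    return risk_score(h) >= threshold


ap_clerk = EmployeeHistory("e1", "finance", simulations=4, complied=3, reported=1)
analyst = EmployeeHistory("e2", "general", simulations=4, complied=0, reported=4)
for person in (ap_clerk, analyst):
    print(person.employee_id, risk_score(person), needs_enrollment(person))
```

The point of the sketch is the shape of the calculation: observed behavior in simulations is combined with role-based exposure, and crossing a threshold triggers enrollment without manual review.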

Success is reflected in consistent employee verification behavior, reduced susceptibility to simulated attacks, and improved incident response speed across the organization.

Deliver targeted, role-based training aligned with risk profiles using Adaptive Security across finance, IT, and leadership teams.

Future Trends in AI Voice Cloning and Deepfake Scams

AI voice cloning is advancing faster than most organizational defenses. Understanding where the technology is heading allows security teams to prepare before the next generation of attacks arrives.

The next critical vulnerability is voice cloning at sub-100ms latency. Current real-time systems still carry measurable delays (typically 200–500ms) that create micro-pauses in conversation. Based on current development trajectories in edge AI inference and perceptual studies of synthetic speech, cloning systems could feasibly achieve latency under 100ms within 12–18 months. At that speed, synthetic speech closely matches the timing of natural human conversation, to the point where even trained listeners struggle to detect synthetic origins from audio cues alone.

The threshold matters, because at that point detection shifts entirely from listening to the call to verifying the caller's identity independently before the conversation even begins.

Multimodal attacks introduce a new vulnerability: targets assess audio and video together rather than independently, making them susceptible to coherence-based deception even when neither medium is individually flawless. Audio deepfakes can be detected through spectral analysis; video deepfakes through eye-gaze inconsistency or lip-sync errors. But when audio and video are generated by the same AI model, they're optimized for coherence, not necessarily realism.

This means phishing attackers gain a secondary advantage: they can exploit the target's cognitive bias toward internal consistency rather than absolute realism. A slightly uncanny video paired with perfect audio might be more convincing than either medium alone, because the brain accepts the combination as a coherent whole.

The convergence of audio and video cloning represents the next frontier of enterprise cyber threats — one that organizations must prepare for now, before it becomes the norm.

Regulatory frameworks are forming, but are not yet sufficient. The EU AI Act includes provisions governing synthetic media and AI-generated content. The FTC has taken enforcement action related to AI-generated voice impersonation. Several U.S. states have passed or are advancing legislation specifically targeting deepfake voice cloning. These frameworks will eventually impose compliance requirements on organizations, but enforcement is still catching up to the technology.

The AI voice cloning detection race will continue to accelerate. AI voice detection tool providers are developing models trained on the latest cloning outputs. AI voice cloning tool developers, including those building tools used by phishing attackers, are simultaneously improving output quality to evade detection. Organizations that rely exclusively on detection technology, without procedural and training controls, are exposed whenever detection lags behind generation quality, which happens frequently.

Take the first step toward reducing human risk by implementing Adaptive Security as a unified platform for simulation, training, and cyber threat response.

AI voice cloning attacks are becoming more convincing, more accessible, and more frequently combined with other synthetic media. The organizations that build layered defenses now will be significantly better positioned than those waiting for technology alone to close the gap.

Frequently Asked Questions About AI Voice Cloning Scams

Is AI Voice Cloning Legal?

AI voice cloning is generally legal for commercial and personal use when the creator has obtained explicit, documented consent from the voice owner, although the rules vary by jurisdiction. Using cloned voices without authorization for fraud, commercial gain, or impersonation can violate emerging legal frameworks such as the Tennessee ELVIS Act and the EU AI Act. Organizations should treat consent as a mandatory requirement for any internal AI voice use and implement strict controls and recordkeeping to remain compliant. Unauthorized use can lead to criminal charges for identity theft and costly civil litigation.

How Do Scammers Use AI Voice Cloning to Steal Money?

Attackers use AI voice cloning to execute highly convincing vishing (voice phishing) attacks by mimicking the vocal characteristics of executives or family members. By harvesting as little as 10 seconds of audio from social media or public speeches, they create synthetic replicas that bypass traditional verbal authentication and pressure targets into transferring funds or sharing credentials.

What Should Be Done If an AI Voice Cloning Scam Is Suspected?

If an AI voice cloning scam is suspected, the most effective defense is to immediately hang up and break the attacker's momentum. Never share sensitive data or authorize financial transactions based on a voice call alone. Instead, verify the request through a secondary, trusted channel, such as calling the person back on a pre-saved number or using an internal encrypted messaging app.

How Are Organizations Training Employees Against AI Voice Cloning Scams?

Organizations are training against AI voice cloning by integrating vishing simulations into mandatory security awareness training. Employees are trained to identify technical red flags, such as unnatural pacing or robotic artifacts, and to resist the artificial urgency common in social engineering. Beyond behavioral training, firms are implementing out-of-band (OOB) verification protocols, requiring a secondary approval method for all financial requests.
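An out-of-band verification rule of the kind described above can be expressed as a small policy check. The sketch below is illustrative only: the `Request` type, the `TRUSTED_CALLBACKS` directory, the channel strings, and the list of sensitive actions are hypothetical names invented for the example. The key assumption is that the inbound call never counts as verification; only a confirmation obtained through a channel registered in advance does.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Request:
    requester: str   # person the caller claims to be
    channel: str     # channel the request arrived on, e.g. "phone"
    action: str      # e.g. "wire_transfer", "credential_reset"


# Hypothetical directory of pre-saved callback channels, maintained separately
# from anything a caller says or provides during the call.
TRUSTED_CALLBACKS = {
    "ceo": {"phone:+1-555-0100", "chat:internal"},
}

# Actions that always require out-of-band confirmation (assumed list).
SENSITIVE_ACTIONS = {"wire_transfer", "credential_reset", "vendor_bank_change"}


def may_proceed(request: Request, confirmed_via: Optional[str]) -> bool:
    """Allow a sensitive action only after confirmation on a pre-registered channel."""
    if request.action not in SENSITIVE_ACTIONS:
        return True
    trusted = TRUSTED_CALLBACKS.get(request.requester, set())
    # A callback number supplied by the caller is not in the directory, so it fails here.
    return confirmed_via is not None and confirmed_via in trusted


urgent = Request(requester="ceo", channel="phone", action="wire_transfer")
print(may_proceed(urgent, None))                  # no confirmation yet
print(may_proceed(urgent, "phone:+1-555-0199"))   # number given by the caller
print(may_proceed(urgent, "phone:+1-555-0100"))   # pre-saved number
```

Keeping the directory of trusted channels outside the conversation is the design choice that matters: however convincing the voice sounds, nothing said during the call can change which channel is used to confirm the request.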

Key Takeaways: Protect Organizations from AI Voice Cloning Scams

AI voice cloning scams require surprisingly little raw material. A one-shot clone, used for a single interaction, can be generated from as little as 3 seconds of publicly available audio, while a reusable voice replica typically requires around 10 seconds. In practice, this means any employee or executive with a digital presence is a potential impersonation target. Key points for defending against this cyber threat:

- Human detection is unreliable. People cannot consistently identify deepfake voices, making a zero-trust approach to all voice communications essential — regardless of how familiar or authentic the caller sounds.

- Attack vectors have expanded. Vishing is no longer limited to financial fraud; attackers increasingly target IT help desks to steal credentials and gain unauthorized access.

- Out-of-band verification is the strongest control. Any urgent or high-pressure request delivered by phone should be verified through a separate, pre-established channel before action is taken.

- Training must reflect real conditions. Awareness programs need to go beyond basic modules and incorporate hyper-realistic voice simulations that build resilience under genuine pressure.

- The threat is evolving beyond audio. Multimodal deepfakes combining cloned voice with synthetic video mean visual confirmation is no longer a reliable fallback for identity verification.

Organizations that operationalize these controls can significantly reduce their exposure by combining procedural safeguards, behavioral training, and continuous simulation-based reinforcement.

Stay ahead of evolving AI voice cloning threats by continuously training employees with Adaptive Security to detect advanced social engineering tactics.

As experts in cybersecurity insights and AI threat analysis, the Adaptive Security Team is sharing its expertise with organizations.
