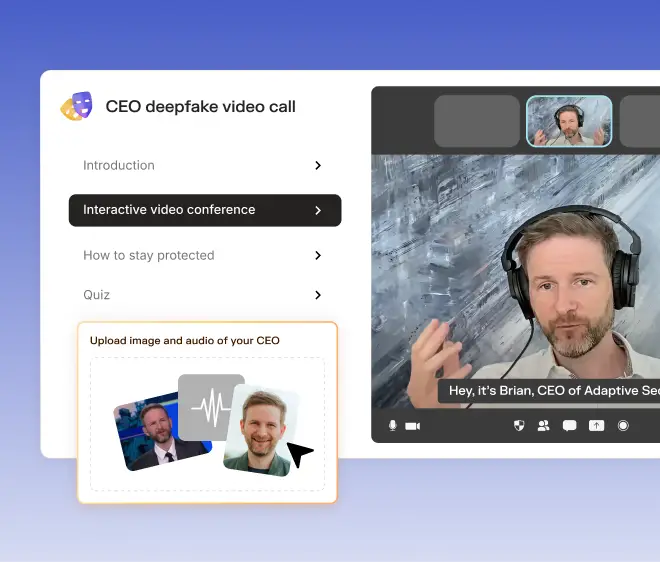

In early 2025, a multinational firm lost nearly $25 million after an employee received a voicemail from what sounded like their CEO, authorizing an immediate fund transfer. The voice was a perfect match, but it wasn’t their CEO at all.

This incident is an example of AI vishing (voice phishing) in action, one of the fastest-rising threats in cybersecurity today. AI vishing bypasses digital defenses and causes real-world damage by exploiting our most human vulnerability: trust in a familiar voice.

In this article, we’ll break down how AI voice spoofing works, why it’s escalating so quickly, and the concrete steps organizations can take to protect their teams from this new era of deception.

What is AI vishing?

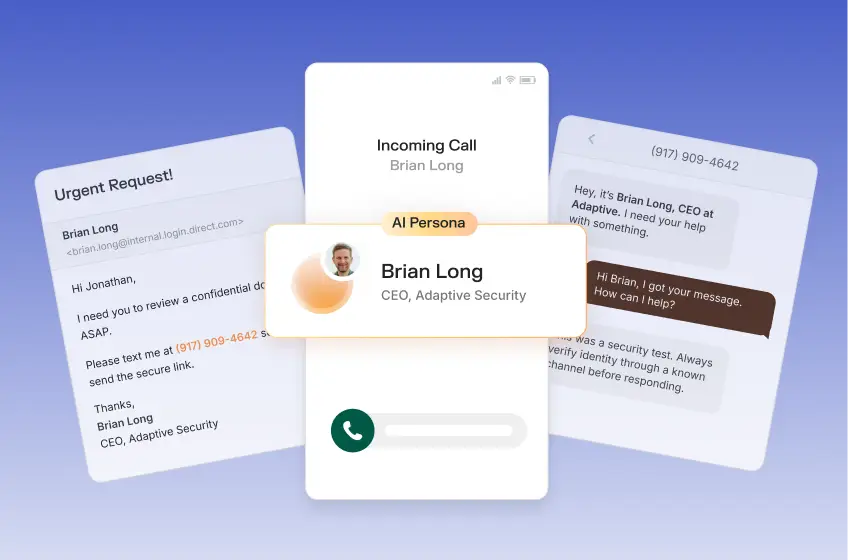

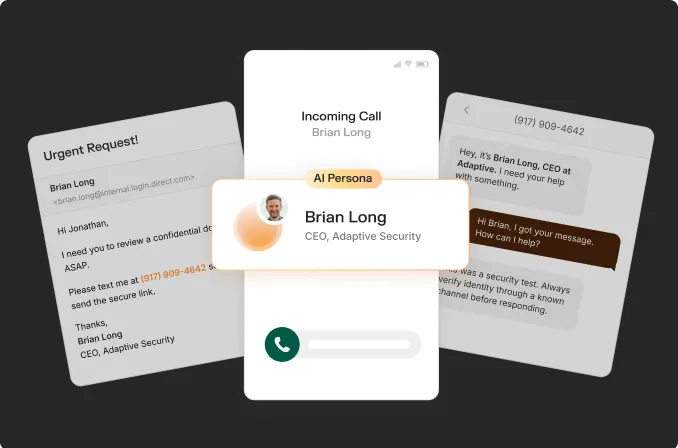

AI vishing uses artificial intelligence to recreate someone’s voice from just a few seconds of audio, such as a YouTube clip, podcast, or recorded meeting. Attackers then call or leave a message that sounds like a colleague or boss and ask you to transfer money, share credentials, or approve something urgent.

Traditional vishing was often clumsy, with bad accents, robotic recordings, or generic threats that were easy to spot. Today’s scammers use off-the-shelf voice cloning software that can build a convincing fake in minutes.

A 2024 study by researchers at UC Berkeley found that participants mistook an AI-generated voice for the real person 80% of the time, and correctly identified synthetic voices as fake only 60% of the time.

AI vishing calls are showing up everywhere, from fake “bank fraud alerts” to CEO frauds where scammers pose as company executives. Group-IB reports that AI-powered voice fraud attempts jumped 194% in 2024. Global losses tied to synthetic voice scams could reach $40 billion by 2027.

AI vishing is effective because it exploits something we instinctively trust (a familiar voice) and turns it into a weapon.

Why AI vishing is so dangerous

AI has supercharged traditional vishing, making voice scams far harder to detect. Here is what makes it so dangerous:

- Uncanny realism: Cloned voices can now sound almost identical to the real person, mimicking their tone, accent, and rhythm.

- Smarter targeting: Attackers no longer rely on generic scripts. AI tools scrape public data to build personalized stories.

- Scale and accessibility: With off-the-shelf tools, scammers can launch thousands of calls in minutes.

- Real-world impact: Deepfake voice scams, including AI-powered executive impersonation attacks, have already caused significant financial losses worldwide.

- Emotionally adaptive delivery: AI models can adjust pitch, pace, and word choice in real time.

AI vishing thrives because it attacks human instinct, not technology.

How to protect employees against AI vishing and voice spoofing

Organizations that take a stand against AI vishing rely on strategies that keep employees vigilant, strengthening the human firewall.

Adaptive Security simulates real AI-voice attacks to help teams practice these exact scenarios. Request a demo today.

As a technology reporter-turned-marketer, Justin's natural curiosity to explore unique industries allows him to uncover how next-generation security awareness training and phishing simulations protect organizations against evolving AI-powered cybersecurity threats.
