Real or fake? Nearly everything digital now raises the question, because threat actors are creating deepfakes realistic enough to take AI phishing to an unprecedented level.

Deepfakes are highly realistic, AI-generated or manipulated video, image, and audio content designed to deceive. While the technology itself has fascinating creative potential, deepfakes also serve as a weapon for cybercriminals. The fuel for their AI-generated illusions is often the vast amount of images, videos, and voice recordings readily available online, gathered through open-source intelligence (OSINT), which makes hyper-realistic attacks possible.

IT and security leaders recognize that deepfakes are only growing more dangerous. The line between real and fake is increasingly blurred, and awareness and preparation, which strengthen the human firewall, are the strongest defenses against these emerging threats. Everyone should be able to recognize examples of AI-generated videos and images, since most of us have already encountered them, knowingly or not.

How Do Deepfakes Work?

Deepfake technology uses artificial intelligence, specifically machine learning (ML) models such as generative adversarial networks (GANs). A GAN pits two models against each other: a generator that produces fakes and a discriminator that tries to tell them apart from real samples. Think of it like an apprentice forger trying to create a fake so perfect that an art critic can't tell it apart from the original. The process repeats millions of times until the AI produces compelling fake video, audio, or images.
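To make the forger-and-critic analogy concrete, here is a minimal sketch of the adversarial loop on one-dimensional numbers instead of images. Everything in it is an illustrative assumption rather than how any real deepfake tool works: the function name `train_toy_gan`, the "genuine" data centered on 4.0, the one-parameter forger, and the two-parameter critic are all hypothetical. Real systems use deep neural networks and far more data.

```python
import math
import random


def sigmoid(x: float) -> float:
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def train_toy_gan(steps: int = 500, batch: int = 32, lr: float = 0.05, seed: int = 0):
    """Train a one-dimensional toy GAN (hypothetical example).

    Forger (generator): fake = shift + noise, where only `shift` is learned.
    Critic (discriminator): p_real = sigmoid(w * x + c).
    """
    rng = random.Random(seed)
    real_mean = 4.0      # assumed "genuine" data: numbers near 4.0
    shift = 0.0          # the forger starts far from the real data
    w, c = 0.0, 0.0      # the critic starts with no opinion (always 0.5)
    history = []

    for _ in range(steps):
        real = [rng.gauss(real_mean, 0.5) for _ in range(batch)]
        fake = [shift + rng.gauss(0.0, 0.5) for _ in range(batch)]

        # Critic step: raise scores on real samples, lower them on fakes.
        grad_w = grad_c = 0.0
        for x in real:
            err = 1.0 - sigmoid(w * x + c)   # distance from "real" (1.0)
            grad_w += err * x
            grad_c += err
        for x in fake:
            err = sigmoid(w * x + c)         # distance from "fake" (0.0)
            grad_w -= err * x
            grad_c -= err
        w += lr * grad_w / batch
        c += lr * grad_c / batch

        # Forger step: nudge the fakes in the direction that raises the critic's score.
        grad_shift = sum((1.0 - sigmoid(w * x + c)) * w for x in fake) / batch
        shift += lr * grad_shift
        history.append(shift)

    return shift, (w, c), history
```

Calling `train_toy_gan(steps=500)` returns the forger's final `shift`, the critic's parameters, and the value of `shift` after every round. The forger is pushed toward whatever the critic currently scores as real, although toy setups like this one can oscillate around the real data rather than settle on it, which is one reason practical GAN training relies on extra stabilization techniques.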

The vast amounts of data necessary to train AI models are often meticulously gathered from publicly available sources. Cybercriminals typically scour:

- Corporate Websites: 'About Us' pages with staff photos and bios, promotional videos, recorded webinars, and executive interviews provide high-quality source material.

- Social Media: Profiles on LinkedIn, Facebook, Instagram, X, TikTok, and YouTube are treasure troves of images, videos, and audio recordings.

- Public Appearances: Keynotes, conference presentations, news interviews, and podcasts available online offer extensive audio-visual data.

Any digital asset an individual or organization makes public can be used to create a deepfake, which makes understanding and managing one's digital footprint more critical than ever.

The most common deepfake techniques today are:

- Face Swapping: Replacing one person's face with another's in a video.

- Voice Cloning: Replicating a person's voice from audio samples to produce new speech.

- Lip Syncing: Altering mouth movements in a video to match different audio.

- Full Body Puppeteering: Controlling the movements and actions of a person in a video.

Only a decade ago, this level of sophistication was a dream for cybercriminals. However, they're now able to easily access everything needed to craft a believable deepfake in minimal time.

5 Deepfake Examples in the Wild

Spend any time online and you will come across AI-generated videos and images. It pays to be proactive and study deepfake examples to understand the forms they take and the tactics cybercriminals employ. Let's explore five real-world examples of deepfakes and how publicly available information contributes to their creation.

1. Deepfake in Politics

Elections around the world see their fair share of deepfakes. In the United States, a political consultant used AI to create robocalls that mimicked President Joe Biden's voice during the 2024 primaries. Indonesians, meanwhile, saw a deepfake of Suharto, the country's longtime leader who died in 2008, endorse a slate of candidates.

Deepfakes in politics are particularly dangerous because they can rapidly spread misinformation, sway public opinion, and erode trust in democratic processes. A convincing fake can go viral far faster than fact-checkers and journalists can debunk it, and by the time they do, the damage is done.

2. Deepfake on Social Media

Oscar-nominated actor Tom Cruise isn't on TikTok, but @deeptomcruise is. In 2021, Chris Umé began posting videos that appeared to show Cruise engaging in a variety of activities that seemed unusual. Well, it wasn't Cruise at all. Umé, a visual effects artist with expertise in AI, teamed up with actor Miles Fisher to utilize deepfake technology, allowing a fake version of Cruise to be the star of a TikTok account with over 5 million followers and 19.5 million likes.

While this example was created for entertainment, the same technology in malicious hands can be used to spread disinformation, damage reputations, or deceive followers into fraudulent schemes.

3. Deepfake in Financial Fraud

Cybercriminals are using the name, image, and likeness of one of the world's wealthiest people to commit fraud. CBS News reported that ads on several social media platforms use deepfakes of Elon Musk to peddle investment opportunities. In one case, a 62-year-old woman followed through on the pitch and opened an account with more than $10,000 — all under the illusion that Musk himself was in the ads.

This type of deepfake fraud preys on trust and authority. When a well-known, credible figure appears to endorse an opportunity, victims are far less likely to question it, especially when the video is convincing enough to pass casual scrutiny.

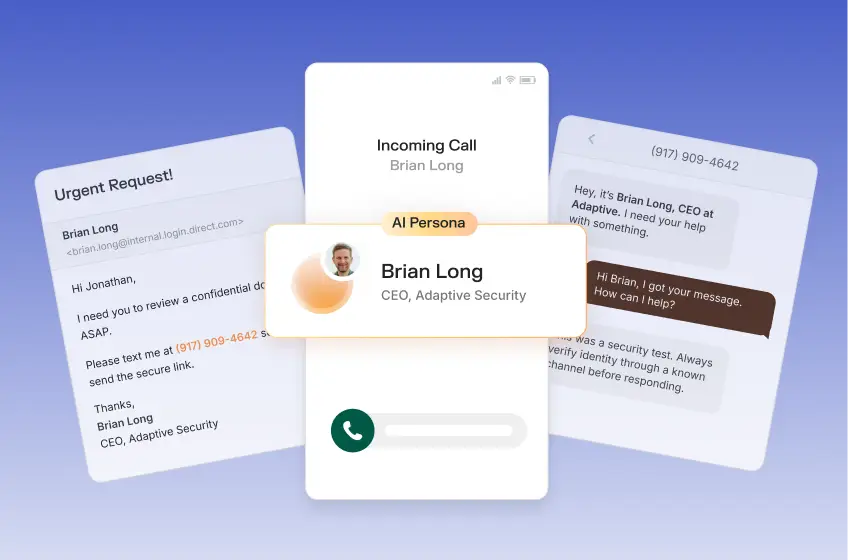

4. Deepfake Video Call Fraud

In early 2024, a finance employee at a multinational firm in Hong Kong was duped into transferring $25.6 million after attending a video conference call where every other participant — including the CFO — was a deepfake. The attackers had researched their target, gathered public video and audio of the company's executives, and used that data to generate convincing real-time deepfakes during the call.

This incident underscores that deepfake attacks are no longer theoretical. They are active, sophisticated operations capable of bypassing human judgment and causing catastrophic financial damage.

5. Deepfake for Identity Fraud

Deepfake technology is increasingly being used to bypass identity verification systems. Fraudsters create synthetic faces or manipulate live video feeds to fool facial recognition software used in KYC (know your customer) checks at financial institutions. By presenting a high-quality deepfake instead of their actual face, attackers can open accounts, access services, or impersonate victims entirely.

This represents a fundamental challenge to digital identity verification — a cornerstone of modern security — and illustrates how quickly AI is outpacing the defenses built to stop it.

Detecting Deepfakes Is Challenging

Deepfakes are hard to detect, and detection is only getting harder as the technology evolves. Endless personal and corporate data already lives online, so the focus must shift from only trying to limit what is publicly available to preparing for its misuse.

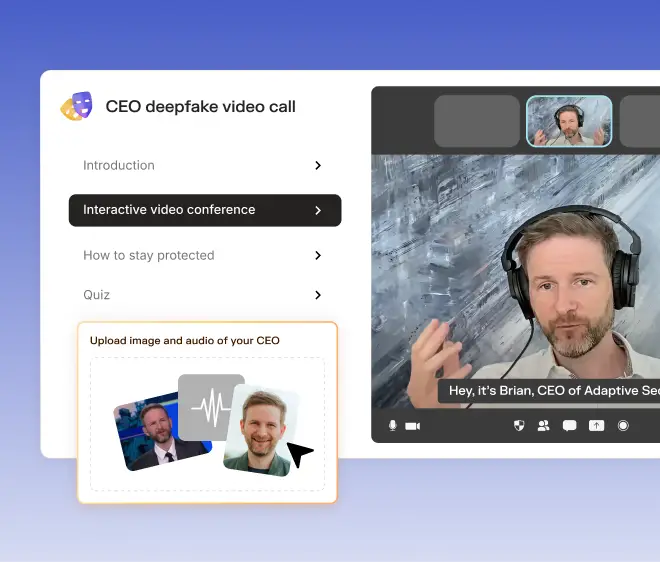

This is why security awareness training is critical for an organization. Adaptive Security understands that the only true defense is a prepared, skeptical, and well-trained workforce. Our next-generation platform for security awareness training and phishing simulations is designed to prepare organizations and their employees for emerging, AI-powered threats.

Organizations training employees with Adaptive Security's platform go far beyond static modules. Company OSINT allows IT and security teams to create their own deepfakes of senior leadership, and the fully customizable content library includes AI Content Creator.

As a result, employees engage with tailored training modules and then face realistic deepfake attack simulations that test what they have learned.

A technology reporter-turned-marketer, Justin draws on his natural curiosity about unique industries to uncover how next-generation security awareness training and phishing simulations protect organizations against evolving AI-powered cybersecurity threats.
