This guide provides security teams with a comprehensive framework for identifying, preventing, and responding to a phishing email incident. Email phishing attacks represent a persistent and evolving threat vector that can function as a standalone attack or as an initial access mechanism within larger incidents, such as ransomware campaigns. The following topics are addressed:

- What is a phishing email?

- Why does an email phishing attack matter in 2026?

- How does a phishing email work?

- The most common phishing email attacks used against companies

- How to spot phishing emails: 10 common red flags

- How to conduct a phishing email security training for employees?

- How to use technical controls and processes against phishing emails

- How to create a phishing email incident response playbook

- Future trends in phishing emails

A phishing email is a fraudulent message used by cybercriminals to deceive employees into taking an action that benefits the attacker. This type of social engineering attack involves cybercriminals impersonating a brand, an individual, or a scenario that appears credible to the intended target.

In early 2026, for example, the Federal Motor Carrier Safety Administration (FMCSA) issued a warning regarding fraudulent emails targeting motor carriers that impersonated the FMCSA or the United States Department of Transportation (USDOT). Similar incidents have been documented across industries.

The term "phishing" originates from the early days of this attack type, reflecting the practice of casting a wide net in the expectation that at least a subset of targeted employees will engage with the malicious message.

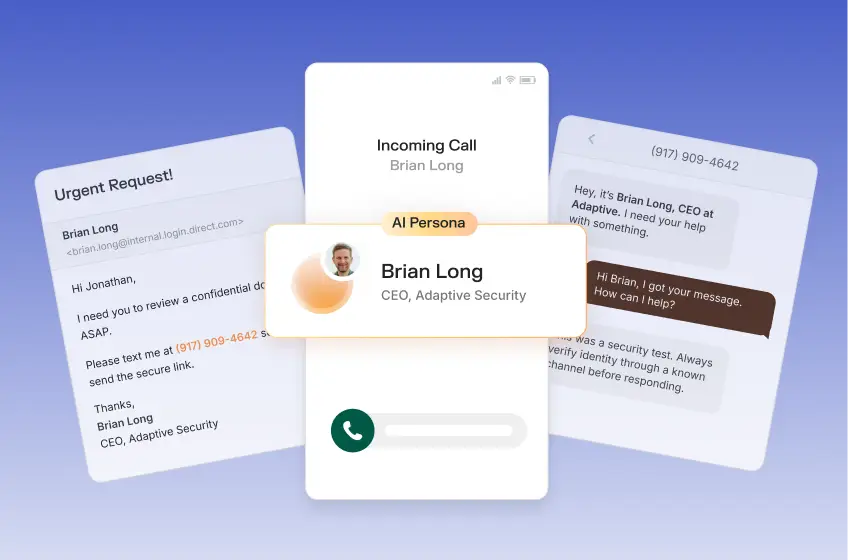

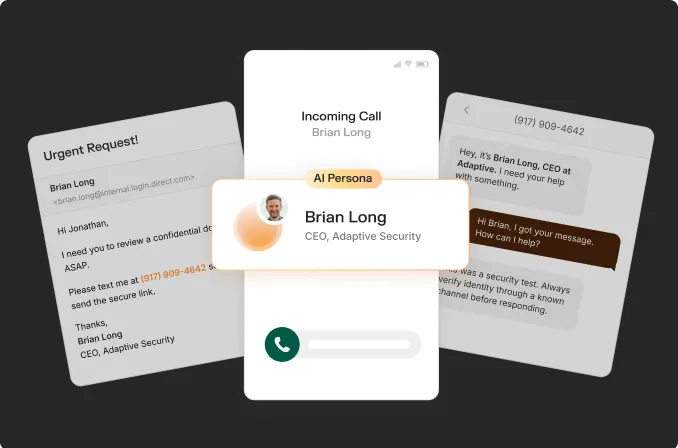

An AI-powered phishing email attack represents a significant evolution in this threat, as cybercriminals increasingly leverage AI to produce more sophisticated, targeted messages capable of bypassing both technical security controls and the intuitive recognition of suspicious emails that many employees have developed.

Phishing Email vs. Spam

Spam and phishing are similar, but represent distinct threats. A phishing email is a form of social engineering aimed at stealing sensitive information, whereas spam refers to unsolicited bulk email with a commercial objective, such as promoting a product, driving traffic to a website or application, or supporting an affiliate program.

The primary distinction lies in the level of danger each presents. Spam is generally disruptive but not harmful. Phishing, by contrast, poses a considerably greater risk, as the unauthorized acquisition of sensitive information can result in severe consequences for the targeted organization.

Volume represents another differentiating factor. Spam operates at scale, distributing messages to large recipient pools. While phishing campaigns can also be deployed in bulk, they tend to be more targeted, a characteristic that has become more pronounced with the rise of AI-powered phishing.

There is some overlap between the two concepts, which contributes to confusion, as cybercriminals may conduct broad credential-harvesting campaigns that impersonate widely recognized brands. Additionally, spammers can use bounce rates and read receipts to validate email addresses before launching a more precise email phishing attack. While a spam email checker provides a foundational layer of protection, it is insufficient as a standalone defense against sophisticated phishing attacks.

Key takeaway: Spam seeks the employee's attention, while phishing seeks access or direct financial gain.

Phishing Email vs. Spoofing

Another term that warrants clarification is "spoofing," which is often used alongside a phishing email to deceive employees. Spoofing is a technique cybercriminals use to falsify common security indicators.

Email header spoofing, for example, is a technique commonly employed in phishing attacks, enabling cybercriminals to forge the sender's name or address so that the message appears to originate from a legitimate domain.

When combined with spoofing, an email phishing attack becomes considerably more convincing. A cybercriminal may send an email that appears to originate from an organization's internal IT department, lending the message a false sense of legitimacy. Spoofing is also frequently observed in Business Email Compromise (BEC) attacks.
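
The header mismatches that often accompany spoofed mail can be illustrated with a minimal sketch built on Python's standard `email` package. The function name `spoofing_indicators` and the `trusted_domain` parameter are assumptions introduced for illustration. The three checks are simple heuristics rather than a complete detection method, and legitimate bulk-mail services can also produce a differing `Return-Path`.

```python
from email import message_from_string
from email.utils import parseaddr


def domain_of(address: str) -> str:
    """Return the lowercased domain part of an email address, or ''."""
    _, addr = parseaddr(address)
    return addr.rpartition("@")[2].lower() if "@" in addr else ""


def spoofing_indicators(raw_message: str, trusted_domain: str) -> list[str]:
    """List simple header mismatches that often accompany spoofed mail."""
    msg = message_from_string(raw_message)
    display_name, _ = parseaddr(msg.get("From", ""))
    from_domain = domain_of(msg.get("From", ""))
    findings = []

    # Display name mentions the trusted domain, but the real address lives elsewhere.
    if trusted_domain in display_name.lower() and from_domain != trusted_domain:
        findings.append("display name references trusted domain but address does not")

    # Replies would be routed to a different domain than the visible sender.
    reply_domain = domain_of(msg.get("Reply-To", ""))
    if reply_domain and reply_domain != from_domain:
        findings.append("Reply-To domain differs from From domain")

    # Envelope sender (Return-Path) does not match the visible sender.
    return_domain = domain_of(msg.get("Return-Path", ""))
    if return_domain and return_domain != from_domain:
        findings.append("Return-Path domain differs from From domain")

    return findings
```

An empty result does not prove that a message is genuine. Mail sent from a compromised legitimate account passes all three checks.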

Key takeaway: Phishing emails and spoofing can coexist in a single attack, but each technique can operate independently.

Phishing Email vs. Email Scams

Email can also serve as a direct vehicle for fraud without constituting an email phishing attack. The primary objective of phishing is information harvesting, enabling cybercriminals to obtain sensitive data such as usernames and passwords. Email scams, by contrast, are oriented toward direct financial gain.

A CEO email scam, for example, involves impersonating an executive to deceive employees into transferring funds to an account controlled by the cybercriminal. Although such schemes do not strictly qualify as phishing, given that the objective is financial rather than informational, phishing emails and email fraud are generally treated as equivalent threats, as both employ similar attack methods and are addressed through comparable security controls.

Why It Matters: Email Phishing Attack Statistics for 2026

Phishing emails represent a persistent threat across both enterprise and personal contexts. This threat is particularly acute in the United States: the ThreatLabz 2025 Phishing Report finds that the US remains a top target, even with protocols like DMARC and Google's sender verification blocking 265 billion unauthenticated emails.

Cybercriminals continue to exploit the gaps that remain, especially on the human front. Verizon's 2025 Data Breach Investigations Report found that 60% of all breaches involve the human element and that email serves as an initial attack vector in 27% of all breaches, indicating that a substantial proportion of enterprises remain inadequately prepared to address this threat and its consequences.

The financial impact is also considerable. IBM's 2025 Cost of a Data Breach Report puts the average total breach cost at $4.4 million, encompassing remediation, recovery, reputational damage, and lost business.

AI-generated phishing emails are also becoming more prevalent. According to Sift's Q2 2025 Digital Trust Index, 82% of phishing emails are created with the help of AI, while IBM's 2025 Cost of a Data Breach Report indicates that, among breaches in which attackers used AI, 37% involved AI-generated phishing.

The effectiveness of such campaigns is well documented. A 2024 study titled "Evaluating Large Language Models' Capability to Launch Fully Automated Spear Phishing Campaigns: Validated on Human Subjects," conducted by Bruce Schneier and fellow researchers, found that fully automated AI-generated emails achieved a click rate of 54%, comparable to that of emails produced by human experts, while reducing production costs by approximately 97%.

The 2026 Global Cybersecurity Outlook, published by the World Economic Forum, provides a concise characterization of this trend, noting that recent developments in generative AI are reducing the barriers to executing phishing attacks while simultaneously increasing their sophistication and credibility.

Phishing Email Examples Against Companies

Phishing email campaigns have targeted organizations across industries for years, producing consequences ranging from substantial financial losses to severe reputational damage. A representative case involves employees at several of the world's largest corporations being deceived into transferring more than $100 million to cybercriminals.

This case illustrates that no organization is immune to even rudimentary attacks. Large organizations face heightened exposure due to their expansive attack surfaces, as organizational scale and complexity introduce additional vulnerabilities. A greater number of employees and third-party relationships also expand the opportunities available to cybercriminals.

A second notable case involves a toy manufacturer that transferred more than three million dollars to a criminal group. This incident is particularly instructive in the level of reconnaissance it required. The perpetrators identified a natural point of organizational vulnerability, the transition between executives, and conducted detailed research into the communication patterns of the individuals involved.

One of the world's largest social media platforms also suffered a phishing incident in which a single employee was directed to a fraudulent intranet page, exposing source code and internal data. The employee self-reported the incident, enabling the security team to investigate promptly and contain the damage. This case is notable for a secondary reason: a strong organizational security culture was instrumental in limiting the impact of the breach.

Smaller organizations are equally at risk. A targeted email phishing attack against a real estate firm resulted in losses of hundreds of thousands of dollars. In that incident, cybercriminals impersonated an executive's assistant and requested payment for a specific project. The fraudulent sender address differed from the legitimate one by a single character, with no additional technical exploit, demonstrating that even rudimentary phishing methods can compromise employees.

Among the most thoroughly documented phishing email examples is one involving cybersecurity professional Troy Hunt, who publicly detailed how a phishing email compromised his mailing list. His account describes the mechanics of the attack, its operational impact, and an analysis of the phishing lure itself. He further notes that the process was fully automated, which prevented timely intervention once the data loss was identified.

A particularly significant observation from his account concerns the human factors that enabled the attack. Hunt noted that prior exposure to similar phishing attempts had not resulted in compromise, and attributed his vulnerability in this instance primarily to fatigue and diminished alertness. He acknowledged that the attacker had no means of knowing his condition and that the timing appeared coincidental rather than targeted.

This account underscores that even highly experienced cybersecurity professionals are susceptible during periods of reduced vigilance and that automated phishing campaigns can exploit such moments effectively.

How Does a Phishing Email Work?

Email phishing attacks vary considerably in their objectives, complexity, and intended targets. Such attacks may be designed to achieve direct financial gain or to harvest credentials. Campaigns targeting executives and other senior personnel tend to be more precisely crafted and involve a greater degree of prior research.

Despite these variations, most phishing email campaigns share a common structure, which can be organized into four steps. The effectiveness of each step is substantially enhanced through the application of AI.

Step 1: Phishing Email Target Research

Phishing email campaigns invariably begin with a reconnaissance phase conducted before any fake email is composed. The primary objective of this stage is to collect as much information as possible about the intended target, with the scope of that research varying according to the nature of the attack. Generic bulk campaigns require considerably less preparation than targeted operations such as email spear phishing.

For generic bulk phishing, this stage is minimal, typically involving compiling a validated email list and identifying a brand to impersonate. These campaigns operate on a probabilistic basis: a bulk attack reaching one million recipients at a 0.1% success rate still yields a thousand compromised targets.
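
The probabilistic model behind bulk campaigns is simple multiplication, as the short sketch below shows. The function name `expected_compromises` and the second, lower success rate are illustrative assumptions. Only the one-million-recipient, 0.1% scenario comes from the text above.

```python
def expected_compromises(recipients: int, success_rate: float) -> float:
    """Expected number of victims for a given list size and per-recipient success rate."""
    return recipients * success_rate


# The 0.1% figure is from the text; 0.01% is a hypothetical, more pessimistic rate.
for rate in (0.001, 0.0001):
    victims = expected_compromises(1_000_000, rate)
    print(f"{rate:.2%} of 1,000,000 recipients -> {victims:,.0f} compromised targets")
```

Even when the assumed rate drops by an order of magnitude, the campaign still produces a meaningful number of victims, which is why volume alone sustains this model.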

Targeted attacks, by contrast, demand substantially greater preparation. Cybercriminals collect information across multiple sources to construct credible, well-tailored campaigns, including:

- Organizational information gathered from LinkedIn, corporate websites, and related sources

- Personal information drawn from LinkedIn and other social media platforms, where each additional data point increases the plausibility of the attack

- Leaked data sourced from the dark web, deep web, and other channels, including verified email addresses that are frequently sold by other cybercriminals to facilitate subsequent attacks

- Technical information such as DNS records and WHOIS data

This information is aggregated to build a target profile, enabling cybercriminals to identify individuals with financial authority, access to sensitive information, or the ability to fulfill or facilitate a fraudulent request.

Two of the phishing email examples outlined above illustrate this dynamic. In the manufacturing incident, reconnaissance identified a period of organizational vulnerability during an executive transition. In the real estate case, it revealed that impersonating an executive's assistant, rather than the executive directly, represented a more effective attack vector.

AI-powered email phishing attacks accelerate this reconnaissance phase without sacrificing accuracy. By processing large volumes of data from the sources described above, AI systems enable cybercriminals to generate precise target profiles that can be acted upon immediately.

Step 2: Asset Preparation for a Phishing Email Attack

Conducting an email phishing attack also requires dedicated infrastructure, with the level of sophistication determined by the target's nature. Key infrastructure components include:

- Domains: Lookalike domains employ techniques such as character substitution (for example, replacing an uppercase "I" with a lowercase "l"), top-level domain swaps (such as substituting ".net" for ".com"), and similar methods designed to deceive recipients

- Email infrastructure: This component encompasses a broad range of options, from compromised legitimate accounts to phishing-as-a-service platforms that provide fully pre-built infrastructure for immediate use

- Credential harvesting pages: Cloned login pages replicating legitimate services are used to deceive employees into submitting sensitive information

- Testing: Cybercriminals routinely test fake email content against widely used detection tools, iteratively refining each message until it bypasses security controls

- AI: AI substantially affects every aspect of this preparation phase, enhancing the speed, scale, and precision with which these infrastructure components can be developed and deployed
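
The lookalike-domain techniques listed above can be checked defensively with a small amount of code. The following is a minimal sketch, not a production detector. The `CONFUSABLES` table, the "rn" to "m" rule, and every function name are assumptions introduced for illustration, and the code assumes simple two-label domains such as `example.com`.

```python
from typing import Optional

# Hypothetical confusable-character map; a real deployment would need a far larger table.
CONFUSABLES = str.maketrans({"1": "l", "i": "l", "0": "o", "5": "s"})


def skeleton(label: str) -> str:
    """Collapse visually confusable characters into one canonical form."""
    return label.lower().replace("rn", "m").translate(CONFUSABLES)


def split_domain(domain: str) -> tuple[str, str]:
    """Split 'name.tld' into (name, tld); assumes a simple two-label domain."""
    name, _, tld = domain.lower().rpartition(".")
    return name, tld


def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance computed row by row with dynamic programming."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + cost))
        prev = curr
    return prev[-1]


def lookalike_reason(candidate: str, legitimate: str) -> Optional[str]:
    """Return why `candidate` resembles `legitimate`, or None if it does not."""
    cand_name, cand_tld = split_domain(candidate)
    legit_name, legit_tld = split_domain(legitimate)
    if (cand_name, cand_tld) == (legit_name, legit_tld):
        return None  # exact match: this is the genuine domain
    if cand_name == legit_name:
        return "tld swap"
    if skeleton(cand_name) == skeleton(legit_name):
        return "character substitution"
    if edit_distance(cand_name, legit_name) == 1:
        return "single-character difference"
    return None


for sender_domain in ("example.net", "examp1e.com", "exmple.com", "example.com"):
    print(sender_domain, "->", lookalike_reason(sender_domain, "example.com"))
```

Checks of this kind are most useful when run against the sender domain of inbound mail and against newly registered domains that resemble the organization's own.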

Step 3: Craft the Phishing Email Lure

Every phishing email is constructed around a central lure, the element designed to initiate the deception. While the specific scenarios employed vary considerably, as illustrated in the phishing email examples outlined above, effective lures consistently leverage a defined set of psychological triggers:

- Urgency: A fake email typically pressures recipients to act immediately, with the intent of disrupting deliberate decision-making processes and circumventing verification procedures

- Authority: A fake email is designed to invoke a trusted sender, such as a senior executive, a recognized brand, or an established third-party partner

- Context: A fake email is constructed to be contextually credible, tailored to the recipient's role, and informed by prior reconnaissance. It frequently references known events or circumstances to reinforce plausibility

- Fear: A fake email exploits employees' concerns about professional consequences, whether arising from a perceived error or from failure to act

- Social proof: A fake email frequently indicates that other employees or internal stakeholders have already complied with or approved the request, reducing the recipient's perceived need for independent verification

Whether the lure succeeds depends almost entirely on human judgment, assuming the fake email bypasses existing security controls.
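
Some of the triggers above leave traces in the wording of a message. The sketch below is a deliberately naive keyword heuristic, offered only to illustrate the idea. The `TRIGGER_PATTERNS` table and its phrases are hypothetical, and the "context" trigger is omitted because it depends on knowledge of the recipient rather than on vocabulary.

```python
import re

# Hypothetical phrase lists; real lures vary widely and AI-written ones may avoid these words.
TRIGGER_PATTERNS = {
    "urgency": r"\b(urgent|immediately|right away|asap)\b",
    "authority": r"\b(ceo|cfo|director|on behalf of|legal department)\b",
    "fear": r"\b(suspended|terminated|penalty|disciplinary|locked)\b",
    "social proof": r"\b(already approved|others have|the rest of the team)\b",
}


def triggers_present(body: str) -> list[str]:
    """Return the names of the psychological triggers whose phrases appear in the body."""
    text = body.lower()
    return [name for name, pattern in TRIGGER_PATTERNS.items() if re.search(pattern, text)]


sample = (
    "URGENT: the CEO needs this wire sent before noon. "
    "Finance has already approved it, or your account will be suspended."
)
print(triggers_present(sample))
```

A message that combines several triggers is not necessarily malicious, but it is a reasonable candidate for a second look or an out-of-band confirmation.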

Step 4: Data Usage After a Phishing Email Attack

Once a recipient activates a malicious link or payload, the primary objective of the phishing email campaign moves within reach. Data exfiltration typically occurs through a credential harvesting page closely replicating a legitimate service. However, the attack does not conclude at the point of initial compromise; cybercriminals generally have defined plans for the exfiltrated data.

The immediate objective, credential theft, is achieved at this stage. Subsequent actions typically involve verifying that the stolen credentials are valid and modifying email security controls to suppress or eliminate security alerts.
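
One common way to suppress alerts after a compromise is to create mailbox rules that hide security notifications or forward mail externally. The sketch below shows how such rules might be audited. The `InboxRule` structure, its field names, and the `SECURITY_TERMS` list are hypothetical and do not correspond to any particular mail platform's API.

```python
from dataclasses import dataclass, field


@dataclass
class InboxRule:
    """Hypothetical, simplified representation of a mailbox rule."""
    name: str
    keywords: list[str] = field(default_factory=list)
    action: str = "none"  # e.g. "delete", "move", "mark_read", "forward"
    forward_to: str = ""


# Assumed vocabulary that security notifications tend to contain.
SECURITY_TERMS = {"security", "password", "phishing", "suspicious", "alert", "mfa"}


def suspicious_rules(rules: list[InboxRule], internal_domain: str) -> list[str]:
    """Return the names of rules that hide security mail or forward mail externally."""
    flagged = []
    for rule in rules:
        hides_alerts = rule.action in {"delete", "move", "mark_read"} and any(
            keyword.lower() in SECURITY_TERMS for keyword in rule.keywords
        )
        external_forward = rule.action == "forward" and not rule.forward_to.lower().endswith(
            "@" + internal_domain
        )
        if hides_alerts or external_forward:
            flagged.append(rule.name)
    return flagged
```

Reviewing rules created shortly after a suspected credential compromise is a reasonable first step when investigating a mailbox.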

Beyond credential theft, the exfiltrated data may serve as the foundation for several subsequent attack vectors, including:

- Secondary phishing campaigns informed by the additional intelligence gathered during the initial compromise

- Lateral movement across connected systems to broaden access

- Privilege escalation to obtain higher levels of system control

- Ransomware deployment, with phishing serving as the initial intrusion vector in 35% of cases, according to SpyCloud's 2025 Identity Threat Report

Most Common Phishing Email Attacks

Phishing email attacks present a diverse and evolving threat landscape. A clear understanding of their operational mechanics, objectives, and psychological triggers is essential for security teams tasked with detection and prevention. Given the considerable variation in sophistication across attack types, security teams cannot limit their focus to traditional phishing methods, as the threat has evolved substantially over time.

Bulk Email Phishing or Traditional Phishing

Bulk email phishing represents the more traditional form of the attack, prioritizing volume over personalization. Distributed simultaneously to large recipient lists, often numbering in the millions, bulk campaigns operate on a straightforward probabilistic model: even a marginal response rate generates profitable outcomes for the attacker.

While bulk phishing is more commonly associated with consumer-facing contexts, it is also used in corporate environments with similar logic. Cybercriminals impersonate widely recognized brands, relying on the probability that a sufficient proportion of recipients will use those services and engage with the email. The Troy Hunt case exemplifies this approach.

Bulk phishing campaigns do not always begin without prior intelligence. When a platform's email list is compromised, for example, cybercriminals gain access to information that, while insufficient to support personalization, improves the cost-effectiveness of the campaign by ensuring greater relevance among recipients.

Email Spear Phishing

Email spear phishing represents a fundamentally different approach from bulk email phishing, prioritizing precision and personalization over scale. By targeting specific individuals or groups, email spear phishing campaigns typically achieve substantially higher success rates and generate significantly larger returns for cybercriminals.

Personalization is the defining characteristic of spear phishing, making the reconnaissance phase the most critical component of the attack. Attackers invest considerable time and resources into developing a detailed understanding of their targets, aggregating data from LinkedIn, corporate websites, press releases, social media platforms, and leaked data sources.

This intelligence enables the construction of emails that reference the target by name and role, identify colleagues by name, and incorporate relevant organizational context such as internal news or recent events.

A particularly significant capability introduced by AI is the ability to replicate individual writing styles. By processing LinkedIn posts and other written material attributed to the person being impersonated, AI models can generate communications that closely mirror the individual's linguistic patterns, substantially increasing the attack's credibility.

Given the targeted nature of email spear phishing, it is useful to examine susceptibility by organizational role. The following personnel categories represent the most frequently targeted groups:

- Executives: Targeted both as the intended victims of the attack and as individuals to be impersonated, due to their level of access and organizational authority

- Finance personnel: Represent a direct pathway to financial fraud, including the authorization of improper payments

- IT personnel: Control access credentials and cloud environments, both of which are of considerable value to attackers

- HR personnel: Occupy a central position within organizational information flows, particularly regarding employee data. Communications originating from HR also tend to generate lower levels of suspicion

- Legal personnel: Handle sensitive and frequently confidential information, and routinely operate under conditions in which urgency is standard, making them susceptible to time-pressured requests

- New hires: Present an accessible target, as recently onboarded employees are typically unfamiliar with established processes and inclined to comply with requests from perceived authority figures

AI is rapidly altering the economics of email spear phishing, as it has with bulk campaigns. Historically, developing accurate spear phishing emails required substantial time and resources, driven largely by the demands of the reconnaissance phase. AI systems are now capable of executing the majority of this preparatory work while producing communications that are both thoroughly researched and linguistically refined.

Whaling Phishing Emails

Whaling is a more specialized form of email spear phishing that targets the most senior and high-value individuals within an organization, including CEOs, CFOs, CISOs, board members, and legal counsel. The fishing analogy extends naturally to this attack category: while traditional phishing casts the widest possible net and spear phishing targets specific individuals, whaling focuses on the highest-value targets available.

The distinction between whaling and email spear phishing extends beyond the seniority of the target. The level of investment required is the most significant differentiating factor. Given the considerably higher value of a successful whaling attack, cybercriminals are prepared to commit substantially greater resources to ensure the campaign's credibility.

This investment is reflected in the depth of the reconnaissance phase. Cybercriminals construct highly detailed target profiles by aggregating information from sources including:

- LinkedIn and professional networks

- SEC filings and earnings calls

- Press releases and other media coverage

- Conference appearances and recorded interviews

- Social media platforms

- Leaked or commercially available data broker records

Beyond the research and time investment, several additional characteristics distinguish whaling attacks from other phishing methods:

- Personal targeting: Whaling attacks are not necessarily confined to a professional context. Cybercriminals may impersonate tax authorities or legal processes directed at the executive's personal affairs

- Low volume: Whaling campaigns typically involve a single, precisely crafted email, bypassing security controls designed to detect high-volume activity

- Cultural resistance: Executives may resist security controls perceived as impediments to productivity, creating exploitable gaps in organizational defenses

- Executive assistants: Assistants authorized to act on behalf of an executive represent an expanded attack surface and are frequently targeted as an alternative entry point

- Operational conditions: Executives routinely manage multiple concurrent responsibilities, travel frequently, and communicate across non-standard channels and outside business hours, creating conditions of persistent time pressure that cybercriminals are positioned to exploit

Standard security controls provide a baseline level of protection for executives, but this profile requires additional layers of defense. Security teams should monitor executive travel schedules to anticipate and prepare for potential whaling attempts during periods of heightened vulnerability.

Executives and executive assistants also warrant dedicated attention within phishing awareness training programs, with explicit coverage of whaling as a distinct attack category. A targeted whaling email phishing test is a valuable practice, with equal emphasis on demonstrating the extent of executive exposure and reinforcing applicable security behaviors.

Business Email Compromise (BEC)

Business Email Compromise (BEC) is a form of email phishing attack in which cybercriminals leverage legitimate accounts, or closely spoofed equivalents, to deceive employees into taking actions that serve the attacker's objectives. BEC involves no technical exploits, relying entirely on human vulnerability to achieve its goals.

A defining characteristic of BEC is the absence of conventional attack artifacts. These campaigns typically do not deploy malicious links, downloadable attachments, malware, or suspicious files. The attack is generally executed through a plain-text request, indistinguishable in format from legitimate internal communication.

This poses a significant defensive challenge, as BEC attacks can bypass most technical security controls that rely on analysis of malicious artifacts. When compromised legitimate accounts are used, the attack may also circumvent human verification processes, because the message genuinely originates from the trusted sender rather than from a spoofed address.

Reconnaissance is therefore the foundational element of BEC. Generic or bulk BEC campaigns are largely absent from the threat landscape; instead, attackers typically monitor target inboxes over extended periods to understand vendor relationships, standard transfer amounts, and the identities of relevant personnel. This intelligence enables the construction of requests that are nearly indistinguishable from legitimate communications. Common BEC attack categories include:

- CEO fraud: Cybercriminals impersonate a chief executive or other senior leader, issuing a direct instruction, typically to a finance team member, requesting an urgent fund transfer

- Vendor fraud: Cybercriminals impersonate suppliers or vendors to notify the target of a pending payment or to redirect future payments to a fraudulent account. Attacks leveraging compromised accounts from legitimate vendors are not uncommon

- Legal fraud: Cybercriminals impersonate legal counsel or representatives to obtain sensitive information or authorize payments. Attacks of this type are particularly effective when the target organization is navigating a sensitive period, such as an acquisition or regulatory deadline

- Payroll fraud: Cybercriminals impersonate an employee and contact HR personnel to request modifications to payment information

- IT fraud: Cybercriminals impersonate a team member with existing account access to deceive IT personnel into granting access to a more privileged account

- Real estate fraud: Cybercriminals impersonate buyers, sellers, agents, or other parties involved in property transactions, intervening at critical closing stages to redirect funds

The combination of contextual sophistication and resistance to technical detection makes BEC one of the most consequential attack categories available to cybercriminals. According to the FBI's 2025 Internet Crime Report, BEC generated losses exceeding $3 billion, a 10% year-over-year increase from 2024, establishing it as the most financially destructive threat facing organizations.

Defending against BEC presents considerable challenges given its resistance to technical detection and its potential for substantial financial damage. Email security training for employees and rigorous verification procedures, particularly those that address the common fraud categories outlined above, are among the most effective available countermeasures.
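
A verification procedure for payment requests can be expressed as a simple rule set. The following is a minimal sketch under stated assumptions. The `PaymentRequest` fields, the `known_accounts` and `typical_max` lookups, and the sample values are all hypothetical, and a real process would draw them from a vendor master file.

```python
from dataclasses import dataclass


@dataclass
class PaymentRequest:
    """Hypothetical payment request as received by a finance team."""
    vendor: str
    amount: float
    bank_account: str


def verification_reasons(
    request: PaymentRequest,
    known_accounts: dict[str, str],
    typical_max: dict[str, float],
) -> list[str]:
    """Return the reasons a request needs out-of-band confirmation (empty if none)."""
    reasons = []
    account_on_file = known_accounts.get(request.vendor)
    if account_on_file is None:
        reasons.append("vendor not on file")
    elif request.bank_account != account_on_file:
        reasons.append("bank details differ from those on file")
    # Unknown vendors default to a ceiling of 0, so any amount is flagged.
    if request.amount > typical_max.get(request.vendor, 0):
        reasons.append("amount exceeds typical range")
    return reasons


request = PaymentRequest("Acme Supplies", 48_000.0, "GB00-9999")
print(verification_reasons(request, {"Acme Supplies": "GB00-1111"}, {"Acme Supplies": 20_000.0}))
```

When the list is non-empty, the request should be confirmed through a channel other than the email itself, such as a phone call to a number already on file.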

Clone Phishing Email

Clone phishing is a targeted attack technique in which cybercriminals replicate legitimate emails previously delivered to the intended recipient, introducing only minor modifications to achieve the attack's objective. The replication process encompasses all relevant content elements, including layout, structural formatting, and the sender's display name, while replacing original links or attachments with malicious equivalents.

The cloned email is subsequently delivered to the original recipient, accompanied by a message indicating a broken link or missing attachment, intended to direct the employee to interact with the replica rather than the legitimate original.

The attack chain follows a pattern consistent with other phishing methods; however, the reconnaissance phase is particularly important, as the attacker must first identify and obtain a legitimate email to replicate.

Once a suitable candidate is identified, the original email is cloned with the highest possible fidelity, preserving all content and branding elements while making minimal header adjustments and inserting the malicious payload, whether a link or an attachment.

In most cases, the cloned email is distributed from a spoofed address or domain. When sent from a compromised legitimate sender account, however, the attack becomes considerably more difficult to detect and defend against, as it can bypass standard security controls.
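
Because a cloned email keeps the original text and swaps only the links, a near-identical body that points to new hosts is a useful signal. The sketch below illustrates that comparison with Python's standard library. The function names, the URL pattern, and the 0.9 similarity threshold are assumptions chosen for illustration, and attachments are not considered.

```python
import re
from difflib import SequenceMatcher
from urllib.parse import urlsplit

# Simplified URL pattern; assumed adequate for plain-text bodies only.
URL_RE = re.compile(r"https?://[^\s\"'<>]+")


def link_hosts(body: str) -> set:
    """Return the set of hostnames linked from the message body."""
    return {urlsplit(url).hostname for url in URL_RE.findall(body)}


def text_without_links(body: str) -> str:
    """Strip URLs so that only the surrounding wording is compared."""
    return URL_RE.sub("", body)


def looks_like_clone(original: str, suspect: str, similarity_threshold: float = 0.9) -> bool:
    """True if the wording is nearly identical but the suspect links to new hosts."""
    similarity = SequenceMatcher(
        None, text_without_links(original), text_without_links(suspect)
    ).ratio()
    new_hosts = link_hosts(suspect) - link_hosts(original)
    return similarity >= similarity_threshold and bool(new_hosts)


original = "Hi Dana,\nYour invoice is ready: https://portal.example.com/invoice/42\nThanks, Accounts"
suspect = "Hi Dana,\nYour invoice is ready: https://portal-example.net/invoice/42\nThanks, Accounts"
print(looks_like_clone(original, suspect))
```

A check of this kind presupposes access to the original message, for example through a mail archive, and it cannot help when the clone is sent with links that reuse a compromised legitimate host.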

The principal danger of clone phishing lies in its exploitation of established trust. Unlike attacks that rely on unfamiliar senders or implausible scenarios, clone phishing presents recipients with a known email from a known sender, grounded in a prior legitimate interaction. This approach systematically undermines the instinct to treat unfamiliar communications with suspicion.

AI further enhances the effectiveness of this attack type by accelerating both the monitoring and cloning phases, reducing the time required to execute the attack, and substantially decreasing the likelihood of a missed opportunity due to timing constraints.

A well-integrated combination of technical security controls, rigorous operational processes, and comprehensive email phishing awareness training represents the most effective defensive posture against this threat.

Thread Hijacking

Thread hijacking is an attack technique in which a cybercriminal injects malicious content into an existing, legitimate email thread, leveraging the established context of the exchange to deceive the intended recipient into taking an unintended action.

An important distinction exists between clone phishing and thread hijacking. In clone phishing, the attacker replicates an existing email and redistributes it to the recipient. In thread hijacking, the attacker operates as an active participant within the conversation, waiting for an opportune moment to compromise it.

The most dangerous form of this attack involves compromised mailboxes, in which the attacker gains access to a legitimate account and replies directly from within it, bypassing most technical security controls by default.

In cases where direct account access is unavailable, cybercriminals may obtain thread history through alternative means, including observing previously compromised mailboxes, deploying malware designed to harvest email data, or exploiting data breaches that expose email content.

The effectiveness of thread hijacking derives substantially from contextual credibility. The legitimacy of the sender's identity, the active nature of the conversation, and the alignment of the malicious request with the existing thread collectively reduce the recipient's capacity to identify the attack.
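
When the attacker does not control a genuine mailbox, a hijacked reply has to come from an address that never took part in the conversation. The sketch below flags that case using standard message headers. The function names are assumptions for illustration, and the check offers no protection when the reply is sent from a compromised legitimate account.

```python
from email import message_from_string
from email.message import Message
from email.utils import getaddresses


def participants(msg: Message) -> set:
    """Collect every address that appears in From, To, or Cc of one message."""
    fields = msg.get_all("From", []) + msg.get_all("To", []) + msg.get_all("Cc", [])
    return {addr.lower() for _, addr in getaddresses(fields) if addr}


def unexpected_reply_senders(thread_raw: list, reply_raw: str) -> list:
    """Return senders of a reply who never appeared earlier in the thread."""
    known = set()
    for raw in thread_raw:
        known |= participants(message_from_string(raw))

    reply = message_from_string(reply_raw)
    if not reply.get("In-Reply-To"):
        return []  # the message does not present itself as a reply

    senders = {addr.lower() for _, addr in getaddresses(reply.get_all("From", [])) if addr}
    return sorted(senders - known)
```

A non-empty result means that someone new has joined an existing conversation as a sender, which merits confirmation before any request in that reply is acted upon.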

As with the attack types outlined above, thread hijacking follows a consistent operational framework, with particular emphasis on thorough reconnaissance and precise timing.

Other Types of Phishing Attacks and How They Enhance Email Phishing

According to the Verizon 2025 Data Breach Investigations Report, email was present in 27% of all reported breaches. The previous year's edition of the report found that email featured in nearly 100% of the top action vectors associated with social engineering breaches.

While email remains prevalent, other types of phishing attacks in 2026 are increasingly multi-channel by design, with cybercriminals combining platforms and strategies to craft a more compelling, cohesive deception that extends beyond the initial email. Complementary phishing techniques include:

- Quishing: A technique that substitutes a conventional hyperlink with a QR code to direct the recipient toward a malicious destination

- Vishing: Voice phishing in which cybercriminals impersonate a known colleague via phone call or voice message to reinforce the credibility of the primary message

- Deepfake video phishing: A technique in which cybercriminals fabricate video content, or engage the target in a live call, using the synthetic likeness of a known colleague or executive to enhance deception

- Smishing: SMS-based phishing in which a malicious link or reinforcing message is delivered via text message or messaging application

- Pharming: A more advanced technique in which cybercriminals corrupt the path between a URL and its corresponding IP address, redirecting users to fraudulent websites even when the correct address is entered directly. Pharming payloads can be delivered through phishing and are capable of compromising employees who bypass links and navigate directly to the source

- Watering hole attacks: Attacks in which cybercriminals inject malicious code into a website frequently visited by the target, which executes on the next visit. A phishing email may be used to direct the target to the compromised site

The overarching objective is to combine as many channels as possible to construct a narrative sufficiently compelling to deceive employees across multiple points of contact. A prominent recent case involved an engineering firm in which an employee received a spear phishing email purportedly from the organization's chief executive officer, directing the employee to join a video call featuring deepfake representations of senior executives and requesting authorization for a financial transfer. The employee complied, resulting in losses exceeding $25 million.

Explore the complete Adaptive Security phishing guide for a comprehensive understanding of multi-channel phishing threats and the strategies required to address them.

What Can Security Teams Learn from Common Phishing Email Types?

A close examination of the most prevalent email phishing attack types reveals several recurring characteristics. These patterns provide security teams with concrete guidance that can inform a comprehensive defensive strategy:

- Technical controls: Many phishing techniques can bypass traditional security controls. This does not diminish the importance of deploying such controls; however, relying exclusively on them represents a significant and potentially costly vulnerability

- Context and reconnaissance: The most sophisticated phishing campaigns invest heavily in contextual intelligence and narrative credibility, especially in a corporate setting. This presents a meaningful defensive opportunity, as disrupting the reconnaissance phase is critical to mitigating the most impactful attacks

- Awareness and processes: Robust verification protocols and well-structured email security training for employees are effective countermeasures against the human vulnerabilities that phishing campaigns are designed to exploit

- Compromised accounts: The use of compromised accounts substantially increases attack success rates, as they can bypass both technical controls and human verification. Continuous monitoring for compromised accounts, therefore, constitutes a critical component of an effective defensive strategy

- Artificial intelligence: AI enhances both the quality and the speed of phishing attack execution, improving the operational viability and precision of campaigns

- Expanding the trust paradigm: Contemporary phishing attacks render the established principle of distrusting the unknown insufficient on its own, requiring organizations to adopt a broader standard of verification that applies even to apparently known and trusted sources

These observations form the foundation of an effective organizational defense against phishing. Each of these areas will be examined in greater detail in the subsequent sections.

Advanced Phishing Email Techniques

While the preceding section examined the most prevalent and impactful phishing email attack types, cybercriminals employ additional advanced techniques and strategies to further enhance their campaigns' effectiveness.

Phishing-as-a-Service

Phishing-as-a-Service (PhaaS) refers to the commercialization of phishing toolkits that enable cybercriminals with limited technical expertise to deploy effective campaigns within a compressed timeframe. The model mirrors the software-as-a-service structure, with access typically obtained through a subscription fee or a share of the campaign's proceeds.

A broad range of PhaaS providers operate across the threat landscape, offering toolkits that encompass all the components required to execute a phishing campaign. These include infrastructure components, campaign management capabilities, and operational security features, such as filters that block security scanners.

The most significant implication of PhaaS is the fundamental shift it introduces to the economics of phishing. With technical proficiency no longer a prerequisite, the barriers to launching a phishing attack are reduced to motivation and a modest initial investment.

For security teams, this development represents a considerable increase in phishing activity, further amplified by the convergence of PhaaS platforms with AI capabilities.

Adversary-in-the-Middle and Phishing Emails

Adversary-in-the-Middle (AiTM) is a technique in which cybercriminals position a malicious proxy infrastructure between the target and the legitimate service the target is attempting to reach. It is commonly deployed within email phishing campaigns to bypass traditional multi-factor authentication (MFA) controls and capture valid session credentials. The attack typically proceeds through the following sequence:

- The attacker delivers a phishing email containing a malicious link, using a recognized brand as the lure

- The recipient clicks the link and is directed to the attacker's proxy, which simultaneously establishes a connection to the legitimate website

- The legitimate website is rendered for the employee through the proxy, bypassing both technical and human verification

- The employee submits credentials, including the MFA token, which the proxy forwards in real time to the legitimate website

- The legitimate website validates the credentials and MFA token, generating a post-authentication session cookie that is captured by the proxy

With a valid session cookie, the cybercriminal can maintain access for the duration of the cookie's validity. The defining danger of AiTM lies in its operational approach: rather than harvesting credentials for offline use, it proxies a live authentication session to capture the authenticated result directly. This grants the attacker immediate and complete access to the compromised account, with the potential for substantial damage.

Supply Chain Phishing Emails

Supply chain phishing exploits the established trust relationships between an organization and its third-party partners, including vendors, suppliers, and other external stakeholders. Rather than targeting the organization directly, cybercriminals compromise or spoof a third-party account and leverage that trusted identity to facilitate the attack.

This approach is particularly dangerous because the communication originates from a recognized and expected external source, yet lacks the degree of familiarity that would enable employees to identify anomalies in tone, language, or behavior. The combination of external trust and limited interpersonal familiarity creates a significant detection gap that cybercriminals can exploit.

How to Spot Phishing Emails: The 10 Red Flags

Understanding how to spot phishing emails has become increasingly difficult as cybercriminals continuously refine their tactics and as AI enhances the sophistication and linguistic quality of malicious communications, rendering many traditional detection methods less reliable. The FBI provides guidance on protective measures against phishing.

As outlined in the preceding section, cybercriminals operating in corporate environments are increasingly focused on targeted email phishing campaigns, a shift that has substantially diminished the reliability of conventional phishing indicators. This section examines ten traditional phishing red flags and provides contextual analysis of how each manifests in the modern corporate threat landscape.

The objective is to equip security teams with the knowledge necessary to extend this awareness across the broader organization, fostering a security-conscious culture grounded in informed vigilance.

Key takeaway: Employees are not expected to monitor all ten indicators simultaneously. The objective is to develop the capacity to identify red flags intuitively and to apply a systematic verification process from that point forward.

How to Spot Phishing Emails Red Flag #1: The Sender

The sender field represents the most immediately visible indicator of a phishing attempt and is among the first elements employees examine when reviewing an email. Cybercriminals are fully aware of this behavior and devote considerable effort to manipulating the entire sender header.

The display name visible in an inbox is entirely arbitrary and subject to no technical constraints. Cybercriminals can populate this field with any content, making face-value acceptance of the display name an unreliable verification method.

The more meaningful indicator is the sender's email domain, which employees can inspect with a single click. Security teams should not assume this practice is universal, as it is not an instinctive habit for all personnel.

Cybercriminals refine this technique by constructing spoofed domains that closely resemble legitimate ones, substituting visually similar characters such as a lowercase "l" for an uppercase "I," or "rn" to approximate "m." Spoofed domains of this nature present a heightened risk in mobile environments, where reduced screen size makes character-level inspection more difficult.
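
The character substitutions described above can be checked mechanically. The following is a minimal sketch, not a complete homoglyph detector: the `TRUSTED_DOMAINS` allow-list and the small `CONFUSABLES` map are hypothetical values chosen for illustration, and a real deployment would need a far broader confusable table. The sketch parses the raw From header, ignores the display name, and folds visually similar characters so that a lookalike domain collapses onto the trusted domain it imitates.

```python
from email.utils import parseaddr

# Hypothetical allow-list of domains the organization trusts (assumption).
TRUSTED_DOMAINS = {"example.com", "examplemail.com"}

# Small illustrative map of visually confusable sequences (assumption).
# Multi-character sequences are listed first so "rn" is folded before
# any single-character rule is applied.
CONFUSABLES = [("rn", "m"), ("vv", "w"), ("0", "o"), ("1", "l"), ("i", "l")]


def skeleton(domain):
    """Fold confusable characters so lookalike domains share one form."""
    folded = domain.lower()
    for lookalike, canonical in CONFUSABLES:
        folded = folded.replace(lookalike, canonical)
    return folded


def classify_sender(from_header):
    """Return 'trusted', 'lookalike', or 'unknown' for a raw From header."""
    # The display name is arbitrary, so only the address is evaluated.
    _display_name, address = parseaddr(from_header)
    domain = address.rpartition("@")[2].lower()
    if domain in TRUSTED_DOMAINS:
        return "trusted"
    trusted_skeletons = {skeleton(d) for d in TRUSTED_DOMAINS}
    if domain and skeleton(domain) in trusted_skeletons:
        return "lookalike"
    return "unknown"
```

Under these assumptions, a header such as `"IT Support" <helpdesk@exampIe.com>` (with an uppercase "I" in place of the "l") is classified as a lookalike, regardless of what the display name claims.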

Key takeaway: Verifying the sender's email address should become a standard and instinctive response to any communication that raises suspicion.

How to Spot Phishing Emails Red Flag #2: The Greeting

Generic greetings have historically been a reliable indicator of phishing emails, with broad campaigns commonly employing impersonal salutations such as "Dear User" in place of the recipient's name. This pattern was widely incorporated into security awareness training and served as an effective detection signal for a considerable period.

However, the increasing prevalence of personalized and targeted attacks has diminished this indicator's reliability. AI-generated phishing has further accelerated this shift by fundamentally altering the economics of personalization.

Crafting individualized emails at scale previously required significant time and resources; AI systems can now process lists of thousands of recipients and generate compelling, grammatically precise, contextually relevant, and fully personalized communications at minimal cost.

For security teams, this evolution necessitates an additional layer of guidance. The generic greeting remains a useful indicator and should continue to feature in awareness programs. Equally important, however, is communicating clearly to employees that a personalized greeting does not constitute evidence of legitimacy.

Key takeaway: Generic greetings retain value as a detection signal across a meaningful portion of the phishing landscape, as bulk campaigns remain prevalent. However, their relevance is reduced in corporate environments and continues to decline as AI-generated phishing becomes more widespread.

How to Spot Phishing Emails Red Flag #3: The Signature

Email signatures represent an additional email phishing indicator, as legitimate organizational communications typically adhere to established signature conventions. For employees accustomed to seeing colleague signatures on a daily basis, deviations from expected formats may be more readily identifiable. Common signature-related indicators include:

- Incomplete signatures: Corporate signatures typically include the sender's name along with contact details, such as an email address and phone number. Missing elements may indicate a phishing attempt

- Inconsistent signatures: Discrepancies between information presented in the signature and other elements of the email, such as the sender's name or email address, may signal a fraudulent communication

- Incorrect contact details: Signatures may contain inaccurate contact information, including incorrect area codes or erroneous office addresses

- Broken formatting: Images embedded in HTML signatures may fail to render or display incompletely, producing minor but detectable visual inconsistencies

- Absent legal disclaimers: Organizations frequently append standardized legal footers to outbound communications, which cybercriminals may find difficult to replicate accurately

A significant complication for this indicator is that inconsistencies in email signatures are common even in legitimate internal communications, as standards frequently vary across teams within the same organization.

Sales personnel typically maintain polished, detailed signatures given their external-facing roles, while IT teams often use minimal or no signatures. Executives, who are frequent targets of impersonation, commonly send communications from mobile devices, with signatures reduced to a device identifier.

This reality establishes a relatively low threshold for cybercriminals: signatures need not be accurate, only sufficiently plausible to avoid triggering suspicion. AI further reduces the effort required to produce convincing signatures by enabling the rapid generation of contextually accurate content from available reference material.

Attack techniques such as thread hijacking, BEC, and clone phishing compound this challenge further, as these methods can render signature-based detection largely ineffective.

How to Spot Phishing Emails Red Flag #4: The Writing

Poor grammar and writing quality are perhaps the most widely recognized phishing email red flags, requiring no technical expertise to detect and prompting an immediate, intuitive response that something is wrong.

Many phishing emails have historically been authored by individuals for whom English is not a primary language, compounded by the need to produce large volumes of content rapidly, resulting in consistently low writing quality.

However, AI-generated phishing emails have substantially neutralized this indicator. AI-produced emails are grammatically precise, fluent, and adaptable to professional contexts. In targeted attacks such as email spear phishing, cybercriminals can match writing style to a specific professional role and can replicate an individual's writing style and linguistic patterns.

These advancements do not, however, render writing quality an entirely redundant signal. AI-generated content carries recognizable patterns, and employees can submit suspicious communications to AI detection tools as an additional verification step. This approach has limitations, as a growing volume of legitimate organizational communication is AI-assisted, potentially producing false positives.

The more meaningful gap in AI-generated phishing lies not in grammar but in context. AI systems are prone to producing subtle inconsistencies in content, such as references to internal processes that differ from those actually used by the organization.

Additionally, AI-generated communications tend toward over-formalization, which becomes particularly conspicuous in the context of urgent requests. Internal communications typically adopt a less formal register, particularly when immediate action is required. An unusually formal, linguistically polished email paired with an urgent request now functions as a red flag in its own right.

Key takeaway: Writing assessment extends well beyond grammar. Employees should be trained to identify content inconsistencies, recognize indicators of AI generation, and evaluate whether the nature and tone of the request align with established organizational norms.

How to Spot Phishing Emails Red Flag #5: The Links

Links are a persistent and relatively objective indicator of email phishing. Unlike many of the signals examined above, a link either corresponds to a legitimate organizational domain or it does not. Hovering over a link prior to clicking to verify the destination URL remains a sound verification practice. Employees should be trained to identify the following indicators:

- Lookalike domains: Characters are substituted or inserted to pass a superficial visual inspection

- Subdomain abuse: The positioning of domain and subdomain elements is manipulated to create the appearance of legitimacy. Domain structure should be read from right to left to identify the authoritative domain

- URL shorteners: Link shortening services can obscure the true destination entirely

- Protocol anomalies: The absence of HTTPS or other protocol irregularities may indicate a fraudulent destination

- Unusual parameters: Excessively long URL strings warrant suspicion, as they may be used to facilitate credential harvesting
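
Several of the indicators listed above lend themselves to automated checks. The sketch below is illustrative only: `TRUSTED_DOMAINS`, `SHORTENER_HOSTS`, and `MAX_QUERY_LENGTH` are hypothetical values, and the right-to-left domain reading is approximated by taking the last two labels of the hostname. A production check would consult the Public Suffix List so that suffixes such as "co.uk" are handled correctly.

```python
from urllib.parse import urlsplit

# Hypothetical values chosen for illustration only (assumptions).
TRUSTED_DOMAINS = {"example.com"}
SHORTENER_HOSTS = {"bit.ly", "tinyurl.com", "t.co"}
MAX_QUERY_LENGTH = 200


def registrable_domain(host):
    """Naive right-to-left reading: keep only the last two labels."""
    labels = host.lower().rstrip(".").split(".")
    return ".".join(labels[-2:])


def link_red_flags(url):
    """Return a list of indicator names raised by a single URL."""
    parts = urlsplit(url)
    host = parts.hostname or ""
    flags = []
    if parts.scheme != "https":
        flags.append("protocol_anomaly")
    if host in SHORTENER_HOSTS:
        flags.append("url_shortener")
    # A trusted name appears in the host, but the authoritative
    # (rightmost) domain belongs to someone else.
    base = registrable_domain(host)
    if base not in TRUSTED_DOMAINS and any(t in host for t in TRUSTED_DOMAINS):
        flags.append("subdomain_abuse")
    if len(parts.query) > MAX_QUERY_LENGTH:
        flags.append("unusual_parameters")
    return flags
```

For instance, `http://example.com.login-check.net/reset` raises both the protocol and subdomain flags under these assumptions, because the authoritative domain is `login-check.net` rather than `example.com`. An empty result does not establish that a link is safe; it only means none of these particular heuristics fired.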

AI-assisted phishing compounds this challenge by enabling cybercriminals to build the infrastructure needed to create more convincing links more efficiently. Processes such as domain registration, SSL certificate acquisition, and website cloning are considerably more accessible with AI assistance.

AiTM attacks present an additional complication, as this technique can bypass link inspection entirely.

Key takeaway: Observe who initiated the interaction. Links embedded in unsolicited requests for sensitive actions should be treated with a high degree of suspicion. A reliable guideline for employees is to respond to any suspicious link by navigating directly to the relevant service rather than following the provided URL.

How to Spot Phishing Emails Red Flag #6: The Attachments

Attachments represent persistent high-risk artifacts and a reliable email phishing indicator for security teams. While cybercriminals continue to refine their approach, attachments share several objective characteristics with malicious links that can serve as detection signals.

According to Vipre's 2025 Q4 Email Threat Trends Report, the most frequently observed phishing attachment formats are .pdf, .jpg, .png, .docx, .eml, .gif, .xlsx, .svg, .pptx, and .html. Additional attachment-related indicators include:

- Macro-enabled office documents: While macro execution is disabled by default, organizations continue to use these documents in legitimate workflows, and employees may be conditioned to enable macros upon request

- Password-protected archives: Password protection creates a false impression of legitimacy and security while simultaneously preventing automated security scanning of the attachment's contents

- Double extensions: File names such as "file.pdf.exe" are designed to obscure the true file type and warrant immediate suspicion

- Unexpected sensitive attachments: Any unsolicited attachment containing or requesting sensitive information should be treated as a potential threat
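
Two of the indicators above, double extensions and macro-enabled documents, can be derived from the file name alone. The sketch below assumes small, non-exhaustive extension sets (`EXECUTABLE_EXTS`, `MACRO_EXTS`, and `DOCUMENT_EXTS` are illustrative names and values, not an authoritative list) and does not inspect file contents, so it cannot detect password-protected archives or renamed files.

```python
from pathlib import PurePosixPath

# Illustrative, non-exhaustive extension sets (assumptions).
EXECUTABLE_EXTS = {".exe", ".scr", ".js", ".vbs", ".bat", ".cmd"}
MACRO_EXTS = {".docm", ".xlsm", ".pptm"}
DOCUMENT_EXTS = {".pdf", ".docx", ".xlsx", ".pptx", ".jpg", ".png"}


def attachment_red_flags(filename):
    """Return indicator names based solely on the attachment's file name."""
    suffixes = [s.lower() for s in PurePosixPath(filename).suffixes]
    flags = []
    if not suffixes:
        return flags
    final = suffixes[-1]
    if final in MACRO_EXTS:
        flags.append("macro_enabled_document")
    # A document-style extension followed by an executable one,
    # as in "file.pdf.exe", hides the true file type.
    if (
        len(suffixes) >= 2
        and final in EXECUTABLE_EXTS
        and suffixes[-2] in DOCUMENT_EXTS
    ):
        flags.append("double_extension")
    return flags
```

A name such as `Invoice_2026.v2.pdf` raises no flag here, since its final extension is not executable; only the last extension determines how the file is actually handled.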

A significant challenge for security teams is that document-based workflows are integral to most organizational operations, and the ongoing shift toward cloud-based environments has increased reliance on both attachments and link-based documents.

Effective employee guidance in this area is primarily contextual. Employees should be trained to identify attachment-to-action mismatches, where the content of an attachment does not align with the accompanying request.

An invoice document that directs the recipient to an identity verification page before rendering its contents represents a clear example of such a mismatch. Solicitation context is equally relevant; employees should consider whether the attachment was generated through an established and expected workflow.

Attachments and links share an important limitation as detection signals: many phishing techniques can bypass both technical security controls and human verification, and a considerable proportion of attacks employ neither links nor attachments.

How to Spot Phishing Emails Red Flag #7: The Request

The request in a phishing email is its most consequential element and the most actionable red flag for employees. Security awareness efforts focused on request evaluation offer defenders a significant advantage, as certain categories of requests should reliably trigger verification procedures:

- Financial requests: Any financial request should automatically trigger additional verification, whether it is a standard component of established workflows or part of an ongoing conversation. The growing prevalence of BEC and thread hijacking attacks makes this vigilance particularly important

- Process bypass requests: Sensitive organizational actions are typically governed by established procedures. Any request that seeks to circumvent standard processes should be treated with heightened suspicion

- Credential requests: Emails directing recipients to pages that solicit login credentials, passwords, or similar authentication information represent a clear phishing indicator

- Attachment interaction requests: Requests to open or interact with attachments should trigger a verification response, even when consistent with an expected workflow

- Sensitive data requests: Any communication requesting the direct transmission of sensitive information via email should be treated as a potential threat

The fundamental challenge is that cybercriminals craft these requests around scenarios that routinely arise in organizational settings. Financial requests, vendor changes, updated payment details, and urgent approvals are all legitimate occurrences, making it considerably more difficult to distinguish a fraudulent request from a genuine one. This difficulty is compounded by the contextual sophistication of modern phishing campaigns.

An effective response to this challenge lies in combining security awareness with clearly defined procedural controls. Certain verification requirements should be treated as non-negotiable, regardless of the apparent urgency of the request. Financial transactions exceeding a defined threshold should always be subject to independent verification through an established channel.
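
A non-negotiable rule of this kind can be expressed as a simple policy check. The following is a sketch under stated assumptions: the threshold value, the category names, and the `Request` structure are all hypothetical and would need to reflect the organization's own policy. The point it illustrates is that urgency is recorded but never consulted, so it cannot relax the rule.

```python
from dataclasses import dataclass

# Hypothetical policy values (assumptions, not recommendations).
VERIFICATION_THRESHOLD = 10_000  # in the organization's currency units
ALWAYS_VERIFY = {
    "payment_detail_change",
    "credential_request",
    "sensitive_data_request",
    "process_bypass",
}


@dataclass(frozen=True)
class Request:
    category: str
    amount: float = 0.0
    marked_urgent: bool = False


def requires_out_of_band_verification(request):
    """Decide whether a request must be verified via an established channel.

    The marked_urgent field is deliberately ignored: apparent urgency
    never exempts a request from verification.
    """
    if request.category in ALWAYS_VERIFY:
        return True
    if request.category == "financial" and request.amount > VERIFICATION_THRESHOLD:
        return True
    return False
```

With these values, an "urgent" financial request for 25,000 still requires independent verification, while a routine request below the threshold follows the standard workflow.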

Contextual evaluation represents an equally important defensive layer. Employees should assess whether a given request aligns with established workflow patterns and whether the nature of the interaction between the parties involved warrants behavioral scrutiny.

Key takeaway: Phishing emails invariably involve a request. Employees should be trained to instinctively evaluate whether any request aligns with known processes and controls before taking action.

How to Spot Phishing Emails Red Flag #8: The Processes

Phishing emails are frequently accompanied by indicators of process violations, as established organizational procedures exist precisely to prevent such attacks from succeeding. Cybercriminals actively seek to disrupt or circumvent these controls, and the following indicators are commonly observed across attack types:

- Channel violations: A request arrives via a channel that differs from the one through which such requests are normally communicated

- Authorization bypasses: Requests that seek to circumvent established approval steps or verification requirements

- Timing anomalies: Requests that fall outside the organization's standard operational calendar, particularly financial requests, which typically adhere to defined timelines

- Scope violations: Requests that exceed the normal parameters of what an employee would be expected to ask for or act upon in the course of their responsibilities

- Identity-process mismatches: The appropriate individual initiating a request through an incorrect channel, or an inappropriate individual initiating a request through the correct channel

In large organizational environments, the procedural complexity intended to strengthen security may introduce additional vulnerabilities. Organizations operating across multiple subsidiaries or regional branches frequently maintain distinct processes for each entity, creating opportunities for cross-organizational email phishing campaigns.

The simultaneous use of informal communication channels, primarily messaging platforms, alongside formal channels such as email further blurs the boundaries of what constitutes the appropriate channel for a given request, creating additional ambiguity that cybercriminals can exploit.

Shadow processes pose an additional challenge for security teams. These are informal workarounds developed by employees to bypass procedures perceived as too complex for routine operations. Shadow processes are particularly attractive targets during the reconnaissance phase, as cybercriminals who identify them can craft requests that mimic employee behavior rather than official protocol, eliminating the need to engineer an overt process violation.

Third-party and partner relationships introduce an additional external attack vector. Organizations that maintain external relationships with entities operating under different procedural frameworks expand the opportunity surface available to cybercriminals. A case involving an Irish state agency that sustained nearly $6 million in losses due to a phishing attack originating from a third party illustrates this risk.

For security teams, the primary objective is to establish rigorous, clearly documented, and consistently enforced procedural controls and to systematically identify and eliminate shadow processes across the organization.

How to Spot Phishing Emails Red Flag #9: The Timing

Timing operates as both a defensive signal and an offensive instrument in phishing attacks, as the Troy Hunt case illustrates. Even highly knowledgeable cybersecurity professionals remain vulnerable when targeted under adverse conditions, underscoring the role circumstances play in determining an attack's success.

In a corporate context, standard business hours provide a degree of natural protection, as sensitive requests arriving outside normal operating windows can function as an immediate red flag. However, cybercriminals can exploit this same dynamic to apply additional pressure within business hours.
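
The business-hours signal can be approximated from a message's Date header. This is a minimal sketch with several assumptions: the office operates at a fixed UTC-5 offset with no daylight saving handling, business hours run from 09:00 to 18:00 on weekdays, and the Date header carries an explicit UTC offset. Because the Date header is set by the sender, a gateway receipt timestamp would be a more trustworthy input in practice.

```python
from datetime import timedelta, timezone
from email.utils import parsedate_to_datetime

# Hypothetical office settings (assumptions).
OFFICE_TZ = timezone(timedelta(hours=-5))  # fixed offset, no DST handling
BUSINESS_START_HOUR = 9
BUSINESS_END_HOUR = 18
WEEKEND_DAYS = {5, 6}  # Saturday and Sunday per datetime.weekday()


def outside_business_hours(date_header):
    """Return True if the message was sent outside office business hours."""
    sent_at = parsedate_to_datetime(date_header)
    local = sent_at.astimezone(OFFICE_TZ)
    if local.weekday() in WEEKEND_DAYS:
        return True
    return not (BUSINESS_START_HOUR <= local.hour < BUSINESS_END_HOUR)
```

A result of `True` is only a prompt for additional scrutiny of sensitive requests, and a result of `False` offers no assurance, since attackers can deliberately time messages to fall within business hours.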

Finance teams operating under well-defined cyclical constraints, such as period-end closing procedures, are particularly attractive targets, as the combination of high transaction volume and clear time pressure creates conditions that reduce scrutiny.

Cybercriminals also leverage publicly observable events to inform attack timing, as demonstrated by the toy manufacturer case involving an executive transition. Time zone complexity introduces an additional variable: organizations operating across multiple time zones, including those within a single country, may experience verification and response gaps that cybercriminals can exploit.

Reconnaissance remains the most significant timing-related factor. Through prior intelligence gathering, cybercriminals can construct an accurate picture of an organization's operational calendar and align attacks with moments of maximum plausibility. A 2024 case involving a commodity firm illustrates this capability: a fraudulent payment request was timed to coincide precisely with the moment such a payment would ordinarily be expected.

Timing is a particularly complex indicator, as it can serve both defensive and offensive purposes. The most successful phishing attacks are those in which the timing appears entirely natural. Security teams should therefore prepare employees to treat unexpected timing as a red flag, while continuing to verify sensitive requests even when their timing appears perfectly aligned with organizational expectations.

How to Spot Phishing Emails Red Flag #10: The Triggers

Psychological triggers are mechanisms deployed by cybercriminals to circumvent the judgment that would otherwise prevent a successful attack. Every email phishing campaign incorporates some form of trigger, and the ability to recognize these mechanisms represents one of the most reliable defensive capabilities employees can develop.

Even well-trained, experienced, and knowledgeable professionals remain susceptible to these techniques, not because of ignorance or inattention, but because cybercriminals deploy precisely calibrated triggers at moments of maximum vulnerability.

In corporate environments, triggers are particularly effective because they can be tailored to an employee's specific role. Cybercriminals exploit the fear of professional consequences, whether stemming from an error or perceived non-compliance, to exert pressure that compels action.

Whether that pressure reflects a genuine threat is immaterial; the employee's perception of risk is sufficient to drive the desired response. Even when no explicit threat is made, the fear of appearing obstructive, disrespectful, or incompetent can be just as effective a trigger.

The most commonly deployed psychological triggers include:

- Fear of authority: Cybercriminals frequently exploit hierarchical dynamics by impersonating senior executives and applying pressure on employees to comply without question or verification

- Urgency: Time pressure is introduced to suggest that inaction will lead to adverse consequences, thereby compelling employees to bypass standard verification procedures. This trigger is particularly effective in corporate environments where urgency is a routine operational condition

- Loss aversion: This trigger exploits the cognitive bias whereby potential losses are perceived as more significant than equivalent gains. It is commonly applied through notifications of compromised accounts

- Social proof: Cybercriminals suggest that other employees or stakeholders have already validated or complied with the request, reducing the recipient's perceived need for independent verification. This mechanism underpins attacks such as thread hijacking

- Familiarity: Impersonation is effective because familiarity is instinctively associated with legitimacy. This trigger is further reinforced when the attacker has obtained specific intelligence about the relationship between the parties involved, an increasingly achievable outcome given the capabilities of AI-assisted reconnaissance

- Additional triggers: Mechanisms such as curiosity, reciprocity, and scarcity are also applicable in corporate contexts, though they are more frequently deployed in consumer-facing attacks

Key takeaway: Psychological triggers are rarely deployed in isolation. Cybercriminals combine multiple triggers to craft a more compelling, action-oriented narrative. Employees should be trained to identify these mechanisms, interpret their presence as a potential signal of malicious intent, and treat them as a reason to slow down and verify rather than to act immediately.

How to Conduct Phishing Email Security Training for Employees

Email security training for employees extends well beyond exercises on how to spot phishing emails. A comprehensive email phishing awareness training program encompasses measuring outcomes, assessing organizational maturity, developing continuously updated content that reflects the current threat landscape, and systematically evaluating employee engagement.

As the preceding sections demonstrate, phishing attacks present in a wide variety of forms, driven largely by the degree of personalization cybercriminals apply during campaign development. Modern attacks are targeted, operationally sophisticated, and, with the assistance of AI, increasingly well-crafted and precisely timed.

Established phishing indicators are also less dependable than they once were. No single red flag is entirely reliable, and cybercriminals continuously identify and exploit the limitations of each. The following section outlines a framework for developing an email phishing awareness training program that addresses both current and emerging threats, informed by the strategies and techniques employed by cybercriminals.

Establish a Continuous Phishing Email Training Program

Without ongoing revision, training effectiveness can deteriorate rapidly as new threats emerge and existing content becomes obsolete. The most effective approach is a continuous awareness training program that evolves in response to the changing threat landscape.

A practical framework for structuring such a program is a four-stage cycle, comparable in structure to a sprint or project development methodology:

- Measure: Quantifying employee performance in identifying email phishing attempts should be a foundational element of the program from inception. Where baseline data is unavailable, an initial email phishing test should be conducted to establish a starting point. Security teams should maintain a defined set of key performance indicators to be tracked across every training cycle

- Plan: Drawing on available data, training content should be designed to address the organization's specific needs at both the general and individual levels. This stage should identify employees who require additional attention and the areas where performance gaps are most pronounced. It also provides an opportunity to monitor emerging trends and incorporate recent incidents to maintain relevance and engagement

- Teach: Content should be delivered in an engaging format that aligns with employees' daily responsibilities. Extended video sessions or lengthy training modules are likely to result in reduced attention and retention

- Test: An email phishing test is essential not only for reinforcing and consolidating learning, but also for generating the performance data that informs subsequent training cycles

Training frequency should be calibrated to the organization's maturity level. As a baseline, high-risk personnel should receive monthly training, while general employees should receive quarterly training.

High-risk categories include executives, finance, HR, and IT personnel. In addition to increased training frequency, these groups should receive adaptive content tailored to their specific roles and the phishing techniques most relevant to their daily responsibilities.
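The baseline cadence described above can be sketched as a small scheduling helper. This is a minimal illustration, not a prescribed implementation: the role labels, the function names, and the use of 30 and 90 days as stand-ins for "monthly" and "quarterly" are all assumptions.

```python
from datetime import date, timedelta

# Hypothetical role labels; the set mirrors the high-risk categories named above.
HIGH_RISK_ROLES = {"executive", "finance", "hr", "it"}


def training_interval_days(role: str) -> int:
    """Return the baseline gap between sessions.

    Assumption: 30 days approximates monthly training for high-risk roles,
    and 90 days approximates quarterly training for everyone else.
    """
    return 30 if role.lower() in HIGH_RISK_ROLES else 90


def next_training_date(role: str, last_completed: date) -> date:
    """Compute when the next session is due for an employee in this role."""
    return last_completed + timedelta(days=training_interval_days(role))
```

An organization with a different maturity level would adjust the two intervals rather than the structure of the helper.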

In practice, the teaching and testing stages are not conducted in a strict sequence; security teams should integrate email phishing tests throughout the instructional process to maintain the program's dynamism.

Immediate feedback following an email phishing test represents a particularly high-value intervention, as the moment immediately following an interaction, whether the outcome is positive or negative, is among the most effective for learning. Simulation feedback should adhere to the following principles:

- Immediate: Feedback should follow the moment of interaction without delay. There is a direct correlation between learning effectiveness and the temporal proximity of feedback to the triggering event

- Specific: Feedback should clearly identify which indicators were present in the test, what verification steps should have been taken, and what the appropriate response would have been. Generic responses are insufficient and should be avoided

- Brief: Feedback should be concise to respect employees' time and to ensure that attention levels remain high during the learning moment

- Actionable: Feedback should conclude with clear guidance on the actions the employee should take in response to a suspected phishing attempt, whether reporting to the security team, verifying through an alternative channel, or following another defined procedure

Measure the Correct Email Phishing Test Metrics

Measuring the outcomes of email phishing awareness training is essential not only for evaluating individual performance but also for generating organization-wide human risk assessments. Aggregate data enables security teams to direct training resources toward the areas of greatest need, whether specific employees demonstrating consistent underperformance or threat categories for which the organization lacks sufficient defensive capability.

Personalization is an equally important dimension of performance measurement, particularly in the context of risk assessment. Underperformance among high-risk personnel, including executives, finance teams, and other elevated-risk groups, warrants considerably greater attention and intervention than equivalent results among the general employee population.

Longitudinal measurement also serves a critical reporting function. Performance data tracked over time provides security teams with a structured and credible basis for communicating training progress and organizational improvement to leadership.

Key takeaway: Identify the most impactful key performance indicators, extending beyond the most commonly tracked metrics while recognizing that foundational measures remain essential.

Email Phishing Test Metric #1: Completion Rates and Time to Complete

Completion rates measure how many employees complete the full training program and are the most fundamental metric available to security teams. This indicator is essential for compliance purposes and establishes the baseline against which all other performance data is evaluated. Low engagement renders all subsequent metrics less meaningful, as they reflect only a partial picture of organizational readiness.

Time to completion provides an additional layer of insight. Employees who progress through simulations and training modules at an unusually rapid pace are unlikely to retain the material, treating the program as a requirement rather than as a genuine learning exercise.

Where completion rates or time to completion fall below acceptable thresholds, security teams should consider implementing an internal awareness campaign to communicate the importance of active participation to employees.
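As a rough sketch of how these two indicators might be computed, the helper below calculates a completion rate and flags employees who finished far faster than their peers. The function names and the 25%-of-median cutoff are illustrative assumptions, not thresholds taken from this guide.

```python
from statistics import median


def completion_rate(completed: int, enrolled: int) -> float:
    """Share of enrolled employees who finished the full program."""
    return completed / enrolled if enrolled else 0.0


def flag_rushed(durations_min: dict[str, float], ratio: float = 0.25) -> list[str]:
    """Return employees whose completion time is below ratio * median.

    Assumption: 'unusually rapid' is defined relative to the median time;
    the default ratio of 0.25 is a placeholder to be tuned per organization.
    """
    if not durations_min:
        return []
    cutoff = ratio * median(durations_min.values())
    return sorted(name for name, minutes in durations_min.items() if minutes < cutoff)
```

A relative cutoff is used here so that the flag adapts to modules of different lengths; a fixed minimum duration per module would be an equally reasonable choice.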

Email Phishing Test Metric #2: Click Rate

Click rate measures the proportion of simulated phishing emails that elicit recipient interaction and is one of the most direct indicators of training progress. The granularity with which this metric is reported directly determines its analytical value; segmentation by role, team, risk classification, and individual employee provides security teams with the most actionable insight.

A common question in this context concerns what constitutes an acceptable click rate. The answer is context-dependent and is largely determined by the organization's risk tolerance. A government security agency, for example, may operate with near-zero tolerance, while most organizations, including those with high-risk personnel, can benchmark strong performance at a click rate of approximately 5%, with a long-term target of below 2% as best-in-class.

A 0% click rate, while seemingly desirable, is neither a realistic target nor necessarily beneficial. Pursuing it may generate frustration or a perception of failure, and may actually be counterproductive by eliminating valuable learning opportunities.

Security teams should expect and accept that employees will fail an email phishing test; as noted above, the moment of failure represents the highest-value learning opportunity available, and security teams should ensure that opportunity is not lost.

Based on observed training outcomes, organizations typically see a click rate of approximately 25%-30% during initial simulations, which declines to approximately 5% within the first year of sustained training.

Key takeaway: Click rate should not be evaluated as a static figure. Its primary value lies in tracking the rate of change over time, with a consistent reduction in percentage points serving as the most meaningful indicator of program effectiveness.
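The segmentation and trend tracking described in this section can be sketched as follows. The event format (one `(segment, clicked)` pair per delivered simulated email) and the function names are assumptions chosen for illustration.

```python
from collections import defaultdict


def click_rate_by_segment(events):
    """Compute the click rate per segment (role, team, risk class, ...).

    events: iterable of (segment, clicked) pairs, one per delivered
    simulated email. This input shape is a hypothetical convention.
    """
    sent = defaultdict(int)
    clicked = defaultdict(int)
    for segment, did_click in events:
        sent[segment] += 1
        clicked[segment] += int(did_click)
    return {segment: clicked[segment] / sent[segment] for segment in sent}


def percentage_point_change(previous_rate: float, current_rate: float) -> float:
    """Change between two cycles in percentage points (negative = improvement)."""
    return round((current_rate - previous_rate) * 100, 2)
```

Reporting the change in percentage points rather than as a relative percentage keeps the trend comparable across segments that start from very different baselines.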

Email Phishing Test Metric #3: Repeat Clicker Rate

Repeat clicker rate measures the proportion of employees who interact with multiple simulated phishing emails across different attack types. These individuals warrant heightened attention and, in some cases, individualized intervention.

Consistent simulation failures are a meaningful signal that a specific employee may require a different instructional approach, whether through an alternative delivery method, a different content format, or more targeted one-on-one guidance.

In certain cases, repeated failure may indicate insufficient engagement with the program rather than a knowledge deficit. Where this is suspected, a direct conversation with the employee's manager represents a constructive step toward understanding how to make the program more effective for that individual.
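One possible way to compute this metric is shown below. The sketch assumes a log of failed simulations as `(employee, attack_type)` pairs and treats an employee as a repeat clicker once they have failed at least two distinct attack types; both the input shape and the threshold are assumptions.

```python
from collections import defaultdict


def repeat_clickers(clicks, min_attack_types: int = 2) -> set:
    """Return employees who clicked on at least min_attack_types distinct attack types.

    clicks: iterable of (employee, attack_type) pairs, one per failed
    simulation (hypothetical log format).
    """
    types_by_employee = defaultdict(set)
    for employee, attack_type in clicks:
        types_by_employee[employee].add(attack_type)
    return {
        employee
        for employee, types in types_by_employee.items()
        if len(types) >= min_attack_types
    }


def repeat_clicker_rate(clicks, total_employees: int, min_attack_types: int = 2) -> float:
    """Share of all tested employees who qualify as repeat clickers."""
    if total_employees == 0:
        return 0.0
    return len(repeat_clickers(clicks, min_attack_types)) / total_employees
```

Counting distinct attack types, rather than raw clicks, separates an employee who fell for the same lure twice from one who is vulnerable across several techniques.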

Email Phishing Test Metric #4: Phishing Email Report Rate

The phishing email report rate measures the proportion of simulated phishing emails that employees actively flag through the organization's designated reporting mechanism. This metric is particularly significant because reporting represents the primary behavioral outcome that a well-designed training program seeks to reinforce. An employee who identifies and reports a phishing attempt delivers greater defensive value than one who simply does not click.

The relationship between report rate and click rate is a particularly meaningful indicator of organizational security culture maturity. The optimal outcome is a low click rate combined with a high report rate, reflecting a workforce that not only avoids interacting with phishing attempts but consistently escalates them to the security team upon identification.
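The relationship between the two rates can be made concrete with a short sketch. The `SimulationResult` record and the reports-per-click ratio are illustrative constructs, not metrics defined by this guide.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SimulationResult:
    """Aggregate counts for one simulated campaign (hypothetical record)."""
    delivered: int
    clicked: int
    reported: int


def rates(result: SimulationResult) -> tuple[float, float]:
    """Return (click_rate, report_rate) as fractions of delivered emails."""
    if result.delivered == 0:
        return (0.0, 0.0)
    return (result.clicked / result.delivered, result.reported / result.delivered)


def report_to_click_ratio(result: SimulationResult) -> Optional[float]:
    """Reports per click; None when nobody clicked, since the ratio is undefined."""
    return result.reported / result.clicked if result.clicked else None
```

Tracked over successive cycles, a rising ratio suggests the workforce is moving toward the low-click, high-report outcome described above.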

Email Phishing Test Metric #5: Time to Report Phishing Email

Time to report measures the interval from an employee's receipt of a simulated phishing email to its escalation to the security team. This metric carries considerable weight, as the defensive value of a report diminishes significantly over time. The ideal outcome is at least one employee reporting a suspicious email within minutes of its arrival.
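A minimal sketch of this measurement follows, assuming the delivery time of the simulated email and the timestamps of each report are available. The function name and the choice to summarize with the first and median delays are assumptions.

```python
from datetime import datetime
from statistics import median


def time_to_report_minutes(delivered_at: datetime, reported_at: list):
    """Summarize reporting delays in minutes for one simulated email.

    Returns a dict with the first (fastest) and median delay, or None
    when no reports were received. Reports timestamped before delivery
    are ignored as bad data (an assumption about input hygiene).
    """
    delays = sorted(
        (report - delivered_at).total_seconds() / 60
        for report in reported_at
        if report >= delivered_at
    )
    if not delays:
        return None
    return {"first": delays[0], "median": median(delays)}
```

The first delay corresponds to the early-warning value of the fastest reporter, while the median gives a sense of how quickly the wider workforce escalates.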

The practical implications of rapid reporting become clear when applied to a live phishing attack. A prompt report enables the security team to issue an organization-wide awareness alert, notifying the broader workforce or the most relevant teams that an active phishing campaign is underway. In this way, the most security-aware employees become an active early warning system for the broader workforce.

Reports submitted after a longer delay retain forensic value. Security teams should systematically review every reported email, as each submission provides intelligence relevant to the organization's threat exposure and can inform subsequent training priorities.

The accelerating pace of AI-powered attacks adds further urgency to this metric. A complete phishing campaign or ransomware attack can now be executed within hours, making the decision to report a phishing email a potentially decisive factor in preventing a full organizational compromise.

A well-developed reporting culture will inevitably generate false positives. This represents a worthwhile trade-off, and reducing false-positive rates can serve as a secondary objective for subsequent training cycles.

AI is a valuable resource for security teams managing high volumes of reports, facilitating triage by classifying submitted emails by risk level or confidence score and flagging likely false positives, thereby reducing the immediate burden on security personnel.

The performance data generated across these metrics provides the foundation for the planning stage of the training cycle, enabling security teams to develop content that is precisely aligned with the organization's current needs.

Create the Phishing Email Training Content

Security awareness education builds the foundational knowledge employees require to protect themselves and the organization from email phishing attacks.

A significant proportion of successful phishing attacks exploit a fundamental knowledge gap: employees simply do not know that a given technique or scenario exists. Cybercriminals rely on this gap as a primary enabler of their campaigns.

Effective training is structured around three core objectives: awareness, recognition, and action. Both instructional content and simulation exercises contribute to achieving each of these outcomes. In an environment increasingly shaped by AI-generated phishing, training must also ensure that employees remain current with emerging attack trends. Key components of an effective educational framework include:

- Core content: A baseline curriculum should be distributed across the entire organization, covering foundational topics such as phishing identification, the psychological triggers deployed in phishing campaigns, the mechanics of phishing attacks, the consequences of engaging with a malicious email, the reporting process, and the appropriate response when an employee suspects they have interacted with a phishing attempt

- Core content frequency: Foundational content should be completed during onboarding and revisited at least annually. Core concepts evolve slowly, making a longer interval between sessions appropriate. Excessive repetition of foundational material risks disengaging employees