Top tactics in social engineering training

In early 2024, an employee at UK engineering firm Arup joined what appeared to be a routine video call with senior leadership. The faces were familiar, and the voices matched, so a request about authorizing a wire transfer tied to an ongoing business matter didn't seem unusual.

Within a short time, the employee approved transfers totaling $25 million. There was no system breach. No malware. No compromised credentials. But the "executives" on the call were AI-generated deepfakes.

As Arup's Chief Information Officer later explained, this wasn't a traditional cyberattack but a technology-enhanced social engineering incident. Attackers exploited human trust using realistic audio and video, bypassing technical controls entirely. Incidents like this happen far more often than most organizations realize, but they rarely make headlines.

In modern social engineering attacks, people are the target, not systems. Employees aren't acting carelessly; they're responding to believable authority, realistic context, and time pressure. Technical controls may work as designed, but attackers bypass them by exploiting human behavior.

That's why these attacks are often called "preventable," but only in hindsight. Detection now depends less on spotting obvious red flags and more on how people respond under realistic, high-pressure conditions.

This shift is forcing social engineering training to evolve. Let's look at why traditional programs fall short, what behavior-based training looks like today, and how organizations can reduce human risk using AI-based, realistic simulations.

What is social engineering in cybersecurity?

Social engineering is a class of cyberattacks that targets human psychology rather than technical vulnerabilities. Instead of breaking systems, threat actors manipulate employees to gain access to credentials, money, or other confidential data.

Social engineering exploits predictable human behaviors, such as trust in authority, fear of consequences, urgency, curiosity, and a desire to be helpful. This reliance on human vulnerabilities allows attackers to bypass even the strongest technical controls.

While phishing emails remain the most visible form, types of social engineering span multiple channels and techniques.

- Phishing: Emails that try to trick employees into clicking links, sharing passwords, or approving actions. These aren't the "Nigerian prince" scams of years past. Today's messages use real names, job roles, and ongoing work, which is why security awareness training needs AI-based phishing simulations that mirror the emails people actually receive at work.

- Vishing: Voice-based attacks where scammers impersonate executives, IT staff, or vendors over phone calls.

- Smishing: SMS-based phishing that invokes urgency and familiarity, often impersonating delivery services or internal alerts.

- Pretexting: Carefully crafted scenarios where attackers pose as trusted parties to extract sensitive information over time.

- Business email compromise (BEC): Highly targeted attacks focused on finance and operations teams, often resulting in direct financial loss.

What's changing is how believable these attacks are in day-to-day work. Attackers now use AI to write emails that sound like your leadership team, clone executive voices for phone calls, and reference real projects, vendors, or deadlines. In some cases, employees hear what sounds like their CFO asking for an urgent transfer or receive emails that match how their manager actually writes.

The old warning signs no longer hold up. Employees are no longer just spotting "suspicious emails"; they need social engineering training that helps them decide whether to slow down, verify, or comply when a request appears operationally normal.

Detection now depends less on visual cues and more on behavioral judgment—recognizing urgency, process deviations, or attempts to bypass regular checks.

Why social engineering training must evolve

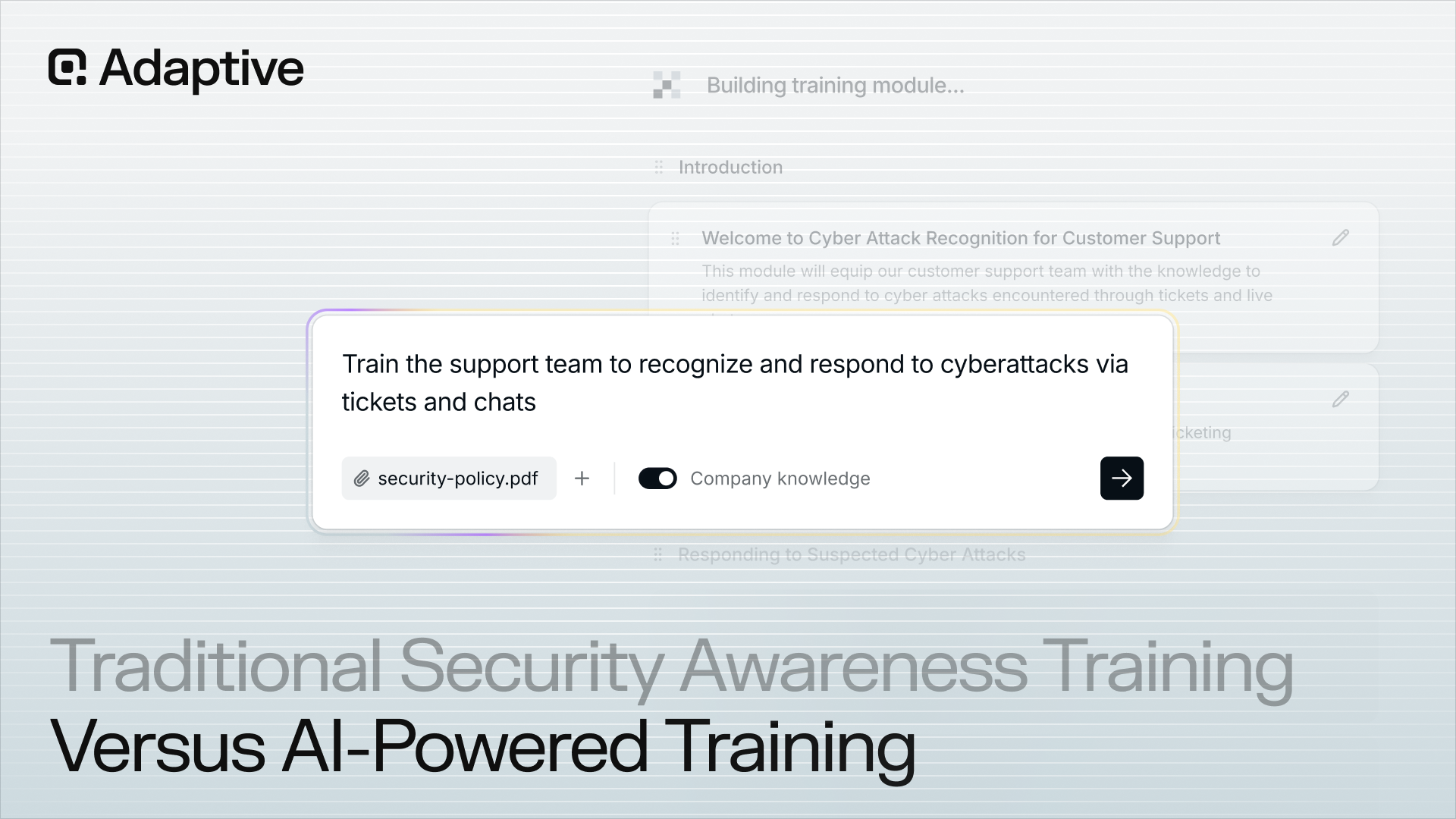

Traditional security awareness training programs rely on static content, annual refreshers, and simple phishing simulations that measure only whether an employee clicked a malicious link. While useful as a baseline, this approach falls short against modern social engineering attacks that are dynamic, personalized, and increasingly AI-driven.

Today's attackers don't just send generic phishing emails. They adapt in real time, switch channels, and leverage realistic voices, faces, and scenarios to create pressure. In this environment, you need to train your team to detect manipulation and remain cautious under realistic conditions.

That's why social engineering training today needs to evolve in three key ways:

- From awareness to behavior: Training should focus on how employees respond in real situations, not just what they know in theory.

- From one-size-fits-all to risk-based: Not every employee faces the same level of social engineering risk. Programs need human risk management and visibility into where that risk actually exists across the organization.

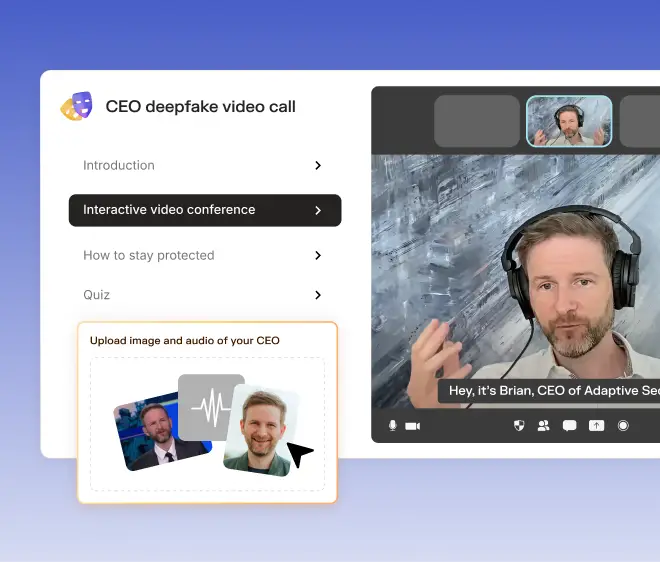

- From static simulations to adaptive scenarios: Real attackers move across channels, often using AI-generated content like voice spoofing or deepfake video. Training that includes realistic, evolving scenarios (such as simulated executive calls or AI-driven impersonation attempts) helps employees build decision-making muscle memory for modern attacks.

As social engineering attacks grow more sophisticated, a new generation of AI-driven security awareness training platforms is emerging to close this gap. These platforms simulate realistic, high-risk scenarios, such as executive impersonation, voice spoofing, or deepfake-style requests, so employees can practice responding in conditions that mirror real attacks.

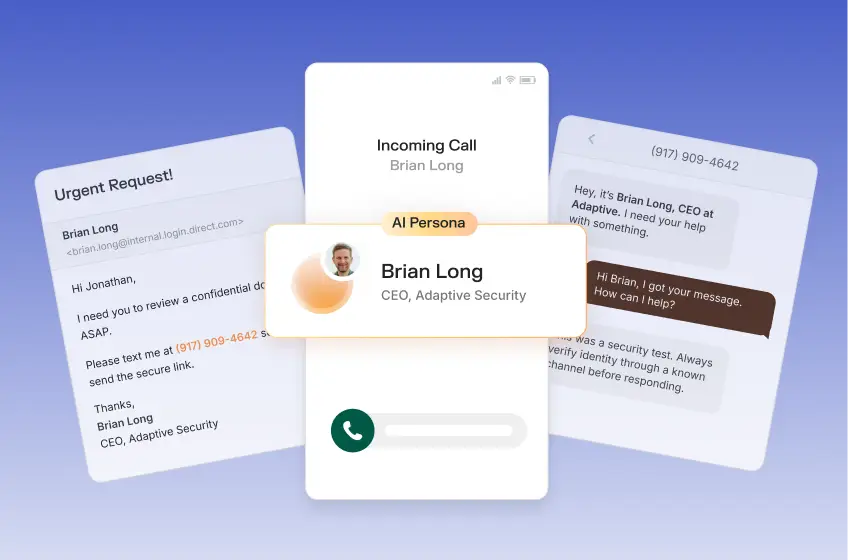

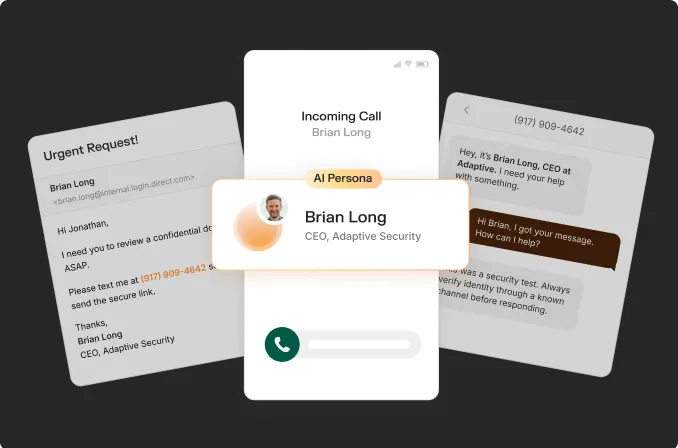

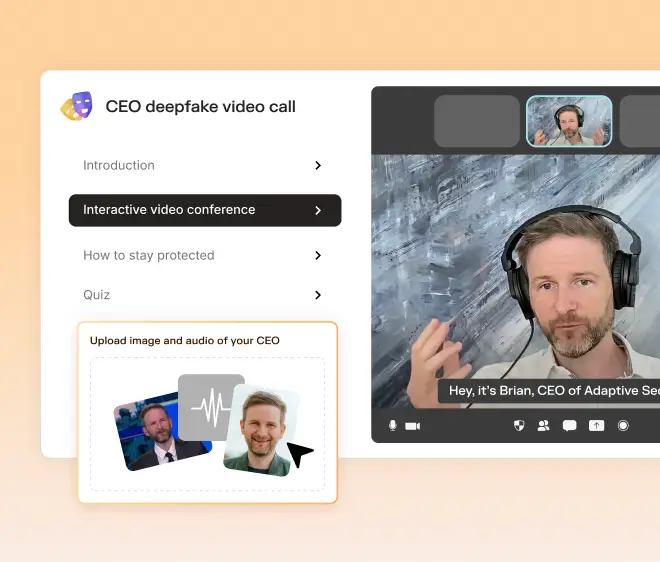

Adaptive Security exposes employees to deepfake-style scenarios in which a familiar face or voice conveys urgency or authority. Security teams can create industry-tailored deepfake simulations that laser-target specific departments, training them to slow down, verify requests, and recognize manipulation signals before real attackers exploit them.

Key components of effective social engineering training

When security leaders evaluate a social engineering training program, they're not looking for more content. They're looking for signals. Does this program change behavior? Does it surface real risk? Does it reflect how attacks actually occur today?

Effective programs tend to share three core components:

Realistic simulations that mirror modern threats

Social engineering training only works when employees see the scenario and think, "This could happen to me at work."

Training that relies on obviously fake phishing emails, with misspelled messages, generic senders, or unrealistic requests, teaches people how to spot training exercises, not how to respond to real attacks.

Today's threats look more like:

- A rushed video call from a "VP" asking for help before a meeting

- A familiar voice requesting an exception to process

- A multi-step interaction that escalates pressure over time—for example, a casual email from a "vendor," followed by a deepfake call to clarify details, and ending with an urgent request to approve payment before a deadline

Data backs this up. The 2024 Verizon Data Breach Investigations Report found that 68% of breaches involved a non-malicious human element, such as an error or social engineering.

This is precisely why security awareness training has to change. The first time your employees handle a fake video call, a cloned executive voice, or a believable vendor follow-up shouldn't be during a real incident.

Newer AI-driven training platforms like Adaptive Security are helping teams by using realistic scenarios that show what modern social engineering actually looks and sounds like, so people learn when to pause and verify, even when the CFO's voice and tone appear too real to be a scam.

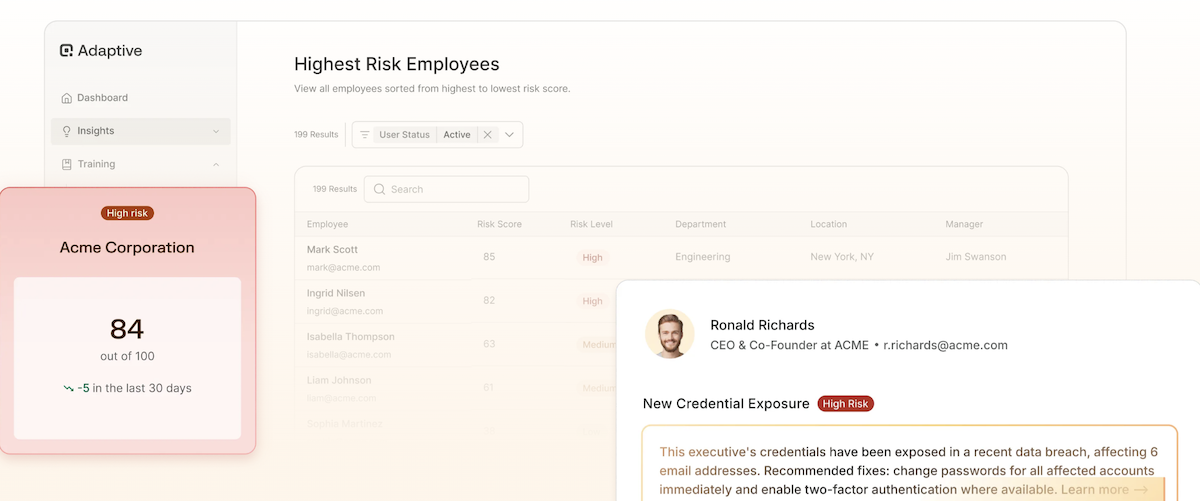

Behavioral risk scoring

If you're responsible for security, the real question isn't "Did someone fail a simulation?" It's "Who is most likely to cause the next incident and why?"

Behavioral risk scoring helps answer that by turning employee behavior and exposure into actionable insights. Instead of treating every mistake the same, it looks at signals like:

- How often someone complies with a request made under urgency or authority

- Whether risky behavior is improving or repeating

- Which roles, teams, or individuals would attackers realistically target

This matters because human risk changes quickly. A new role, leaked credentials, public visibility, or a recent failure can quietly increase someone's exposure, especially for executives and finance teams.
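To make the idea concrete, here is a minimal sketch of how the signals above could be combined into a single score. It is not any vendor's actual scoring model: the `EmployeeSignals` fields, the weights, the 0.0 to 1.0 exposure scale, and the 0 to 100 output range are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class EmployeeSignals:
    """Hypothetical per-employee inputs; every field name is illustrative."""
    simulations_run: int          # simulations this person received
    complied_under_pressure: int  # times they acted on an urgent/authority request unverified
    recent_failures: int          # failures in the most recent window
    prior_failures: int           # failures in the window before that
    role_exposure: float          # 0.0-1.0, how attractive the role is to attackers (assumed scale)
    leaked_credentials: bool      # appears in a known credential leak


def risk_score(s: EmployeeSignals) -> float:
    """Combine behavior and exposure into a 0-100 score. Weights are arbitrary assumptions."""
    # Share of simulations where the person complied under urgency or authority.
    compliance_rate = (
        s.complied_under_pressure / s.simulations_run if s.simulations_run else 0.0
    )
    # Trend: 1.0 if failures are rising, 0.5 if repeating at the same level, else 0.0.
    if s.recent_failures > s.prior_failures:
        trend = 1.0
    elif s.recent_failures == s.prior_failures and s.recent_failures > 0:
        trend = 0.5
    else:
        trend = 0.0
    # Exposure: role attractiveness, bumped when credentials have leaked, capped at 1.0.
    exposure = min(1.0, s.role_exposure + (0.3 if s.leaked_credentials else 0.0))
    return round(100 * (0.5 * compliance_rate + 0.2 * trend + 0.3 * exposure), 1)


# Two made-up profiles: a finance approver and a low-exposure engineer.
finance = EmployeeSignals(10, 4, 3, 1, 0.8, True)
engineer = EmployeeSignals(10, 1, 0, 2, 0.2, False)
print(risk_score(finance), risk_score(engineer))
```

Because the score is recomputed from current inputs, a new role or a fresh credential leak raises it immediately, without waiting for the next training cycle.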

Adaptive Security takes this approach by continuously updating risk scores based on real behavior and exposure, not just training completion. You can see which departments carry the highest risk, whether risk is trending up or down, and where targeted follow-up training will be most effective.

Just-in-time nudges and microlearning

Most social engineering failures don't happen because someone forgot a policy. They happen because the moment felt routine, urgent, or familiar, and no one slowed down. That's why timing matters.

What works is immediate, situational feedback. Short nudges show employees exactly how a real social engineering attempt went wrong and what a safer response would have looked like.

Here are some examples of just-in-time nudges and microlearning you should incorporate in your social engineering program:

- An employee acts on a suspicious message: Teach them immediately why it was risky and what a safer response would've been.

- Someone hesitates but still complies: Send them a brief reminder to reinforce the verification step they almost completed.

- A team repeatedly reacts under urgency: Send them a focused, bite-sized training on slowing down and escalating.
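The three examples above amount to a small set of rules that map an observed outcome to a follow-up. The sketch below shows one way to express them. The `Outcome` values, the nudge names, and the team threshold of three failures are hypothetical and not taken from any particular platform.

```python
from enum import Enum
from typing import List


class Outcome(Enum):
    """Hypothetical outcomes of a single simulation."""
    COMPLIED = "complied"                                # acted without verifying
    HESITATED_THEN_COMPLIED = "hesitated_then_complied"  # paused, then acted anyway
    VERIFIED = "verified"                                # checked through a second channel
    REPORTED = "reported"                                # flagged the request


def pick_nudges(outcome: Outcome, team_urgency_failures: int,
                team_threshold: int = 3) -> List[str]:
    """Return the follow-ups to send right after a simulation ends."""
    nudges = []
    if outcome is Outcome.COMPLIED:
        # Explain why the request was risky and what a safer response looks like.
        nudges.append("explain_risk_and_safer_response")
    elif outcome is Outcome.HESITATED_THEN_COMPLIED:
        # Reinforce the verification step the person almost completed.
        nudges.append("reinforce_verification_step")
    if team_urgency_failures >= team_threshold:
        # The whole team keeps reacting under urgency: assign a short module.
        nudges.append("team_microlearning_slow_down_and_escalate")
    return nudges


print(pick_nudges(Outcome.HESITATED_THEN_COMPLIED, team_urgency_failures=3))
```

Employees who verified or reported receive no corrective nudge in this sketch; a real program might send positive reinforcement instead.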

Instead of asking employees to remember lessons from a yearly module, just-in-time nudges reinforce the exact behavior that needs fixing right when it happens, so the next time a deepfake call or urgent request comes in, pausing and verifying feels natural.

How to evaluate social engineering training providers

When looking for a social engineering training platform, the goal isn't just "better awareness." It's risk reduction. The right question to ask vendors isn't "What features do you have?" but "What problems will this actually help us catch before an incident?"

Here are some caveats and tips to help you distinguish modern platforms from legacy ones that may not be sufficient to defend against sophisticated cyberattacks.

Must-have capabilities

If a provider can't do these well, it's unlikely to hold up against today's threats.

- Multi-channel coverage: Attacks don't arrive neatly in one inbox. A single attempt often starts as an email, escalates to a voicemail "from the CEO," and ends with a follow-up text asking you to act fast. Training needs to cover all of those channels, not just email.

Adaptive Security supports this by running realistic phishing simulations across email, SMS, and voice simultaneously, so employees practice spotting the same multi-channel tactics attackers actually use, not just email-only lures.

- Behavior-focused analytics: You should be able to see who is at risk, why, and whether risk is improving. Click rates alone won't tell you where to intervene.

- Support for AI-driven threats: Providers should offer more than traditional email templates. They need to account for modern tactics such as voice cloning, executive impersonation, and deepfake videos and images.

- Role and exposure awareness: Attackers target roles differently—for example, finance for payments, executives for impersonation, IT for unauthorized access, and new hires for onboarding scams. Training should not treat every employee the same, but adapt by role and exposure.

When platforms get this right, security teams can target training and controls where risk is highest, rather than spreading effort evenly across the organization.

Avoid these red flags

These are common signs that a platform was built for yesterday's threat model.

- Email-only simulations: If the focus is solely on phishing emails, the training won't prepare employees for voice- or video-based attacks.

- One-size-fits-all programs: The same content, cadence, and scoring for everyone usually means no one is getting what they actually need.

- Static content and annual refreshes: Threats evolve constantly. Training that doesn't adapt between cycles leaves a long gap that attackers can exploit.

- Metrics that don't drive action: Static reports rarely tell you what to do next. Look for training that shows you who has exposed credentials, which executives are easy to impersonate, and which employees keep making the same risky decisions, and that automatically steps in with fixes before an attacker does.

How Adaptive Security approaches social engineering training

Instead of running training once a year and hoping it sticks, Adaptive treats security awareness as an ongoing practice.

Employees regularly face realistic scenarios that show where risk is building, how people act under pressure, who skips verification steps, and whose credentials and public data make them easy to impersonate. This helps security teams step in early and address the behavior before a real attack succeeds.

Modern security awareness platforms must train employees the same way attackers test them today, using realistic scenarios, repeated practice, and signals about where risk is building.

Built for modern, AI-enhanced threats

Attackers now use AI to generate convincing phishing emails, clone voices for vishing, and create deepfake videos that impersonate leaders. Traditional security awareness training rarely accounts for these tactics.

Adaptive's platform was designed from the ground up to address these AI-powered attacks. Its training modules include interactive simulations that mirror real multi-channel threats, including email, SMS, voice calls, and even executive deepfakes, so employees don't just read about these attacks, but experience them.

Personalized, risk-aware training

Not all employees face the same risk. Rather than generic "one-size-fits-all" content, Adaptive tailors training to individuals based on their behavior and exposure.

For example, someone with recent credential exposure or high OSINT visibility (such as executives who speak at events, post frequently on LinkedIn, or appear in press coverage) might receive more targeted simulation scenarios than others.

This risk-driven personalization helps teams focus their efforts where they actually reduce human risk rather than just checking compliance boxes.

Continuous tracking and human risk visibility

Adaptive's approach ties behavioral insights, real exposure signals (like credential leaks or executive impersonation risk), and ongoing simulations together so you can see where human risk is right now, not just what happened in last quarter's report.

This continuous visibility lets teams see precisely who is slipping and why—for example, an executive whose credentials have recently been compromised, or a finance employee who keeps approving urgent requests. This allows you to intervene with targeted coaching or simulations instead of retraining everyone blindly.

Success metrics: How to measure training effectiveness

When social engineering training is doing its job, you can point to measurable changes, not just completion certificates. The right metrics show whether risk is decreasing, not just whether people have finished a module.

Here are the core signals to look for:

- Click reduction over time: Don't look at one campaign in isolation. Track whether the same employees make fewer mistakes as scenarios become more realistic, such as progressing from clicking generic phishing emails to correctly handling messages that reference real vendors, executives, or active projects.

- Employee engagement: Check whether employees are actively participating beyond assigned training. This includes reporting suspicious messages independently, pausing before acting on urgent requests, and avoiding the same mistakes across multiple simulations.

- Reduction in high-risk behaviors: Look for fewer repeat patterns that attackers rely on, such as finance teams approving requests without verification, employees bypassing the process for executives, or the same individuals repeatedly failing authority-based scenarios.

- Reporting and compliance readiness: Make sure you can quickly answer basic questions (who is improving, where risk is highest today, and what actions were taken) without manually stitching together reports or guessing during audits.
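As a rough illustration, the first three signals can be computed from a flat log of simulation results. The sketch below assumes a hypothetical `Result` record with one row per employee per campaign. The names and data are made up, and a real platform would export something richer.

```python
from collections import Counter
from typing import Dict, List, NamedTuple


class Result(NamedTuple):
    """One hypothetical row per employee per simulation campaign."""
    campaign: str
    employee: str
    failed: bool    # clicked, complied, or approved without verifying
    reported: bool  # flagged the message to the security team


def rate_by_campaign(results: List[Result], field: str) -> Dict[str, float]:
    """Share of rows per campaign where the boolean `field` is True."""
    totals, hits = Counter(), Counter()
    for r in results:
        totals[r.campaign] += 1
        hits[r.campaign] += getattr(r, field)
    return {c: hits[c] / totals[c] for c in totals}


def repeat_failers(results: List[Result], min_failures: int = 2) -> List[str]:
    """Employees who failed at least `min_failures` simulations."""
    counts = Counter(r.employee for r in results if r.failed)
    return sorted(e for e, n in counts.items() if n >= min_failures)


# Made-up data for two campaigns and four employees.
log = [
    Result("campaign_1", "ana", True, False),
    Result("campaign_1", "ben", True, False),
    Result("campaign_1", "cy", False, True),
    Result("campaign_1", "dee", False, False),
    Result("campaign_2", "ana", True, False),
    Result("campaign_2", "ben", False, True),
    Result("campaign_2", "cy", False, True),
    Result("campaign_2", "dee", False, True),
]
print(rate_by_campaign(log, "failed"))    # failure rate per campaign
print(rate_by_campaign(log, "reported"))  # reporting rate per campaign
print(repeat_failers(log))                # candidates for targeted follow-up
```

Comparing the per-campaign rates shows the trend, while the repeat list points to where targeted coaching is likely to matter more than retraining everyone.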

Make people your strongest defense

Today's social engineering attacks don't arrive as obvious phishing emails. They appear as video calls from "executives" and voice messages that match familiar accents and tone, catching employees at moments when the request feels routine rather than suspicious.

When employees practice realistic scenarios, they're more likely to question an unexpected executive call, verify a payment request instead of approving it, or flag a suspicious follow-up before money or data leaves the organization.

Adaptive Security is built around that idea. Its training focuses on realistic, AI-driven scenarios and behavior-based feedback so teams can see where risk actually exists and fix it before attackers take advantage. The goal is fewer costly mistakes and a workforce that knows how to avoid scams, even when they feel real.

See how Adaptive simulates real-world social engineering threats like deepfakes and vishing. Request a demo to experience behavior-based training in action.

FAQs about social engineering training

What is the goal of social engineering training?

The goal isn't to catch employees making mistakes but to reduce the chances of a costly one. Good cybersecurity training helps people recognize when something feels off, slow down under pressure, and verify requests before acting.

When training works, fewer people approve risky requests, fewer messages slip through unreported, and attacks are stopped earlier, before causing financial loss or compromising confidential data.

How often should we run social engineering simulations?

More often than once a year. Most organizations run simulations monthly or quarterly, so training stays relevant as cyber threats change.

Short, frequent training sessions are more effective than large annual campaigns because employees learn through repetition and feedback, not by rote memorization of manuals or by taking quizzes on security policies.

Cybersecurity awareness training should also be tied to real behavior. For example, let's say someone clicks a spear-phishing message. They should immediately receive a brief explanation of what they missed and the exact steps to take next time they encounter a similar request.

What are the most common social engineering tactics?

Email phishing attacks are still common, but hackers now rely heavily on impersonation. That includes fake executives, vendors, or IT staff using email, phone calls, text messages, or video.

Multi-step attacks, where an urgent request follows a harmless message, are especially effective because they build trust over time.

Can social engineering training reduce cyber insurance premiums?

It can help. Many insurers view security awareness training programs as part of their risk assessment, particularly programs that demonstrate measurable improvement over time.

While training alone won't guarantee lower premiums, demonstrating reduced risky behavior, ongoing simulations, and transparent reporting can strengthen your position during renewal conversations.

As experts in cybersecurity insights and AI threat analysis, the Adaptive Security Team is sharing its expertise with organizations.
