A security awareness program maturity model is a structured framework that benchmarks an organization's human risk program, spanning the full range from compliance-based training to embedded security culture, and maps the concrete steps required to reach the next level.

Security leaders who apply a maturity model gain a shared language for diagnosing program gaps, prioritizing investments, and demonstrating measurable risk reduction to the board.

This guide covers the five recognized stages of security awareness maturity, from reactive annual training to real-time behavioral analytics, with specific attention to the metrics that distinguish a compliance-driven program from one that produces genuine behavioral change.

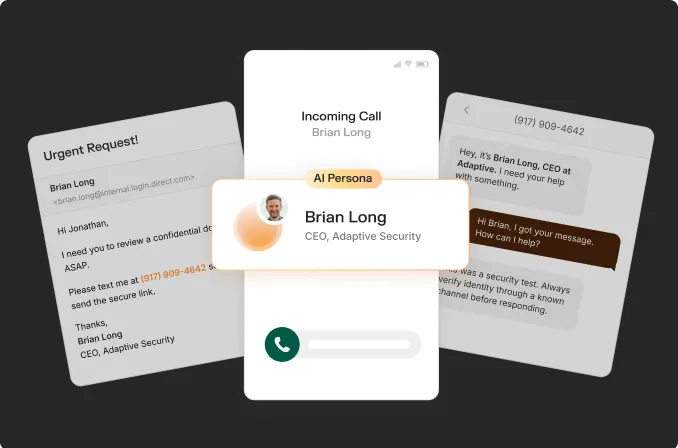

It also addresses how low-maturity programs create structural exposure to AI-generated phishing, deepfake impersonation, and multi-channel social engineering attacks that email-only simulations cannot prepare employees to detect.

According to the IBM Cost of a Data Breach Report 2025, the global average cost of a data breach was $4.44 million, so the financial weight of human risk is no longer abstract. Organizations that treat training completion as the primary objective consistently underestimate what a mature program can prevent.

This guide enables security leaders to assess their current maturity level, identify the specific gaps impeding progress, and build a business case to address them.

Explore an Adaptive Security Demo to see how organizations are advancing beyond compliance-based training and building measurable resilience against modern social engineering threats.

What Is a Security Awareness Program Maturity Model?

A security awareness program maturity model is a structured framework that benchmarks an organization's security awareness efforts across progressive stages, from compliance-based training to an embedded security culture in which safe behavior becomes the default. The model enables security leaders to:

- Diagnose current program state

- Identify gaps in training quality

- Evaluate program governance and employee behavior

- Prioritize improvements most likely to reduce human-layer risk

Maturity models do not assess technical controls such as firewalls or endpoint detection. They evaluate only the human layer: how employees recognize threats, how training is designed and delivered, and how security behavior is measured over time.

Multiple frameworks exist, including NIST CSF-aligned approaches and vendor-developed models, but the most widely adopted standard in the field is the SANS Security Awareness and Culture Maturity Model. The SANS model was developed through practitioner research with hundreds of security awareness officers and formalized by the SANS Institute over more than a decade of annual reporting.

Why Maturity Models Matter for Security Leaders

Most security awareness programs are measured by completion rates, which confirm only whether employees watched a video, not whether they changed how they behave under pressure.

According to the SANS Embedding a Strong Security Culture 2025 Report, which analyzed data from approximately 2,700 security awareness professionals globally, a significant portion of organizations still operate at the lowest maturity levels, relying on compliance-driven programs without behavioral measurement. That gap between being 'trained' and being truly resilient is where social engineering attacks thrive.

Maturity models also give security leaders a shared vocabulary for explaining program gaps to executive stakeholders and justifying investment in capabilities that extend beyond annual training cycles. Without a framework, programs stagnate at whatever level the last budget cycle funded, rather than at the level the threat environment demands.

How the Model Differs From Technical Security Frameworks

NIST CSF, ISO 27001, and SOC 2 assess whether controls exist, whereas a security awareness maturity model assesses whether personnel apply them correctly. The distinction matters: the 2025 Verizon Data Breach Investigations Report found that 60% of breaches involved a human element, including credential misuse, phishing, and social engineering, none of which technical controls alone can prevent.

The model's five-stage progression, from reactive to proactive to transformational, gives security awareness managers a human risk management roadmap grounded in behavioral outcomes rather than checkbox evidence.

Each stage demands more rigorous evaluation, shifting the question from whether employees completed training to whether that training measurably changed the decisions employees make when confronted with an attack.

The Five Stages of a Security Awareness Program Maturity Model

The maturity model maps the progression of organizational security awareness efforts from reactive, compliance-driven basics to a fully instrumented culture of continuous risk reduction.

It functions as both a diagnostic tool and a strategic roadmap, helping security leaders identify where their program currently stands and what investments are required to advance.

This guide is based on the SANS Security Awareness and Culture Maturity Model, which defines this progression across five stages. The gaps between stages are where most programs stall, not from lack of effort but from misaligned priorities.

Security Awareness Program Maturity Model Stage 1: Compliance-Focused

At Stage 1, the program exists because an auditor required it. Training runs once a year, typically a single module pushed to all employees before a compliance deadline. Completion rates are the only metric tracked, and the security team treats the program as a compliance obligation rather than a risk management tool.

The organizational mindset at this stage is defensive, oriented toward satisfying an external requirement rather than changing employee behavior. There are no phishing simulations, no role-specific content, and no feedback loop between training and actual incident data. The gap blocking advancement is measurement. Without behavioral data, there is no evidence of risk reduction to justify further investment in the program.

Regulated industries such as financial services and healthcare often begin at this stage despite higher compliance pressure. Small and medium-sized businesses frequently remain at Stage 1 for extended periods due to limited security staffing. When audit requirements expand, or a phishing incident occurs, advancement becomes urgent.

Ideally, organizations should advance beyond Stage 1 proactively, without requiring external motivation, whether from an auditor or, more critically, a security incident.

Security Awareness Program Maturity Model Stage 2: Engagement-Focused

Stage 2 programs run training more frequently, on a quarterly or monthly basis, and introduce varied content formats to reduce fatigue. Phishing simulations are introduced at this stage, though almost exclusively via email. Click rates become the primary performance metric, giving security teams their first behavioral signal beyond completion logs.

The organizational mindset shifts from training all employees once to maintaining ongoing engagement. Content becomes more varied and interactive, but remains largely generic. The same simulation templates are distributed to the finance team and the IT help desk alike.

The critical gap at this stage is depth: click rates measure exposure, not behavioral change, and email-only simulations fail to address vishing, smishing, and deepfake vectors that now drive a significant share of social engineering attacks.

One example of why multi-channel awareness matters is a hospitality company that was targeted by a coordinated attack spanning email, vishing, and smishing at the same time.

Advancing from Stage 2 requires connecting simulation outcomes to training content, so that a failed phishing test triggers a relevant learning module rather than a generic reminder.

Security Awareness Program Maturity Model Stage 3: Behavior Change-Focused

At Stage 3, the program becomes role-specific: finance teams, for instance, receive invoice-fraud scenarios, while executives encounter impersonation simulations.

Simulation results feed directly into content decisions, and microlearning modules trigger automatically when an employee fails a test. Phishing click rates and reporting rates are both tracked, and repeat clickers are identified for targeted intervention.
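
The feedback loop described above can be sketched in a few lines. This is a minimal illustration rather than any platform's implementation: it assumes simulation results arrive as simple records, and the scenario names, module identifiers, and the two-failure repeat-clicker threshold are all hypothetical.

```python
from collections import Counter
from dataclasses import dataclass

# Hypothetical mapping from simulation scenario to a follow-up microlearning module.
MODULE_FOR_SCENARIO = {
    "invoice_fraud": "verify-payment-requests",
    "exec_impersonation": "verify-executive-requests",
}
DEFAULT_MODULE = "phishing-basics"  # assumed fallback for unmapped scenarios
REPEAT_THRESHOLD = 2  # assumption: two or more failures marks a repeat clicker


@dataclass(frozen=True)
class SimulationResult:
    employee: str
    scenario: str
    clicked: bool


def microlearning_assignments(results):
    """Return one (employee, module) pair for every failed simulation."""
    return [
        (r.employee, MODULE_FOR_SCENARIO.get(r.scenario, DEFAULT_MODULE))
        for r in results
        if r.clicked
    ]


def repeat_clickers(results, threshold=REPEAT_THRESHOLD):
    """Return employees whose failure count meets the threshold, sorted by name."""
    failures = Counter(r.employee for r in results if r.clicked)
    return sorted(name for name, count in failures.items() if count >= threshold)


if __name__ == "__main__":
    results = [
        SimulationResult("ana", "invoice_fraud", True),
        SimulationResult("ana", "exec_impersonation", True),
        SimulationResult("ben", "invoice_fraud", False),
        SimulationResult("cai", "password_reset", True),
    ]
    print(microlearning_assignments(results))
    print(repeat_clickers(results))  # ['ana']
```

Keeping the scenario-to-module mapping in data rather than in the trigger logic lets the awareness team add scenarios without changing code.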

The prevailing mindset at this stage is diagnostic. Training is treated as a signal-driven process rather than a scheduled event, and the program begins to function as a feedback loop in which simulation, measurement, and training are applied iteratively.

The gap separating Stage 3 from Stage 4 is organizational scope. Security awareness remains within the security team, as HR, legal, and finance have not yet been established as partners, and security culture has not yet been measured as an organizational property.

Security Awareness Program Maturity Model Stage 4: Culture-Focused

At Stage 4, the program extends beyond the security team. HR integrates behavioral expectations into onboarding and performance frameworks, while legal and finance participate in scenario design. Security champions are established across departments, creating peer-to-peer reinforcement that centralized training calendars cannot replicate.

Security culture surveying begins at this stage, providing leadership with a baseline measure of whether employees feel empowered to report suspicious activity and whether they trust the security team's guidance. Human risk monitoring becomes a shared organizational responsibility rather than a security-only metric.

The gap separating Stage 4 from Stage 5 is instrumentation. Most Stage 4 programs lack the technical infrastructure to produce real-time, employee-level risk data that can drive automated responses and board-level reporting.

Security Awareness Program Maturity Model Stage 5: Metrics-Driven Culture

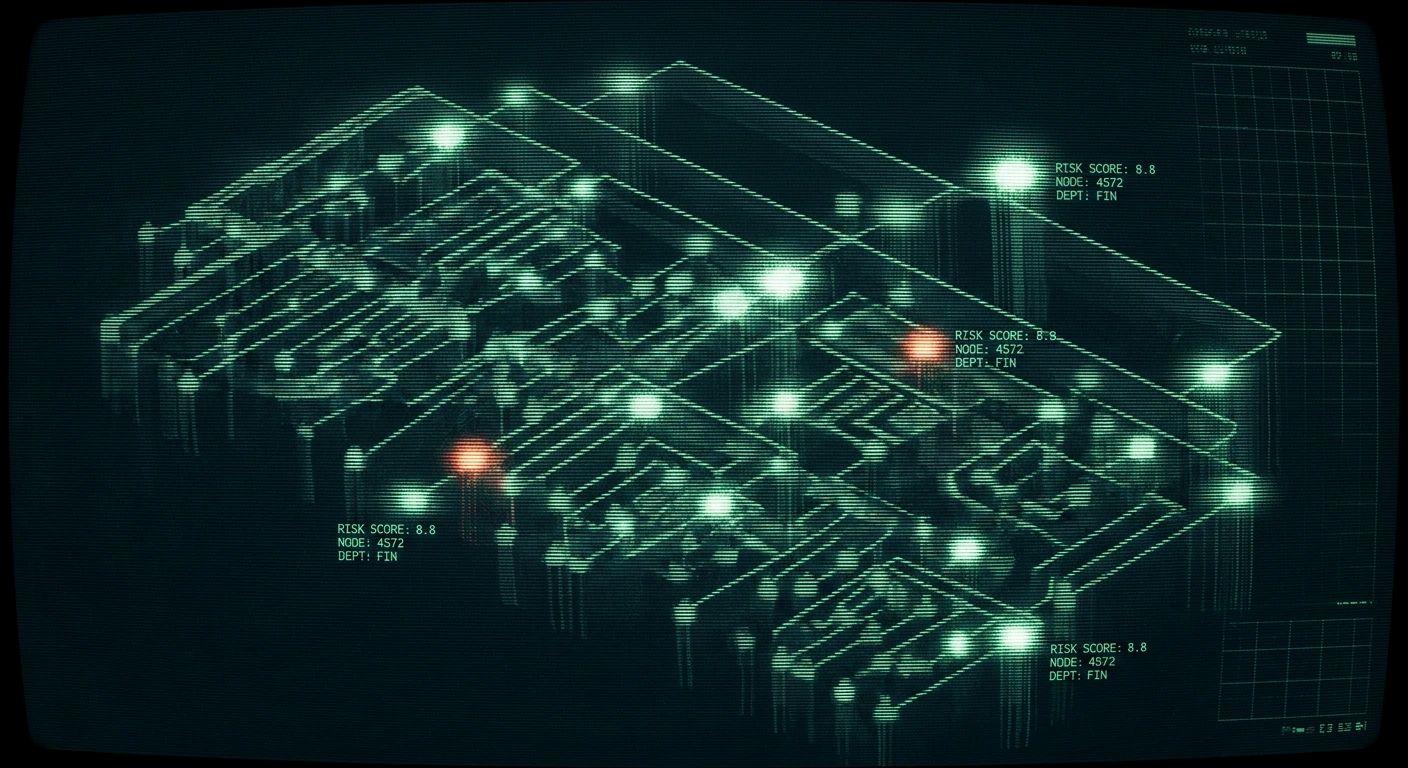

Stage 5 is full-program instrumentation: every employee carries a dynamic risk score updated by simulation behavior, training completion, credential-breach exposure, and open-source intelligence (OSINT) signals.

Department-level dashboards show risk concentration in real time, allowing the security team to identify which roles are trending higher in susceptibility, trigger automated training enrollment for high-risk individuals, and present board-ready risk-reduction data tied to measurable outcomes.
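
Per-employee scoring and a department roll-up of this kind can be sketched as a weighted sum. This is a minimal sketch under stated assumptions: each signal is pre-normalized to the 0 to 1 range, and the signal names, weights, and the enrollment threshold of 60 are hypothetical values chosen for illustration, not a published scoring model.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical weights; they sum to 1.0 so scores stay within 0..100.
WEIGHTS = {
    "simulation_failure_rate": 0.40,
    "training_gap": 0.20,  # 1.0 = no recent training completed
    "credential_breach_exposure": 0.25,
    "osint_exposure": 0.15,
}
ENROLL_THRESHOLD = 60.0  # hypothetical cut-off for automatic remediation


def risk_score(signals):
    """Weighted sum of signals normalized to 0..1, scaled to 0..100.

    Missing signals count as zero risk, which is an assumption."""
    total = sum(weight * signals.get(name, 0.0) for name, weight in WEIGHTS.items())
    return round(100 * total, 1)


def department_view(employees):
    """Return (mean score per department, names to auto-enroll in remediation).

    employees: dicts with 'name', 'department', and 'signals'."""
    scores_by_dept = defaultdict(list)
    to_enroll = []
    for emp in employees:
        score = risk_score(emp["signals"])
        scores_by_dept[emp["department"]].append(score)
        if score >= ENROLL_THRESHOLD:
            to_enroll.append(emp["name"])
    dept_means = {dept: round(mean(s), 1) for dept, s in scores_by_dept.items()}
    return dept_means, to_enroll
```

In practice the weights would need tuning against the organization's own incident data; keeping them in a single table makes that tuning easy to review.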

The organizational mindset at Stage 5 treats security awareness as a continuous improvement system rather than a program that runs on a schedule. The gap for most organizations trying to reach this stage is data architecture. Legacy security awareness training (SAT) platforms do not produce the behavioral signals required to build real-time human risk scores. Reaching Stage 5 requires a platform built to measure behavior continuously, not just log completions.

How to Assess an Organization's Current Maturity Level

Benchmarking a program against the maturity model begins with four concrete steps:

- Establishing behavioral baselines through phishing simulations and human risk surveys

- Auditing existing training content

- Reviewing the metrics currently being captured

- Mapping current activities against the five-stage maturity definitions

Once this assessment is complete, Culture Maturity Indicators (CMIs) are calculated from simulation outcomes, survey data, and behavioral signals to produce a numeric score that locates the program on the maturity curve.

The final step involves formalizing assessment findings in a Program Charter, the document that defines scope, goals, stakeholders, and advancement milestones, and formally marks the transition from one stage to the next.

Run a Phishing Baseline and Human Risk Survey to Assess the Security Awareness Program Maturity Model

Measurable behavioral data should be established before any other action is taken. A phishing simulation should be deployed across the entire employee population, recording click rates, repeat-click rates, and reporting rates, rather than relying on training module completion percentages alone.

This should be paired with a short human risk survey that captures self-reported security behaviors, confidence levels, and department-specific threat exposure.
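
The three baseline rates can be computed directly from per-employee simulation outcomes. A minimal sketch, assuming one outcome record per employee per round and a set of employees who clicked in earlier rounds; definitions of repeat-click rate vary between tools, and the one used here (the share of this round's clickers who had clicked before) is an assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Outcome:
    employee: str
    clicked: bool
    reported: bool


def baseline_metrics(current, previous_clickers=frozenset()):
    """Compute click, repeat-click, and report rates for one simulation round.

    current: list of Outcome records, assumed to be one per employee.
    previous_clickers: employees who clicked in any earlier round.
    """
    total = len(current)
    if total == 0:
        raise ValueError("no simulation outcomes supplied")
    clickers = {o.employee for o in current if o.clicked}
    repeaters = clickers & set(previous_clickers)
    return {
        "click_rate": len(clickers) / total,
        # Share of this round's clickers who had also clicked in an earlier round.
        "repeat_click_rate": len(repeaters) / len(clickers) if clickers else 0.0,
        "report_rate": sum(o.reported for o in current) / total,
    }
```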

Audit the Existing Training Content to Assess the Security Awareness Program Maturity Model

All active training assets should be reviewed and evaluated for recency, format, and role-specificity. If the most recent module predates AI-generated spear phishing, or if finance, legal, and IT teams receive identical content, the program is functioning at Stage 2 or below, regardless of completion rates.

The SANS 2025 Security Awareness Report, drawing on data from more than 2,700 practitioners across 70 countries, found that influencing employee behavior requires 3 to 5 years of sustained, targeted effort, and embedding security culture requires 5 to 10 years. Progress stalls when content is generic.
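
The two red flags in this audit, stale modules and identical content across functions, can be checked mechanically against a content inventory. A sketch assuming a hypothetical inventory format and an arbitrary cut-off date standing in for "predates AI-generated spear phishing"; it only surfaces findings and does not assign a stage by itself.

```python
from datetime import date

# Arbitrary cut-off standing in for "predates AI-generated spear phishing" (assumption).
STALE_BEFORE = date(2023, 1, 1)
ROLE_TEAMS = {"finance", "legal", "it"}


def audit_content(inventory, stale_before=STALE_BEFORE):
    """Flag stale and generic training content.

    inventory: list of dicts with 'module' (str), 'updated' (date) and
    'audiences' (set of team names, or {"all"} for company-wide modules).
    """
    newest = max(item["updated"] for item in inventory)
    # Role-specific = aimed at some of the role teams, but not all of them at once.
    role_specific = [
        item["module"]
        for item in inventory
        if item["audiences"] & ROLE_TEAMS and not ROLE_TEAMS <= item["audiences"]
    ]
    return {
        "newest_module_is_stale": newest < stale_before,
        "role_specific_modules": role_specific,
        "generic_only": not role_specific,
    }
```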

Review the Metrics Against Behavioral Signals to Assess the Security Awareness Program Maturity Model

Most programs track completion logs, while mature programs track behavioral change. Organizations should audit whether current dashboards capture phishing click rates, repeat click rates, reporting rates, and triggered microlearning participation alongside completion data. Completion logs confirm attendance; behavioral signals confirm whether training is actually reducing risk.
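
A dashboard review can start as a simple set comparison between the metrics a dashboard already tracks and the behavioral signals listed above. A minimal sketch; the metric identifiers are hypothetical labels for those signals.

```python
# Behavioral signals named in the text above; the identifiers are illustrative.
BEHAVIORAL_METRICS = {
    "phishing_click_rate",
    "repeat_click_rate",
    "report_rate",
    "triggered_microlearning_participation",
}


def dashboard_gaps(tracked):
    """Return behavioral metrics missing from a dashboard, sorted for stable output."""
    return sorted(BEHAVIORAL_METRICS - set(tracked))


def is_completion_only(tracked):
    """True when a dashboard tracks completion data but no behavioral signal."""
    tracked = set(tracked)
    return "completion_rate" in tracked and not (tracked & BEHAVIORAL_METRICS)
```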

Calculate Culture Maturity Indicators to Assess the Security Awareness Program Maturity Model

Culture Maturity Indicators (CMIs) are composite scores derived from three data streams:

- Simulation outcomes (click rates, reporting rates, repeat offenders)

- Behavioral survey responses

- Training engagement signals, such as microlearning completion following a failed simulation.

On a 0 to 100 scale, a security culture score below 60 reflects low organizational maturity, typically Stage 1 or 2, characterized by high click rates, low reporting rates, and minimal cross-functional involvement. Scores above 80 indicate embedded cultural norms, where reporting rates are high, click rates are low, and employees treat simulations as a skill-building exercise rather than a compliance obligation.
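
The text does not publish a CMI formula, so the sketch below is only one plausible way to combine the three data streams into a 0 to 100 score. The component definitions, the equal weighting, and the label for the 60 to 80 band are assumptions; only the below-60 and above-80 interpretations come from the text above.

```python
def cmi_score(click_rate, report_rate, repeat_offender_rate,
              survey_score, remediation_completion):
    """Combine three data streams into a hypothetical 0..100 composite.

    All inputs are fractions in 0..1:
      simulation stream: click_rate, report_rate, repeat_offender_rate
      survey stream: survey_score (normalized average of survey responses)
      engagement stream: remediation_completion (microlearning finished after a failed simulation)
    """
    # Lower click and repeat-offender rates are better, so invert them.
    simulation = ((1 - click_rate) + report_rate + (1 - repeat_offender_rate)) / 3
    streams = (simulation, survey_score, remediation_completion)
    # Equal weighting across the three streams is an assumption.
    return round(100 * sum(streams) / len(streams), 1)


def maturity_band(score):
    """Interpret a score using the thresholds given in the text."""
    if score < 60:
        return "low maturity (typically Stage 1 or 2)"
    if score > 80:
        return "embedded cultural norms"
    return "intermediate (band not defined in the text)"
```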

Map Activities to the Five Stages and Identify Stakeholder Gaps to Assess the Security Awareness Program Maturity Model

Organizations should map current program activities against the five-stage maturity definitions and identify where execution stops. Documentation should capture which cross-functional stakeholders (HR, legal, IT, GRC, and finance) are actively involved and which are absent. A program in which only IT security participates is structurally limited to Stage 2, regardless of the frequency of simulations, because cultural change requires organizational co-ownership.
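
The stakeholder check and the IT-security-only ceiling can be encoded directly. A minimal sketch; the stakeholder labels (including "it_security" for the security team itself) are assumptions, and the cap at Stage 2 is the only rule taken from the text.

```python
# Cross-functional stakeholders named in the text; "it_security" is the security team itself.
CROSS_FUNCTIONAL = {"hr", "legal", "it", "grc", "finance"}
SECURITY_TEAM = "it_security"


def stakeholder_gaps(active):
    """Return cross-functional stakeholders that are not actively involved."""
    return sorted(CROSS_FUNCTIONAL - {s.lower() for s in active})


def stage_ceiling(active, mapped_stage):
    """Cap the mapped stage at 2 when IT security is the only participant."""
    others = {s.lower() for s in active} - {SECURITY_TEAM}
    return mapped_stage if others else min(mapped_stage, 2)
```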

Write or Update a Program Charter to Assess the Security Awareness Program Maturity Model

Assessment findings only drive progress when formalized. A Program Charter defines program scope, measurable goals, named stakeholders across every function, and the specific behavioral and CMI milestones that mark advancement from one stage to the next. Without a charter, stage transitions occur informally or not at all.

The charter translates assessment data into organizational commitment and should be revisited whenever a CMI score crosses a threshold or the threat landscape materially changes.

The five-stage framework delivers its most actionable guidance in defining what each stage concretely demands from the organization.

Metrics a Mature Security Awareness Program Should Track

A security awareness program's maturity is defined by the metrics used to measure its progress. Most programs track what is easy to count, including training completion rates, policy acknowledgment records, and audit pass/fail outcomes. What these programs fail to capture is what actually predicts whether employees will make safer decisions under pressure. That gap separates a compliance-driven program from a mature one.

Compliance Metrics vs. Impact Metrics

Compliance metrics and impact metrics answer fundamentally different questions.

Compliance metrics measure whether activity occurred, including whether employees completed the module, signed the policy, or passed the audit.

Impact metrics measure changes in behavior, including whether employees are clicking phishing links less frequently, reporting more suspicious emails, and adopting controls such as MFA and password managers at higher rates.

A program reporting only compliance metrics measures inputs rather than outcomes. A 95% training completion rate does not guarantee that employees will recognize a spear phishing email.

Impact metrics require tracking:

- Phishing simulation click rates over time

- Repeat click rates among previously trained employees

- Phish report rates

- MFA adoption

- Password manager usage

MFA adoption and password manager uptake function as lagging indicators, confirming that behavioral change has taken hold sufficiently to produce durable, measurable action. Combined with credential breach exposure monitoring, these signals provide security teams with a composite picture of actual human risk.

How Attacker Dwell Time Connects to Security Awareness Program Maturity

Attacker dwell time, the period between initial compromise and detection, serves as a downstream maturity indicator when correlated with phishing simulation data and incident response timelines.

The Mandiant M-Trends 2025 report recorded a global median dwell time of 11 days, meaning that at the median, attackers spend more than a week and a half undetected inside networks after gaining access. Organizations whose employees report phishing faster and whose security teams are trained to act on those reports compress this window. Shorter dwell time signals a more mature human layer, not just better tooling.
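
Organizations can track the same statistic internally. A sketch assuming a hypothetical incident log with compromise and detection dates and a flag for whether an employee report triggered detection; splitting the median this way shows whether employee reporting coincides with shorter dwell times, though it does not by itself prove causation.

```python
from datetime import date
from statistics import median


def dwell_days(incident):
    """Days between initial compromise and detection."""
    return (incident["detected"] - incident["compromised"]).days


def median_dwell(incidents):
    """Median dwell time overall and split by whether an employee report led to detection.

    incidents: dicts with 'compromised' and 'detected' dates and an
    'employee_reported' flag (hypothetical field names).
    """
    result = {"all": median(dwell_days(i) for i in incidents)}
    reported = [dwell_days(i) for i in incidents if i["employee_reported"]]
    other = [dwell_days(i) for i in incidents if not i["employee_reported"]]
    if reported:
        result["employee_reported"] = median(reported)
    if other:
        result["other"] = median(other)
    return result


if __name__ == "__main__":
    log = [
        {"compromised": date(2025, 1, 1), "detected": date(2025, 1, 5), "employee_reported": True},
        {"compromised": date(2025, 2, 1), "detected": date(2025, 2, 12), "employee_reported": False},
        {"compromised": date(2025, 3, 1), "detected": date(2025, 3, 31), "employee_reported": False},
    ]
    print(median_dwell(log))
```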

Why Boards Need Impact Metrics, Not Completion Logs

Boards cannot act on training completion percentages; they need risk-reduction data expressed in business terms. CISOs who quantify that reduction in dollar terms earn budget authority that completion logs cannot provide.

Behavioral analytics and real-time human risk scoring per employee transform static training records into dynamic intelligence. These tools identify which individuals, teams, and roles present the highest risk, and make the return-on-investment case for continued investment concrete and defensible. Whether a program reports outputs or demonstrates measurable outcomes determines whether security awareness earns a permanent position in budget planning.
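
One common simplification for expressing risk reduction in dollars is expected loss: the estimated annual probability of a breach multiplied by the cost of a breach. The sketch below defaults to the $4.44 million IBM 2025 global average cited at the start of this guide; the before-and-after probabilities are hypothetical inputs each organization would have to estimate from its own data, and the model ignores partial losses and variation in breach cost.

```python
# Global average breach cost from the IBM Cost of a Data Breach Report 2025.
IBM_2025_AVG_BREACH_COST = 4_440_000


def expected_loss(annual_breach_probability, breach_cost=IBM_2025_AVG_BREACH_COST):
    """Expected annual loss = probability of a breach x cost of one breach."""
    if not 0 <= annual_breach_probability <= 1:
        raise ValueError("probability must be between 0 and 1")
    return annual_breach_probability * breach_cost


def risk_reduction_dollars(p_before, p_after, breach_cost=IBM_2025_AVG_BREACH_COST):
    """Dollar value of lowering the estimated breach probability from p_before to p_after."""
    return expected_loss(p_before, breach_cost) - expected_loss(p_after, breach_cost)


if __name__ == "__main__":
    # Hypothetical estimates: 10% annual probability before the program, 6% after.
    print(f"${risk_reduction_dollars(0.10, 0.06):,.0f}")
```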

How to Advance Through Each Security Awareness Program Maturity Model Stage

Advancing a security awareness program maturity model requires navigating four distinct transitions, each demanding a specific shift in methodology, investment, and organizational alignment. The path from reactive compliance to predictive risk reduction runs through multi-channel simulation, role-based training, cross-functional culture-building, and behavioral analytics tied to board-level reporting. Each transition also requires a shift in how program leaders communicate value to leadership, from completion rates to measurable risk reduction.

Stage 1 to Stage 2: Replace Annual Modules With Active Measurement

Stage 1 programs operate on a single annual training cycle with no baseline data to act on. The annual module should be replaced with multi-format content delivered in short, spaced intervals across the year, including video scenarios, knowledge checks, and policy reminders.

Phishing simulations should be launched immediately, even before the curriculum is fully developed, as simulation data establishes the baseline click rate against which all future phishing metrics are measured.

A simple metric dashboard tracking click rate, report rate, and training completion should be established, as these three indicators are necessary to demonstrate progress to leadership and justify continued investment.

Stage 2 to Stage 3: Expand Channels and Personalize by Role

Email-only simulation leaves the most dangerous attack surfaces untested. Entering Stage 3 requires adding vishing and smishing simulations to the program, since finance teams and executives are frequent targets of voice-based social engineering, not only email-based attacks.

Channel expansion should be paired with role-specific training paths:

- Finance employees train on invoice-fraud scenarios

- Developers practice spotting credential-reset manipulation

- Executives complete executive-impersonation drills

- HR personnel learn about fraudulent applicant schemes

Microlearning should also be triggered automatically within 24 hours of a simulation failure, as in-the-moment feedback produces stronger behavioral retention than scheduled refreshers.
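
The role-based paths and the 24-hour feedback window can be expressed as a small lookup plus a deadline check. A sketch assuming failure records carry a failure timestamp and, once delivered, a microlearning timestamp; the scenario identifiers, the record fields, and the general fallback path are hypothetical.

```python
from datetime import datetime, timedelta

# Role-to-scenario paths from the list above; identifiers are illustrative.
ROLE_PATHS = {
    "finance": "invoice_fraud",
    "developer": "credential_reset_manipulation",
    "executive": "executive_impersonation",
    "hr": "fraudulent_applicant",
}
FALLBACK_PATH = "general_phishing"  # assumption for roles without a dedicated path
FEEDBACK_WINDOW = timedelta(hours=24)


def training_path(role):
    """Return the scenario track for a role, or the fallback track."""
    return ROLE_PATHS.get(role, FALLBACK_PATH)


def late_feedback(failures, now: datetime):
    """Return employees whose microlearning missed the 24-hour window.

    failures: dicts with 'employee', 'failed_at', and optionally 'microlearning_at'.
    Undelivered items still inside the window are not reported as late.
    """
    late = []
    for f in failures:
        deadline = f["failed_at"] + FEEDBACK_WINDOW
        delivered = f.get("microlearning_at")
        if delivered is None:
            if now > deadline:
                late.append(f["employee"])
        elif delivered > deadline:
            late.append(f["employee"])
    return late
```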

Stage 3 to Stage 4: Build the Culture Infrastructure

Cultural maturity does not emerge from training volume; it requires deliberate organizational architecture. Security culture surveys should be conducted twice annually to measure whether employees associate security with shared responsibility or compliance obligation.

High-engagement employees in non-security departments should be identified and developed as security champions through structured recognition and clearly defined responsibilities.

Connecting security behavior signals to onboarding checklists and performance review criteria ensures that security transitions from a training event to a professional standard.

Stage 4 to Stage 5: Deploy Behavioral Analytics and Automate Risk Response

Stage 5 requires the program to operate continuously without manual intervention. Human risk monitoring scores each employee in real time based on simulation behavior, training completion, and open-source intelligence (OSINT) exposure signals, then automatically enrolls high-risk individuals into targeted remediation.

Board-ready reporting should translate these scores into projected financial exposure and measurable risk reduction over rolling 90-day periods. Explicit mapping to NIST CSF and ISO 27001 connects security culture outcomes to the governance frameworks recognized by boards and auditors.
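
A rolling 90-day comparison of this kind can be computed from a daily series of organization-wide risk scores. A minimal sketch, assuming such a series exists (for example, the daily average of per-employee scores); the percentage it returns could then be translated into projected financial exposure with whatever loss model the organization already uses.

```python
from datetime import date, timedelta
from statistics import mean

WINDOW = timedelta(days=90)


def rolling_90_day_reduction(daily_scores: dict, as_of: date) -> float:
    """Percentage drop in mean risk score: last 90 days vs. the 90 days before.

    daily_scores maps each date to an organization-wide average risk score.
    A positive result means risk went down.
    """
    current = [s for d, s in daily_scores.items() if as_of - WINDOW < d <= as_of]
    prior = [s for d, s in daily_scores.items() if as_of - 2 * WINDOW < d <= as_of - WINDOW]
    if not current or not prior:
        raise ValueError("need scores in both 90-day windows")
    baseline = mean(prior)
    return round(100 * (baseline - mean(current)) / baseline, 1)
```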

What Gets Organizations Stuck and How Behavior Science Fixes It

The four most reliable security awareness program failure points are:

- Absent leadership sponsorship

- No dedicated awareness role

- Budget allocated exclusively to technical controls

- An inability to communicate in terms of business risk

Each barrier has a structural resolution:

- Securing a named executive sponsor before requesting a budget

- Staffing a dedicated awareness practitioner rather than assigning the function to an overloaded IT generalist

- Reframing every budget request around expected breach cost reduction rather than training seat count

BJ Fogg's Behavior Model, developed at Stanford, holds that behavior requires motivation, ability, and a prompt occurring simultaneously, and offers a precise design framework for each stage transition.

Programs stall when they optimize for motivation through awareness content while neglecting ability, which involves making secure actions frictionless, and prompt, which requires timely and context-specific triggers. Microlearning delivered at the moment of simulation failure satisfies all three conditions simultaneously, making the secure behavior automatic rather than effortful.
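
BJ Fogg's model is often summarized as B = MAP. The toy sketch below illustrates the idea for a single simulated phishing moment; representing motivation and ability as numbers between 0 and 1, and the action line as a fixed threshold on their product, are simplifying assumptions of this example, not Fogg's own formulation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Moment:
    motivation: float  # 0..1, how much the employee wants to act securely right now
    ability: float     # 0..1, how easy the secure action is (1 = effortless)
    prompted: bool     # whether a timely, context-specific prompt is present


ACTION_LINE = 0.25  # hypothetical threshold; Fogg's action line is a curve, not a number


def behavior_likely(m, action_line=ACTION_LINE):
    """Behavior needs a prompt plus enough combined motivation and ability.

    Modeling the combination as motivation * ability is a simplifying assumption."""
    return m.prompted and m.motivation * m.ability >= action_line
```

In this toy model, raising ability (for example, a one-click report button) can push a low-motivation moment over the line, while no amount of motivation helps when the prompt is missing.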

Why AI-Powered Threats Expose Gaps in Low-Maturity Security Awareness Programs

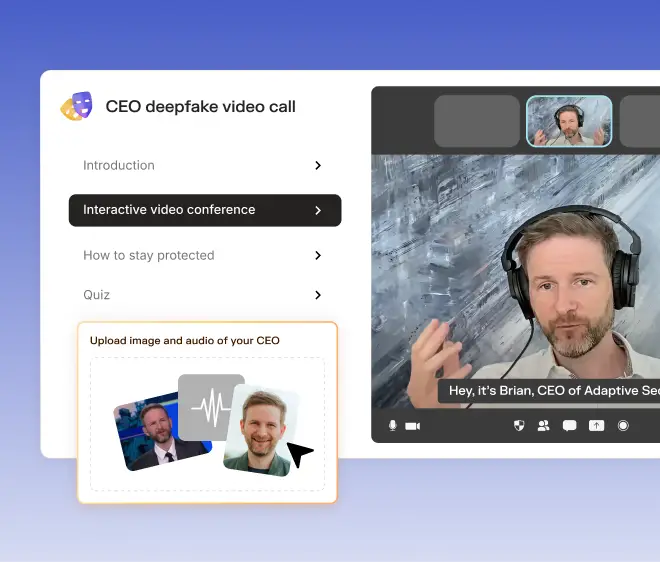

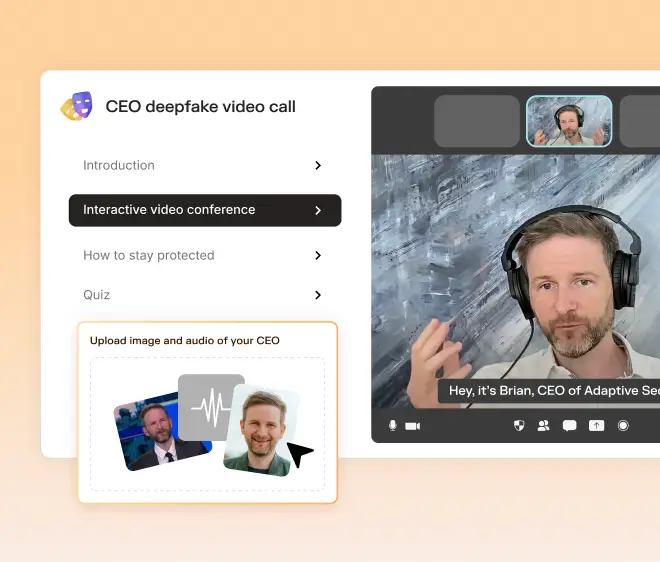

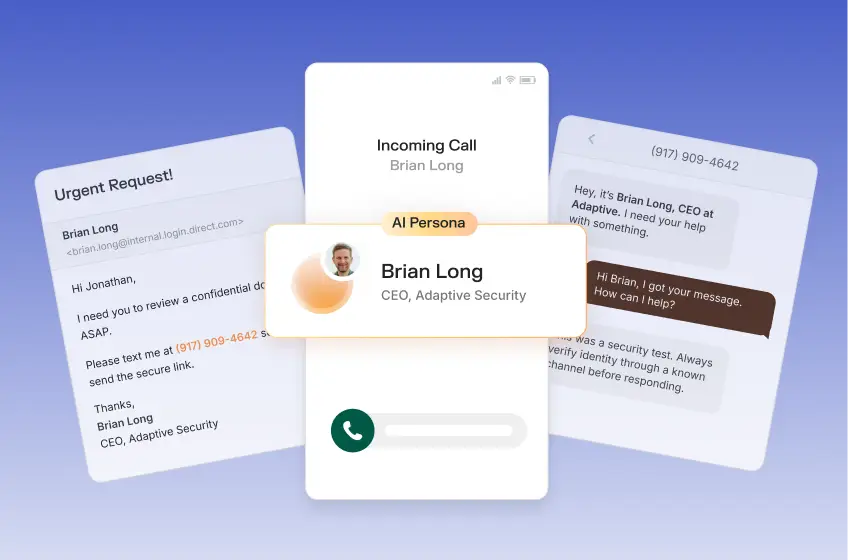

A security awareness program built on annual email phishing tests cannot defend against an AI-cloned CFO voice deployed in a phone-based social engineering attack. A 2024 study by McAfee found that one in four people reported experiencing an AI voice scam, highlighting how Stage 1 and Stage 2 programs, defined by infrequent, single-channel simulations, leave employees entirely unprepared for the attack surface they now face.

What Does a Low-Maturity Security Awareness Program Actually Miss?

The structural failure is channel coverage. A Stage 1 or Stage 2 program trains employees to identify suspicious emails, but does not train a finance manager to question an urgent wire transfer request delivered over a video call in which every participant on screen is a deepfake. The resulting loss would not be a technology failure but a failure of training architecture.

A deepfake CFO impersonation bypasses every instinct conditioned by email-only training, which emphasizes identifying fraudulent content rather than maintaining skepticism toward seemingly familiar interactions.

What Does a Mature Program Do Differently?

Closing these gaps requires multi-channel phishing simulations spanning email, voice, SMS (smishing), and deepfake video. Legacy security awareness training platforms were architected for 2010s-era threats and lack mechanisms to simulate an AI-cloned executive voice or a synthetic video during a conference call.

A mature program runs role-specific scenarios across the full attack surface, ensuring that employees practice responding to incidents before encountering them in the field. This gap in coverage is precisely what the five stages of security awareness program maturity are designed to measure and close.

How Program Maturity Connects to Compliance Frameworks and Cyber Insurance

A security awareness program maturity model does more than organize training activities. It determines whether compliance requirements serve as a genuine risk-reduction engine or merely a documentation exercise.

Every major framework, including SOC 2, HIPAA, PCI-DSS, GDPR, NIST CSF, ISO 27001, and CMMC, requires or expects documented security awareness training, yet none prescribes a specific maturity level. Organizations that treat compliance as a ceiling rather than a floor consistently plateau at Stage 1, completing annual training, collecting acknowledgment signatures, and stopping there.

Why Compliance-Only Programs Fail to Reduce Risk

The structural problem with compliance-minimum programs is that they demonstrate activity rather than behavioral change. A training completion log confirms that employees attended a module but provides no indication of whether they can recognize a spear phishing email or a deepfake video request.

Audit-ready documentation from a Stage 1 program satisfies the letter of compliance requirements while leaving the organization exposed to the very risks those frameworks were designed to reduce.

What Higher Maturity Produces as a Byproduct

Advancing program maturity generates compliance documentation as a byproduct. Training completion records, phishing simulation results, policy acknowledgment logs, and behavioral risk score data come from a program focused on measurable behavioral change rather than on producing paperwork.

Continuous human risk monitoring platforms generate role-specific simulation data and dynamic risk scoring that map to NIST CSF categories such as Awareness and Training (PR.AT), support ISO 27001 Annex A, and help satisfy HIPAA workforce training requirements with documented behavioral evidence rather than self-attestation.

How Maturity Now Shapes Cyber Insurance Underwriting

Cyber insurers have shifted from checkbox questionnaires to evidence-based underwriting. When pricing policies and setting coverage conditions, carriers now evaluate:

- Phishing simulation frequency

- MFA adoption rates

- Training program cadence

- Incident response capabilities

Self-attestation alone no longer satisfies top-tier underwriters. A higher-maturity program produces the documented behavioral evidence that can move an organization from a high-risk pricing tier to a more favorable one, reducing premiums and expanding coverage terms.

Every stage of a mature security awareness program advances both risk posture and underwriting position simultaneously, meaning the investment in behavioral change yields dividends well beyond the security team.

Security Awareness Maturity vs. Broader Human Risk Management

Security awareness program maturity modeling and human risk management (HRM) are not parallel disciplines. They are the same discipline measured at different levels of precision.

HRM is the practice of continuously monitoring, measuring, and mitigating the risks that human behavior introduces to an organization, including security awareness, susceptibility to phishing, open-source intelligence (OSINT) exposure, history of credential breaches, AI tool usage, and shadow IT behavior.

Why Maturity Stages Map Directly to HRM Development

Advancing through maturity stages is, in practice, the process of building a functioning HRM program from its foundation. Early stages address the baseline, covering training completion and policy acknowledgment as the minimum evidence that employees have been exposed to risk guidance.

Middle stages shift focus to behavioral change, using phishing susceptibility rates and simulation performance as the primary signal. Upper stages achieve what HRM programs ultimately exist to do: deliver continuous, data-driven human risk visibility at the individual, team, and organizational levels.

What a Mature HRM Program Actually Measures

Mature programs assess human risk across ten dimensions:

- Training completion

- Phishing susceptibility

- Credential exposure

- OSINT footprint

- Policy acknowledgment

- Role-based risk

- Executive exposure

- Third-party access

- Incident history

- Behavioral analytics

Each dimension captures a distinct failure mode. Credential exposure reflects passive risk that an employee may not control, while behavioral analytics surfaces active decisions, such as pasting sensitive data into unsanctioned AI tools. Together, these dimensions form the complete picture that early-stage programs focused solely on training completion rates cannot see.
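
The ten dimensions can double as a coverage checklist. A minimal sketch that reports which dimensions a program currently measures; the identifiers simply restate the list above, and treating each dimension as equally important is an assumption.

```python
# The ten dimensions from the list above, as illustrative identifiers.
HRM_DIMENSIONS = (
    "training_completion", "phishing_susceptibility", "credential_exposure",
    "osint_footprint", "policy_acknowledgment", "role_based_risk",
    "executive_exposure", "third_party_access", "incident_history",
    "behavioral_analytics",
)


def coverage(measured):
    """Return (fraction of the ten dimensions measured, list of unmeasured ones)."""
    measured = set(measured)
    missing = [d for d in HRM_DIMENSIONS if d not in measured]
    return (len(HRM_DIMENSIONS) - len(missing)) / len(HRM_DIMENSIONS), missing
```

A program that measures only training completion and policy acknowledgment covers 20% of the dimensions, which is the blind spot described above.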

Organizations that treat security awareness maturity as a compliance milestone stop at the middle stages, while those that treat it as an HRM framework reach the upper stages, where risk is quantified, remediation is automated, and security leaders can demonstrate a measurable reduction in breach probability to boards and regulators.

Those upper stages each carry a distinct profile of capabilities, costs, and organizational readiness requirements.

Organizations seeking to achieve the final stage of security awareness program maturity are encouraged to explore the Adaptive Security Demo to understand how the platform supports the full range of capabilities required.

Frequently Asked Questions About Security Awareness Program Maturity

What Is a Security Awareness Program Maturity Model?

A security awareness program maturity model is a structured framework that defines progressive stages of program development, from ad hoc compliance-driven training to a fully embedded security culture in which employees recognize and report threats in real time.

Organizations use it to benchmark their current state, identify specific capability gaps, and build a roadmap with measurable milestones rather than undefined next steps. Most models define between four and six stages, each with distinct characteristics across content strategy, simulation frequency, risk measurement, and behavioral outcomes.

Why Does Maturity Level Matter for Breach Risk?

Organizations at the lowest maturity stages treat training as an annual checkbox, leaving the human layer exposed for months between interventions. Higher-maturity programs close that gap through continuous simulation, role-based content, and real-time risk scoring rather than annual completion logs.

How Can an Organization Assess Its Current Maturity Stage?

Organizations should begin by auditing four signals:

- The frequency of phishing simulations

- Whether training is role-specific or generic

- Whether behavioral change or only completion rates are tracked

- Whether leadership receives quantified human risk data

Organizations that run annual simulations, assign the same module to every employee, and report completion percentages to the board are operating at Stage 1 or Stage 2. Those running monthly, multi-channel simulations with automated remediation triggered by individual behavioral signals are approaching Stage 4 or Stage 5.
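The four audit signals can be turned into a rough first-pass classifier. The sketch below is illustrative only. The `ProgramSignals` fields, the `estimate_stage_band` function, and the thresholds (at most one simulation per year counts as "annual", at least twelve as "monthly") are hypothetical choices. The middle "Stage 3" bucket is also an assumption, since the text above describes only the two ends of the range.

```python
from dataclasses import dataclass


# Hypothetical audit record for the four signals described above.
@dataclass
class ProgramSignals:
    simulations_per_year: int        # phishing simulation frequency
    channels: set                    # e.g. {"email"} or {"email", "sms", "voice"}
    role_specific_training: bool     # False if every employee gets the same module
    tracks_behavior: bool            # False if only completion rates are tracked
    board_gets_risk_data: bool       # False if the board sees only completion percentages
    automated_remediation: bool      # remediation triggered by individual behavioral signals


def estimate_stage_band(s: ProgramSignals) -> str:
    """Map audit signals to a rough maturity band (illustrative heuristic only)."""
    # Annual simulations, one generic module, completion-only board reporting.
    if (s.simulations_per_year <= 1
            and not s.role_specific_training
            and not s.board_gets_risk_data):
        return "Stage 1-2"
    # Monthly multi-channel simulation with behavior-triggered, automated remediation.
    if (s.simulations_per_year >= 12
            and len(s.channels) > 1
            and s.automated_remediation
            and s.tracks_behavior):
        return "Stage 4-5"
    # Everything in between; this middle bucket is an assumption, not from the source.
    return "Stage 3 (approx.)"


low = ProgramSignals(1, {"email"}, False, False, False, False)
high = ProgramSignals(12, {"email", "sms", "voice"}, True, True, True, True)
print(estimate_stage_band(low), "|", estimate_stage_band(high))
```

A real assessment would weigh more evidence than a handful of flags and counts; the value of writing the criteria down is that they become explicit and repeatable from one audit to the next.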

How Long Does It Take to Advance from One Maturity Stage to the Next?

Movement between stages depends on training frequency, simulation variety, and whether remediation is automated or manual. Organizations that shift from annual to quarterly simulations and introduce role-specific content typically observe measurable reductions in click rates within 90 days.

Reaching the highest maturity stages, where security behavior is self-sustaining and employees proactively report threats, generally requires 12 to 24 months of consistent, data-driven program execution.

What Metrics Signal That a Program Is Ready to Advance?

The clearest advancement signals are:

- Declining phishing simulation click rates

- Increasing employee-reported phishing rates

- A reduction in repeat offenders across simulation rounds
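All three signals can be computed from per-round simulation results. This minimal sketch assumes each round is recorded as a mapping from a user ID to one of three hypothetical outcomes (`"clicked"`, `"reported"`, or `"ignored"`), and it defines a repeat offender as anyone who clicked in more than one round. Both the schema and that definition are assumptions, not a standard.

```python
from collections import Counter

# Hypothetical schema: one simulation round maps user ID -> outcome,
# where outcome is "clicked", "reported", or "ignored".
Round = dict


def round_rates(results: Round) -> tuple:
    """Return (click_rate, report_rate) for a single simulation round."""
    total = len(results)
    if total == 0:
        raise ValueError("round has no recipients")
    clicks = sum(1 for outcome in results.values() if outcome == "clicked")
    reports = sum(1 for outcome in results.values() if outcome == "reported")
    return clicks / total, reports / total


def repeat_offenders(rounds: list) -> set:
    """Users who clicked in more than one round (assumed definition)."""
    click_counts = Counter(
        user
        for results in rounds
        for user, outcome in results.items()
        if outcome == "clicked"
    )
    return {user for user, count in click_counts.items() if count > 1}


rounds = [
    {"ana": "clicked", "ben": "ignored", "cho": "clicked", "dev": "reported"},
    {"ana": "clicked", "ben": "reported", "cho": "ignored", "dev": "reported"},
]
for i, results in enumerate(rounds, start=1):
    click_rate, report_rate = round_rates(results)
    print(f"round {i}: click {click_rate:.0%}, report {report_rate:.0%}")
print("repeat offenders:", repeat_offenders(rounds))
```

Tracking these values per round, rather than as a single annual snapshot, is what makes a trend, and therefore a readiness judgment, visible.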

A program ready to advance from Stage 2 to Stage 3 will show consistent completion above 85%, a measurable decline in click rates across at least two simulation cycles, and leadership capable of interpreting human risk scores rather than relying solely on completion percentages. If those metrics are not tracked, the program cannot advance, as no data foundation exists on which to build.
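The Stage 2 to Stage 3 criteria above can be expressed as a single check. In this sketch, `ready_for_stage_3` is a hypothetical helper. It assumes rates are fractions ordered from oldest to newest cycle, and it reads "a measurable decline in click rates across at least two simulation cycles" as the click rate falling in each of the two most recent cycle-over-cycle comparisons, which is one reasonable interpretation among several.

```python
def ready_for_stage_3(
    completion_rates: list,
    click_rates: list,
    leadership_uses_risk_scores: bool,
    completion_floor: float = 0.85,
) -> bool:
    """Check the three Stage 2 -> Stage 3 signals (illustrative interpretation).

    Rates are fractions (0.0-1.0), oldest cycle first.
    """
    # Without enough tracked history there is no data foundation to judge from.
    if not completion_rates or len(click_rates) < 3:
        return False
    consistent_completion = all(r > completion_floor for r in completion_rates)
    # Click rate must fall in each of the last two cycle-over-cycle comparisons.
    older, middle, latest = click_rates[-3:]
    declining_clicks = older > middle > latest
    return consistent_completion and declining_clicks and leadership_uses_risk_scores


print(ready_for_stage_3([0.90, 0.88, 0.92], [0.20, 0.15, 0.11], True))
```

The function returns `False` when history is too short, mirroring the point that a program without tracked metrics has nothing to advance from.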

What Is the Difference Between a Compliance-Focused and a Behavior-Change-Focused Security Awareness Program?

A compliance-focused program measures activity, including training completion rates, policy acknowledgments, and audit pass/fail records, but does not change what employees actually do when confronted with a real threat.

A behavior-change-focused program measures outcomes such as phishing click rates over time, repeat clicker rates, and the frequency with which employees report suspicious messages.

The practical difference becomes apparent under real attack conditions: an employee who has completed annual training is not equivalent to one whose behavior has been shaped by repeated, role-specific simulations and timely microlearning. Compliance documents that training occurred; behavioral change determines whether an attack succeeds.

What Is the Minimum Maturity Level Required to Satisfy SOC 2, HIPAA, or PCI-DSS Security Awareness Requirements?

SOC 2, HIPAA, and PCI-DSS each require documented security awareness training, but none prescribe a specific maturity level. In practice, a Stage 2 program, with training records, a phishing simulation history, and policy acknowledgment logs, satisfies the documentation requirements most auditors look for across all three frameworks.

PCI-DSS 4.0 requires annual training and role-specific content for personnel who handle cardholder data, aligning with Stage 2 to Stage 3 capabilities. Organizations that treat compliance as a ceiling rather than a floor tend to plateau at Stage 1, where they pass audits on paper but carry measurably higher human risk in practice.

How Can Security Leaders Make a Business Case to Leadership for Advancing Security Awareness Program Maturity?

The most effective business case frames maturity advancement as measurable, financially quantified risk reduction rather than as a training investment. Present leadership with the current phishing click rate, the repeat clicker count, and the percentage of employees who report suspicious messages. Those three numbers serve as leading indicators of breach probability. Pair them with the cost of advancing one maturity stage and an estimate of the breach losses that advancement is expected to avoid, and the ROI calculation becomes concrete rather than conceptual.
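The ROI arithmetic can be made explicit with annualized loss expectancy (ALE), a common risk-quantification formula: expected annual loss equals the annual probability of a breach multiplied by its cost. The sketch below is an illustration, not a pricing model. Converting click, repeat-clicker, and report rates into a breach probability is organization-specific, so `maturity_roi` (a hypothetical helper) takes the current and target probabilities as inputs instead of deriving them. Only the $4.44 million figure comes from this article (the IBM Cost of a Data Breach Report 2025 global average); the probabilities and advancement cost are made-up inputs.

```python
def annualized_loss(p_breach: float, breach_cost: float) -> float:
    """ALE = annual breach probability x cost per breach."""
    return p_breach * breach_cost


def maturity_roi(
    breach_cost: float,
    p_breach_current: float,
    p_breach_target: float,
    advancement_cost: float,
) -> float:
    """ROI of advancing one maturity stage, as a fraction (0.5 means 50%).

    The probabilities are the organization's own estimates (hypothetical
    inputs); this function does not derive them from simulation metrics.
    """
    if advancement_cost <= 0:
        raise ValueError("advancement_cost must be positive")
    avoided_loss = (annualized_loss(p_breach_current, breach_cost)
                    - annualized_loss(p_breach_target, breach_cost))
    return (avoided_loss - advancement_cost) / advancement_cost


# Hypothetical inputs; only the $4.44M average breach cost comes from the article.
roi = maturity_roi(
    breach_cost=4_440_000,
    p_breach_current=0.10,
    p_breach_target=0.06,
    advancement_cost=100_000,
)
print(f"ROI: {roi:.0%}")
```

With these inputs the avoided annual loss is about $177,600 against a $100,000 cost, an ROI of roughly 78%. Keeping the probability estimates as explicit inputs makes the assumptions visible to leadership rather than buried in a single headline number.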

What Are the Most Common Reasons Organizations Get Stuck Between Maturity Stages?

The four most common barriers are:

- The absence of a dedicated security awareness role, as programs stall without clear ownership

- Lack of leadership buy-in, since advancement requires budget and cross-functional access that security teams cannot secure independently

- Budget allocated entirely to technical controls rather than human risk

- Metrics that never progress beyond completion rates

Organizations stuck between Stage 2 and Stage 3 most often lack the role-specific training and triggered microlearning infrastructure needed to act on simulation data. Those stuck between Stage 3 and Stage 4 typically have strong training programs but lack cross-functional stakeholders; without HR, legal, and finance involved, cultural embedding remains out of reach.

How Should Security Awareness Maturity Be Adapted for Remote or Hybrid Workforces?

Remote and hybrid workforces require multi-channel simulation coverage because the attack surface expands beyond corporate email. Employees working remotely are primary targets for vishing (voice phishing) via personal devices, smishing (SMS phishing) via text messages, and deepfake video attacks during video conferences. A program designed for in-office, email-centric threats leaves hybrid workers underprepared.

Role-specific training must account for the home-office context, including personal-device risks, shadow IT use, and the absence of in-person verification norms that reduce fraud in physical environments. Behavioral analytics should segment risk scores by work location where possible, so that remote-specific susceptibility patterns surface in reporting.
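Segmenting by location amounts to grouping individual risk scores before averaging them. This sketch assumes a hypothetical export in which each employee record is a dictionary with a `risk_score` and an optional `location` field. Records without a location fall into an `"unknown"` bucket so they are not silently dropped.

```python
from collections import defaultdict
from statistics import mean


def risk_by_location(employees: list) -> dict:
    """Average risk score per work location (hypothetical record schema)."""
    scores_by_location = defaultdict(list)
    for record in employees:
        # Missing locations are grouped rather than discarded.
        location = record.get("location", "unknown")
        scores_by_location[location].append(record["risk_score"])
    return {loc: mean(scores) for loc, scores in scores_by_location.items()}


employees = [
    {"id": "e1", "location": "remote", "risk_score": 70.0},
    {"id": "e2", "location": "remote", "risk_score": 50.0},
    {"id": "e3", "location": "office", "risk_score": 30.0},
    {"id": "e4", "risk_score": 40.0},  # no location recorded
]
print(risk_by_location(employees))
```

Averages can hide small, high-risk groups, so a production report would likely also show group sizes; the sketch keeps only the mean for brevity.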

How Can Security Awareness Maturity Data Be Presented to a Board or Executive Team to Drive Budget Decisions?

Boards respond to risk in financial terms rather than training metrics. Security leaders should present three data points in place of completion-rate percentages:

- The current phishing click rate as a proxy for breach probability

- The cost of a successful phishing-initiated breach at the organization's revenue scale

- The trend line that shows improvement in click or report rates over the preceding 12 months
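The third data point, the 12-month trend, is often summarized as the slope of a least-squares line through monthly values. The sketch below computes that slope by hand; on Python 3.10 and later, `statistics.linear_regression` returns the same slope. The monthly click rates are hypothetical.

```python
def monthly_trend(rates: list) -> float:
    """Least-squares slope of a monthly series, in rate units per month."""
    n = len(rates)
    if n < 2:
        raise ValueError("need at least two months of data")
    x_mean = (n - 1) / 2  # months are indexed 0..n-1
    y_mean = sum(rates) / n
    numerator = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(rates))
    denominator = sum((x - x_mean) ** 2 for x in range(n))
    return numerator / denominator


# Hypothetical 12 months of phishing click rates (fractions of recipients).
click_rates = [0.21, 0.20, 0.19, 0.19, 0.17, 0.16, 0.15, 0.15, 0.13, 0.12, 0.12, 0.11]
slope = monthly_trend(click_rates)
print(f"click rate changes by {slope * 100:+.2f} percentage points per month")
```

For click rates, a negative slope is the improvement to show the board; for report rates, the desired direction is positive.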

Security leaders should also align the maturity stage with the organization's cyber insurance posture, since higher-maturity programs produce documented behavioral evidence that supports insurance negotiations. A security culture score below 60 reflects measurable organizational risk, while a score above 80 reflects an investment with demonstrable returns. Framing maturity advancement as a risk reduction asset rather than a training budget line shifts the conversation from cost to capital allocation.

How Does an Organization's Size or Industry Affect Which Maturity Stage Is Realistic?

Organization size affects resourcing, and industry affects regulatory pressure, with both factors shaping where a realistic maturity ceiling sits. Large enterprises in financial services and healthcare typically enter at Stage 2 due to regulatory compliance requirements such as PCI DSS and HIPAA, and have the resources to reach Stage 3 or Stage 4 within two to three years.

Small and medium-sized businesses (SMBs) with no dedicated security awareness role often remain at Stage 1 for extended periods, as program ownership falls to an already stretched IT function.

Highly regulated industries face stronger external incentives to advance because audit exposure creates tangible risk at lower maturity levels. For most organizations with fewer than 200 employees, Stage 3 represents a realistic and meaningful target, as achieving a fully metrics-driven culture at Stage 5 typically requires dedicated program staff and behavioral analytics infrastructure.

Does a Higher Security Awareness Maturity Level Reduce Cyber Insurance Premiums?

A higher maturity level strengthens an organization's position in the underwriting process, as insurers are increasingly evaluating behavioral evidence rather than self-attestation. Carriers now assess phishing simulation frequency, MFA adoption rates, training completion records, and incident response capabilities when pricing policies and setting coverage conditions.

A program at Stage 3 or above generates the documented simulation history, behavioral metrics, and role-specific training records that satisfy insurer questionnaires with verifiable data rather than checkbox responses. Whether this translates to lower premiums depends on the individual carrier and policy structure, but higher-maturity programs reduce the factors that trigger premium increases and coverage restrictions.

How Does Advancing Maturity Reduce Susceptibility to AI-Generated Phishing and Deepfake Attacks?

Advancing maturity introduces multi-channel simulation across email, vishing, smishing, and deepfake video, giving employees direct practice recognizing AI-era attacks.

A Stage 1 program built on annual email phishing simulations does not prepare a finance employee to recognize an AI-cloned executive voice requesting a wire transfer or a deepfake participant on a video conference call. Programs at Stage 3 and above address this by delivering role-specific training against the exact attack vectors that high-risk employee groups face.

The data from those simulations feeds behavioral risk scores, creating a continuous feedback loop that improves human detection capability as attack techniques evolve, which is precisely what makes program maturity the organizing framework for defending the human layer at scale.

See How Adaptive Security Supports Every Stage of Program Maturity

Building measurable behavioral change across a workforce requires a platform that supports the program at every stage, from Stage 1 compliance baselines to Stage 5 real-time risk scoring.

Adaptive Security's human risk platform provides security teams with the phishing simulations, security awareness training, and behavioral analytics needed to advance maturity with evidence rather than assumptions. Book a demo to see the full platform in action.

As experts in cybersecurity insights and AI threat analysis, the Adaptive Security Team is sharing its expertise with organizations.
