Picture this: One of your employees gets a Microsoft Teams message from someone claiming to be IT support. The profile looks legitimate. The Teams badge is real. The message says there's a problem with their account that needs immediate attention. The employee grants a remote access session through Quick Assist, a built-in Microsoft tool. Forty-five minutes later, attackers are inside your network, moving toward your domain controllers.

Microsoft issued a warning this month about exactly this attack pattern. It is working on enterprise networks across every sector. And most organizations have no idea it's coming.

The Nine-Step Attack Chain

What makes this attack different is the weapon it uses: Microsoft Teams itself.

Attackers create external Teams accounts and reach employees through the platform's external access (cross-tenant collaboration) feature, which allows users outside your organization to message your employees directly. Most companies have never turned this off. From that first message, the attack follows a precise nine-step chain.

The attacker poses as IT helpdesk staff, citing an urgent account issue. Once the employee engages, they are convinced to launch Quick Assist and hand over control of their machine. Think of it as giving a stranger the keys to your office and then stepping outside.

From there, the attacker runs reconnaissance using Command Prompt and PowerShell to map the network and understand their level of access. They deploy malware through DLL side-loading, hiding malicious code inside applications your security tools already trust, so it raises no flags. Registry modifications establish persistence, ensuring the attacker's access survives a reboot. HTTPS-based command-and-control traffic then lets them communicate back to their own servers, disguised as ordinary web activity.

Lateral movement follows via Windows Remote Management (WinRM), a legitimate administrative protocol that attackers use to pivot from one machine to others across the network, targeting domain controllers: the servers that control access to virtually everything in your organization. Finally, tools like Rclone transfer sensitive data to external cloud storage, automatically filtering to move the most valuable files first.

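Almost every tool in this attack is legitimate, and most of them ship with Windows or Microsoft 365. Rclone, the one third-party utility, is a widely used open-source file sync tool. The entry point is a Teams chat. The access method is a built-in Microsoft application. The employee was simply trying to be helpful.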
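Because each step uses a trusted tool, the useful signal is the sequence rather than any single event. The sketch below is one way to express that idea, not a production detection: it flags hosts where a shell or Rclone starts shortly after Quick Assist launches. The event format (dicts with `host`, `time`, and `image` keys), the process-name sets, and the 60-minute window are all assumptions made for illustration; map them to whatever your endpoint telemetry actually provides.

```python
from datetime import datetime, timedelta

# Assumed process file names; adjust to match your own telemetry.
REMOTE_HELP = {"quickassist.exe"}
SHELLS = {"cmd.exe", "powershell.exe"}
EXFIL_TOOLS = {"rclone.exe"}


def flag_suspicious_hosts(events, window=timedelta(minutes=60)):
    """Return {host: [reasons]} for hosts where a shell or exfiltration
    tool starts within `window` after a Quick Assist launch.

    `events` is an iterable of dicts with the assumed keys
    "host" (str), "time" (datetime), and "image" (process file name).
    """
    last_assist = {}  # host -> time of the most recent Quick Assist launch
    findings = {}     # host -> list of human-readable reasons
    for ev in sorted(events, key=lambda e: e["time"]):
        host, image = ev["host"], ev["image"].lower()
        if image in REMOTE_HELP:
            last_assist[host] = ev["time"]
            continue
        started = last_assist.get(host)
        if started is None or ev["time"] - started > window:
            continue  # no recent remote session on this host
        if image in SHELLS:
            findings.setdefault(host, []).append(f"shell: {image}")
        elif image in EXFIL_TOOLS:
            findings.setdefault(host, []).append(f"exfil tool: {image}")
    return findings


# Hypothetical sample data: ws-01 follows the attack pattern, ws-02 does not.
t0 = datetime(2025, 1, 1, 9, 0)
sample = [
    {"host": "ws-01", "time": t0, "image": "QuickAssist.exe"},
    {"host": "ws-01", "time": t0 + timedelta(minutes=5), "image": "powershell.exe"},
    {"host": "ws-01", "time": t0 + timedelta(minutes=40), "image": "rclone.exe"},
    {"host": "ws-02", "time": t0, "image": "powershell.exe"},
]
result = flag_suspicious_hosts(sample)
```

In this sample only `ws-01` is flagged, because the PowerShell launch on `ws-02` has no preceding Quick Assist session. A real rule would also need to account for legitimate IT sessions, which is one more reason to control who is allowed to start them.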

Why This Attack Keeps Working

Employees are conditioned to respond to IT quickly, especially when there's urgency around an account issue. That trained helpfulness is exactly what attackers exploit.

Microsoft Teams is perceived as an internal, trusted environment. A phishing email still triggers some learned skepticism for most employees. A Teams message feels different. It feels like it comes from inside the building. The attacker already holds the trust advantage before typing a single word.

When a security team reviews the logs after the fact, everything looks normal, because technically it is. The only variable is who was behind the keyboard. That's what makes this so hard to catch and so easy to repeat.

The gap here is training. Most security awareness programs still focus on email phishing. They have not caught up to the reality that attackers have moved onto collaboration platforms, and employees have no frame of reference for what a suspicious Teams interaction looks like.

The Deepfake Layer That's Already Here

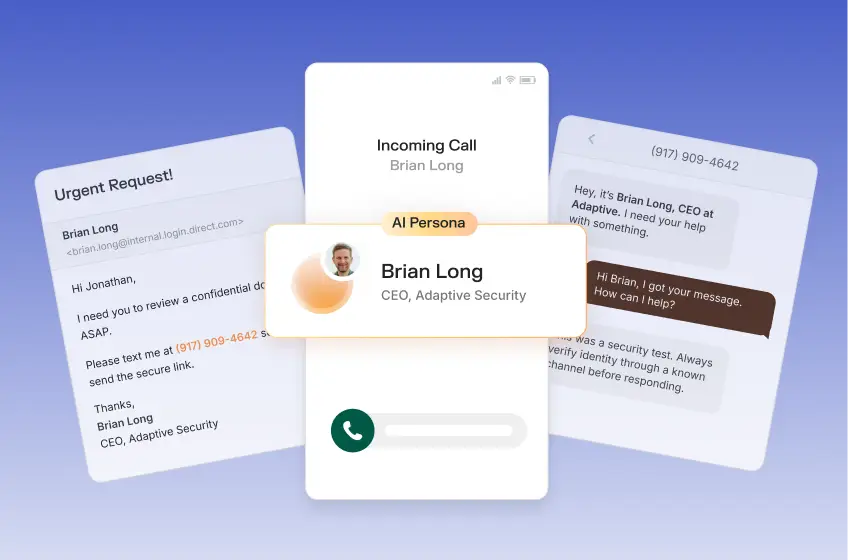

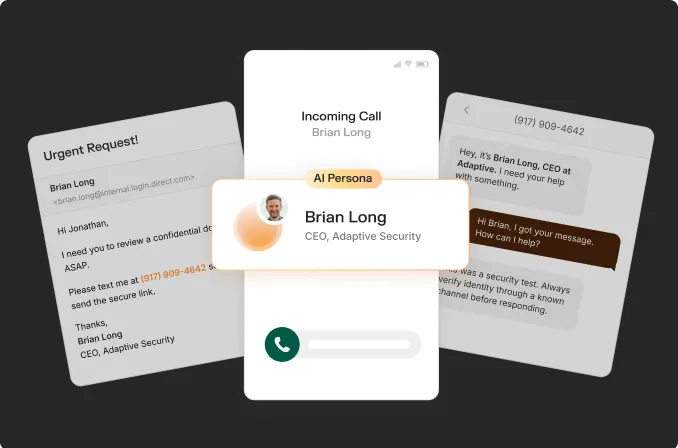

The Teams impersonation attack described above is text-based. The employee reads a message, makes a judgment call in a few seconds, and the attack either succeeds or it fails. That alone is a serious and growing problem.

Now consider what happens when voice enters the conversation.

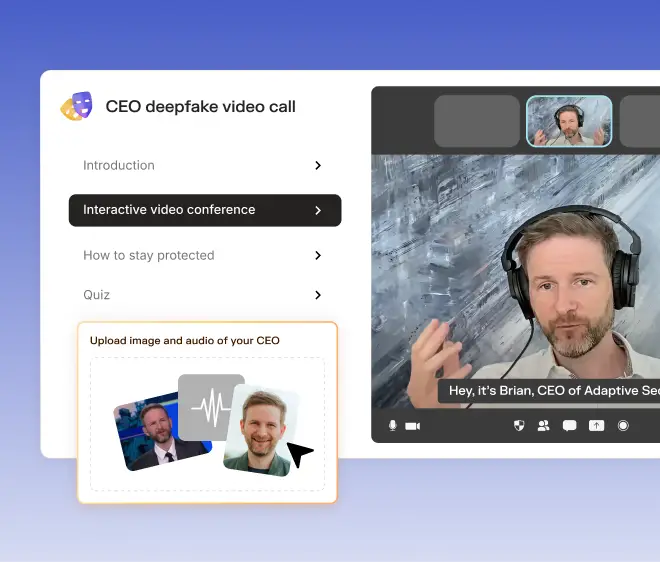

AI voice cloning tools can generate a convincing replica of a person's voice from as little as three seconds of audio. That audio is easy to find: a LinkedIn video, a company webinar, a public voicemail greeting. In more sophisticated versions of this attack, the Teams chat is followed by a phone call from someone who sounds exactly like your CISO or CTO instructing the employee to cooperate. At that point, the employee is not just reading a message from a stranger. They are hearing a familiar voice from a person they trust.

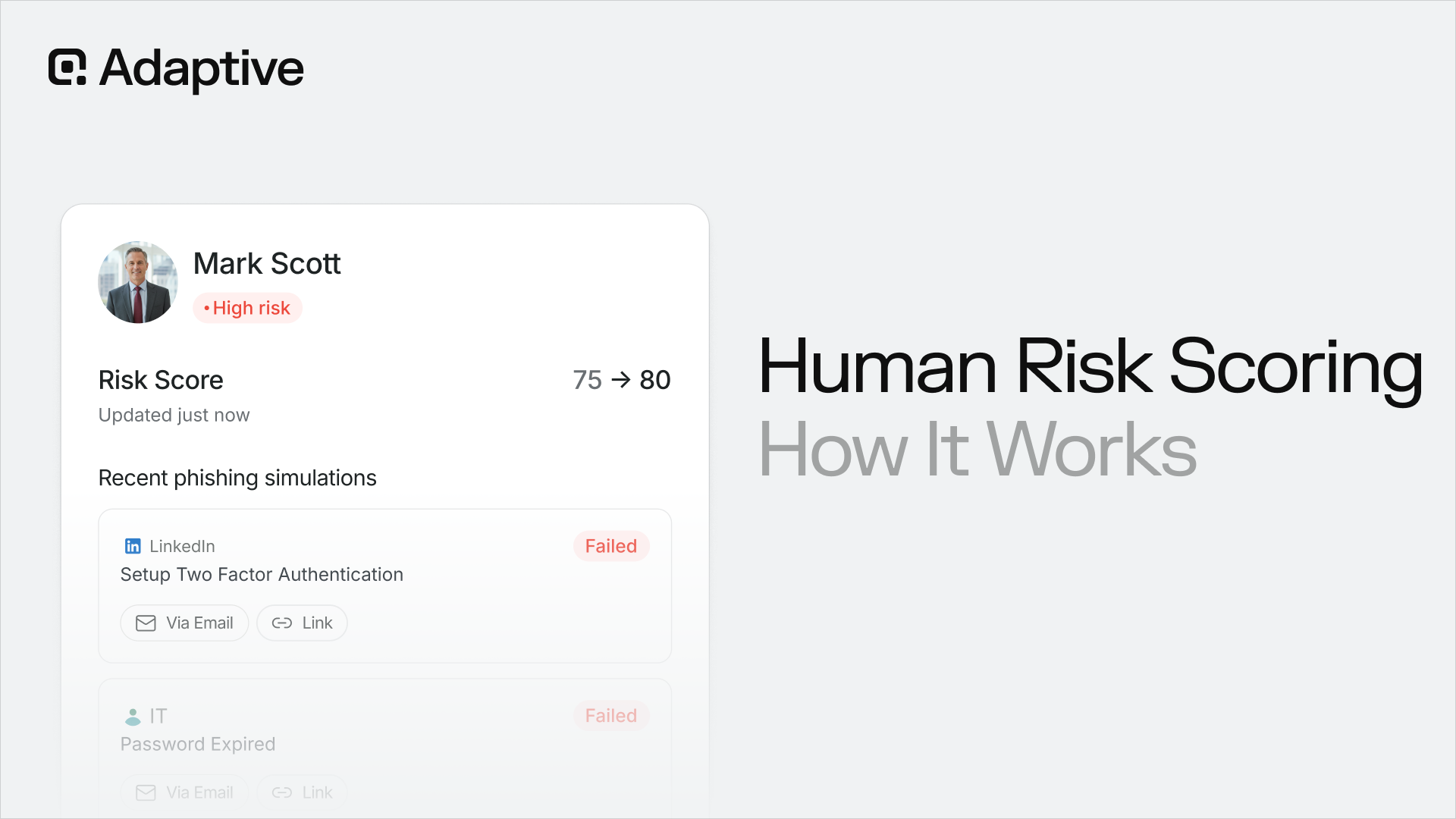

Deepfake voice technology has already been used to impersonate the Ukrainian Foreign Minister in a call with a U.S. Senate committee chairman. Adaptive Security has tracked a 17-fold increase in deepfake attacks in the U.S. from 2023 to 2024, with over 100,000 incidents recorded last year alone. Eighteen months ago, roughly one in ten security executives had encountered a deepfake attack at their company. Today, it is closer to half.

The Teams helpdesk impersonation attack is the entry-level version of AI-enhanced social engineering. The voice-augmented version is already in use. The video version is coming fast.

Six Steps Every CISO Should Take Now

These steps apply whether or not you have seen this attack at your organization yet.

- Review your external Teams access policies. Microsoft Teams allows external users to message your employees by default through cross-tenant collaboration settings. Most organizations have never examined these. Determine which employees genuinely need that access, restrict it for everyone else, and consider disabling it entirely for high-risk roles in finance, legal, and IT.

- Lock down remote assistance tools. Quick Assist does not belong on every machine in your enterprise. Restrict it through group policy so sessions can only be initiated by your own IT team, and audit all remote access tools across your environment. Anything not actively managed is an open door.

- Tighten Windows Remote Management (WinRM). WinRM should only be accessible from designated, monitored systems. If it is open broadly across your network, that is an active attack surface. Restrict it to specific jump servers and admin workstations.

- Train employees on collaboration platform threats. Your current phishing training does not prepare employees to be suspicious of a Teams message. Add explicit instruction on what a real IT interaction looks like at your organization, and establish a clear policy: legitimate IT staff do not initiate remote sessions by cold-messaging an employee.

- Require out-of-band verification for high-trust requests. Any request for system access should be confirmed through a second, independent channel. If an employee receives a Teams message asking for remote access, they should call IT directly using a number they already have. As deepfake voice technology becomes more accessible, that verification habit becomes one of the highest-value behaviors you can build across your workforce.

- Simulate this attack before a real one arrives. Run a controlled helpdesk impersonation exercise: a Teams-based social engineering scenario with a remote access request. The results will tell you exactly where your human risk is highest. Security teams that run these simulations consistently find the gaps before attackers do.
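The WinRM recommendation above can be checked against network flow data. The following is a minimal sketch, assuming connection records are available as `(src_ip, dst_ip, dst_port)` tuples and that the approved jump servers and admin workstations can be listed as IP ranges. The addresses and the `APPROVED_SOURCES` list are hypothetical placeholders. Ports 5985 and 5986 are the default WinRM HTTP and HTTPS listeners; if your environment uses custom ports, the set needs to change.

```python
import ipaddress

WINRM_PORTS = {5985, 5986}  # default WinRM HTTP and HTTPS listeners

# Hypothetical allowlist: jump servers and admin workstations.
APPROVED_SOURCES = [
    ipaddress.ip_network("10.0.50.0/28"),
    ipaddress.ip_network("10.0.60.10/32"),
]


def is_approved(src_ip, approved=APPROVED_SOURCES):
    """True if src_ip falls inside any approved network."""
    addr = ipaddress.ip_address(src_ip)
    return any(addr in net for net in approved)


def unapproved_winrm(connections, approved=APPROVED_SOURCES):
    """Return WinRM connections whose source is not on the allowlist.

    `connections` is an iterable of (src_ip, dst_ip, dst_port) tuples.
    """
    return [
        (src, dst, port)
        for src, dst, port in connections
        if port in WINRM_PORTS and not is_approved(src, approved)
    ]


# Hypothetical flow records.
flows = [
    ("10.0.50.3", "10.0.1.10", 5985),   # jump server: allowed
    ("10.0.20.77", "10.0.1.10", 5986),  # user workstation: flagged
    ("10.0.20.77", "10.0.1.10", 443),   # not WinRM: ignored
]
violations = unapproved_winrm(flows)
```

Here `violations` contains only the workstation-to-server connection on port 5986. Any non-empty result is a prompt to either tighten firewall rules or add a genuinely needed admin system to the allowlist.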

The Human Layer Is the Last Line of Defense

Technical controls reduce your attack surface. Restricting external Teams access, locking down Quick Assist, and tightening WinRM all matter. They should all be in place.

The entry point in every one of these attacks is still a person. The employee's decision to trust or question a message is made in seconds, long before any security tool gets a chance to intervene.

The organizations staying ahead of this threat are training their people to recognize what an attack looks like before they are sitting in one. As deepfake technology makes impersonation more convincing across text, voice, and video, that preparation becomes the most important investment a CISO can make.

The attacks are already happening. The only question is whether your employees are ready.
