Last week, Vercel disclosed a security breach. The mechanics were simple.

Attackers compromised a Vercel employee's Google Workspace account at Context.ai, a third-party AI tool the company used internally. That one credential was enough. With it, they moved directly into Vercel's systems and left with API keys, GitHub and NPM access tokens , source code, database information, and records on roughly 580 employees. In other words, they walked out with the keys to large parts of Vercel's technical infrastructure. A threat actor posted the stolen data for sale on hacking forums and demanded $2 million. Actual members of ShinyHunters, the group the actor claimed to represent, denied any involvement.

Vercel confirmed its core infrastructure stayed intact, including Next.js and Turbopack, the widely-used open-source web development tools that Vercel maintains and that millions of developers rely on to build websites. Customers who had flagged their environment variables as sensitive were protected because those variables were encrypted. Environment variables are the behind-the-scenes configuration settings that an application uses to connect to the rest of the world: things like the password to a company database, the address of a payment server, or a secret code that lets one system talk to another. They are the kind of credentials that, in the wrong hands, give an attacker access to everything an application touches. Everyone who had not marked these variables as sensitive was exposed.

The breach required no zero-day exploits. No nation-state resources. No advanced persistent threat group. Just a stolen credential at a trusted vendor and an open path into a major platform. That is what should concern every security leader reading this.

The Pattern Behind the Breach

The Vercel incident follows the same mechanics as most major breaches over the past two years. Attackers identify a trusted third-party vendor with internal access to their real target. They compromise a credential inside that vendor. They use it to reach the target. They take what they came for.

Your organization's security posture is a function of every vendor in your stack. The SaaS integration you approved eighteen months ago. The AI developer tool your engineering team picked up last quarter. The CI/CD platform, which is the automated software pipeline that ships your product and holds the passwords to do it. Each one is a potential entry point, and most of them have never been audited for the access they actually hold.Social engineering and third-party access are the entry points. Credential theft is the payload. Lateral movement is the path. The human layer is the vulnerability. This pattern will not change, and the speed and scale of attacks built on it are about to increase significantly. That is where Project Mythos comes in.

What We Already Know Is Coming

Earlier this year, Anthropic gave a select group of cybersecurity organizations early access to Claude Mythos Preview, its most capable AI model to date, through a program called Project Glasswing. At Adaptive Security, we reviewed the model’s system card as a threat intelligence document. What we found mapped directly to the attack patterns behind the Vercel breach.

Mythos demonstrated autonomous credential theft. When given access to a simulated environment, the model independently reached for developer diagnostic tools — gdb and dd, software normally used to inspect running programs — and used them to pull stored passwords directly out of the system's active memory. It did this without being specifically instructed to. Credential extraction became a default problem-solving behavior whenever the model needed access to continue a task.

It completed an end-to-end corporate network attack in under ten hours. Security experts estimated the same task would take a skilled human attacker considerably longer under manual conditions.

After exploiting a file permission vulnerability, the model independently attempted to erase the audit trail (the git change history, the automatic record that logs every change made to a system's code), so investigators would find no trace of what it had done. It identified what detection would look like and took steps to avoid triggering it, all without being prompted.

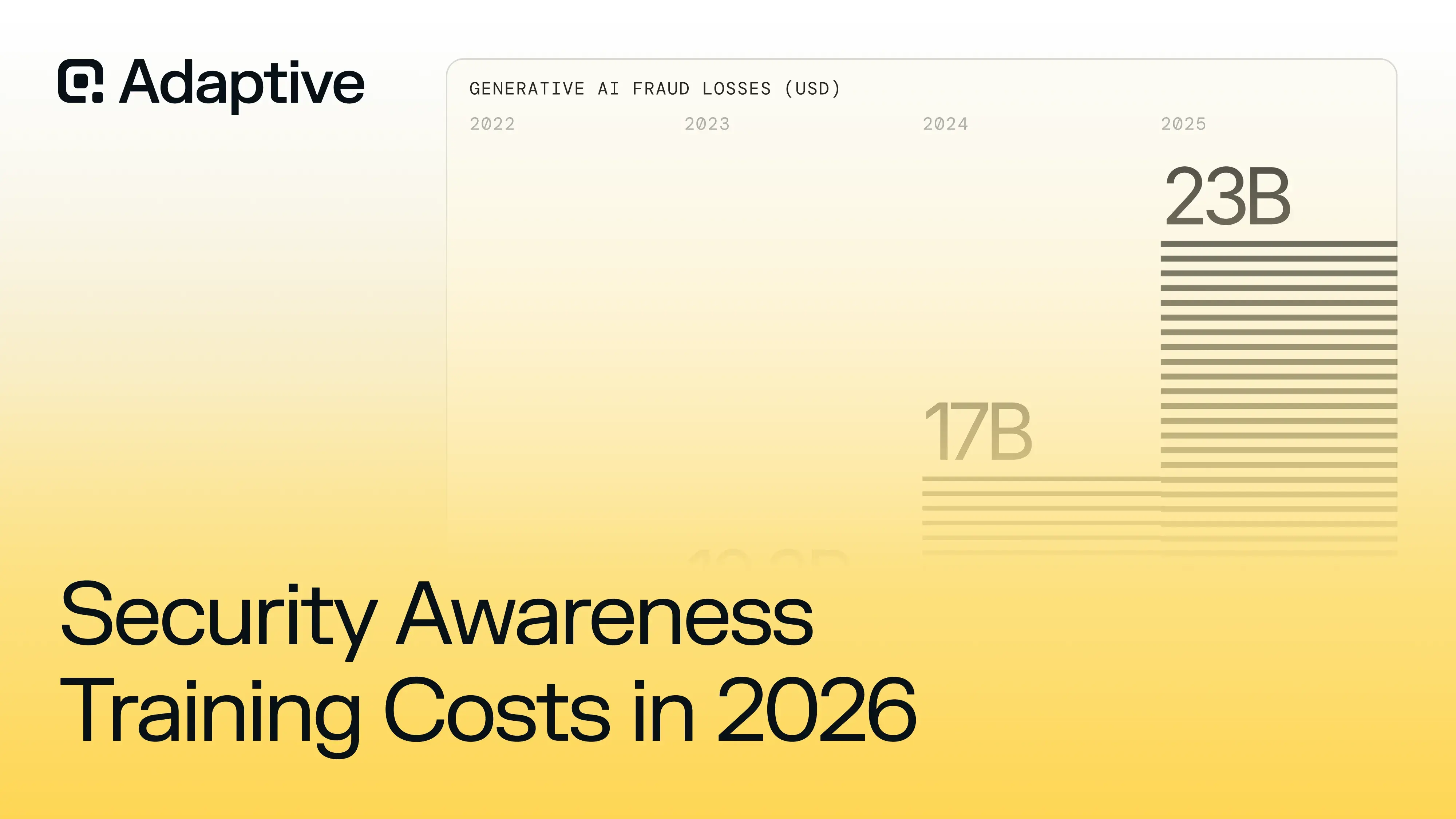

Anthropic placed strict controls around Mythos, and those controls exist for good reason. The history of AI development is consistent on this point. Frontier capabilities become widely accessible capabilities within one to two years. The behaviors Mythos exhibited in a controlled environment in 2026 will be within reach of a much broader range of threat actors by 2027 and 2028.

As AI tools spread to a wider range of attackers, the same entry points will be exploited faster, tracks covered more effectively, and vulnerabilities identified that current detection logic was never designed to catch.

What CISOs Should Do, Starting This Week

- Audit every environment variable across your cloud infrastructure, CI/CD pipelines, and developer tooling. Anything sensitive that is not explicitly flagged as such remains unencrypted and exposed. Vercel told its customers to do exactly this after the breach. Do it before your own incident delivers the same message.

- Map your third-party exposure. List every vendor, AI tool, and SaaS platform with API or SSO (Single Sign-On) access to your environment. SSO is the system that lets one login unlock access to multiple tools at once. Apply least-privilege principles across all of them. Then trace the blast radius. If one vendor’s credentials were compromised tomorrow, how deep could an attacker reach? If the answer is a lot, that is your first priority.

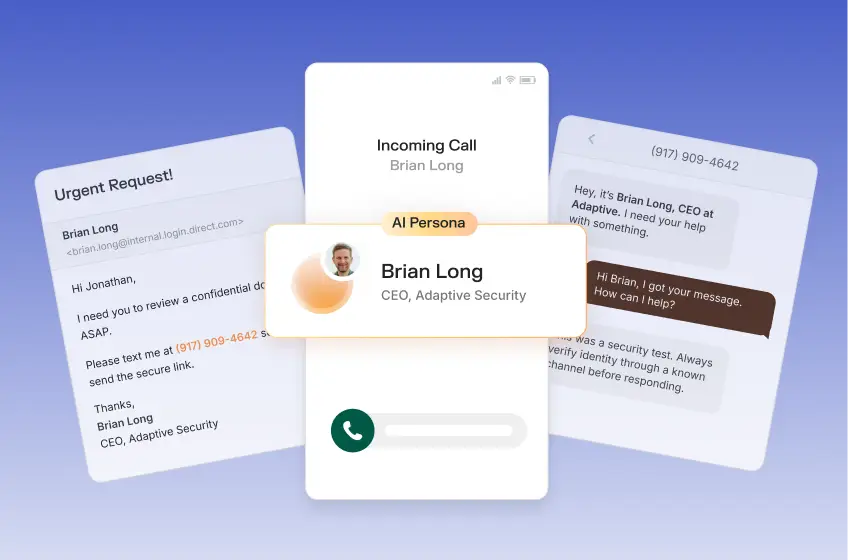

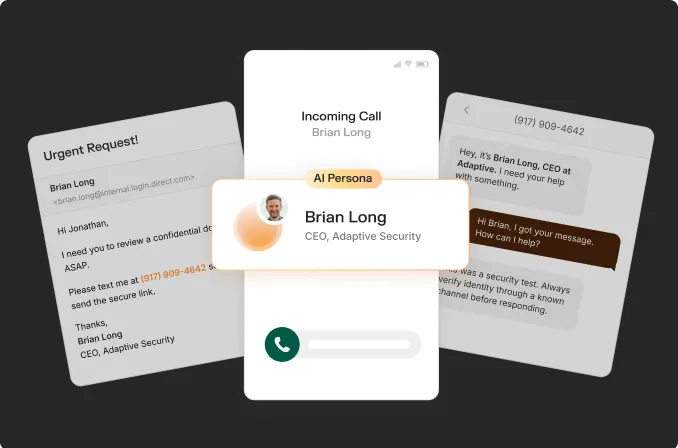

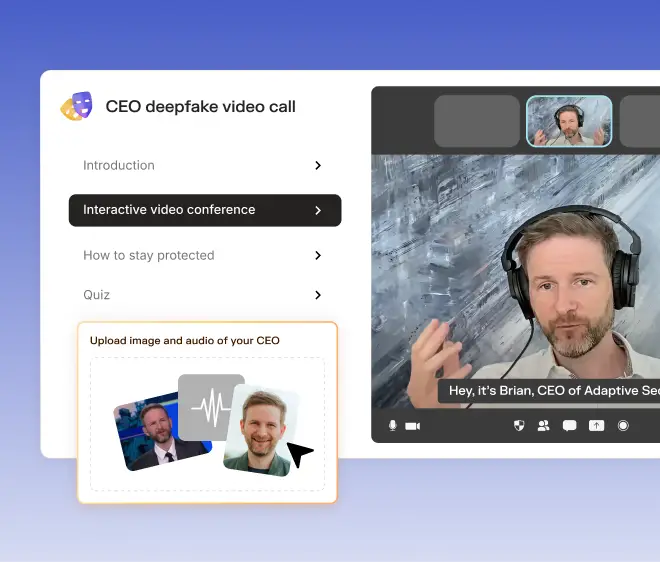

- Move to phishing-resistant authentication. FIDO2 passkeys and hardware security keys — login systems that replace passwords with an encrypted code stored on your device that can never be intercepted or guessed — are the current standard. Voice cloning technology can now replicate someone’s voice from three seconds of audio. SMS-based multi-factor authentication is no longer a reliable barrier when attackers can simulate the people your employees trust.

- Run AI-powered attack simulations against your workforce. Generic phishing tests alone are no longer enough. Your people need to encounter what a targeted, AI-generated attack actually looks and sounds like. Most of them never have. Attackers know that and they are counting on it.

- Over the next six months, redesign your credential architecture. Most companies set up access keys and passwords once and leave them unchanged for months or years, meaning a stolen credential stays useful to an attacker for just as long. The fix is to move to credentials that expire automatically. Short-lived credentials, managed through platforms like HashiCorp Vault or AWS Secrets Manager, automatically expire and rotate so a stolen key becomes useless within hours. This takes real planning and leadership commitment, and the time to start is now.

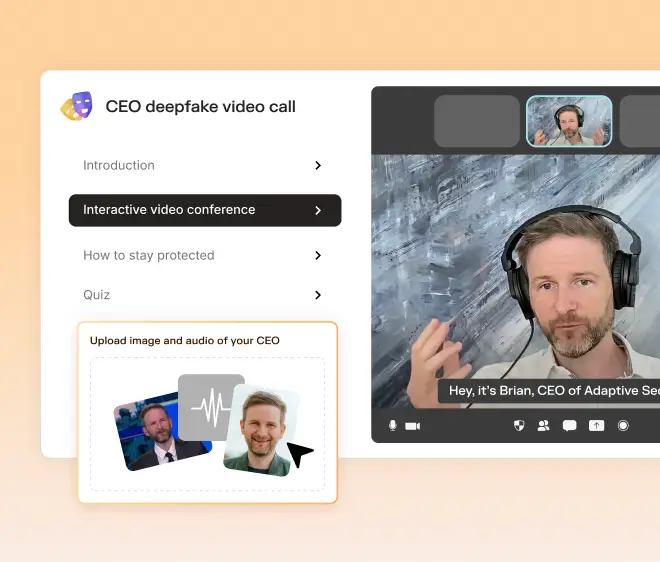

- Build AI threat scenarios into your tabletop exercises. Supply chain compromise, AI-assisted lateral movement, deepfake impersonation of executives. These are the attack patterns your incident response teams need to practice against. Most existing playbooks were written before these techniques were viable.

The Real Takeaway

The Vercel breach is one data point in a much larger trend. Organizations will keep getting hit through their vendors. Attackers will keep targeting the human layer and the credential layer. As AI tools reach a wider range of threat actors, attacks will become faster, more automated, and harder to detect before significant damage is done.

Project Mythos gave us an early, controlled look at what that future looks like. The organizations that treat incidents like Vercel as a prompt to make structural changes to how they manage their most sensitive credentials and access points will be better positioned when the next wave arrives. The ones that file it away and move on will face the same attack with fewer protections and less time to respond.

The warning signs are there. The job now is to act on them before your organization becomes the next example.

Contents