Google's May 2026 disclosure puts a concrete data point on what the security industry has been watching for. Here is what it means for programs already running.

In early May 2026, Google disclosed something the security industry had been tracking closely: a threat actor used an artificial intelligence system to discover a live vulnerability and generate a working exploit. The target was a 2FA mechanism. The deployment was real, in the wild, at scale.

This is the first publicly confirmed case of an AI system identifying a zero-day and building the exploit that was then used in a mass exploitation campaign.

The question worth asking is specific: what exactly changed, and what does it mean for security programs already in place? For programs that are running well, the answer is a few targeted adjustments.

What Google Confirmed

An unknown threat actor used an AI system to handle two phases of attack development that have traditionally required skilled human researchers: finding the vulnerability, then writing the code to exploit it.

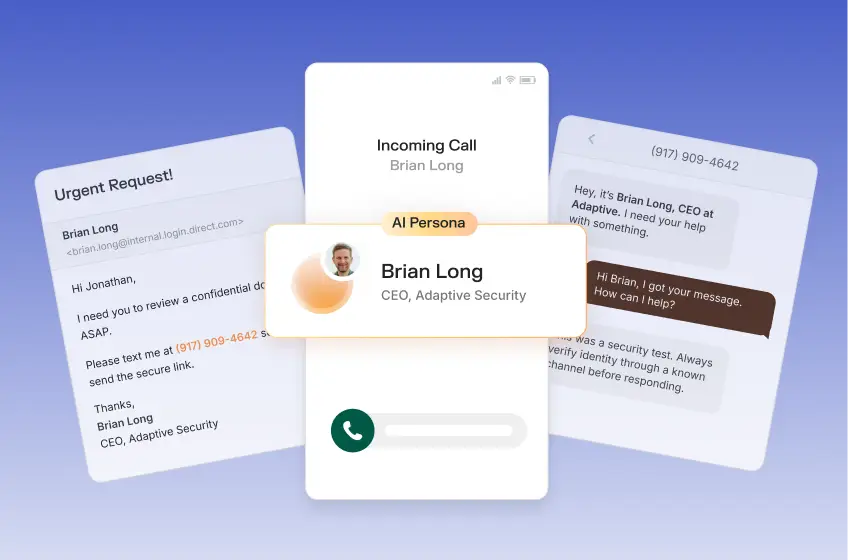

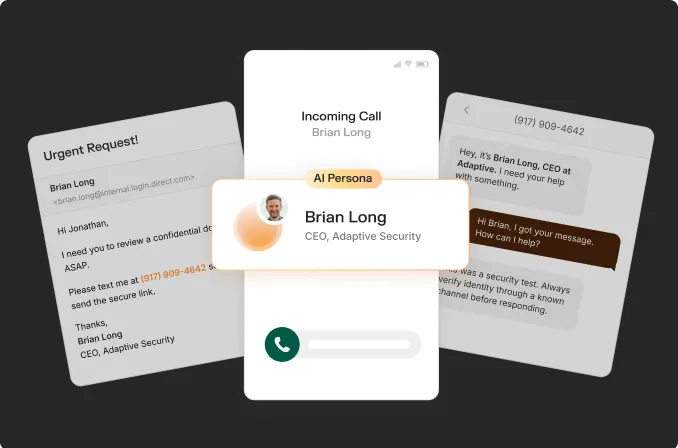

Prior AI-assisted attacks used large language models to write phishing emails, clone executive voices for phone-based social engineering, and generate personalized attack scripts. All serious threats. This event goes further. The AI handled both the vulnerability discovery and the exploit engineering, and the result was then deployed at scale.

Attackers have been trying to get around two-factor authentication for years. They have used fake login pages, phone number hijacking, and push notification spam to do it. Security teams know these techniques well and have built defenses around them. What is significant here is that an AI found a brand new way in, one nobody had seen before, and then built the attack on its own.

The Assumption Worth Revisiting

Security teams have always worked from a rough assumption about how much time they have between a vulnerability being publicly announced and attackers actually using it. That window, however long or short, has driven decisions about how fast to patch, how to set up detection, and where to focus resources.

If AI can shorten the time it takes to go from finding a vulnerability to exploiting it, those time estimates need a fresh look.

This reassessment is targeted and specific. Teams that already patch login and identity systems on a fast cycle are in good shape. A useful exercise this week: look at the vulnerability types your team dealt with most over the last year and ask what would have happened if attackers had moved twice as fast. The answer shows where your current patching schedule carries the most risk.
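One way to run that exercise is a few lines of Python over your own patch history. This is a minimal sketch, not a measurement method: the `PatchRecord` fields, the vulnerability labels, and every number in `history` are hypothetical, and the disclosure-to-exploit window is an assumption you would have to estimate for your own environment.

```python
from dataclasses import dataclass


@dataclass
class PatchRecord:
    vuln_type: str          # hypothetical category label
    days_to_patch: float    # days from public disclosure until the patch was deployed
    days_to_exploit: float  # ASSUMED days from disclosure until exploitation begins


def exposure_days(record: PatchRecord, attacker_speedup: float = 1.0) -> float:
    """Days a system stays unpatched after exploitation is assumed to begin."""
    exploit_starts = record.days_to_exploit / attacker_speedup
    return max(0.0, record.days_to_patch - exploit_starts)


def exposure_by_type(records, attacker_speedup: float = 1.0) -> dict:
    """Total exposure days per vulnerability type for a given attacker speedup."""
    totals = {}
    for r in records:
        totals[r.vuln_type] = totals.get(r.vuln_type, 0.0) + exposure_days(r, attacker_speedup)
    return totals


# Illustrative numbers only; replace with your own ticketing data.
history = [
    PatchRecord("auth-bypass", days_to_patch=10, days_to_exploit=14),
    PatchRecord("auth-bypass", days_to_patch=6, days_to_exploit=14),
    PatchRecord("vpn-rce", days_to_patch=20, days_to_exploit=30),
    PatchRecord("web-xss", days_to_patch=30, days_to_exploit=60),
]

baseline = exposure_by_type(history, attacker_speedup=1.0)
doubled = exposure_by_type(history, attacker_speedup=2.0)

for vuln_type in baseline:
    print(f"{vuln_type}: {baseline[vuln_type]:.0f} -> {doubled[vuln_type]:.0f} exposure days")
```

With these made-up inputs, every category has zero exposure at the baseline pace, and two of the three pick up exposure once the assumed exploit window is halved. The categories that move the most are the ones whose patching schedule has the least slack.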

Three things matter most here.

- Patching login-facing systems faster.

- Having detection in place that catches unusual authentication activity.

- Having a clear plan for who does what the moment a critical vulnerability drops.

Teams with all three already running have the right foundation. The question worth asking is whether your current pace matches what we now know about how fast AI can move.

Where to Look in Your Authentication Stack

The security work your team has already done to protect login systems is the right foundation, and this week's news confirms it. The organizations with the most exposure right now are the ones that set up their login security years ago and have left it alone since.

If your organization is still using text message codes or authenticator app one-time passwords on systems that face the internet, those are the areas worth prioritizing first. Teams that have been making the case internally for moving to hardware security keys or passkeys now have a stronger argument for getting that on this year's roadmap.
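A quick inventory pass can turn that prioritization into a list. The sketch below assumes a hypothetical inventory format (`System` records with a `mfa_method` label); the system names are invented, and ranking text message codes ahead of authenticator app codes is an illustrative choice, not something Google's disclosure prescribes.

```python
from typing import NamedTuple


class System(NamedTuple):
    name: str
    internet_facing: bool
    mfa_method: str  # hypothetical labels: "sms", "totp", "passkey", "hardware_key"


# Methods flagged above as worth prioritizing; lower number = migrate sooner.
# The relative order of "sms" and "totp" is an assumption for illustration.
MIGRATION_ORDER = {"sms": 0, "totp": 1}


def migration_candidates(systems):
    """Internet-facing systems still using text message or authenticator app codes."""
    flagged = [
        s for s in systems
        if s.internet_facing and s.mfa_method in MIGRATION_ORDER
    ]
    return sorted(flagged, key=lambda s: (MIGRATION_ORDER[s.mfa_method], s.name))


# Invented inventory for demonstration.
inventory = [
    System("vpn-gateway", True, "sms"),
    System("customer-portal", True, "totp"),
    System("hr-intranet", False, "sms"),
    System("admin-console", True, "hardware_key"),
    System("partner-api", True, "sms"),
]

candidates = migration_candidates(inventory)
for s in candidates:
    print(f"{s.name}: {s.mfa_method}")
```

Internal-only systems and systems already on hardware keys or passkeys drop out of the list, which leaves a short, ordered set to bring to a roadmap discussion.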

Detection teams have one concrete thing to check: do your current detection rules actually catch the login attack patterns being used today, including AI-assisted ones? Detection logic built a year and a half ago may miss what newer attack tools look like in practice, particularly around automated login attempts at scale and what attackers do once they get inside.
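As a rough illustration of what catching automated login attempts at scale can look like, here is a minimal sliding-window sketch. The event shape, the 60-second window, and the threshold of 20 failures are all assumptions chosen for the example; production rules would live in your SIEM and be tuned against real traffic.

```python
from collections import defaultdict, deque


def flag_bursts(events, window_seconds=60, max_failures=20):
    """Return sources with more than max_failures failed logins inside any window.

    events: iterable of (timestamp_seconds, source, success), sorted by timestamp.
    """
    recent = defaultdict(deque)  # source -> timestamps of recent failures
    flagged = set()
    for ts, source, success in events:
        if success:
            continue
        failures = recent[source]
        failures.append(ts)
        # Drop failures that have aged out of the window.
        while failures and ts - failures[0] > window_seconds:
            failures.popleft()
        if len(failures) > max_failures:
            flagged.add(source)
    return flagged


# Synthetic events: one source failing once per second, one failing every 30 seconds.
# Addresses come from the documentation-only ranges.
events = sorted(
    [(i, "203.0.113.7", False) for i in range(30)]
    + [(i * 30, "198.51.100.4", False) for i in range(5)]
)

suspicious = flag_bursts(events)
print(suspicious)
```

A per-source counter like this is only one signal. Tooling that spreads attempts across many sources would need rules keyed on the target account or on session behavior after login, which is the kind of gap the review above is meant to surface.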

Why the Human Layer Still Anchors Everything

Technical exploits almost always require a delivery mechanism. And most delivery mechanisms run through people. A credential phishing campaign, a spoofed login portal, a voice call impersonating IT support and asking for a verification code, a text message creating urgency around an authentication request: these are the vectors that give technical vulnerabilities their reach.

ISACA's 2026 Tech Trends and Priorities report, a survey of nearly 3,000 IT and cybersecurity professionals, found that AI-driven social engineering topped their list of concerns for the first time, surpassing ransomware. The 2025 Bitdefender Cybersecurity Assessment, which surveyed 1,200 security professionals across six countries, found that 63% had experienced an AI-powered attack in the past 12 months. In the United States, that number was 74%.

An employee who can identify a suspicious authentication request, recognize pressure in a live voice call, or pause on an urgent wire transfer is a detection layer with its own reach and speed. When new technical attack classes surface, the human layer provides detection coverage while engineering teams build updated models.

What Forward-Looking Teams Are Doing Right Now

Across the organizations that have moved quickly on this news, the approach is consistent: targeted reassessment of specific assumptions across a few well-defined areas.

Four questions worth prioritizing this week:

- How long does your team take to patch a critical authentication vulnerability once publicly disclosed? Given what we now know about how fast AI can move, is that timeline fast enough for your most exposed systems?

- Which internet-facing systems are still running older MFA setups with known weaknesses? A quick audit will show you the highest-priority areas to migrate first.

- Has your detection team reviewed whether your current rules catch the login attack patterns from newer tools? Detection logic tuned 18 months ago may have gaps worth closing.

- When did you last run a simulation that combined AI-generated social engineering with technical pressure? A coordinated attack pairing a spoofed voice call with an authentication bypass attempt reveals how your technical and human layers work together under real pressure.

Many programs run technical drills regularly. Running simulations that combine AI-generated social engineering with real-time pressure is where the most valuable gaps get found. The organizations doing it are building resilience that holds up as attack capabilities develop.

The Bigger Picture

AI-assisted exploitation will keep developing. The tools are cheaper, more accessible, and more capable than they were 18 months ago. Google's disclosure is a signal about the direction of this threat.

Tight authentication controls, fast patching cycles, behavioral detection, and a workforce trained to recognize AI-generated pressure tactics all reinforce one another. Organizations that treat them as a unified program are consistently better positioned when novel threats emerge.

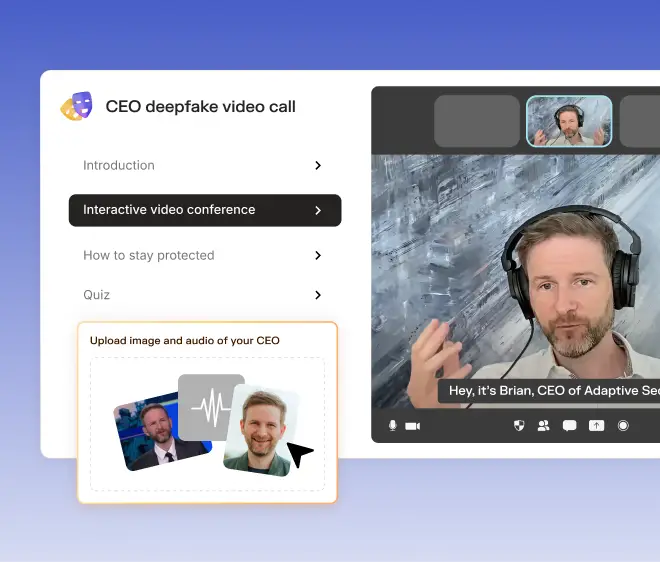

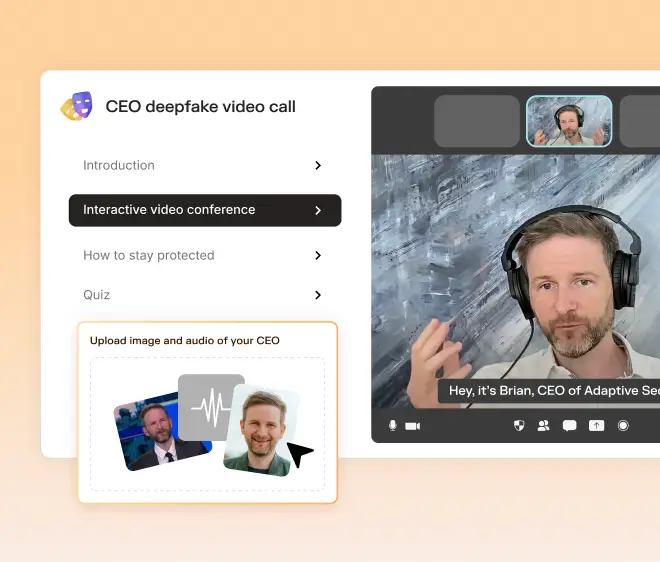

That is where Adaptive Security focuses. Our platform simulates AI-powered attacks, measures human risk at the individual and organizational level, and delivers personalized training based on what real attackers are doing right now. When new attack classes emerge, our simulations update to reflect them. We work with hundreds of enterprises across industries, and when a week like this one hits, our customers have current context on where their exposure stands.

To understand where your organization stands today, visit adaptivesecurity.com or book a demo with our team.
