The EU AI Act is now in effect, and Adaptive Security is compliant.

For security and compliance leaders at organizations operating in or selling into the EU, this matters. The EU AI Act is the world's first comprehensive legal framework regulating AI systems, and its requirements touch everything from transparency and human oversight to data governance and risk management. For AI-powered security tools, the bar is high.

What the EU AI Act Requires

The EU AI Act classifies AI systems by risk level. Tools used in security-sensitive contexts, where AI outputs can influence decisions about people or organizational risk, fall under heightened scrutiny. High-risk AI systems must meet specific requirements:

- Transparency: Users must understand they're interacting with AI and have visibility into how it works

- Human oversight: AI decisions must be reviewable and overridable by humans

- Accuracy and robustness: Systems must perform reliably and be resilient to adversarial inputs

- Data governance: Training data must meet quality and bias standards

- Technical documentation: Systems must be documented in ways that allow for auditing

Why We Built This

Security teams are under increasing pressure to vet every tool in their stack for regulatory compliance. A security platform that creates EU AI Act liability is the last thing a CISO needs.

We built compliance into Adaptive's architecture from the ground up rather than retrofitting it, which means the controls remain durable as the Act's obligations and implementing rules continue to phase in through 2027.

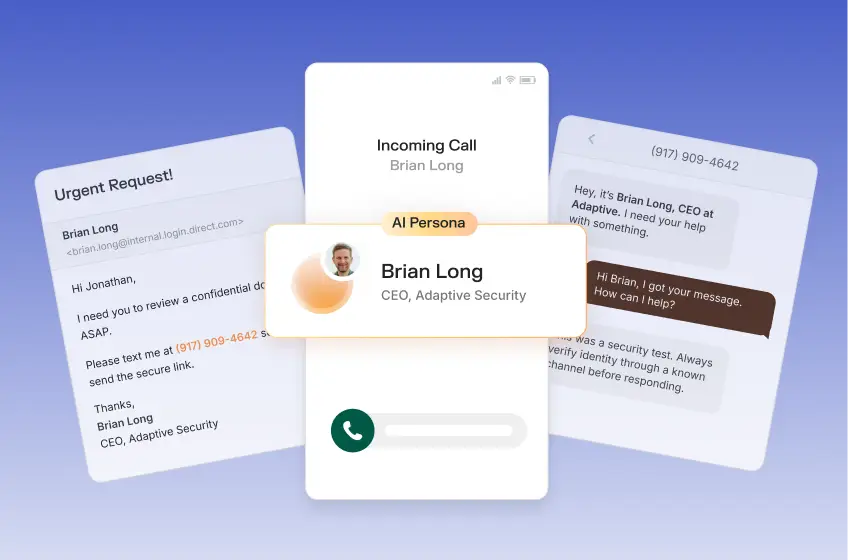

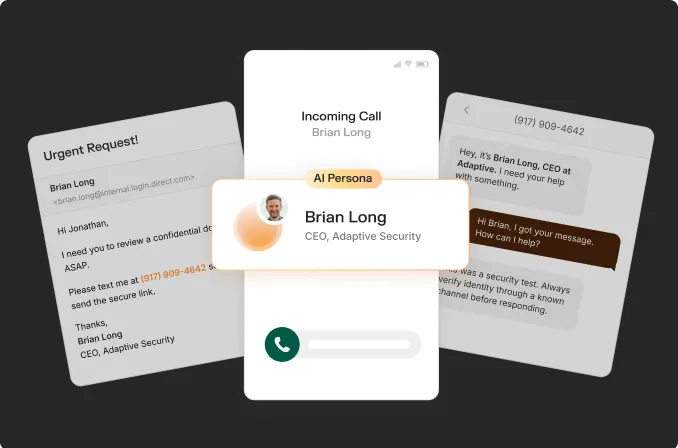

Brian Long is the CEO & Co-Founder of Adaptive Security.
