Secure Every AI Interaction.

Adaptive AI Governance gives you full visibility into AI application usage across your organization.

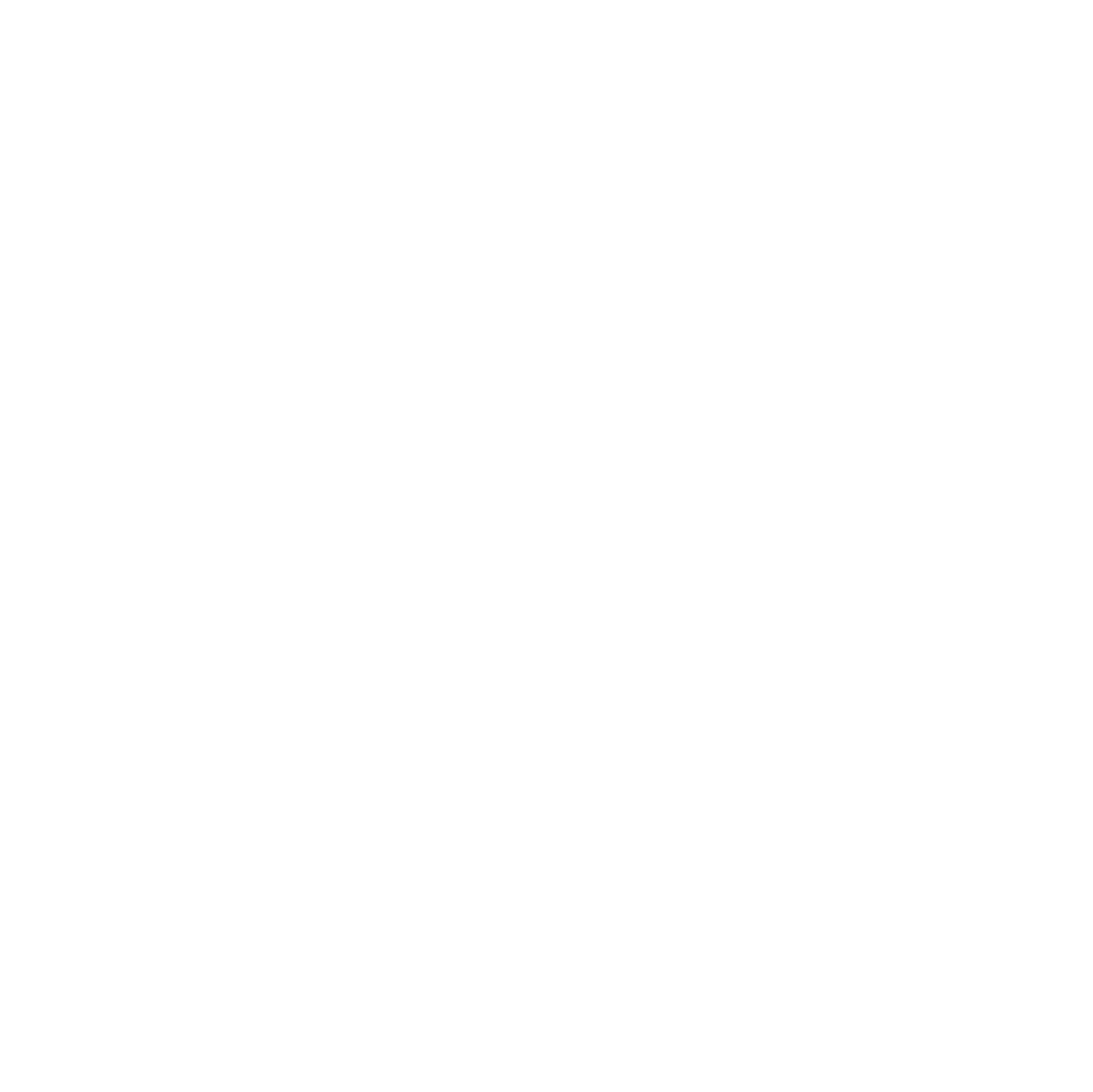

Full visibility into AI usage.

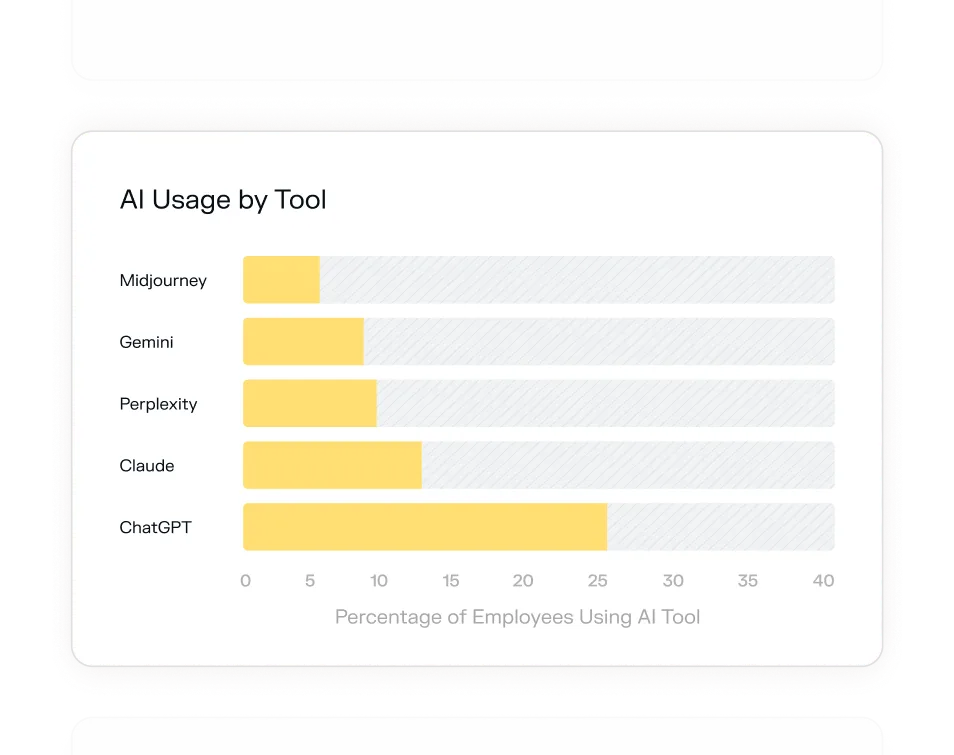

Stop data leaks into AI.

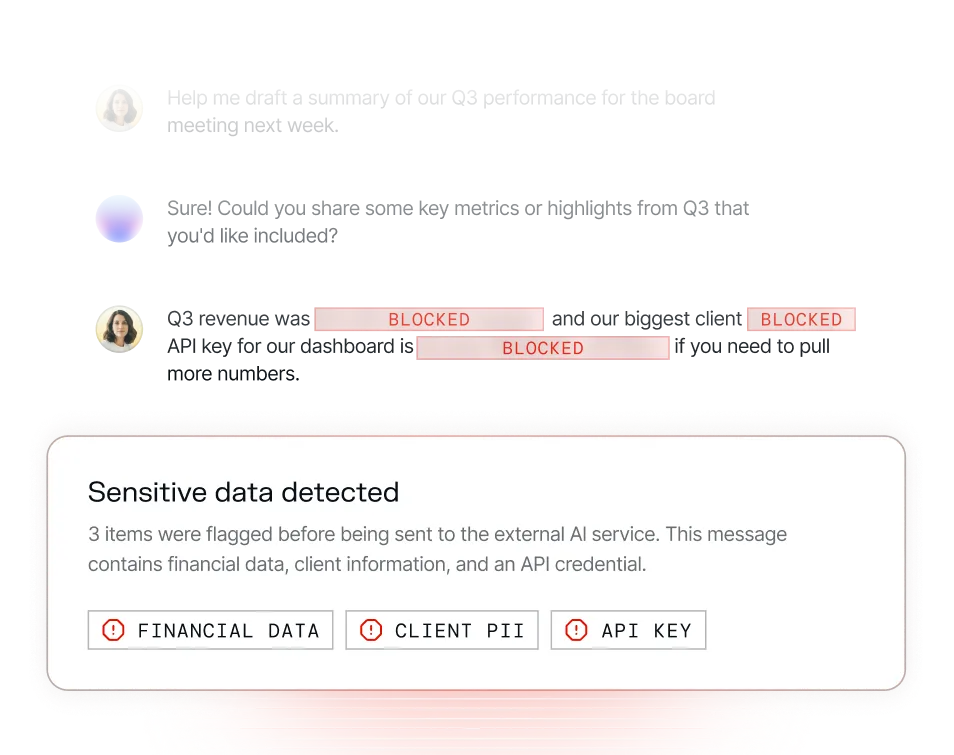

Nudge better AI habits.


Your employees are already using AI. Do you know what they're sharing?

Full visibility into your organization.

Your questions answered

AI governance refers to the policies, controls, and visibility mechanisms that organizations use to manage how employees interact with AI tools. In a security context, it means knowing which AI applications are in use, what data is being shared with them, and whether that usage aligns with company policy and compliance requirements.

Policies don't enforce themselves. Most employees using ChatGPT or Claude for work aren't thinking about whether a prompt contains PII, financial data, or an API key — they're focused on getting things done. Adaptive monitors AI interactions in real time and intervenes before sensitive data leaves the organization, whether or not employees read the policy.

Adaptive identifies a broad range of sensitive data types in real time — including customer PII, API credentials, passwords, financial records, salary data, and legal documents — before they're submitted to an external AI service.

No. Blocking AI tools outright is counterproductive and largely unenforceable. Adaptive's approach is to guide and protect, not restrict. When a risky prompt is detected, the employee receives a contextual nudge explaining what to do differently. The goal is better AI habits, not a blanket ban.
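To make the detect-then-nudge idea concrete, here is a minimal sketch in Python. It is purely illustrative and is not Adaptive's implementation: the patterns, the `sk-` key prefix, and the names `scan_prompt` and `nudge` are all assumptions, and a production system would need far more than a few regular expressions.

```python
import re
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical patterns for illustration only; these are NOT Adaptive's
# detection rules, and real detection needs far broader coverage.
PATTERNS = {
    "email address": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "US SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    # Assumed key format: "sk-" followed by 20 or more alphanumerics.
    "API key": re.compile(r"\bsk-[A-Za-z0-9]{20,}\b"),
}


@dataclass
class Finding:
    kind: str   # which pattern matched
    start: int  # offset of the match in the prompt
    end: int


def scan_prompt(prompt: str) -> List[Finding]:
    """Return every pattern match in the prompt, ordered by position."""
    findings = []
    for kind, pattern in PATTERNS.items():
        for match in pattern.finditer(prompt):
            findings.append(Finding(kind, match.start(), match.end()))
    return sorted(findings, key=lambda f: f.start)


def nudge(prompt: str) -> Optional[str]:
    """Return a short nudge if the prompt looks risky, otherwise None."""
    findings = scan_prompt(prompt)
    if not findings:
        return None
    kinds = sorted({f.kind for f in findings})
    return (
        "This prompt appears to contain: "
        + ", ".join(kinds)
        + ". Consider removing or masking it before sending."
    )


if __name__ == "__main__":
    print(nudge("Summarize our Q3 roadmap"))
    print(nudge("Email jane.doe@example.com, SSN 123-45-6789"))
```

Returning a message rather than raising an error mirrors the guide-not-restrict approach: the employee sees what was flagged and decides how to rephrase.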

Adaptive automatically surfaces all AI applications in use across your organization, including tools your IT team never sanctioned. Most organizations are surprised by how many they find. This visibility is a prerequisite for any real AI governance posture.

No. Adaptive integrates natively with your existing workspace and requires no endpoint agents, proxies, or traffic rerouting. Setup takes minutes, not weeks.

Using unapproved AI tools to process regulated data — even unintentionally — creates compliance exposure. Adaptive gives compliance and legal teams the audit trail and real-time controls needed to demonstrate that sensitive data is not being improperly shared with third-party AI services.